Easing Application and Infrastructure Management with the Dell APEX Cloud Platform for Red Hat OpenShift

Fri, 13 Sep 2024 14:14:56 -0000


Introduction

The approach to business application deployment is constantly evolving. At one time, software was installed on an operating system running directly on bare metal. As hardware density increased, virtualization became the standard: it brought greater flexibility in how workloads are organized and provided the basis for greater efficiency and resiliency where they were needed most. You no longer had to wait for a server to be ordered, shipped, racked, cabled, and deployed; you simply provisioned a new virtual machine (VM). This allowed the business to move at a previously unimaginable pace, taking hours or minutes instead of days or weeks. Now, containerization is enabling businesses to see even greater improvements.

Before we dive in, let’s take a moment to compare bare metal deployments, virtualization, and containerization. Imagine that you’re a developer, and you want to push an application into production. In a bare metal deployment, the application lives on a single host and shares the resources inside that physical chassis with the operating system (OS) and any other applications the OS may be running. Additional storage may be available externally, but compute and memory are limited to what the server itself provides. In a virtualized environment, the application would live within a VM that can be moved between nodes in a cluster. This gives you flexibility in allocating the resources your application needs, and it makes the application more resilient, because VMs can be migrated or restarted on other nodes if a node goes offline. The ability to migrate VMs also allows for greater hardware efficiency, because workloads can be consolidated onto fewer hosts. However, each VM runs its own OS, and each OS needs its own allocation of memory, storage, and compute resources.

Containerization is an evolution of this practice, and reducing resource consumption at scale is just one of the problems it solves. A container packages the application code together with the dependencies it needs to run, and runs it in an isolated, sandboxed environment. For example, one container could hold a Node.js application and its dependencies, such as Express, while another holds a Python application and its dependencies, like TensorFlow or pandas. Containers on the same host share that host’s kernel, whereas each VM runs a full guest OS with its own kernel. Because containers don’t carry a separate OS, they have a much smaller resource footprint than VMs. So, if you deploy a number of containerized applications, how do you manage them? You need an orchestration platform, which brings us to Kubernetes.
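To make the kernel-sharing point concrete, the back-of-the-envelope Python sketch below compares total memory for N applications run as VMs versus containers. Every figure in it (host base memory, guest OS memory, per-application memory, per-container overhead) is a hypothetical placeholder, not a measured value; the point is only that the VM total grows by a full guest OS per application, while the container total does not.

```python
# All figures are hypothetical placeholders chosen only to illustrate
# the shape of the comparison; they are not measurements.
HOST_BASE_MIB = 2048          # memory reserved by the host layer (assumed)
GUEST_OS_MIB = 1024           # memory each VM's guest OS needs (assumed)
APP_MIB = 512                 # memory the application itself needs (assumed)
CONTAINER_OVERHEAD_MIB = 16   # per-container runtime overhead (assumed)


def vm_footprint(n_apps: int) -> int:
    """Total memory when each app runs in its own VM with its own guest OS."""
    return HOST_BASE_MIB + n_apps * (GUEST_OS_MIB + APP_MIB)


def container_footprint(n_apps: int) -> int:
    """Total memory when each app runs in a container sharing the host kernel."""
    return HOST_BASE_MIB + n_apps * (APP_MIB + CONTAINER_OVERHEAD_MIB)


if __name__ == "__main__":
    for n in (1, 10, 50):
        print(f"{n:>3} apps: VMs={vm_footprint(n)} MiB, "
              f"containers={container_footprint(n)} MiB")
```

With these assumed numbers, ten applications need 17,408 MiB as VMs and 7,328 MiB as containers; real savings depend entirely on the actual OS and application footprints.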

Kubernetes is an open-source container orchestration platform hosted by the Cloud Native Computing Foundation (CNCF). Kubernetes automates the deployment, scaling, and management of containerized applications. Many publicly available applications are distributed as container images that can be deployed on Kubernetes, and custom software can be deployed and managed the same way.

Pods are the smallest deployable units of computing in Kubernetes. A pod can contain a single container or as many as needed; the containers in a pod share the pod’s network namespace and can share its storage volumes. A commonly accepted best practice is to run one process per container. If an application needs to run three processes, three containers would be deployed within a new pod, with one process running in each container. The containers themselves are started by a container runtime (OpenShift uses CRI-O), while Kubernetes schedules and manages the pods and the cluster they run on.
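As a minimal sketch, the Python below builds the kind of Pod manifest the three-process example describes: one pod whose `spec.containers` list holds one container per process. The pod name, container names, and image references are hypothetical placeholders. The output is plain JSON, which `kubectl apply -f` and `oc apply -f` accept as well as YAML.

```python
import json
from typing import Any, Dict


def make_pod(pod_name: str, processes: Dict[str, str]) -> Dict[str, Any]:
    """Build a v1 Pod manifest with one container per process.

    `processes` maps a container name to the image that runs that process.
    """
    if not processes:
        raise ValueError("a pod needs at least one container")
    containers = [{"name": name, "image": image} for name, image in processes.items()]
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {"containers": containers},
    }


if __name__ == "__main__":
    # Hypothetical application that runs three processes, one per container.
    pod = make_pod("example-app", {
        "web": "registry.example.com/example/web:1.0",
        "worker": "registry.example.com/example/worker:1.0",
        "log-shipper": "registry.example.com/example/log-shipper:1.0",
    })
    print(json.dumps(pod, indent=2))
```

Keying the input by container name is a small design choice: Kubernetes requires container names to be unique within a pod, and dictionary keys enforce that automatically.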

What is Red Hat OpenShift?

Red Hat OpenShift is a collection of container software products developed by Red Hat. It’s based on Red Hat Enterprise Linux, and its container orchestration is powered by Kubernetes. OpenShift can also be thought of as Red Hat’s Kubernetes distribution, as opposed to the upstream open-source Kubernetes project. It bundles a number of software components, curated by Red Hat, into one solution that is deployed and updated as a single cohesive unit. Let’s break these components down to better understand them.

A purpose-built version of Red Hat Enterprise Linux called Red Hat Enterprise Linux CoreOS (RHCOS) is the OS running directly on the hardware. Traditional services, like host networking and system drivers, run here. The base OS isn’t where we see the scalability benefits that containerization brings, though. For that, we need to move up a level. On top of RHCOS we find OpenShift, which Red Hat built on Kubernetes to create a platform that makes containerization much easier. The container runtime operates at this level. A container runtime is software that uses OS kernel features, such as namespaces and cgroups, to create isolated environments in which containers, the running instances of container images, execute. OpenShift also includes a web console that provides a graphical user interface. This is where admins manage workloads, storage, networking, and cluster settings. A variety of other tasks, such as user management, cluster updates, monitoring, and logging, can be done here as well. Let’s examine a use case.

Once again, imagine you’re a developer. You need to update an application running in your cloud environment. First, you make the necessary changes to the code and push them to a repository. Once the changes are published, OpenShift can pull them from the repository, build new container images from the updated code, and run containers based on those images. As workload demand increases or decreases, the number of running container instances (pod replicas) can be scaled up or down to match it.
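The scaling step can be automated. As a simplified sketch, the Python function below applies the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler, `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skips scaling when the ratio is within a tolerance of 1.0 (0.1 by default in Kubernetes), and clamps the result between a minimum and maximum replica count. The function name, its parameters, and the default minimum and maximum are illustrative assumptions; the real controller also accounts for pod readiness, missing metrics, and stabilization windows.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,    # illustrative default
    max_replicas: int = 10,   # illustrative default
    tolerance: float = 0.1,   # matches the Kubernetes default HPA tolerance
) -> int:
    """Return the replica count a proportional autoscaler would choose.

    current_metric is the observed per-pod average (for example, CPU
    utilization in percent) and target_metric is the desired per-pod value.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: leave the replica count alone.
        desired = current_replicas
    else:
        # Scale proportionally, rounding up to a whole number of replicas.
        desired = math.ceil(current_replicas * ratio)
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    print(desired_replicas(2, 90, 50))  # load above target: scale out → 4
    print(desired_replicas(4, 20, 50))  # load below target: scale in → 2
    print(desired_replicas(3, 52, 50))  # within tolerance: no change → 3
```

Rounding up rather than to the nearest integer errs on the side of extra capacity, which is usually the safer failure mode when demand is rising.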

Now that we have a better idea of what containerization is and what OpenShift does, let’s consider what the Dell APEX Cloud Platform adds to the experience.

How Does the Dell APEX Cloud Platform Enhance Red Hat OpenShift?

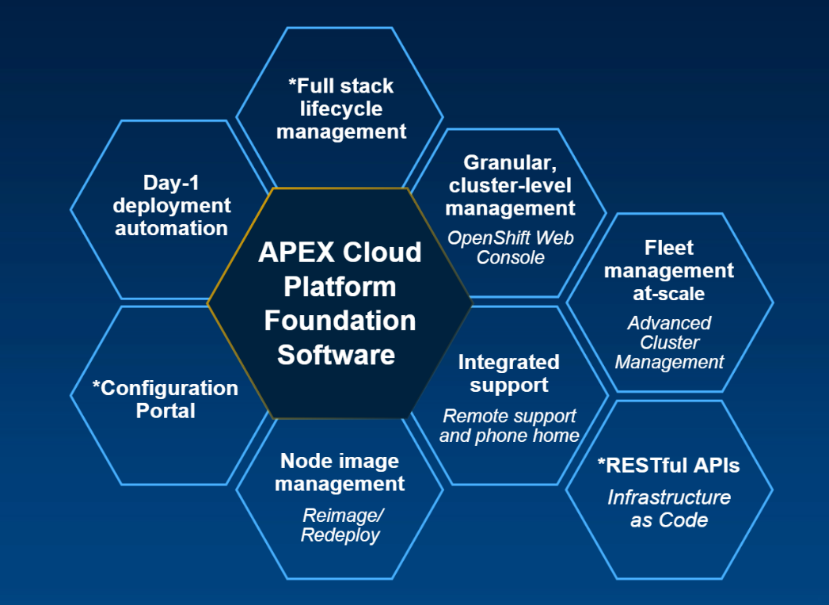

Containerization offers businesses many benefits, especially when it comes to scalability. As you run more applications in a container environment, stability becomes increasingly important. If you’re running a large number of containers and the firmware, drivers, or hardware on the underlying nodes become incompatible with the OS or OpenShift software, any resulting outage affects every workload on those nodes and is that much more impactful. The Dell APEX Cloud Platform for Red Hat OpenShift provides a more stable base to run your OpenShift environment while making lifecycle management of the clusters easy.

Planning and deploying an OpenShift environment, or any other Kubernetes distribution, can be complex. Planning considerations begin at the component level. For example, you must choose network controllers that meet both your business applications’ throughput needs and OpenShift’s compatibility requirements. This process must be repeated for each node in the cluster, and it can lead to complications if an additional node is needed later. When businesses choose APEX nodes for their OpenShift environment, they select from a hardware catalog that has been curated to meet OpenShift’s requirements.

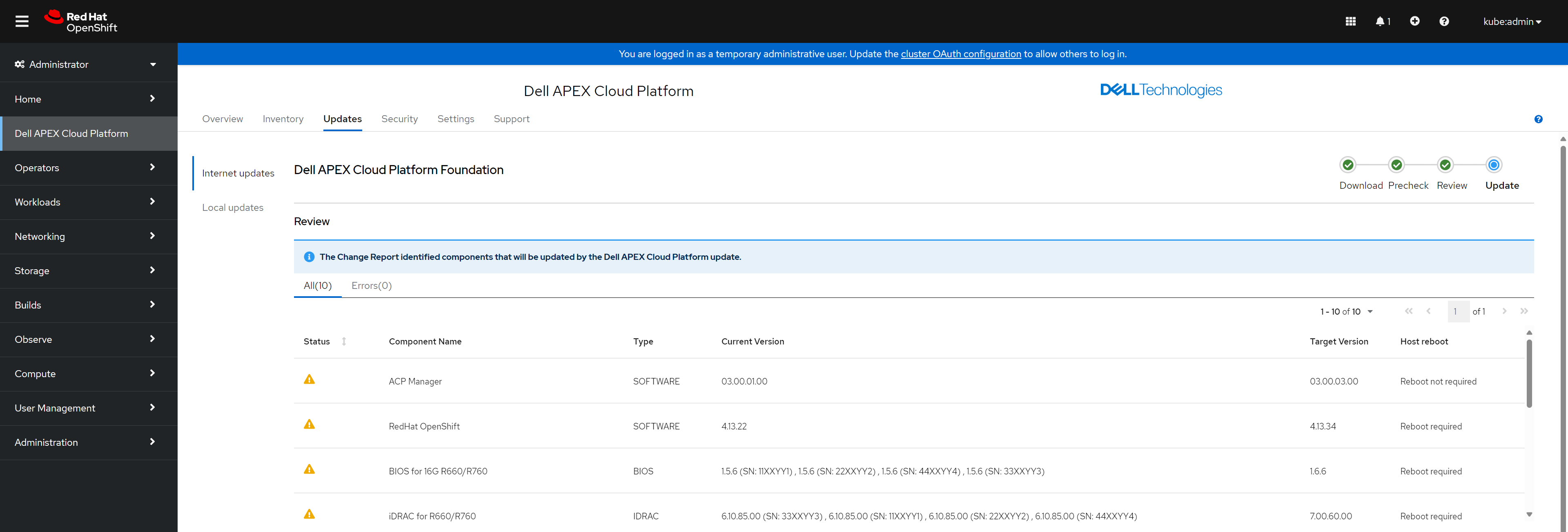

The APEX Cloud Platform for Red Hat OpenShift has been jointly engineered with Red Hat to create an integrated experience from installation through lifecycle management. Each update package conforms to a Continuously Validated State developed by Dell engineers. Continuously Validated States are software and firmware configurations that are rigorously tested in Dell hardware labs to ensure that the new versions are compatible and stable together. This removes the need for customers to test each hardware and software platform update themselves and allows developers and administrators to focus on meeting business demands.
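Conceptually, a validated state is a complete set of component versions that was tested together, and an update is trusted only if the whole node lands on one of those sets. The Python sketch below illustrates that idea with a hypothetical catalog: the state names, component keys, and version strings are placeholders, and this is not how Dell’s tooling represents or applies Continuously Validated States.

```python
from typing import Dict, Optional, Tuple

# Hypothetical catalog of validated states. Each entry is a full set of
# component versions that was tested together; all values are placeholders.
VALIDATED_STATES: Dict[str, Dict[str, str]] = {
    "state-A": {"bios": "1.0", "nic_firmware": "1.0", "openshift": "1.0"},
    "state-B": {"bios": "2.0", "nic_firmware": "2.0", "openshift": "2.0"},
}


def matching_state(installed: Dict[str, str]) -> Optional[str]:
    """Return the validated state that exactly matches the installed versions, or None."""
    for name, versions in VALIDATED_STATES.items():
        if versions == installed:
            return name
    return None


def drift_from(installed: Dict[str, str], state_name: str) -> Dict[str, Tuple[Optional[str], str]]:
    """List components whose installed version differs from the chosen validated state.

    Each entry maps a component to (installed version or None, validated version).
    """
    target = VALIDATED_STATES[state_name]
    return {
        component: (installed.get(component), version)
        for component, version in target.items()
        if installed.get(component) != version
    }


if __name__ == "__main__":
    # A node that updated OpenShift but not its firmware: an untested combination.
    node = {"bios": "1.0", "nic_firmware": "1.0", "openshift": "2.0"}
    print(matching_state(node))
    print(drift_from(node, "state-B"))
```

Requiring an exact match to one whole state, rather than checking each component on its own, mirrors the idea in the text: individual versions may each look fine, but only tested combinations are trusted.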

Without updates that conform to a Continuously Validated State, administrators can easily spend hours seeking out update packages, reviewing release notes to check interoperability, and downloading and deploying the updates to cluster nodes in a test environment. That doesn’t include the time the updates must then run in the test environment before they can be considered safe to push to production. Once testing is complete, updating the production clusters takes even more hours. Altogether, this can add up to enough work to keep staff constantly occupied with software updates, when there are far more productive tasks they could be working on. Customers can use these benefits, and more, to offload this labor and reduce costs.

The APEX Cloud Platform offers more than simplified lifecycle management and hardware validation. Dell engineers also deploy APEX Cloud Platform clusters for customers and provide end-to-end support. Deploying a DIY OpenShift or Kubernetes solution requires a great deal of planning, preparation, and execution time. A customer could certainly hire an outside consultant to design and deploy the solution, but that splits responsibility across parties in a way that APEX Cloud Platform avoids. When issues do occur, customers benefit from faster resolutions because a single support team covers both the hardware and software layers, rather than customers having to coordinate with multiple infrastructure vendors to restore a cluster to optimal health. Select OpenShift operators, software extensions that add functional capabilities, are supported for use with APEX Cloud Platform and can be deployed with the cluster. These include the Advanced Cluster Management, AI, and virtualization operators. Deploying OpenShift clusters on Dell APEX nodes makes a complicated process significantly easier.

Wrapping It Up

Containerization is a phenomenal way to use resources more efficiently and is at the heart of the cloud-native approach. When it comes to scalability, it’s hard to beat. However, it does bring additional complexity in a variety of areas. The Dell APEX Cloud Platform for Red Hat OpenShift greatly reduces this complexity and empowers businesses to keep their IT resources where they need them most. Teams can stay focused on business tasks and spend far fewer hours maintaining infrastructure. I hope you’ll join me for my next blog, where we’ll look deeper into what containers are.

Additional Resources

What are Red Hat OpenShift Containers? (2 of 4)

Simplify Cloud Application Deployments with Red Hat OpenShift (3 of 4)

Run Containers and Virtual Machines on the Same Cluster with Red Hat OpenShift (4 of 4)

APEX Cloud Platform for Red Hat OpenShift

Red Hat OpenShift Product Page

Kubernetes vs Red Hat OpenShift

Author: Dylan Jackson, Engineering Technologist