A Single RAG Solution to Generate CLI, REST API, and Ansible Tasks for PowerScale

Wed, 17 Jul 2024 13:47:15 -0000


Code generation is one of the use cases where existing Generative AI models really excel. Using coding assistants like Codeium and GitHub Copilot is becoming the norm for developers. While coding assistants and genAI chat tools work great for general coding tasks in various programming languages, their underlying models may not have been trained on the particular SDK you want to use. That creates a need to train, or at least augment, a model with that specific SDK.

As an infrastructure-as-code advocate, I took up the task of generating Ansible playbooks/tasks for a given operation on Dell PowerScale. The PowerScale Ansible modules are publicly available along with documentation and examples. In addition to the Ansible modules, PowerScale OneFS has a solid command line interface (CLI), the ISI command set, which many users prefer for working with PowerScale. OneFS also has a mature, well-documented REST API that can be used from any programming language. With all this great content, why not train or “augment” an LLM to build a tool that can come up with CLI commands, API resources, or entire Ansible code blocks that you can use to automate PowerScale operations?!

Let’s go over the various aspects of how I went about building this tool, which can crunch 1,300 pages of documentation to accelerate ITOps automation projects. I’ll highlight some of the issues I encountered and things I learned along the way.

OpenAI’s Custom GPT for rapid prototyping

To prototype a tool like this, I chose OpenAI’s Custom GPT capability. The Custom GPT feature (which requires a paid OpenAI subscription) makes it very easy to build a typical Retrieval Augmented Generation (RAG) tool. No coding is needed to ingest content into a vector database, call LLMs through an API, or build a chat user interface for inference. Anthropic recently released Claude Projects, which can also be used to build RAG tools through a simple user interface. These tools also make it easy to share custom models with others.

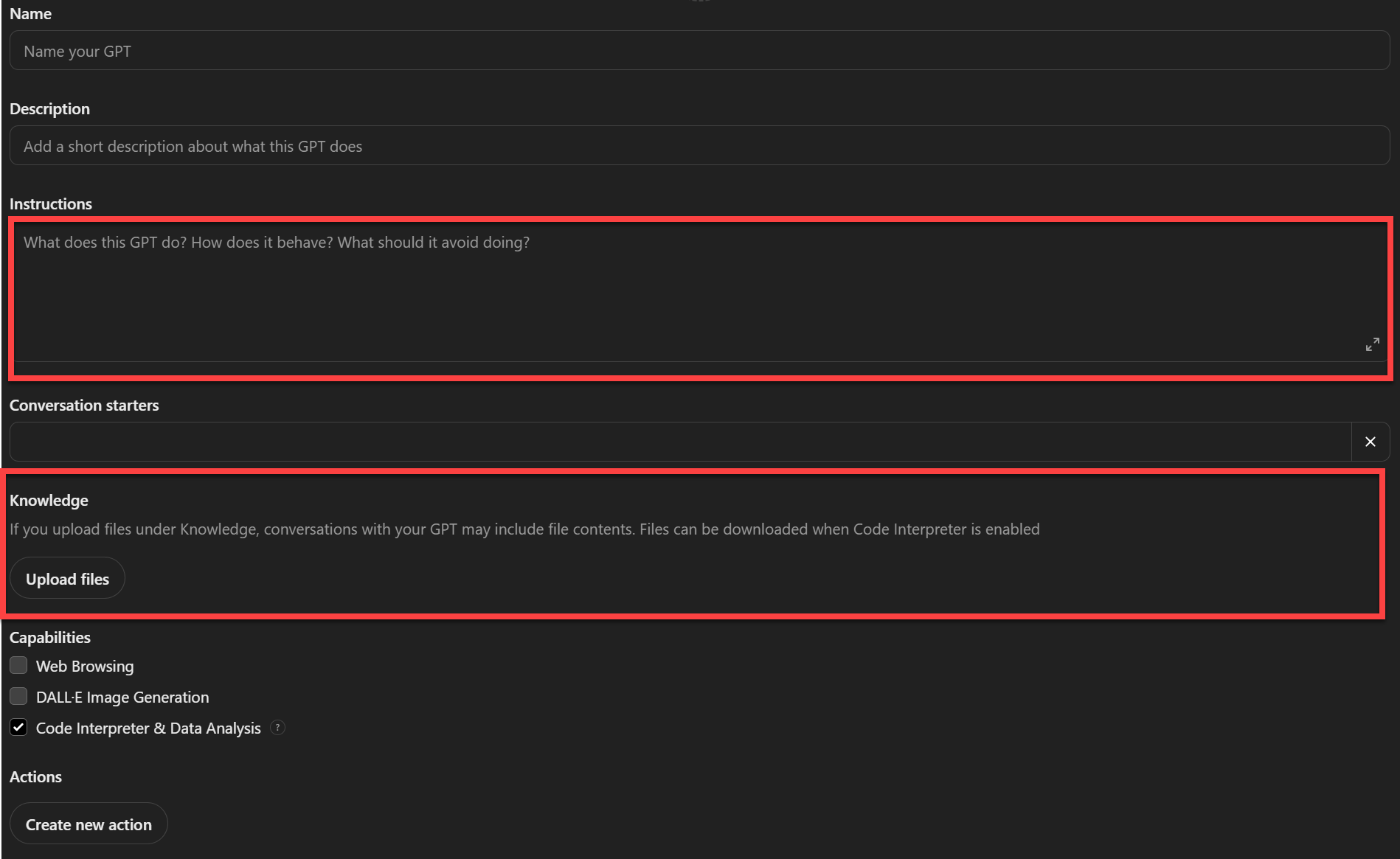

Here is the OpenAI interface to create a custom GPT:

Note that the main areas are Instructions and Knowledge. The Knowledge area is where content sources are uploaded, in various file formats. The Instructions area is where you describe in detail what is expected of the model and how it should use the sources. You can also choose whether the model may browse the internet or call any other custom tool or function. This is where “agents” that accomplish specific tasks can augment an LLM’s generation capabilities. To keep the model grounded in the documentation content, I did not include web browsing in my model definition.

RAG models and sources

I created the very first version of the RAG model using only the OneFS ISI CLI Command Reference PDF as the source. It was amazing to see how well the resulting model came up with ISI commands, subcommands, and the options available for each command and subcommand combination. I realized this was mostly due to how well the ISI CLI Command Reference documents the CLI functionality, across 850+ very structured pages! I extended this first version by adding the entire REST API Reference Guide (another 250+ pages) to see how well it could come up with REST API resources. This second version also worked well, although it took more prompting to make sure it used the latest API version in which a resource was available.

Encouraged by the initial results, I tried training the model using the Ansible playbook examples for each of the modules. This did not work well. I then tried the RST files for each of the modules, which are also very well structured. Even this was not very effective. I suspected that the large number of files, combined with how RST files were chunked and embedded, was not optimal. That is when I merged all the RST files into a single PDF (200+ pages) with some help from Python. The resulting PDF read like a reference guide for the Ansible modules: all the parameters, return values, and other information were presented in a structured format, much like a well-tagged supervised learning example.
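The post does not include the merge script, so here is only a minimal sketch of the idea, assuming one `.rst` file per module in a single directory; the `merge_rst` function and the paths are hypothetical. It concatenates the files in a stable order into one reStructuredText document. Rendering that document to PDF is a separate step with a converter of your choice (for example pandoc or rst2pdf), which the sketch does not cover.

```python
from pathlib import Path


def merge_rst(src_dir: str, out_file: str) -> int:
    """Concatenate every *.rst file in src_dir into a single out_file.

    Assumes one file per module, each starting with its own module title and
    all using the same heading adornment style, so the merged text parses as
    one reStructuredText document. Files are sorted by name for a stable
    order. Returns the number of files merged.
    """
    files = sorted(Path(src_dir).glob("*.rst"))
    # Strip stray leading/trailing blank lines so modules are separated evenly.
    parts = [path.read_text(encoding="utf-8").strip() for path in files]
    # Two blank lines between modules keep each module's sections
    # (Synopsis, Parameters, Examples, Return Values, ...) as separate blocks.
    Path(out_file).write_text("\n\n\n".join(parts) + "\n", encoding="utf-8")
    return len(files)
```

For example, `merge_rst("docs/modules", "powerscale_modules.rst")` (hypothetical paths) produces one file that reads as a single module reference, which is the property that made the merged PDF work better as a RAG source.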

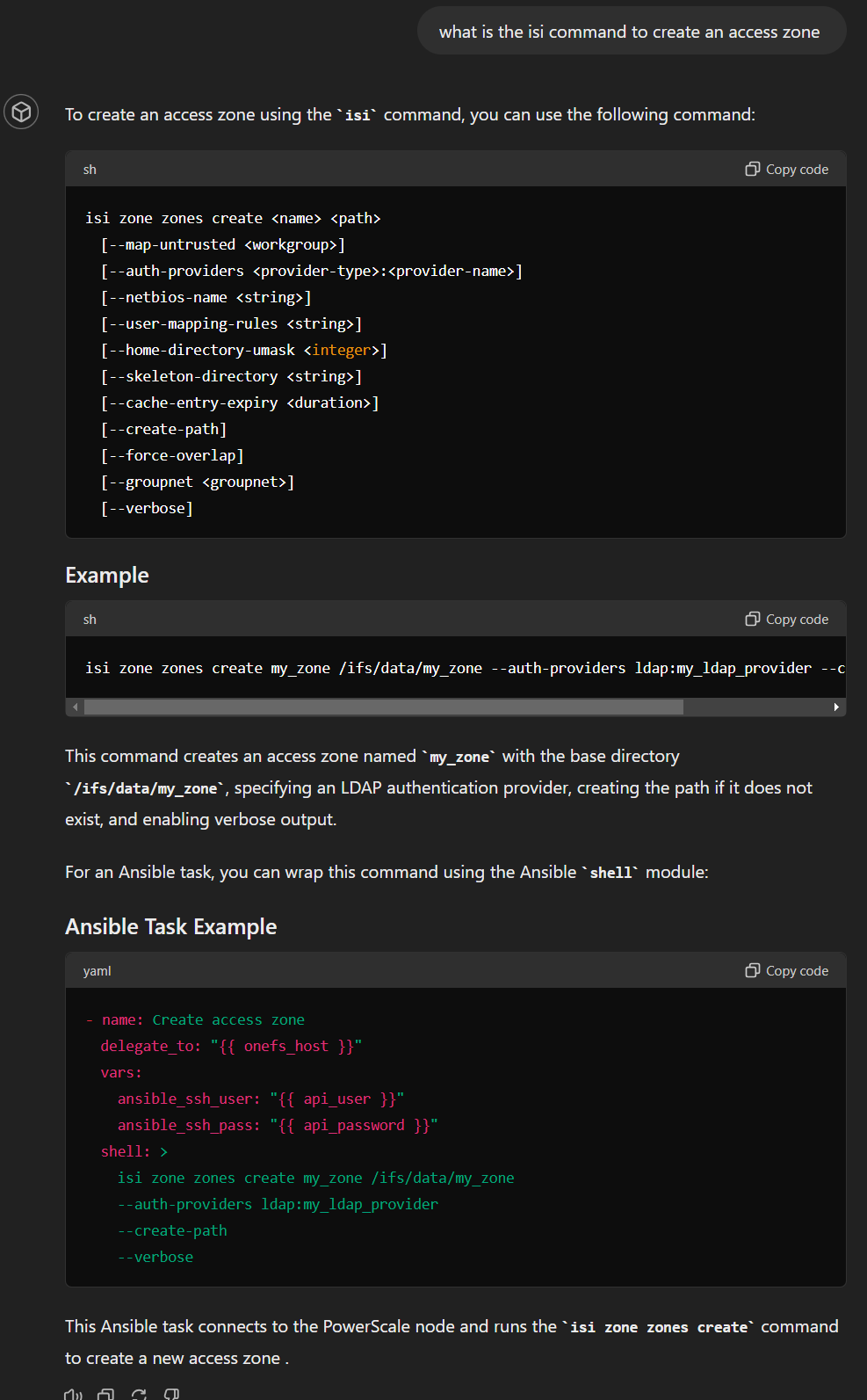

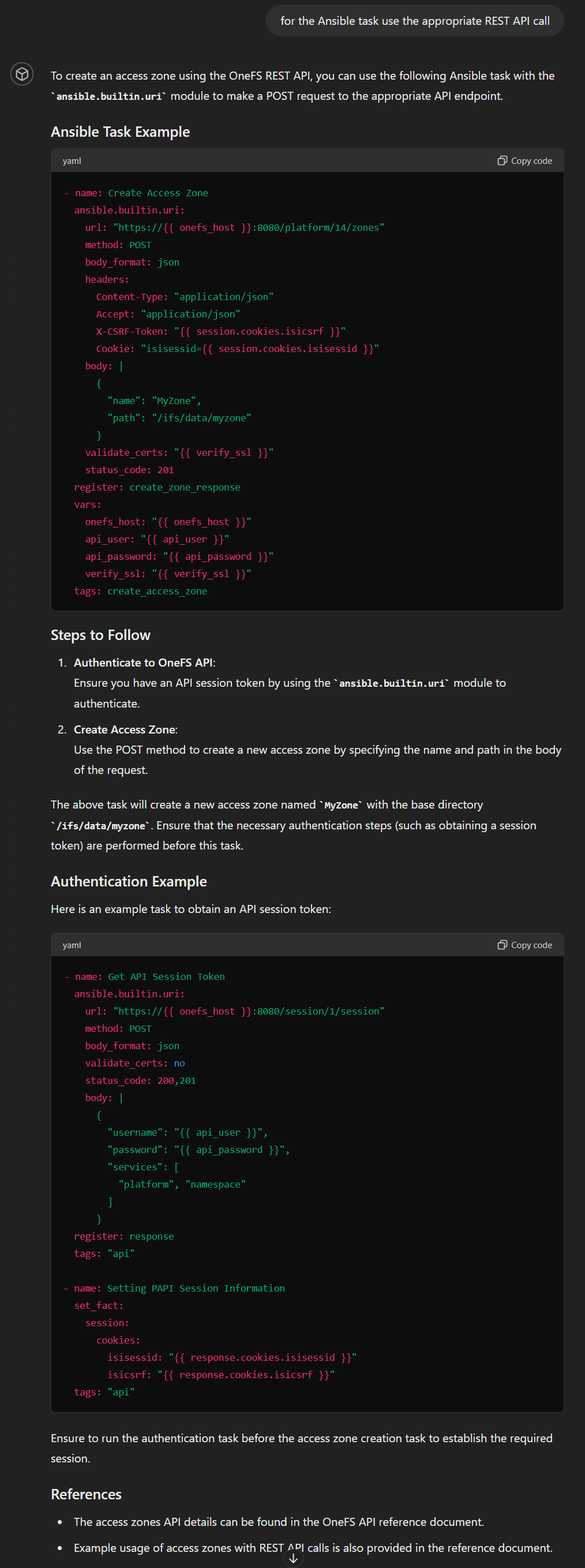

Ansible task blocks with REST API and ISI commands

For a given task request, there may not be a PowerScale Ansible module. There are two workarounds for these situations: use the built-in URI module to make a REST API call, or simply run the equivalent ISI CLI command (ISI is the popular CLI tool for OneFS) using the shell command module. Although the latter is weak on error handling, I do use it in some cases, with some error-handling logic added. For the code generation tool, when there is no Ansible module for the requested task, I wanted the model to do one of the following, based on user input:

- Find the relevant ISI CLI command and wrap it in a shell command Ansible task. For ISI CLI command retrieval, I provided the ISI CLI Command Reference PDF, which runs to more than 850 pages, as the source. It turns out the model found the PDF very easy to learn from. In fact, when I tested another custom GPT with just the CLI Command Reference, the results were great: the model spit out subcommands and options for any ISI command with precision.

- Find the relevant REST API call for the task and wrap it with Ansible’s ansible.builtin.uri module to accomplish the task. The source here was the latest available REST API Reference Guide for PowerScale.
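To make the two fallback patterns concrete, here is a hypothetical Python helper (not part of the author’s tool) that renders both task shapes as YAML text, following the formats given in the model instructions in the next section. It assumes the same variable names (`onefs_host`, `api_user`, `api_password`) and a `session` fact set by a prior login task. It uses `shlex.join` to quote the ISI command safely for the shell, and `json.dumps` for scalar values, because a JSON string literal is also a valid YAML 1.2 double-quoted scalar.

```python
import json
import shlex


def q(value: str) -> str:
    """Quote a scalar for YAML; a JSON string literal is a valid YAML double-quoted scalar."""
    return json.dumps(value)


def shell_task(name: str, isi_args: list[str]) -> str:
    """Wrap an ISI CLI command in an ansible.builtin.shell task delegated to the cluster."""
    command = shlex.join(["isi", *isi_args])  # shell-safe quoting of each argument
    return "\n".join([
        f"- name: {q(name)}",
        '  delegate_to: "{{ onefs_host }}"',
        "  vars:",
        '    ansible_ssh_user: "{{ api_user }}"',
        '    ansible_ssh_pass: "{{ api_password }}"',
        f"  ansible.builtin.shell: {q(command)}",
    ])


def uri_task(name: str, method: str, resource: str, status_code: list[int]) -> str:
    """Wrap a OneFS REST call in an ansible.builtin.uri task that reuses a session fact."""
    # Plain string (not an f-string) so the Jinja braces are kept literally.
    url = "https://{{ onefs_host }}:8080/" + resource.lstrip("/")
    codes = ", ".join(str(code) for code in status_code)
    return "\n".join([
        f"- name: {q(name)}",
        "  ansible.builtin.uri:",
        f"    url: {q(url)}",
        "    validate_certs: false",
        f"    method: {method.upper()}",
        "    headers:",
        '      X-CSRF-Token: "{{ session.cookies.isicsrf }}"',
        '      Cookie: "isisessid={{ session.cookies.isisessid }}"',
        '      Referer: "https://{{ onefs_host }}:8080"',
        f"    status_code: [{codes}]",
    ])
```

For example, `print(shell_task("List NTP servers", ["ntp", "servers", "list"]))` ends with the line `ansible.builtin.shell: "isi ntp servers list"`. A body for POST/PUT calls is left out to keep the sketch short.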

Model definition and instructions

OK, now I have the source documents ready for the PowerScale-Ansible RAG, but we are not done yet. In fact, writing the “Instructions” that define exactly how the model should function is the most important part. Here is what I provided:

Your role is to generate an Ansible task code block using the right Ansible module as per the request of the user. Attached is a PDF file that documents all of the dellemc.powerscale modules, like dellemc.powerscale.ads, dellemc.powerscale.nfs, dellemc.powerscale.user, etc. For each of the modules in the PDF there are separate sections for Synopsis, Requirements, Parameters, Notes, Examples, and Return Values. When generating an Ansible task with one of the modules, make sure you use the right module with the right parameters. You can find examples of how each module can be used. Note that every task block needs to have the following details:

```yaml
onefs_host: "{{ onefs_host }}"
verify_ssl: "{{ verify_ssl }}"
api_user: "{{ api_user }}"
api_password: "{{ api_password }}"
```

It is possible that for a task requested by the user there may not be an Ansible module. In such cases, find the relevant ISI CLI command in the "isi cli reference.pdf" and create an Ansible task by wrapping the ISI CLI command with the Ansible builtin "shell" module. Use the following format for such shell module tasks:

```yaml
- name: event test create
  delegate_to: "{{ onefs_host }}"
  vars:
    ansible_ssh_user: "{{ api_user }}"
    ansible_ssh_pass: "{{ api_password }}"
  ansible.builtin.shell: "isi event test create --message=\"Test Message, This is a new deployment\""
```

For things related to the ISI CLI, make sure you pick the right command, subcommand, and options. For example, "isi ntp" can be used for operations like "isi ntp servers list" and "isi ntp settings list".

If the user asks for Ansible tasks using the REST API, generate task blocks with the ansible.builtin.uri module, using the appropriate GET/PUT/POST/DELETE method on the appropriate OneFS API resource. All the resources can be found in the onefs-api-reference-en-us.pdf. Note that the API resources are organized as /platform/<version number>/<resource>/<sub resource>. Here are a few examples of tasks using ansible.builtin.uri:

```yaml
- name: Create Alert Conditions
  ansible.builtin.uri:
    url: "https://{{ onefs_host }}:8080/platform/11/event/alert-conditions"
    validate_certs: false
    method: POST
    headers:
      X-CSRF-Token: "{{ session.cookies.isicsrf }}"
      Cookie: "isisessid={{ session.cookies.isisessid }}"
      Referer: "https://{{ onefs_host }}:8080"
    status_code: [200, 201, 400]
    body_format: json
    body: |
      {
        "name": "SMTP - {{ item }}",
        "condition": "{{ item }}",
        "categories": [
          "all"
        ],
        "channels": [
          "SMTP"
        ]
      }
  with_items:
    - NEW
    - RESOLVED
    - SEVERITY INCREASE
  tags: "alert-conditions"
```

Another example:

```yaml
- name: Enable Remote Support
  ansible.builtin.uri:
    url: "https://{{ onefs_host }}:8080/remote-service/11/esrs/status"
    validate_certs: false
    method: PUT
    headers:
      X-CSRF-Token: "{{ session.cookies.isicsrf }}"
      Cookie: "isisessid={{ session.cookies.isisessid }}"
      Referer: "https://{{ onefs_host }}:8080"
    status_code: 200
    body_format: json
    body: |
      {
        "enabled": true
      }
  tags: "esrs-enable"
```

Note the following Ansible tasks for OneFS API authentication:

```yaml
- name: Get API Session Token
  ansible.builtin.uri:
    url: "https://{{ onefs_host }}:8080/session/1/session"
    method: POST
    body_format: json
    validate_certs: false
    status_code: [200, 201]
    body: |
      {
        "username": "{{ api_user }}",
        "password": "{{ api_password }}",
        "services": [
          "platform", "remote-service", "namespace"
        ]
      }
  register: response
  tags: "api"

- name: Setting PAPI Session Information
  ansible.builtin.set_fact:
    session:
      cookies:
        isisessid: "{{ response.cookies.isisessid }}"
        isicsrf: "{{ response.cookies.isicsrf }}"
  tags: "api"
```

As you can imagine, I did not arrive at these long, detailed instructions on the very first attempt. I had to keep tweaking the instructions and examples to improve the accuracy of the output and to reduce hallucinations that stemmed from the base model’s original training data and inference behavior.
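The session flow those instructions teach the model (POST credentials to `/session/1/session`, keep the `isisessid` and `isicsrf` cookies, then send the session cookie, the CSRF token, and a `Referer` header on each call) can also be sketched outside Ansible. This is a minimal standard-library sketch that only builds the requests and does not send them. The helper names are hypothetical; the paths, body fields, and header names are taken from the tasks above, and the `/platform/<version>/<resource>` layout follows the instructions’ own description.

```python
import json
from http.cookies import SimpleCookie
from typing import Optional
from urllib.request import Request


def login_request(host: str, user: str, password: str) -> Request:
    """POST /session/1/session with the same body the Ansible login task sends."""
    body = {
        "username": user,
        "password": password,
        "services": ["platform", "remote-service", "namespace"],
    }
    return Request(
        f"https://{host}:8080/session/1/session",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def session_from_set_cookie(set_cookie_headers: list[str]) -> dict[str, str]:
    """Extract isisessid and isicsrf from the login response's Set-Cookie headers."""
    jar = SimpleCookie()
    for header in set_cookie_headers:
        jar.load(header)
    return {name: jar[name].value for name in ("isisessid", "isicsrf")}


def platform_request(host: str, session: dict[str, str], version: int, resource: str,
                     method: str = "GET", body: Optional[dict] = None) -> Request:
    """Build a /platform/<version>/<resource> call that reuses the session, like the uri tasks."""
    data = None if body is None else json.dumps(body).encode("utf-8")
    # urllib normalizes header names with str.capitalize() (e.g. "X-csrf-token");
    # HTTP header names are case-insensitive, so this is harmless.
    headers = {
        "X-CSRF-Token": session["isicsrf"],
        "Cookie": f"isisessid={session['isisessid']}",
        "Referer": f"https://{host}:8080",
    }
    if data is not None:
        headers["Content-Type"] = "application/json"
    return Request(
        f"https://{host}:8080/platform/{version}/{resource.strip('/')}",
        data=data, headers=headers, method=method,
    )
```

Actually sending these with `urllib.request.urlopen` would also need an `ssl` context that trusts the cluster’s certificate; the Ansible tasks sidestep that by disabling certificate validation.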

Test cases

Arriving at a satisfactory RAG model involves iterating on document formats, tweaking instructions, and trying out different prompts. As you go through iterations, it is a good idea to keep a set of test cases for evaluating the many variants of a GPT, to understand how the GPT is learning, when it hallucinates, and how to avoid hallucinations. Here is my collection of test cases:

Core functionality

The following are some test cases to see if the model works as expected:

- Generate an Ansible task to perform a particular operation using the PowerScale Ansible modules

- Generate Ansible tasks for cases where the right ISI CLI command for the task needs to be used with shell command delegation

- Generate Ansible tasks for cases where the right REST API call for the task needs to be used with the ansible.builtin.uri module

Nice to have: for PowerScale users who know and love their CLI, the model should also handle the following conversions:

- ISI command to PowerScale module: Give an ISI CLI command and ask whether a PowerScale module is available for it

- ISI command to REST API call: Give an ISI CLI command and generate a task with the equivalent REST API call

Testing for hallucinations

Here are the tests I used:

- Listing all available Ansible modules
  - This is a good test case to see whether the RAG’s base model invents modules with realistic-sounding names that were not actually retrieved from the sources provided.

- Listing all modules related to things like NFS exports and SMB shares
  - This test case is similar to ‘Listing all available Ansible modules’ but more specific. Here RAG models can hallucinate a uniform naming convention for the NFS and SMB modules, whereas the actual PowerScale module names for NFS and SMB operations do not follow a tight convention and instead use terminology like NFS Exports and SMB Shares.

- Listing available parameters for a given module
  - This drills down further in specificity and checks how well the model is using the sources.

Note that these tests are by no means an exhaustive list for this RAG model. They are just a few ways to check how well retrieval is working.
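The module-listing tests can be partly automated. Here is a hypothetical sketch: extract every `dellemc.powerscale.*` name from a generated answer and flag any name that is not in the set of documented modules. The `KNOWN_MODULES` set below holds only the three modules named in the GPT instructions, as a placeholder; a real check would load the full list from the module documentation.

```python
import re

# Placeholder subset: only the modules named in the GPT instructions in this post.
# A real check would load the full list from the module documentation.
KNOWN_MODULES = frozenset({
    "dellemc.powerscale.ads",
    "dellemc.powerscale.nfs",
    "dellemc.powerscale.user",
})

# Fully qualified PowerScale module names as they appear in generated YAML.
MODULE_RE = re.compile(r"\bdellemc\.powerscale\.[a-z0-9_]+")


def unknown_modules(generated: str, known: frozenset[str] = KNOWN_MODULES) -> set[str]:
    """Return module names used in the generated text that are not in `known`."""
    return set(MODULE_RE.findall(generated)) - known
```

A non-empty result does not prove a hallucination on its own (the placeholder set is incomplete), but against a complete module list it points directly at names the sources never mention.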

Prompting to prevent hallucinations

The most effective prompting technique that worked for me is simply asking the model to cite the page numbers from the PDFs it referenced. This keeps the generation process from skipping the retrieval step and relying purely on the base model’s training and generative capabilities.
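If you script your test prompts, this technique is easy to apply and to check mechanically. Here is a hypothetical sketch: append a citation request to each prompt, then extract the cited page numbers from the reply and treat a reply with none as suspect. The suffix wording and the “page N” / “p. N” pattern are assumptions about how the model phrases its citations, not a documented format.

```python
import re

# Assumed wording; adjust to match your own instructions.
CITE_SUFFIX = (
    " Cite the PDF file name and page numbers you used for every module, "
    "command, or API resource in your answer."
)

# Assumed citation styles such as "page 412" or "p. 87". For a range like
# "pages 12-14" only the first page number is captured.
PAGE_RE = re.compile(r"\b(?:pages?|p\.)\s*(\d+)", re.IGNORECASE)


def with_citation_request(prompt: str) -> str:
    """Append the citation instruction to a user prompt."""
    return prompt.rstrip() + CITE_SUFFIX


def cited_pages(reply: str) -> list[int]:
    """Return page numbers cited in a reply, in order of appearance."""
    return [int(page) for page in PAGE_RE.findall(reply)]
```

An empty `cited_pages(...)` result is a cue to re-prompt, since the answer may have come from the base model rather than the retrieved documentation.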

Final results

While it is not possible to present the test results in detail, I would like to share a few verbatim responses from an example I tried with the latest version of the RAG model that you can access here.

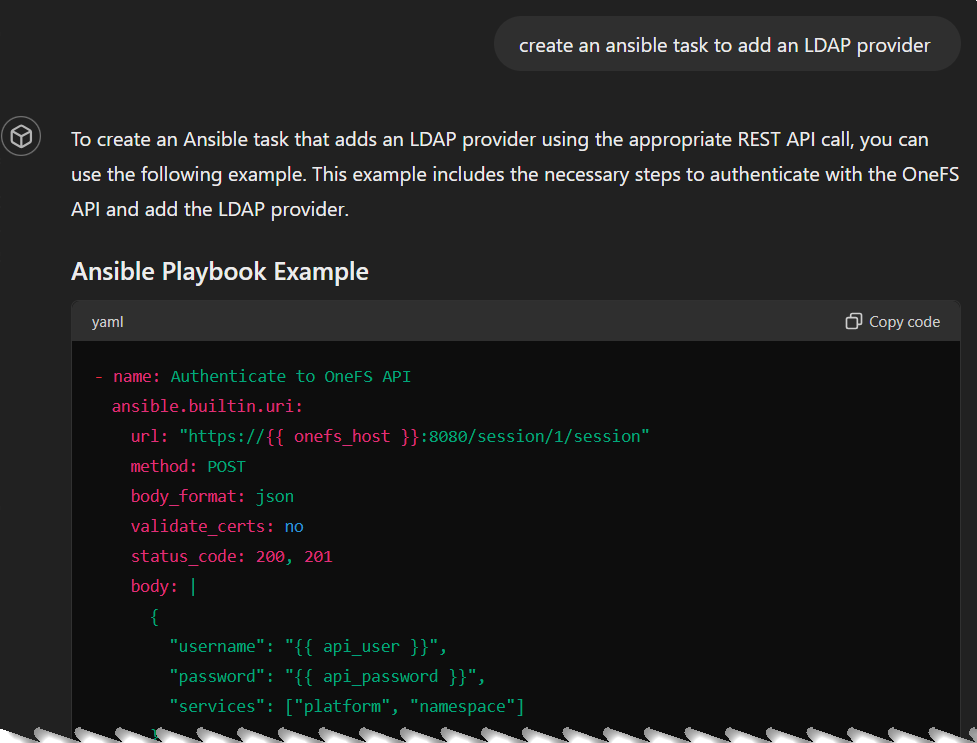

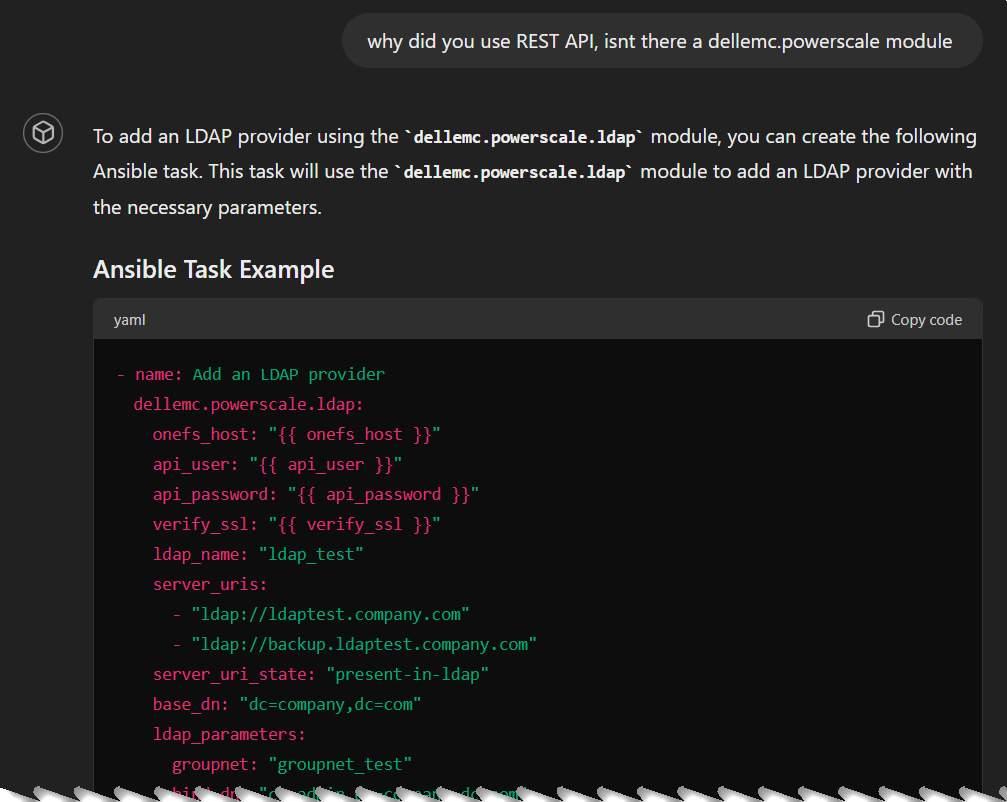

Ready for some hallucination? I asked the model for an LDAP task, and it generated the task using the REST API even though there is an LDAP module:

I prompted the model to use the module instead, and it found the right Ansible module:

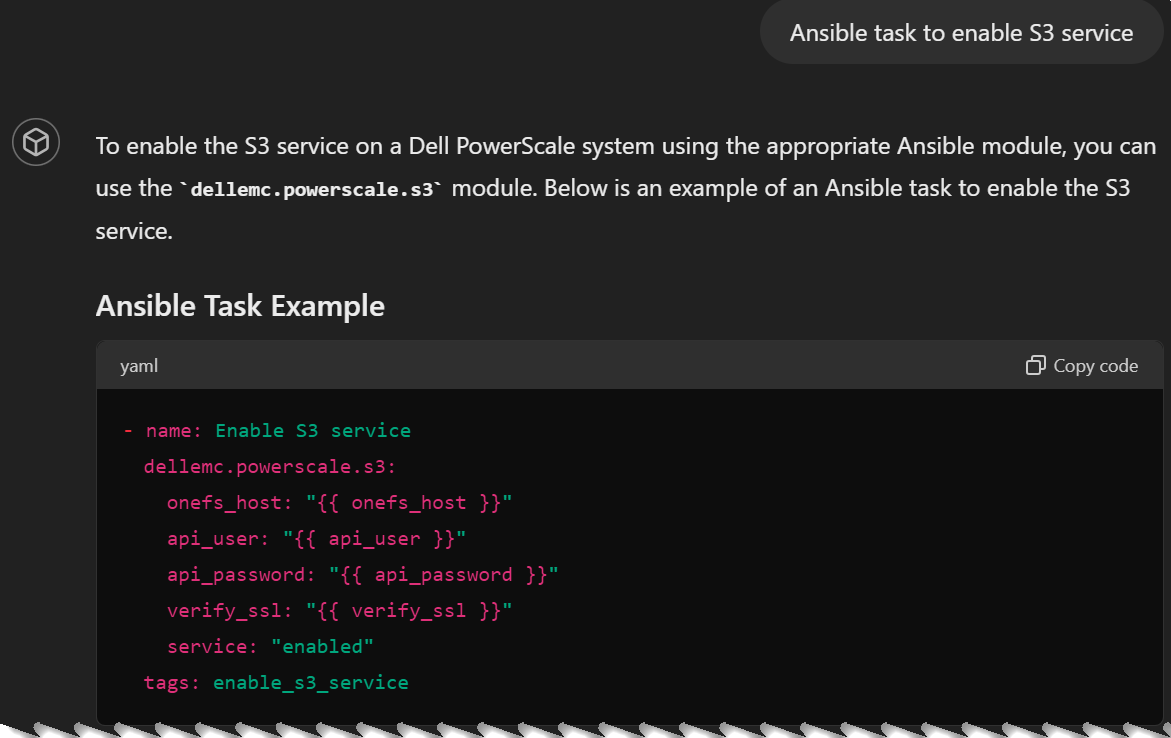

But after this nudge, it favored Ansible modules so strongly that it hallucinated a nonexistent module for the next prompt (an Ansible task to enable the S3 service):

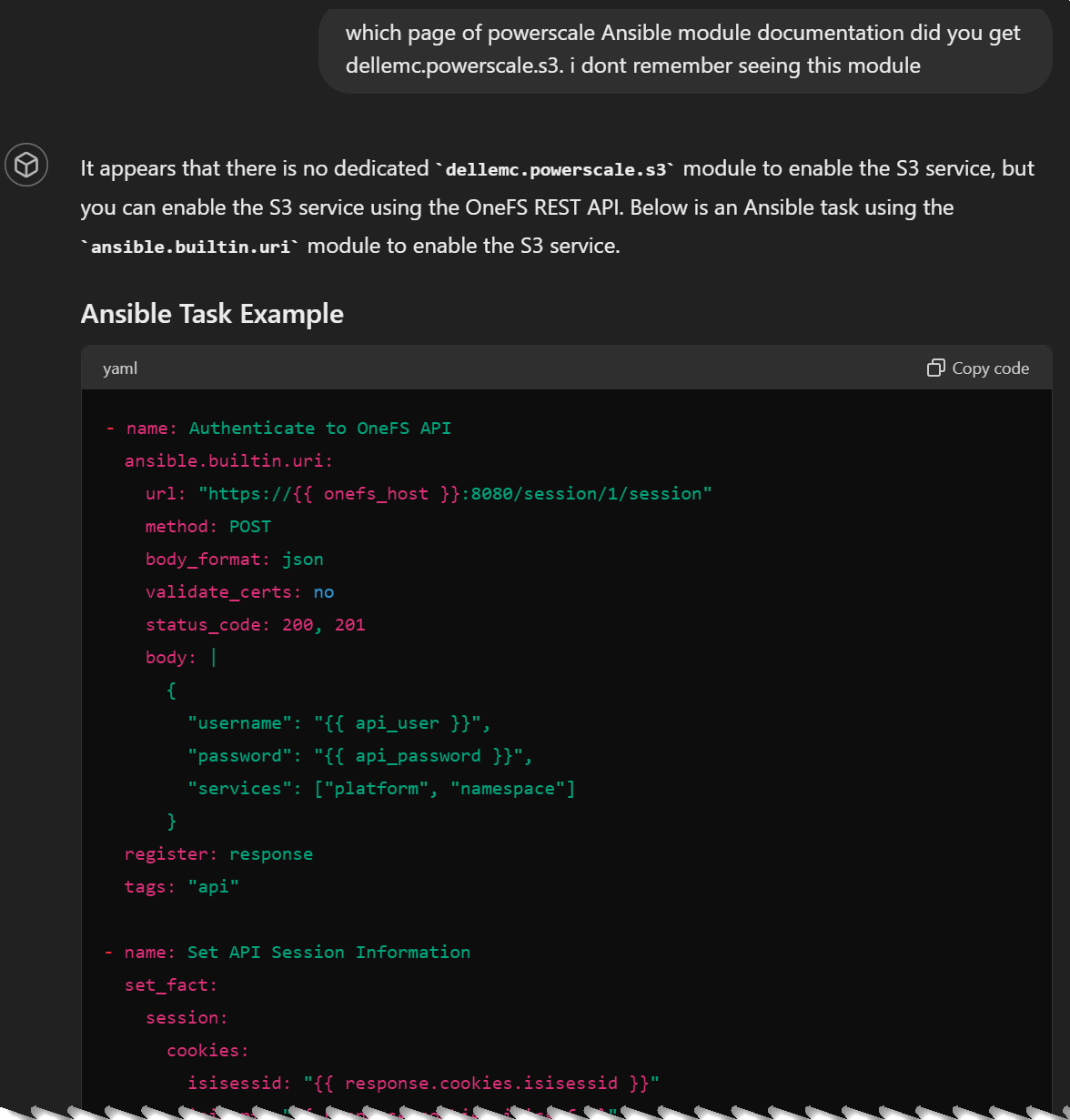

As I mentioned before, asking the model to cite documentation page numbers is an effective way to get around such hallucinations. It makes the model take the RAG retrieval path to answer the question, instead of relying on the core LLM. Here is how it corrected itself:

Clearly, I had a lot of fun training this RAG model on OneFS documentation. I encourage you to create your own RAG models to improve code generation for your SDKs well beyond what a typical code assistant tool can offer.

As with any Generative AI system, the output is not always accurate, so generated code needs appropriate testing in non-production environments before it is deployed in production. Also note that most publicly available documentation carries an implicit assumption of fair use and may not cover extensive commercial use or deriving monetary benefit from it, so check with your legal team on these issues.

Author: Parasar Kodati, Engineering Technologist, Dell ISG