
Business challenges


- Resource Constraints: Many organizations lack the hardware and user-generated data needed for resource-intensive training or fine-tuning of LLMs. RHEL AI provides a comprehensive suite of fine-tuning utilities (based on LAB) that minimizes dependence on costly human annotations and proprietary models. Its appliance-based model can run on a single server, which simplifies adoption and updates.

- Freedom and Flexibility: RHEL AI allows organizations to get started quickly with generative AI by bringing the solution closer to their data assets, with tooling that both professional data scientists and developers can adopt.

- Compliance and Regulatory Issues: Many countries have laws that require data to be stored within their borders, which limits an organization's ability to use distributed services hosted in other countries. RHEL AI provides a way to fine-tune models within the boundaries of your controlled environment.

- Security: Many organizations worry about the security and control of their data. RHEL AI uses image mode for Red Hat Enterprise Linux, which allows users to deploy and manage RHEL AI capabilities with a secure bootc container image. For more information, see https://www.redhat.com/en/technologies/linux-platforms/enterprise-linux/image-mode.

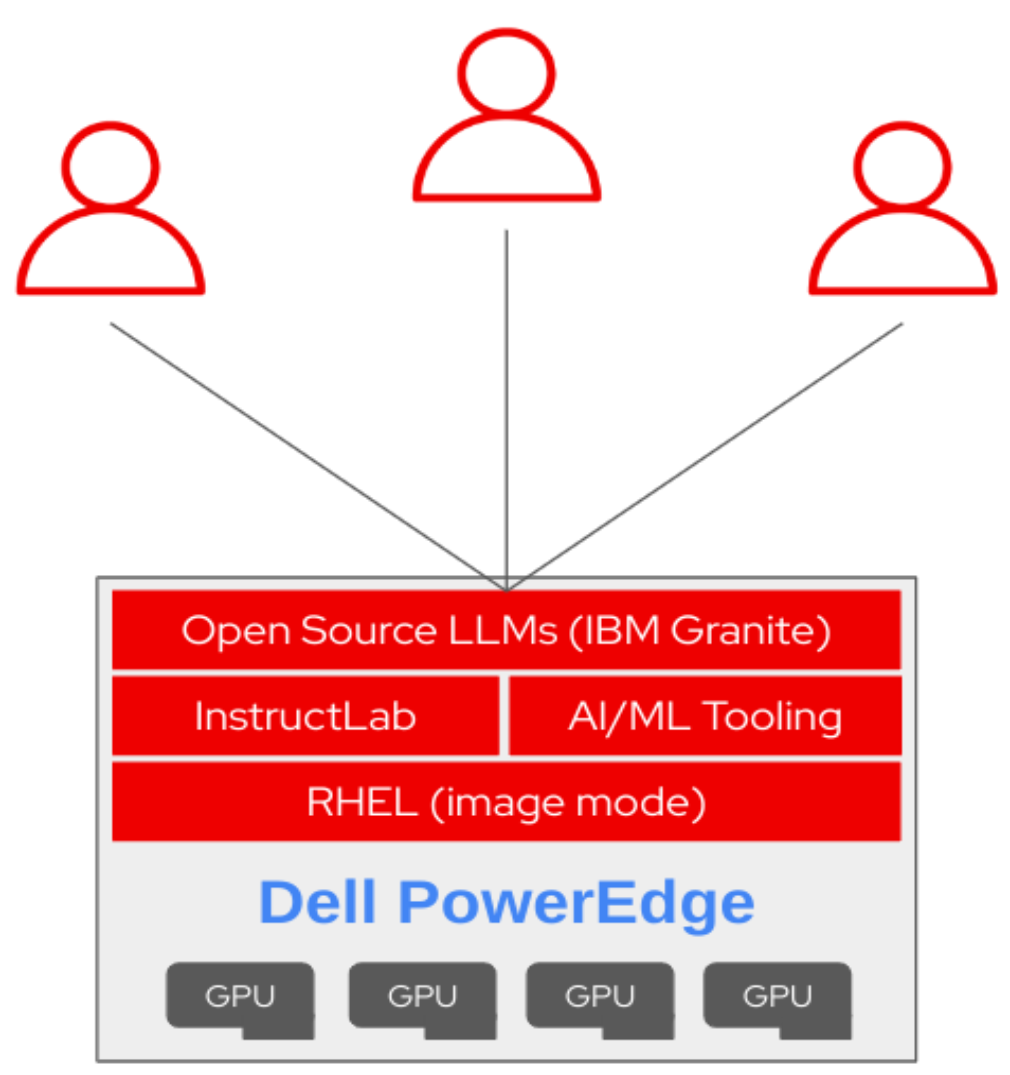

Figure 1. RHEL AI Appliance