Training the RetinaNet or ResNet18 model with TAO Toolkit and deploying it with DeepStream and Triton

As described earlier, we retrain the model on images from our target KITTI training set using the TAO tools inside the RetinaNet Jupyter Notebook retinanet.ipynb. The TAO converter then converts the TAO models, both unpruned and pruned, from the .etlt format to TensorRT inference engines. A separate engine is created for each precision: 32-bit floating point (FP32), 16-bit floating point (FP16), and 8-bit integer (INT8).

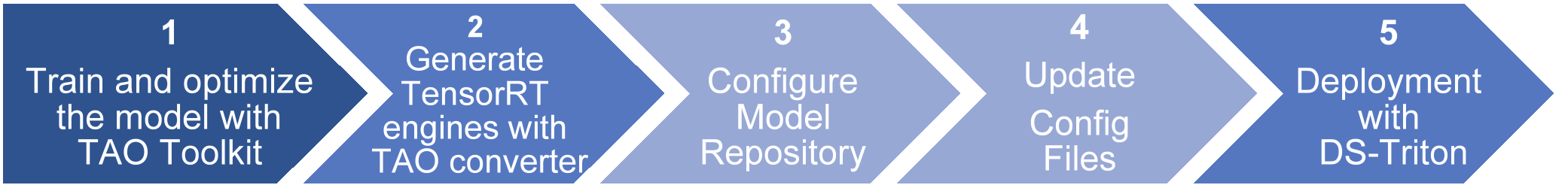

For deployment on the PowerEdge server, we used the DeepStream-Triton container image from the NVIDIA NGC catalog and ran the object detection inference task with the NVIDIA reference application DeepStream-app. The path from development to deployment consists of five steps, shown in the following figure.

Figure 5. Workflow to deploy object-detection models with DeepStream-Triton integration

This workflow breaks down into substeps as detailed below:

1: Train and optimize the model with TAO Toolkit

The training process for RetinaNet takes as inputs the training configuration files, the pre-trained weights, the datasets, and retinanet_labels.txt. The main subtasks for running the training for each epoch are:

- Load experiment spec file (retinanet_train_resnet18_kitti.txt)

- Merge specifications

- Use DALI to load the data

- Load model weights

- Set NCCL

- Train the epoch

- Save the model for the epoch

- Produce predictions

- Calculate the average precision (AP) for each class and the resulting mAP

- Validate the loss
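To make the accuracy subtask concrete, the following is a minimal sketch of how a per-class average precision and the resulting mAP can be computed from scored detections. It is an illustration only: the function names are hypothetical, the detections are assumed to be already matched against ground truth, and the all-point interpolation used here is an assumption that may differ from the averaging mode TAO applies internally.

```python
def average_precision(scores, is_true_positive, num_ground_truth):
    """Area under the precision-recall curve for one class.

    scores:            confidence of each detection (hypothetical input)
    is_true_positive:  True if that detection matched a ground-truth box
    num_ground_truth:  number of ground-truth boxes of this class
    """
    if num_ground_truth == 0:
        return 0.0

    # Walk through the detections from most to least confident.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    recalls, precisions = [], []
    for i in order:
        if is_true_positive[i]:
            tp += 1
        else:
            fp += 1
        recalls.append(tp / num_ground_truth)
        precisions.append(tp / (tp + fp))

    # Replace each precision with the best precision at any higher recall,
    # which makes the curve non-increasing (all-point interpolation).
    for k in range(len(precisions) - 2, -1, -1):
        precisions[k] = max(precisions[k], precisions[k + 1])

    # Sum the rectangles between consecutive recall values.
    ap, previous_recall = 0.0, 0.0
    for recall, precision in zip(recalls, precisions):
        ap += (recall - previous_recall) * precision
        previous_recall = recall
    return ap


def mean_average_precision(ap_per_class):
    """mAP is the unweighted mean of the per-class AP values."""
    return sum(ap_per_class.values()) / len(ap_per_class)


if __name__ == "__main__":
    # Toy values for illustration only, not results from the white paper.
    ap = {
        "car": average_precision([0.9, 0.8, 0.7], [True, False, True], 2),
        "pedestrian": average_precision([0.6, 0.5], [True, True], 2),
    }
    print(ap, mean_average_precision(ap))
```

Keeping the per-class values separate from the mean makes it easier to see which classes pull the overall mAP down after pruning or quantization.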

2: Generate TensorRT engines with TAO converter

The TAO converter converts the model into a device-specific, high-efficiency C++/CUDA TensorRT inference engine at one of three precisions: 32-bit floating point (FP32), 16-bit floating point (FP16), or 8-bit integer (INT8). These TensorRT engines are suitable to run within the DeepStream-Triton framework.
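As a rough sketch of this step, the helper below assembles one `tao-converter` command line per precision without running it. The file names, the encryption key, the input dimensions `3,384,1248`, and the output node name `NMS` are assumptions chosen for illustration and are not taken from this paper; the flags should be checked against `tao-converter -h` for the installed version.

```python
def build_converter_command(etlt_path, engine_path, precision, key,
                            input_dims="3,384,1248", output_nodes="NMS",
                            calibration_cache=None, max_batch_size=1):
    """Return the argument list for one tao-converter invocation.

    All default values are assumptions for illustration; use the values
    from your own training spec and exported model.
    """
    if precision not in ("fp32", "fp16", "int8"):
        raise ValueError(f"unsupported precision: {precision}")

    cmd = [
        "tao-converter",
        "-k", key,                  # key used when the .etlt file was exported
        "-d", input_dims,           # input dimensions as C,H,W
        "-o", output_nodes,         # output node name(s)
        "-t", precision,            # engine precision
        "-m", str(max_batch_size),  # maximum batch size of the engine
        "-e", engine_path,          # where to write the TensorRT engine
    ]
    if precision == "int8":
        # INT8 engines need the calibration cache produced at export time.
        if calibration_cache is None:
            raise ValueError("int8 conversion requires a calibration cache")
        cmd += ["-c", calibration_cache]
    cmd.append(etlt_path)
    return cmd


if __name__ == "__main__":
    for precision in ("fp32", "fp16", "int8"):
        command = build_converter_command(
            "retinanet_resnet18.etlt",                # hypothetical file name
            f"retinanet_resnet18_{precision}.engine", # hypothetical file name
            precision,
            key="YOUR_KEY",                           # placeholder
            calibration_cache="cal.bin" if precision == "int8" else None,
        )
        print(" ".join(command))
```

Because the engine is device-specific, the conversion is run on the same GPU type that will serve inference; the resulting list can be passed to `subprocess.run` inside the container that provides the converter.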

3: Configure model repository

The model repository is a configurable root directory where all the models reside. All the plug-in instances in a single process must share this same model root. The repository contains the folder with the model, the labels.txt file, and the Triton configuration file config.txt, which provides required and optional information about the model.
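The layout described above can be sketched with a few lines of Python. This is a hypothetical helper, not part of DeepStream or Triton. The numbered version subdirectory follows the usual Triton convention, and the model name and placeholder file contents are assumptions. The configuration file name defaults to the one given in the text; Triton's own documentation names the model configuration file `config.pbtxt`, so adjust `config_name` to match your setup.

```python
from pathlib import Path
import tempfile


def create_model_repository(root, model_name, labels, config_text,
                            config_name="config.txt", version="1"):
    """Create <root>/<model_name>/ with a version folder, labels, and config.

    The TensorRT engine itself is expected to be copied into the version
    folder afterwards (commonly as model.plan; treat that name as an
    assumption and check it against your Triton configuration).
    """
    model_dir = Path(root) / model_name
    (model_dir / version).mkdir(parents=True, exist_ok=True)
    (model_dir / "labels.txt").write_text("\n".join(labels) + "\n")
    (model_dir / config_name).write_text(config_text)
    return model_dir


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        model_dir = create_model_repository(
            root,
            "retinanet_resnet18",                 # hypothetical model name
            labels=["car", "cyclist", "pedestrian"],
            config_text="# model configuration goes here\n",
        )
        for path in sorted(model_dir.rglob("*")):
            print(path.relative_to(root))
```

Keeping every model under one root matters because all plug-in instances in a process resolve models relative to that same directory.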

4: Update config files

The DeepStream configuration files are in the samples folder. NVIDIA Polygraphy is a useful tool for inspecting the model and determining its input and output tensor shapes, which are required for the configuration step. The files are described below:

- source1_primary_retinanet_resnet18.txt: Reference app configuration file for using ResNet18-RetinaNet model as the primary detector.

- config_infer_primary_retinanet_resnet18.txt: Configuration file for the GStreamer nvinfer plugin for the RetinaNet detector model. In this file, we provide the inference plugin information, the input and output shapes, and the preprocessing, postprocessing, and communication facilities required by the reference application.
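Because these files use a simple `[group]` and `key=value` layout, they can be checked with Python's standard `configparser` before launching the pipeline. The sketch below parses a shortened, hypothetical `[property]` group; the engine file name and the values are placeholders rather than the contents of the actual file, and the mapping of `network-mode` to a precision follows the nvinfer convention (0 for FP32, 1 for INT8, 2 for FP16).

```python
import configparser

# Shortened, hypothetical excerpt of an nvinfer configuration file.
SAMPLE_CONFIG = """
[property]
gpu-id=0
labelfile-path=retinanet_labels.txt
model-engine-file=retinanet_resnet18_fp16.engine
batch-size=1
network-mode=2
"""

# nvinfer encodes the engine precision as an integer.
NETWORK_MODES = {0: "FP32", 1: "INT8", 2: "FP16"}


def summarize_infer_config(text):
    """Extract the settings that must agree with the generated engine."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(text)
    prop = parser["property"]
    return {
        "engine": prop.get("model-engine-file"),
        "labels": prop.get("labelfile-path"),
        "batch_size": prop.getint("batch-size"),
        "precision": NETWORK_MODES[prop.getint("network-mode")],
    }


if __name__ == "__main__":
    print(summarize_infer_config(SAMPLE_CONFIG))
```

A check like this helps catch a mismatch between the precision declared in the configuration file and the engine that was actually built.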

5: Deployment with DeepStream-Triton

The device-specific, optimized TensorRT engine is now ready to run within the DeepStream-Triton framework.