
Storage design


Ready Stack for VMware Cloud Foundation uses the Unity x50F storage platform, which is integrated with vSphere and connected to a 32 Gb FC storage network. The Unity x50F platform is a flexible all-flash storage product. Unity All Flash systems deliver consistent performance with fast response times and are well suited to mixed virtual workloads. Two Connectrix DS-6620B switches make up the FC fabrics.

VMware Cloud Foundation enables you to deploy and manage the on-board vSAN-based storage. When you create a workload domain, SDDC Manager virtualizes the on-board storage resources of the PowerEdge servers and combines them into a single vSAN. The storage resources in this single vSAN can then be apportioned according to the needs of specific applications in the workload domain. After the workload domain is deployed, all ESXi hosts in the cluster share a single vSAN datastore.

Accordingly, VMware Cloud Foundation uses on-board storage for its management domain and for the workload domains on which applications are deployed. For secondary/ancillary storage, VMware Cloud Foundation supports either on-board storage or external storage. This guide describes using VMware Cloud Foundation with Unity storage arrays, but you can also use Dell EMC PowerMax arrays for external storage.

Unity All Flash array models

The following table compares the Unity x50F storage arrays that the Ready Stack supports. The Disk Processor Enclosure (DPE) holds up to 25 SSDs and includes two storage processors. You can add drive enclosures to expand overall storage capacity.

Table 6. Unity All Flash systems comparison

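| Component | Unity 350F | Unity 450F | Unity 550F | Unity 650F |
|---|---|---|---|---|
| Minimum/maximum drives | 6/150 | 6/250 | 6/500 | 6/1000 |
| Array enclosure | 2U DPE with 25 x 2.5 in. drives | 2U DPE with 25 x 2.5 in. drives | 2U DPE with 25 x 2.5 in. drives | 2U DPE with 25 x 2.5 in. drives |
| Drive enclosure | 2U 25-drive and 3U 80-drive trays for 2.5 in. drives | 2U 25-drive and 3U 80-drive trays for 2.5 in. drives | 2U 25-drive and 3U 80-drive trays for 2.5 in. drives | 2U 25-drive and 3U 80-drive trays for 2.5 in. drives |
| RAID options | 1/0, 5, 6 | 1/0, 5, 6 | 1/0, 5, 6 | 1/0, 5, 6 |
| SAS I/O ports per array | 4 x embedded 4-lane* 12 Gb/s SAS | 4 x embedded 4-lane 12 Gb/s SAS | 4 x embedded 4-lane 12 Gb/s SAS; 6 x 4-lane, or 2 x 4-lane with I/O module | 4 x embedded 4-lane 12 Gb/s SAS; 6 x 4-lane, or 2 x 4-lane with I/O module |
| Host connectivity | 4 embedded ports: 8/16 Gb FC, 10 Gb IP/iSCSI, or 1 Gb RJ45 | 4 embedded ports: 8/16 Gb FC, 10 Gb IP/iSCSI, or 1 Gb RJ45 | 4 embedded ports: 8/16 Gb FC, 10 Gb IP/iSCSI, or 1 Gb RJ45 | 4 embedded ports: 8/16 Gb FC, 10 Gb IP/iSCSI, or 1 Gb RJ45 |
| CNA ports per array | 4 additional ports per I/O module | 4 additional ports per I/O module | 4 additional ports per I/O module | 4 additional ports per I/O module |
| Maximum raw capacity (drive type dependent) | 2.4 PB | 4.0 PB | 8.0 PB | 16.0 PB |
| IOPS (100% reads, 8K blocks) | Up to 130 K | Up to 305 K | Up to 395 K | Up to 440 K |
| I/O modules per array | Up to 4 | Up to 4 | Up to 4 | Up to 4 |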

*PCIe lane. For more information, see the Dell EMC Unity Hybrid Storage Specification Sheet.
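
As a quick sanity check on the table, dividing each model's maximum raw capacity by its maximum drive count gives the average per-drive capacity that the figures imply. The following Python sketch uses only the values from Table 6 and assumes decimal units (1 PB = 1000 TB). It is illustrative arithmetic, not a statement about which drive sizes are supported.

```python
# Values copied from Table 6. Decimal units (1 PB = 1000 TB) are an assumption.
MODELS = {
    "Unity 350F": {"max_drives": 150, "max_raw_pb": 2.4},
    "Unity 450F": {"max_drives": 250, "max_raw_pb": 4.0},
    "Unity 550F": {"max_drives": 500, "max_raw_pb": 8.0},
    "Unity 650F": {"max_drives": 1000, "max_raw_pb": 16.0},
}


def implied_tb_per_drive(max_drives, max_raw_pb):
    """Average raw capacity per drive, in TB, implied by the table maxima."""
    return max_raw_pb * 1000 / max_drives


for name, spec in MODELS.items():
    tb = implied_tb_per_drive(spec["max_drives"], spec["max_raw_pb"])
    print(f"{name}: about {tb:.1f} TB per drive at maximum configuration")
```

Under these assumptions, all four models work out to roughly 16 TB per drive, which suggests that the capacity maxima scale directly with drive count.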

Storage fabric configuration

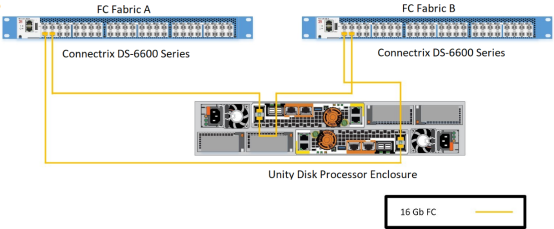

Ready Stack for VMware Cloud Foundation is configured with two FC fabrics for high availability.

The following figure illustrates the storage fabric configuration. On the Unity x50F arrays, FC port 0 on each controller (storage processor) connects to FC fabric switch A, and FC port 1 connects to FC fabric switch B. Unity x50F arrays have expansion slots (not shown in the following figure) that can provide additional front-end (FC) or back-end (mini-SAS HD) ports. For more information, see the Dell EMC Unity Family Hardware Information Guide.

Figure 7. Dell EMC Unity x50F storage fabric configuration

Storage connectivity for compute servers

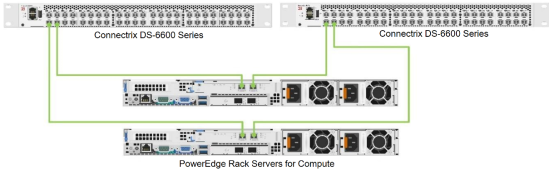

Each workload domain server that uses additional storage from the Unity array is configured with a QLogic 2692 dual-port FC adapter for connecting to the storage fabrics. Each adapter port connects to a different Connectrix switch, as shown in the following figure.

Figure 8. PowerEdge rack server SAN connectivity

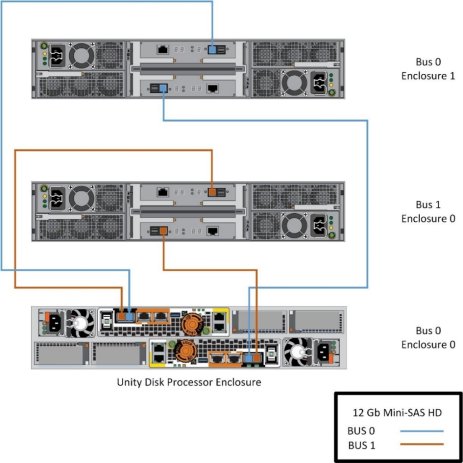

Unity x50F enclosures

Unity x50F series storage arrays are equipped with two back-end buses that use mini-SAS HD connectivity, as shown in the following figure. Connect any additional enclosures so that the load is balanced equally between the available buses. Expansion slots on the Unity x50F arrays (not shown in the following figure) can provide additional front-end or back-end (mini-SAS HD) ports. The DPE is on bus 0, so the first expansion enclosure must be on bus 1, the second on bus 0, and so on, alternating between the two buses.

Figure 9. Dell EMC Unity x50F storage array back-end connectivity
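
The alternating bus rule described in this section can be expressed as a short Python sketch. It models only the two embedded back-end buses and ignores any buses added through expansion slots. The function names and the `DAE-n` enclosure labels are hypothetical and used for illustration only.

```python
def bus_for_expansion_enclosure(n):
    """Return the back-end bus (0 or 1) for the n-th expansion enclosure.

    n is 1-based. The DPE occupies bus 0, so enclosure 1 goes on bus 1,
    enclosure 2 on bus 0, and the pattern alternates from there.
    """
    if n < 1:
        raise ValueError("expansion enclosures are numbered from 1")
    return n % 2


def bus_layout(enclosure_count):
    """Map each bus to the enclosures cabled to it, starting with the DPE."""
    layout = {0: ["DPE"], 1: []}
    for n in range(1, enclosure_count + 1):
        layout[bus_for_expansion_enclosure(n)].append(f"DAE-{n}")
    return layout


print(bus_layout(4))
# {0: ['DPE', 'DAE-2', 'DAE-4'], 1: ['DAE-1', 'DAE-3']}
```

With an even number of expansion enclosures, bus 0 carries one more enclosure than bus 1 because it also hosts the DPE. With an odd number, the two buses are evenly loaded.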

Storage configuration

For both rack server platforms, each server’s HBA port 1 connects to Connectrix switch 1, while HBA port 2 connects to Connectrix switch 2. These ports are then zoned with the Unity array target ports to enable storage access for the hypervisor hosts.
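
The cabling and zoning rules above can be sketched as data in Python. This is a planning aid under stated assumptions, not Connectrix switch syntax. It assumes that switch 1 corresponds to fabric A and switch 2 to fabric B (this guide uses both labeling schemes without mapping them), that the two storage processors are labeled SPA and SPB, and that each host HBA port gets its own zone. All host names, port labels, and zone names are hypothetical. Real zones would use WWPNs or switch aliases.

```python
# Hypothetical labels throughout; real zones use WWPNs or switch aliases.
STORAGE_PROCESSORS = ["SPA", "SPB"]  # assumed names for the two storage processors

# Array side: FC port 0 of each processor goes to fabric A, port 1 to fabric B.
ARRAY_PORT_TO_FABRIC = {0: "A", 1: "B"}

# Host side: HBA port 1 goes to switch 1, HBA port 2 to switch 2.
# Treating switch 1 as fabric A and switch 2 as fabric B is an assumption.
HBA_PORT_TO_FABRIC = {1: "A", 2: "B"}


def build_zones(hosts):
    """Return {fabric: [(zone_name, members)]} with one zone per host HBA port."""
    zones = {"A": [], "B": []}
    for host in hosts:
        for hba_port, fabric in HBA_PORT_TO_FABRIC.items():
            # Array target ports that are cabled to the same fabric.
            targets = [
                f"{sp}-FC{port}"
                for sp in STORAGE_PROCESSORS
                for port, port_fabric in ARRAY_PORT_TO_FABRIC.items()
                if port_fabric == fabric
            ]
            initiator = f"{host}-HBA{hba_port}"
            zones[fabric].append((f"z_{initiator}", [initiator] + targets))
    return zones


for fabric, zone_list in build_zones(["esxi01", "esxi02"]).items():
    for name, members in zone_list:
        print(f"fabric {fabric}: {name} -> {', '.join(members)}")
```

In this model, every host reaches both storage processors on each fabric, so the loss of one switch or one storage processor still leaves a working path.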

Unity FC storage with VMware Cloud Foundation

For important considerations when using FC storage with VMware Cloud Foundation, see VMware Cloud Foundation with FC storage.

Unity arrays and VMware vSphere

Unity All Flash systems support thin provisioning with VMware ESXi. For details about how to take advantage of thin provisioning and about using vSphere with Unity storage, see Dell EMC Unity Storage with VMware vSphere and Dell EMC Unity: Best Practices Guide.