Home > Servers > Specialty Servers > White Papers > Deploy and Finetune Llama2 70B Chat on PowerEdge XE9680 with AMD Instinct MI300X > Token generation

None

-

In this section, we show how to deploy the Llam2 70 Chat Model using vLLM, first on a single AMD Instinct MI300X GPU and then across all eight GPUs within the GPU Platform.

Deploy the Llama2 70B Chat model on a single GPU

Now, we will run vLLM serving with the Llama2 70B Chat Model.

- Start the vLLM server for the Llama 2 70B Chat Model, which has FP16 precision loaded on a single AMD Instinct MI300X Accelerator.

python3 -m vllm.entrypoints.openai.api_server --model meta-llama/Llama-2-70b-chat-hf --dtype float16 --tensor-parallel-size 1

- Execute the following curl request to verify whether vLLM successfully serves the model at the chat completion endpoint.

curl http://localhost:8000/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{

"model": "meta-llama/Llama-2-70b-chat-hf",

"max_tokens":256,

"temperature":1.0,

"messages": [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Describe AMD ROCm in 180 words."}

]

}'

The response should look as follows:

{"id":"cmpl-42f932f6081e45fa8ce7a7212cb19adb","object":"chat.completion","created":1150766,"model":"meta-llama/Llama-2-70b-chat-hf","choices":[{"index":0,"message":{"role":"assistant","content":" AMD ROCm (Radeon Open Compute MTV) is an open-source software platform developed by AMD for high-performance computing and deep learning applications. It allows developers to tap into AMD Radeon GPUs' massive parallel processing power, providing faster performance and more efficient use of computational resources. ROCm supports a variety of popular deep learning frameworks, including TensorFlow, PyTorch, and Caffe, and is designed to work seamlessly with AMD's GPU-accelerated hardware. ROCm offers features such as low-level hardware control, GPU Virtualization, and support for multi-GPU configurations, making it an ideal choice for demanding workloads like artificial intelligence, scientific simulations, and data analysis. With ROCm, developers can take full advantage of AMD's GPU capabilities and achieve faster time-to-market and better performance for their applications."},"finish_reason":"stop"}],"usage":{"prompt_tokens":42,"total_tokens":237,"completion_tokens":195}}

Deploy a Gradio Chatbot with the Llama 2 70B Chat Model

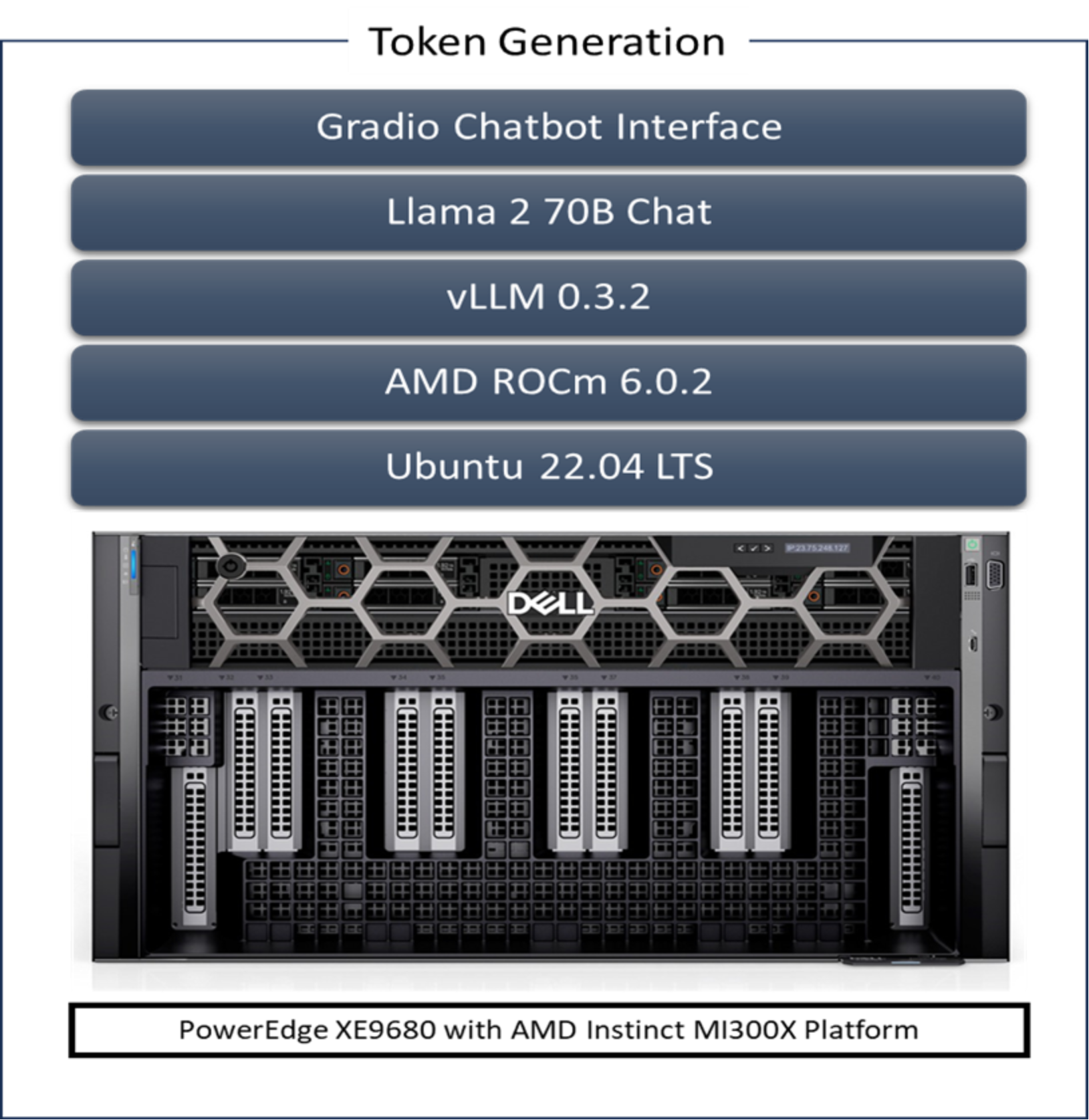

This Gradio Chatbot sends the user input query received through the user interface to the Llama 2 70B Chat Model served using vLLM. Because the vLLM server is compatible with the OpenAI Chat API, the request is sent in the OpenAI Chat API compatible format. The model generates the response based on the request sent back to the client. This response is displayed on the Gradio Chatbot user interface. The following figure illustrates the software stack.

Figure 2. Token Generation Software Stack

- If not already done, follow the instructions in the Setup section to install the AMD ROCm driver, libraries, and tools, clone the vLLM repository, build and start the vLLM ROCM Docker container, and request access to the Llama 2 Models from Meta.

- Install the prerequisites for running the chatbot.

pip3 install -U pip

pip3 install openai==1.13.3 gradio==4.20.1

- Log in to the Hugging Face CLI and enter your HuggingFace access token when prompted:

huggingface-cli login

- Start the vLLM server for Llama 2 70B Chat Model with data type FP16 on one AMD Instinct MI300X Accelerator.

python3 -m vllm.entrypoints.openai.api_server --model meta-llama/Llama-2-70b-chat-hf --dtype float16

- Run the gradio_openai_chatbot_webserver.py command from the /app/vllm/examples directory within the container with the default configurations.

cd /app/vllm/examples

python3 gradio_openai_chatbot_webserver.py --model meta-llama/Llama-2-70b-chat-hf

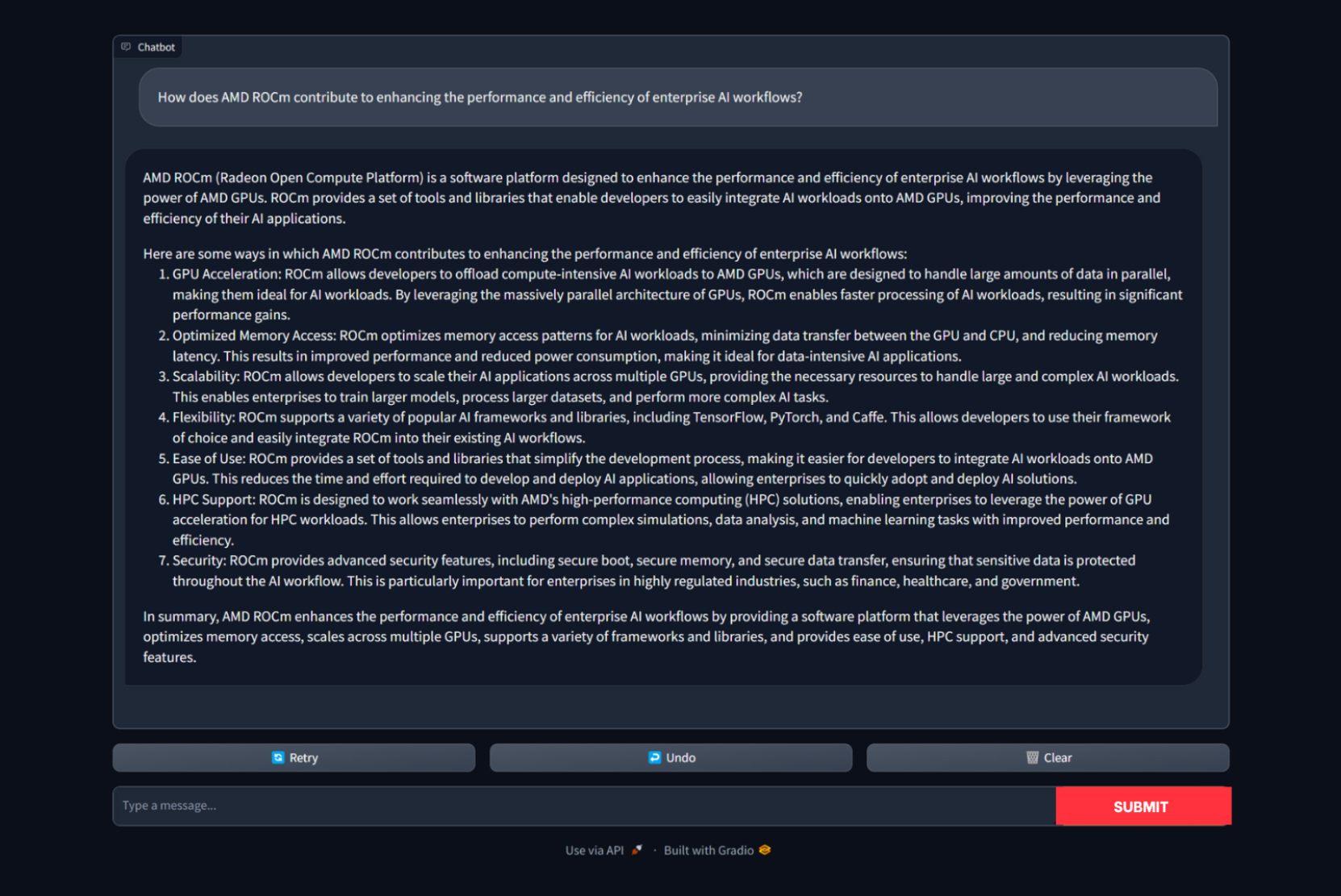

The Gradio chatbot will run on port 8001 and can be accessed using the URL http://localhost:8001. The query passed to the chatbot is “Describe the features of AMD ROCm in 5 points.” The output conversation with the chatbot is shown here:

Deploy eight instances of the Llama2 70B Chat Model across eight GPUs

Now that we have successfully deployed the Llama 2 70B Chat Model on a single GPU, it is time to maximize the capabilities of the Dell PowerEdge XE9680 server. We can capitalize on its potential by deploying eight concurrent Llama 2 70B Chat Model instances, each using FP16 precision. By harnessing the power of the 8x AMD Instinct MI300X Accelerators, we can significantly increase our capacity to handle multiple users simultaneously and achieve higher throughput. We can accomplish this by performing eight parallel vLLM serving deployments, ensuring efficient resource usage and enhancing overall performance.

To simplify the deployment of vLLM across multiple GPUs, we employ a Kubernetes-based stack. This stack comprises a Kubernetes Deployment featuring eight vLLM serving replicas alongside a Kubernetes Service tasked with exposing all vLLM serving replicas through a unified endpoint. Leveraging a round-robin strategy, the Kubernetes Service efficiently distributes requests across the vLLM serving replicas, optimizing resource usage and enhancing overall performance.

Required prerequisites

- Any Kubernetes distribution on the server.

- AMD GPU device plugins for Kubernetes set up on the installed Kubernetes distribution.

- A Kubernetes secret that grants access to the container registry, facilitating Kubernetes deployment.

Now, we deploy Llama2 Chat across all eight GPUs.

- If not already done, follow the instructions in the Setup section to install the AMD ROCm driver, libraries, and tools, clone the vLLM repository, build the vLLM ROCM Docker container, and request access to the Llama 2 Models from Meta. Push the built vllm-rocm:latest image to the container registry of your choice.

- Create a deployment yaml file “multi-vllm.yaml” based on the following sample:

# vllm deployment

apiVersion: apps/v1

kind: Deployment

metadata:

name: vllm-serving

namespace: default

labels:

app: vllm-serving

spec:

selector:

matchLabels:

app: vllm-serving

replicas: 8

template:

metadata:

labels:

app: vllm-serving

spec:

containers:

- name: vllm

image: container-registry/vllm-rocm:latest # update the container registry name

args: [

"python3", "-m", "vllm.entrypoints.openai.api_server",

"--model", "meta-llama/Llama-2-70b-chat-hf"

]

env:

- name: HUGGING_FACE_HUB_TOKEN

value: "" # add your huggingface token with Llama 2 models access

resources:

requests:

cpu: 15

memory: 150G

amd.com/gpu: 1 # each replica is allocated 1 GPU

limits:

cpu: 15

memory: 150G

amd.com/gpu: 1

imagePullSecrets:

- name: cr-login # kubernetes container registry secret

---

# nodeport service with round robin load balancing

apiVersion: v1

kind: Service

metadata:

name: vllm-serving-service

namespace: default

spec:

selector:

app: vllm-serving

type: NodePort

ports:

- name: vllm-endpoint

port: 8000

targetPort: 8000

nodePort: 30800 # the external port endpoint to access the serving

- Deploy the multi vLLM serving using the deployment configuration with kubectl. This will deploy eight replicas of vLLM serving using the Llama 2 70B Chat Model with FP16 precision.

kubectl apply -f multi-vllm.yaml

- Execute the following curl request to verify whether the model is successfully served at the chat completion endpoint at port 30800.

curl http://localhost:30800/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{

"model": "meta-llama/Llama-2-70b-chat-hf",

"max_tokens":256,

"temperature":1.0,

"messages": [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Describe AMD ROCm in 180 words."}

]

}'

The response should look as follows:

{"id":"cmpl-42f932f6081e45fa8ce7dnjmcf769ab","object":"chat.completion","created":1150766,"model":"meta-llama/Llama-2-70b-chat-hf","choices":[{"index":0,"message":{"role":"assistant","content":" AMD ROCm (Radeon Open Compute MTV) is an open-source software platform developed by AMD for high-performance computing and deep learning applications. It allows developers to tap into the massive parallel processing power of AMD Radeon GPUs, providing faster performance and more efficient use of computational resources. ROCm supports a variety of popular deep learning frameworks, including TensorFlow, PyTorch, and Caffe, and is designed to work seamlessly with AMD's GPU-accelerated hardware. ROCm offers features such as low-level hardware control, GPU Virtualization, and support for multi-GPU configurations, making it an ideal choice for demanding workloads like artificial intelligence, scientific simulations, and data analysis. With ROCm, developers can take full advantage of AMD's GPU capabilities and achieve faster time-to-market and better performance for their applications."},"finish_reason":"stop"}],"usage":{"prompt_tokens":42,"total_tokens":237,"completion_tokens":195}}