Home > Servers > Specialty Servers > White Papers > Deploy and Finetune Llama2 70B Chat on PowerEdge XE9680 with AMD Instinct MI300X > Solution approach

Solution approach

-

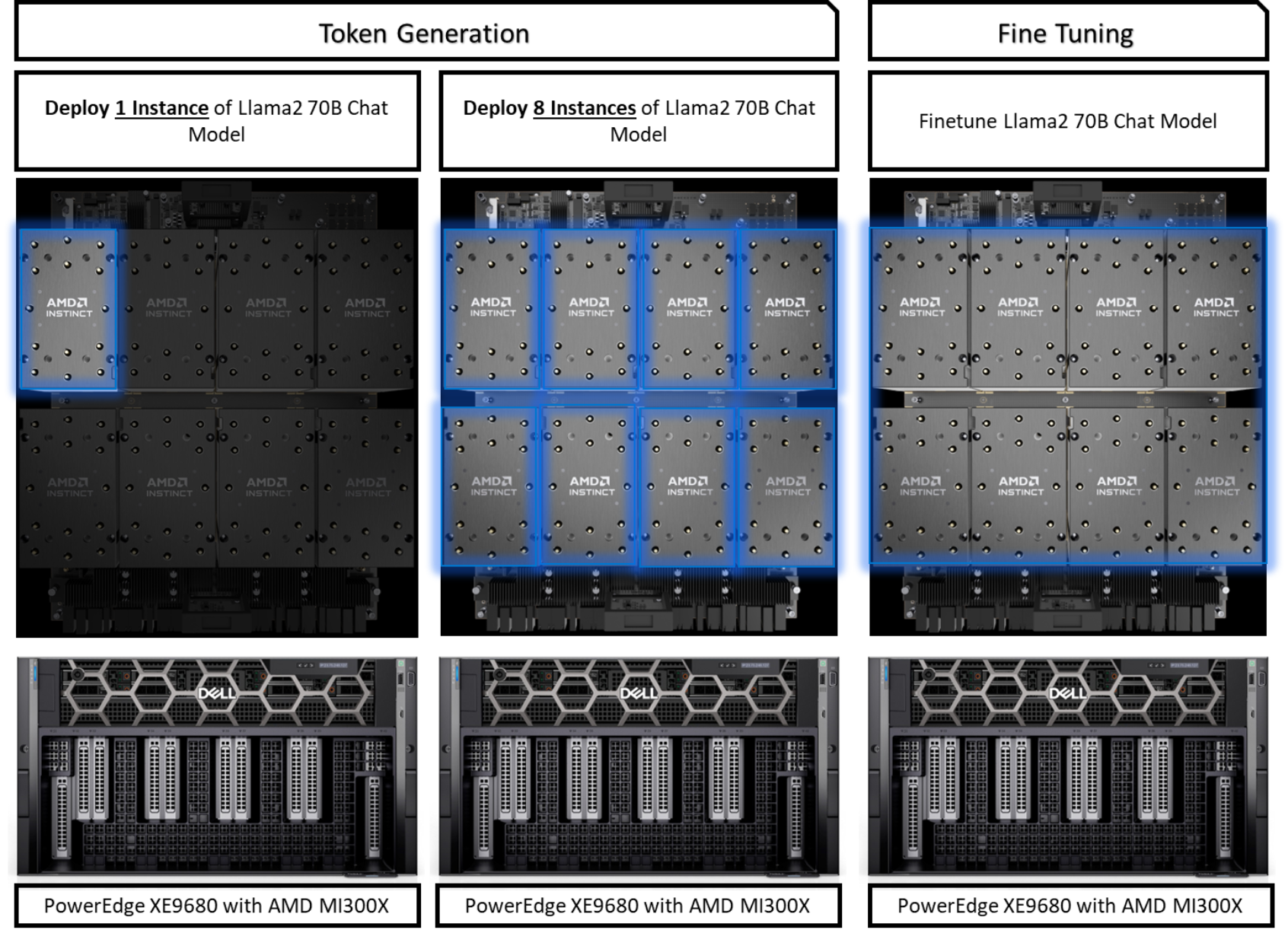

Considering the rapid evolution of generative AI models, enterprises must address every facet of the AI solution lifecycle, encompassing fine-tuning and token generation. This solution capitalizes on the formidable capabilities of the Dell PowerEdge XE9680, paired with AMD Instinct MI300X Accelerators, addressing both use cases. Boasting a remarkable 192GB of HBM3 memory per accelerator, this configuration facilitates the seamless deployment of the memory-intensive Llama2 70B Chat Model on a single GPU. This not only optimizes computational efficiency but also curtails hardware footprint. This setup also enables the deployment of up to eight instances of the model across all eight accelerators on a single server, thereby enabling enterprise scalability. Also, the solution entails fine-tuning the Llama2 70B Chat Model on a single server with FP16 precision, a pivotal measure to guarantee top-notch, precise output from the AI chat solution.

Token generation

Token generation, particularly with a Large Language Model (LLM), is essential when considering the memory requirements, typically around 1.2 times the model size on a GPU. In FP16 precision, this estimation translates to approximately two bytes per parameter, multiplied by the total number of model parameters. For instance, employing FP16 precision, the Llama 2 70B Chat Model requires a minimum of 168 GB of GPU memory for inference. A single AMD Instinct MI300X Accelerator, with 192 GB of GPU memory, is fully equipped to accommodate the entirety of a Llama 2 70B model for inference.

Fine-tuning

Fine-tuning empowers enterprises to craft bespoke models tailored to their unique datasets by capitalizing on the pre-existing knowledge embedded within pre-trained models. This approach significantly mitigates the need for extensive labeled data and reduces the training duration, in contrast to the laborious process of training a model from scratch. Consequently, fine-tuning emerges as an efficient methodology, optimizing computational resource usage and training time. This strategic refinement enhances competitiveness and makes it easier to deploy high-performing AI solutions, allowing swift adaptation to evolving business requirements.

The following figure illustrates the three step-by-step AI deployment scenarios when using the PowerEdge XE9680 with AMD Instinct MI300X Accelerators.

Figure 1. Llama2 70B chat model deployment scenarios