
Setup


In this section, we provision the server, which is configured as follows:

OS: Ubuntu 22.04.4 LTS

Kernel version: 5.15.0-94-generic

Docker version: 25.0.3, build 4debf41

ROCm version: 6.0.2

Server: Dell PowerEdge XE9680

GPU: 8x AMD Instinct MI300X Accelerators

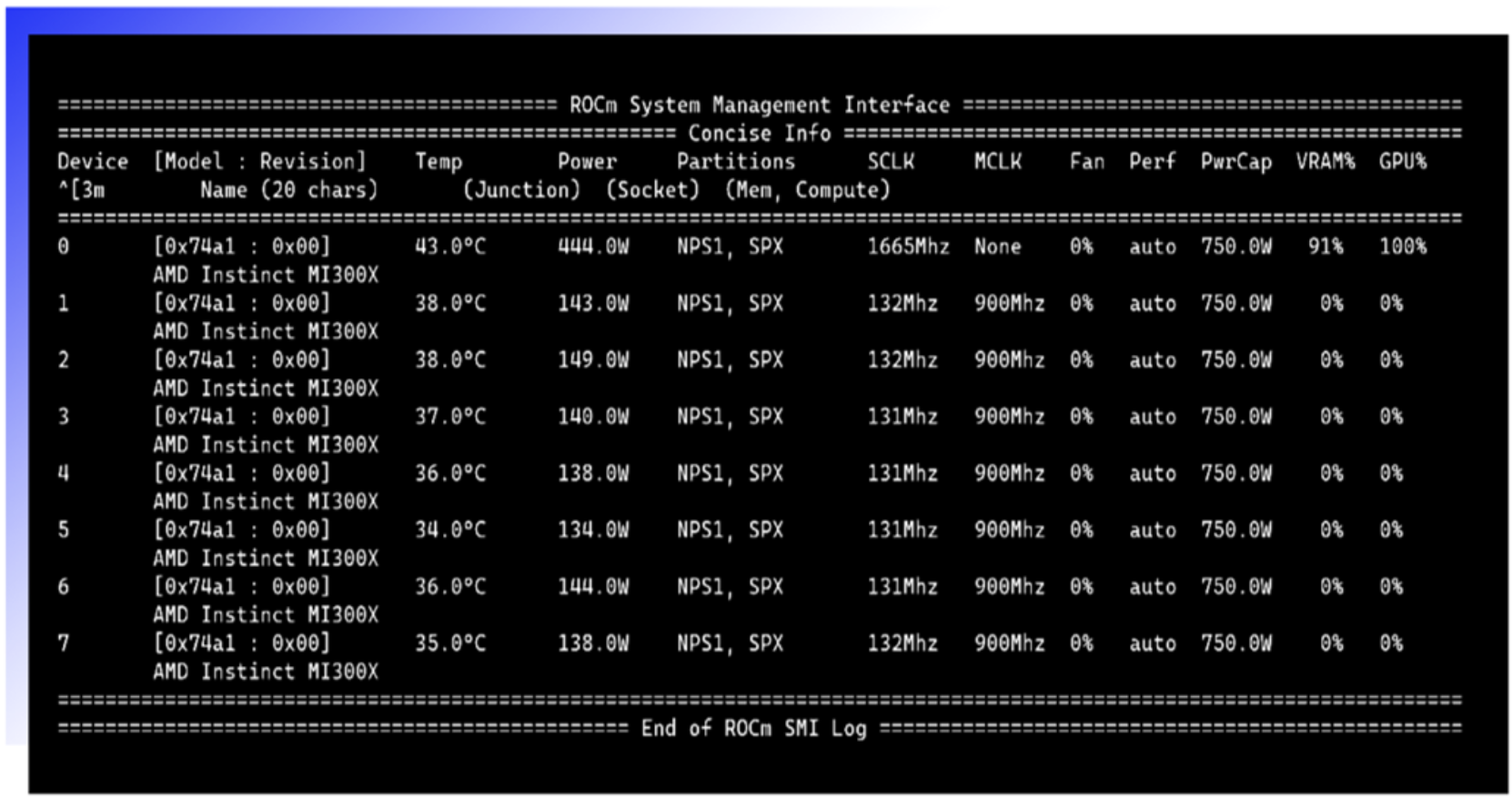
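To compare another host against the reference configuration listed above, a small preflight script can check the reported versions. This is a minimal sketch, assuming GNU `sort -V` is available; the function names `version_ge`, `parse_docker_version`, and `check_host` are hypothetical, and the reference versions are simply the tested configuration above, not documented minimum requirements.

```shell
#!/usr/bin/env bash
# Hypothetical preflight helpers. Reference versions come from the tested
# configuration above; they are NOT official minimum requirements.

# Succeeds if version $1 >= version $2, using GNU sort's version ordering.
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n1)" = "$2" ]
}

# Extracts "25.0.3" from a string like "Docker version 25.0.3, build 4debf41".
parse_docker_version() {
  sed -E 's/^Docker version ([0-9.]+).*/\1/' <<<"$1"
}

# Prints one line per component and returns 1 if any is older than the reference.
check_host() {
  local status=0 kernel docker_ver
  [ -r /etc/os-release ] &&
    echo "OS: $(. /etc/os-release && echo "$PRETTY_NAME") (reference: Ubuntu 22.04.4 LTS)"
  kernel="$(uname -r)"       # e.g. 5.15.0-94-generic
  kernel="${kernel%%-*}"     # keep only the numeric part, e.g. 5.15.0
  if version_ge "$kernel" 5.15.0; then
    echo "kernel $kernel: ok"
  else
    echo "WARNING: kernel $kernel is older than 5.15.0"; status=1
  fi
  if command -v docker >/dev/null 2>&1; then
    docker_ver="$(parse_docker_version "$(docker --version)")"
    if version_ge "$docker_ver" 25.0.3; then
      echo "docker $docker_ver: ok"
    else
      echo "WARNING: docker $docker_ver is older than 25.0.3"; status=1
    fi
  else
    echo "WARNING: docker not found"; status=1
  fi
  return "$status"
}
```

After sourcing the script, run `check_host`. Newer versions are reported as ok; only older ones produce a warning, since the listed versions are what was tested rather than a hard requirement.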

- Install the AMD ROCm driver, libraries, and tools. Follow the detailed installation instructions for your Linux-based platform. To verify that the installation succeeded, check the GPU information with rocm-smi.
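Before or alongside `rocm-smi`, it can help to confirm that the device nodes the container will later need (`/dev/kfd` and `/dev/dri`) exist. The sketch below is a hypothetical helper, not part of ROCm; it assumes each GPU exposes one `renderD*` node, which is typical but not guaranteed, because other display devices on the host can add nodes too.

```shell
#!/usr/bin/env bash
# Hypothetical ROCm sanity check. /dev/kfd and /dev/dri are the same device
# nodes that are later passed to the vLLM container.

# Counts DRM render nodes (renderD*) in a directory, /dev/dri by default.
# Treat the count as a hint: non-AMD display devices can add extra nodes.
count_render_nodes() {
  local dir="${1:-/dev/dri}" n=0 node
  for node in "$dir"/renderD*; do
    [ -e "$node" ] && n=$((n + 1))
  done
  echo "$n"
}

check_rocm() {
  local expected="${1:-8}" found   # 8x MI300X in the reference system
  if [ ! -e /dev/kfd ]; then
    echo "/dev/kfd missing: is the amdgpu driver loaded?"; return 1
  fi
  found="$(count_render_nodes)"
  echo "render nodes found: $found (expected at least $expected)"
  if ! command -v rocm-smi >/dev/null 2>&1; then
    echo "rocm-smi not on PATH"; return 1
  fi
  rocm-smi                         # prints the per-GPU summary table
  [ "$found" -ge "$expected" ]
}
```

On the reference system, `check_rocm 8` should list all eight accelerators in the `rocm-smi` table and exit with status 0.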

- Clone version 0.3.2 of the vLLM GitHub repository:

git clone -b v0.3.2 https://github.com/vllm-project/vllm.git
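Because `git clone -b` with a tag name leaves a detached HEAD on that tag, an exact-match `git describe` should print the tag back. The helper below is a hypothetical sketch for confirming that the checkout is really at the intended release.

```shell
#!/usr/bin/env bash
# Hypothetical helper: confirm that a checkout sits exactly on the expected tag.
verify_checkout_tag() {
  local dir="$1" expected="$2" actual
  # --exact-match fails unless HEAD is exactly on a tag; --tags also
  # considers lightweight tags.
  actual="$(git -C "$dir" describe --tags --exact-match 2>/dev/null)" || {
    echo "$dir: HEAD is not on a tag"; return 1; }
  if [ "$actual" != "$expected" ]; then
    echo "$dir: on $actual, expected $expected"; return 1
  fi
  echo "$dir: on $expected"
}
```

For the clone above, run `verify_checkout_tag vllm v0.3.2` from the directory that contains it.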

- Build the Docker container from the Dockerfile.rocm file inside the cloned vLLM repository.

cd vllm

sudo docker build -f Dockerfile.rocm -t vllm-rocm:latest .
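`docker build` requires a build context as its final argument; using the repository root (`.`) makes the cloned files available to the build. The wrapper below is a hypothetical sketch that fails early with a readable message if the clone is missing, instead of letting `docker build` report a missing Dockerfile.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the build step. The trailing "." is the build
# context (the repository root), which docker build requires.
build_vllm_image() {
  local repo="${1:-vllm}" tag="${2:-vllm-rocm:latest}"
  if [ ! -f "$repo/Dockerfile.rocm" ]; then
    echo "no Dockerfile.rocm in $repo; did the clone succeed?" >&2
    return 1
  fi
  # Subshell so the caller's working directory is left unchanged.
  (cd "$repo" && sudo docker build -f Dockerfile.rocm -t "$tag" .)
}
```

Run `build_vllm_image vllm` from the directory that contains the clone.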

- Start the vLLM ROCm Docker container and open the container shell using the following command.

sudo docker run -it \
  --name vllm \
  --network=host \
  --device=/dev/kfd \
  --device=/dev/dri \
  --shm-size 16G \
  --group-add=video \
  --workdir=/ \
  vllm-rocm:latest bash
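Since `--name vllm` makes a second `docker run` fail with a name conflict, a small helper can reattach to an existing container instead. This is a hypothetical sketch: it uses the same options as the command above, held in a bash array so that no line continuation can be broken by a stray blank line.

```shell
#!/usr/bin/env bash
# Hypothetical helper: start the vLLM ROCm container, or reattach to it if a
# container with the same name already exists.
start_vllm_container() {
  local name="${1:-vllm}" image="${2:-vllm-rocm:latest}"
  local -a run_args
  # Same options as the docker run command above. /dev/kfd is the ROCm
  # compute interface and /dev/dri holds the GPU render nodes.
  run_args=(
    -it
    --name "$name"
    --network=host
    --device=/dev/kfd
    --device=/dev/dri
    --shm-size 16G
    --group-add=video
    --workdir=/
  )
  if sudo docker container inspect "$name" >/dev/null 2>&1; then
    sudo docker start -ai "$name"   # reattach to the existing container
  else
    sudo docker run "${run_args[@]}" "$image" bash
  fi
}
```

Reattaching keeps anything already downloaded inside the container; to start from a clean container instead, remove it first with `sudo docker rm vllm`.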

- Request access to the Llama 2 70B Chat model from Meta and on Hugging Face. When your request is approved, log in with the Hugging Face CLI and enter your Hugging Face access token when prompted:

huggingface-cli login
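For scripted setups, the login can also be done non-interactively. The sketch below assumes the token is stored in an `HF_TOKEN` environment variable (a naming choice made here) and falls back to the interactive prompt otherwise; `huggingface-cli whoami` then confirms which account the stored token belongs to. Note that passing the token with `--token` briefly exposes it in the process list.

```shell
#!/usr/bin/env bash
# Hypothetical helper: log in to Hugging Face, non-interactively when the
# HF_TOKEN environment variable is set, otherwise via the interactive prompt.
hf_login() {
  if ! command -v huggingface-cli >/dev/null 2>&1; then
    echo "huggingface-cli not found (pip install -U huggingface_hub)" >&2
    return 1
  fi
  if [ -n "${HF_TOKEN:-}" ]; then
    huggingface-cli login --token "$HF_TOKEN"
  else
    huggingface-cli login
  fi && huggingface-cli whoami   # show the account tied to the stored token
}
```

Inside the container, `HF_TOKEN=<your token> hf_login` logs in without a prompt; plain `hf_login` behaves like the command above.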