Home > Servers > Specialty Servers > White Papers > Deploy and Finetune Llama2 70B Chat on PowerEdge XE9680 with AMD Instinct MI300X > Fine-tuning

None

-

Having successfully deployed the Llama-2 70B Chat Model on both a single GPU and eight concurrent GPUs, the next step is to explore fine-tuning options using Hugging Face Accelerate.

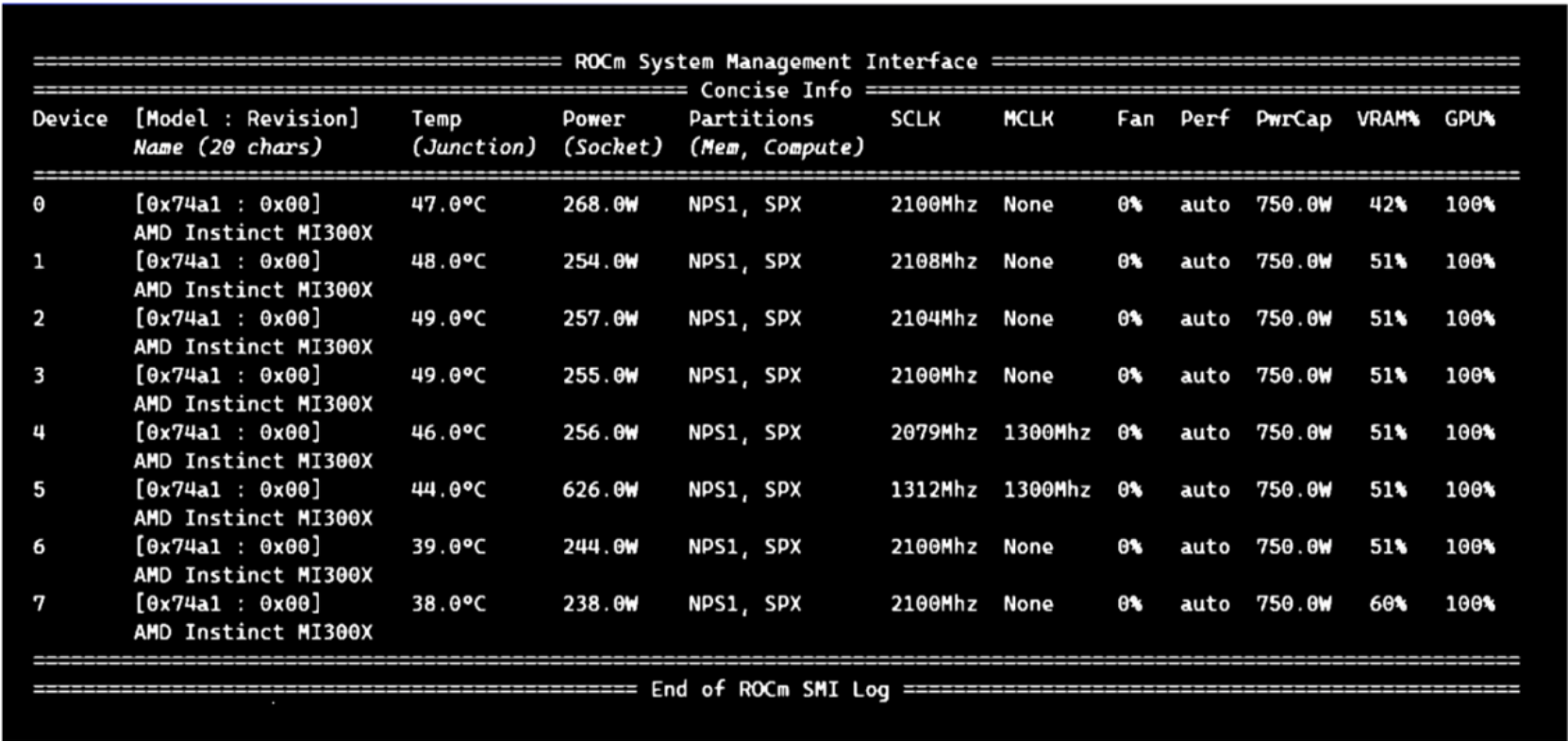

Figure 3. Fine-tuning Software Stack

As shown in Figure 3, the fine-tuning software stack begins with the AMD ROCm PyTorch image serving as the base, offering a tailored PyTorch library for optimal fine-tuning. Leveraging the Hugging Face Transformers library alongside Hugging Face Accelerate, facilitates multi-GPU fine-tuning capabilities. The Llama 2 70B Chat Model will be fine-tuned with FP16 precision, using the Guanaco-1k dataset from Hugging Face on eight AMD Instinct MI300X Accelerators.

In this scenario, we will perform full parameter fine-tuning of the Llama 2 70B Chat Model. Although you can also implement fine-tuning using optimized techniques such as Low-Rank Adaptation of Large Language Models (LoRA) on accelerators with smaller memory footprints, performance tradeoffs exist on specific complex objectives. These nuances are addressed by entire parameter fine-tuning methods, which generally require accelerators that support significant memory requirements.

Fine-tuning Llama 2 70B on 8x AMD Instinct MI300X Accelerators

Fine-tune the Llama 2 70B Chat Model with FP16 precision for question and answer tasks using the mlabonne/guanaco-llama2-1k dataset on the 8X AMD Instinct MI300X Accelerators.

- If not already done, install the AMD ROCm driver, libraries, and tools and request access to the Llama 2 Models from Meta following the instructions in the Setup section.

- Start the fine-tuning Docker container with the AMD ROCm PyTorch base image. The following command opens a shell within the Docker container.

sudo docker run -it \

--name fine-tuning \

--network=host \

--device=/dev/kfd \

--device=/dev/dri \

--shm-size 16G \

--group-add=video \

--workdir=/ \

rocm/pytorch:rocm6.0.2_ubuntu22.04_py3.10_pytorch_2.1.2 bash

- Install the necessary Python prerequisites.

pip3 install -U pip

pip3 install transformers==4.38.2 trl==0.7.11 datasets==2.18.0

- Log in to the Hugging Face CLI and enter your HuggingFace access token when prompted.

huggingface-cli login

- Import the required Python packages.

from datasets import load_dataset

from transformers import (

AutoModelForCausalLM,

AutoTokenizer,

TrainingArguments,

pipeline

)

from trl import SFTTrainer

- Load the Llama 2 70B Chat Model and the mlabonne/guanaco-llama2-1k dataset from Hugging Face.

# load the model and tokenizer

base_model_name = "meta-llama/Llama-2-70b-chat-hf"

# tokenizer parameters

llama_tokenizer = AutoTokenizer.from_pretrained(base_model_name, trust_remote_code=True)

llama_tokenizer.pad_token = llama_tokenizer.eos_token

llama_tokenizer.padding_side = "right"

# load the based model

base_model = AutoModelForCausalLM.from_pretrained(

base_model_name,

device_map="auto",

)

base_model.config.use_cache = False

base_model.config.pretraining_tp = 1

# load the dataset from huggingface

dataset_name = "mlabonne/guanaco-llama2-1k"

training_data = load_dataset(dataset_name, split="train")

- Define fine-tuning configurations and start fine-tuning for one epoch. The fine-tuned model will be saved in the finetuned_llama2_70b directory.

# fine tuning parameters

train_params = TrainingArguments(

output_dir="./runs",

num_train_epochs=1, # fine tuning for 1 epochs

per_device_train_batch_size=8 # setting per GPU batch size

)

# define the trainer

fine_tuning = SFTTrainer(

model=base_model,

train_dataset=training_data,

dataset_text_field="text",

tokenizer=llama_tokenizer,

args=train_params,

max_seq_length=512

)

# start the fine-tuning run

fine_tuning.train()

# save the fine tuned model

fine_tuning.model.save_pretrained("finetuned_llama2_70b")

print("Fine-tuning completed")

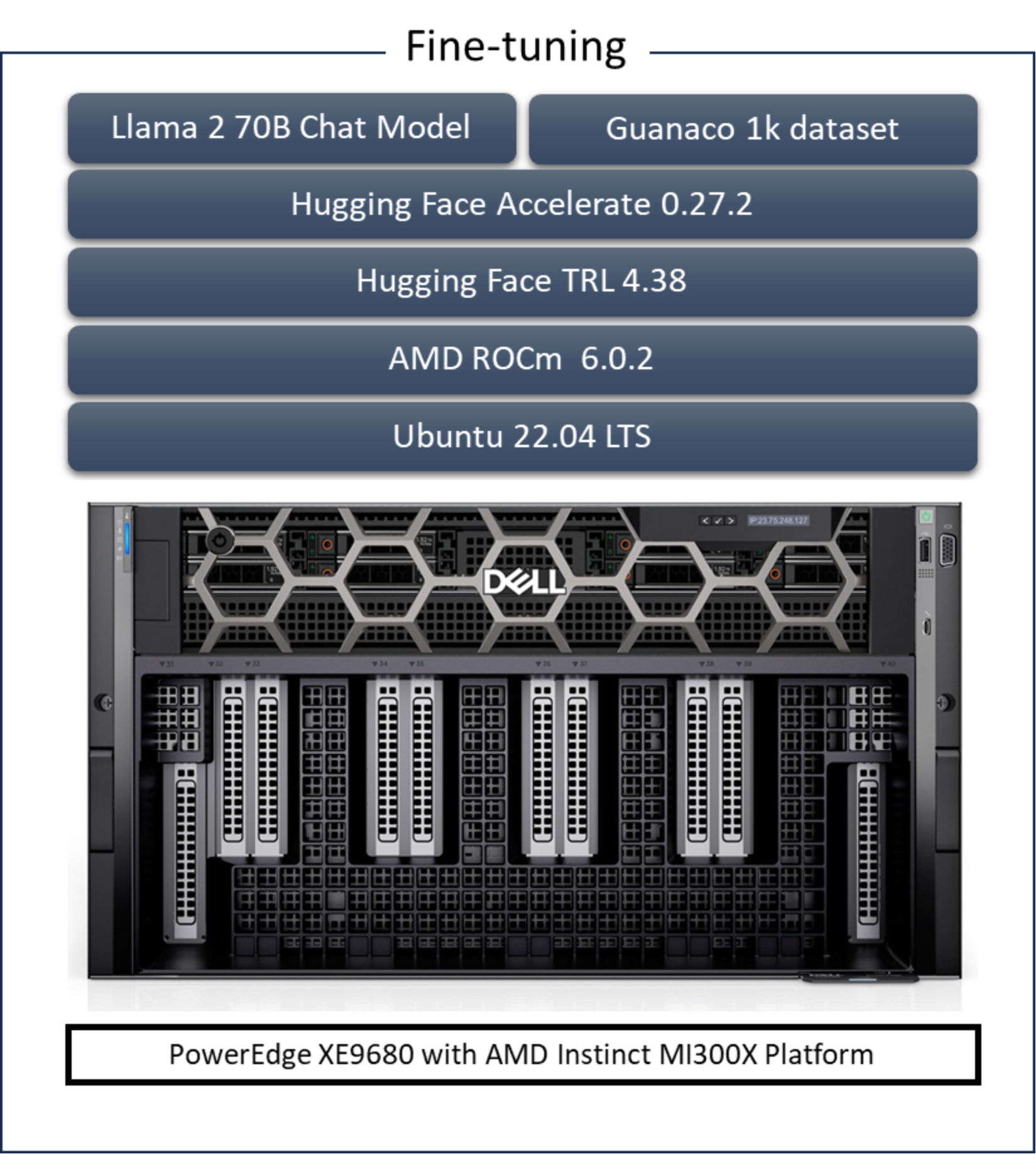

- Use the rocm-smi command to observe GPU utilization while fine-tuning.