
Storage I/O Control resource share and limits

The second step in the setup of Storage I/O Control is to allocate the number of storage IO shares and upper limit of IO operations per second (IOPS) allowed for each virtual machine. When storage IO congestion is detected for a datastore, the IO workloads of the virtual machines accessing that datastore are adjusted according to the proportion of virtual machine shares each VM has been allocated.

Shares

Storage IO shares are similar to those used for memory and CPU resource allocation. They represent the relative priority of a virtual machine with regard to the distribution of storage IO resources.

Under resource contention, VMs with higher share values have greater access to the storage array, which typically results in higher throughput and lower latency. There are three default values for shares: Low (500), Normal (1000), and High (2000), as well as a fourth option, Custom. Choosing Custom allows a user-defined amount to be set.

IOPS

In addition to setting the shares, one can limit the IOPS that are permitted for a VM.[1] By default, IOPS are unlimited, as the limit has no direct correlation with the shares setting. To limit by MB per second instead, convert the target to IOPS using the typical IO size for that VM. For example, to restrict a backup application with 64 KB IOs to 10 MB per second, set a limit of 160 IOPS (10,240 KB / 64 KB).
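The conversion above can be sketched in a few lines of Python. This is only an illustration of the arithmetic; the function name and parameters are hypothetical, and it assumes 1 MB = 1024 KB, as the worked example does.

```python
def iops_limit_for_throughput(mb_per_second: int, io_size_kb: int) -> int:
    """Convert a MB/s target into an IOPS limit for a typical IO size.

    Assumes 1 MB = 1024 KB. The result is rounded down so that the
    limit never allows more than the requested throughput.
    """
    if mb_per_second <= 0 or io_size_kb <= 0:
        raise ValueError("throughput and IO size must be positive")
    kb_per_second = mb_per_second * 1024
    return kb_per_second // io_size_kb


# Backup application issuing 64 KB IOs, capped at 10 MB per second.
print(iops_limit_for_throughput(10, 64))
```

The estimate is only as good as the assumed IO size. A VM with mixed IO sizes will exceed or fall short of the MB per second target at the same IOPS limit.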

One should allocate storage IO resources to virtual machines based on priority by assigning a relative number of shares to the VM. Unless virtual machine workloads are very similar, shares do not necessarily dictate allocation in terms of IO operations or MBs per second.

Higher shares allow a virtual machine to keep more concurrent IO operations pending at the storage device or datastore compared to a virtual machine with lower shares. Therefore, two virtual machines might experience different throughput based on their workloads.
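To make the proportional nature of shares concrete, the following Python sketch divides a fixed number of outstanding IO slots among VMs according to their shares. It is a simplified model, not VMware's actual scheduler: the function name, the queue depth of 30, and the share levels assigned to the two VMs are assumptions chosen for illustration.

```python
from typing import Dict

# Default share levels named in this section.
SHARE_LEVELS = {"low": 500, "normal": 1000, "high": 2000}


def queue_slots(vm_shares: Dict[str, int], queue_depth: int) -> Dict[str, int]:
    """Split queue_depth outstanding-IO slots in proportion to shares.

    Integer arithmetic is used, so fractional slots are dropped.
    """
    total = sum(vm_shares.values())
    if total <= 0:
        raise ValueError("total shares must be positive")
    return {vm: queue_depth * shares // total for vm, shares in vm_shares.items()}


# Hypothetical assignment: one VM at High, one at Normal.
shares = {
    "STAR_VM": SHARE_LEVELS["high"],
    "STORAGE_IO_VM": SHARE_LEVELS["normal"],
}
print(queue_slots(shares, queue_depth=30))
```

In this model the VM with High shares holds twice as many pending IOs as the VM with Normal shares. Whether that translates into twice the IOPS or MB per second depends on each VM's IO size and access pattern.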

Setting Storage I/O Control resource shares and limits

The screen to edit shares and IOPS can be accessed from a number of places within the vSphere Client. Perhaps the easiest is to select the VMs tab of the datastore on which Storage I/O Control has been enabled. In Figure 135 there are two VMs listed for the datastore STORAGE_IO: STAR_VM and STORAGE_IO_VM. To set the appropriate SIOC policy, the settings of the VM need to be changed. This can be achieved by right-clicking the chosen VM and selecting Edit Settings.

Figure 135. Edit settings for VM for SIOC

Within the VM Properties screen shown in Figure 136, expand each hard disk to see both the Shares and the IOPS limit. Notice that each hard disk has the defaults of Normal for Shares and Unlimited for IOPS.

Figure 136. Default VM disk resource allocation

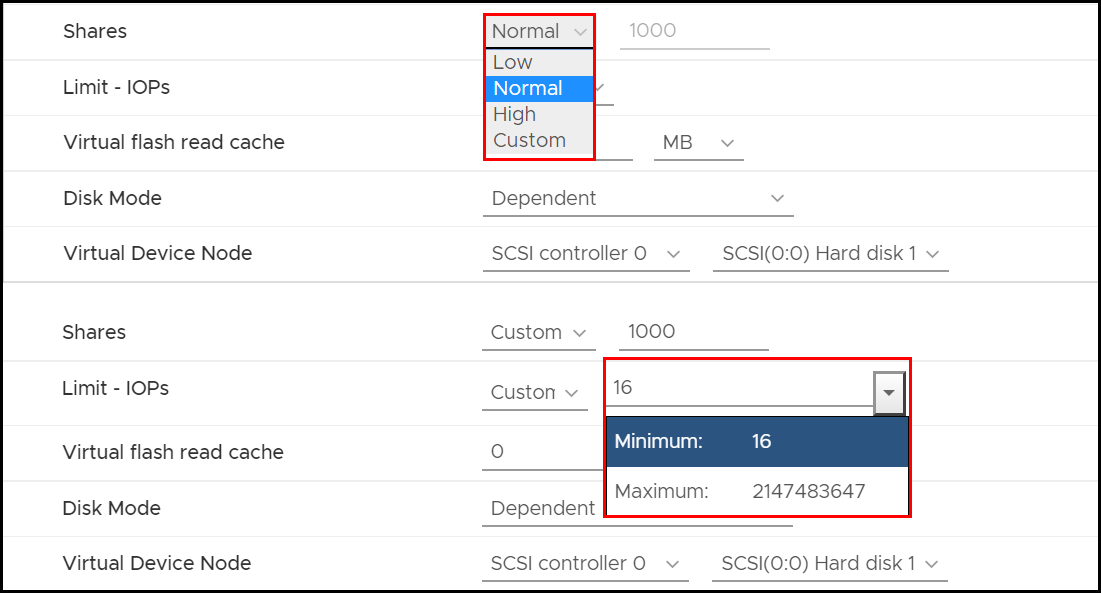

Using the drop-down boxes within the Shares and IOPS columns, one can change the values. Figure 137 shows that the Shares can be adjusted to Low (500), Normal (1000), or High (2000) as well as any custom value. Limit - IOPs are either unlimited or any custom value between the minimum and maximum.

Figure 137. Adjusting SIOC for an individual VM

Changing Congestion Threshold for Storage I/O Control

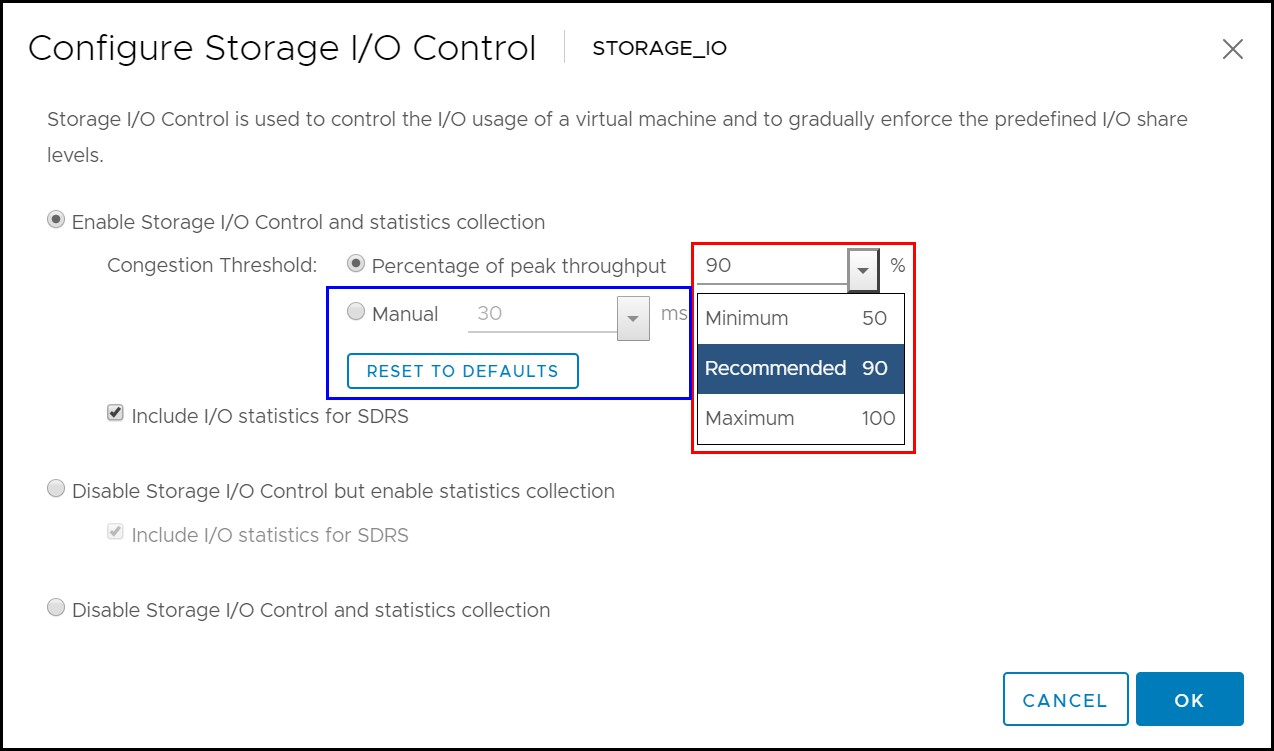

It is possible to change the Congestion Threshold, i.e., the value at which VMware begins implementing the shares and IOPS settings for a VM. By default, VMware dynamically determines the best congestion threshold for each device. VMware does this by determining the peak latency value of a device and setting the threshold of that device to 90% of that value. This is seen in Figure 133. For example, a device that peaks at 10 ms will have its threshold set to 9 ms. If the user wishes to change the percentage, that can be done within the vSphere Client.

Figure 138 demonstrates how the percentage can be changed to anything between 50 and 100%. Alternatively, a manual millisecond threshold can be set between 5 and 100 ms. Dell recommends using the default value, allowing VMware to dynamically control the congestion threshold, rather than using a manual millisecond setting, though a different percent amount is perfectly acceptable if desired.

Figure 138. Storage I/O Control dynamic threshold setting

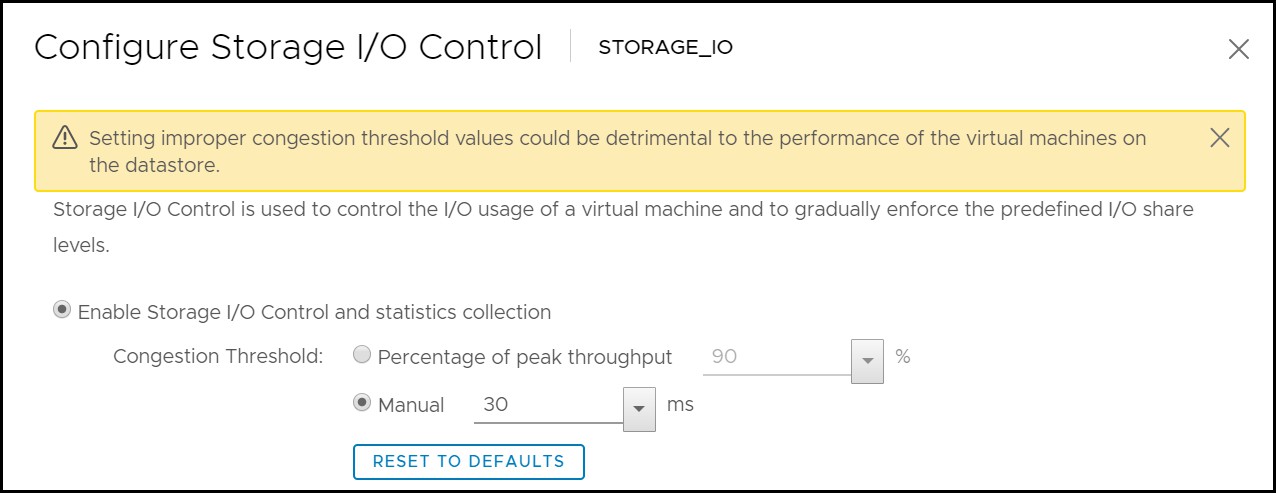
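The threshold arithmetic and the permitted ranges described above can be sketched in Python as follows. The function names are hypothetical and the code only mirrors the numbers given in this section (90% default, 50 to 100% range, 5 to 100 ms manual range). It does not call any VMware API.

```python
def dynamic_threshold_ms(peak_latency_ms: float, percent: int = 90) -> float:
    """Congestion threshold as a percentage of a device's peak latency."""
    if not 50 <= percent <= 100:
        raise ValueError("percentage must be between 50 and 100")
    if peak_latency_ms <= 0:
        raise ValueError("peak latency must be positive")
    return peak_latency_ms * percent / 100


def validate_manual_threshold_ms(threshold_ms: int) -> int:
    """Check a manual millisecond threshold against the allowed range."""
    if not 5 <= threshold_ms <= 100:
        raise ValueError("manual threshold must be between 5 and 100 ms")
    return threshold_ms


# A device that peaks at 10 ms, using the default 90%.
print(dynamic_threshold_ms(10))
```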

If an attempt is made to adjust the threshold to a manual setting, VMware will warn the user about the consequences as seen in Figure 139.

Figure 139. Storage I/O Control manual setting warning

Spreading IO demands

Storage I/O Control was designed to help alleviate many of the performance issues that can arise when many different types of virtual machines share the same VMFS volume on a large SCSI disk presented to the VMware ESXi hosts. This technique of using a single large disk allows optimal use of the storage capacity. However, this approach can result in performance issues if the IO demands of the virtual machines cannot be met by the large SCSI disk hosting the VMFS, regardless of SIOC being used.

SIOC and Dell Host I/O Limits

Because SIOC and Dell Host I/O Limits can both restrict the amount of IO, either by IOPS or by MB per second, it is natural to question their interoperability. The two technologies can be utilized together, though the limitations around SIOC reduce its benefits as compared to Host I/O Limits. There are a few reasons for this. First, SIOC can only work against a single datastore that comprises a single extent, in other words one LUN. Therefore, only the VMs on that datastore will be subject to any Storage I/O Control, and only when the response threshold is breached. In other words, it is not possible to set up any VM hierarchy of importance if the VMs do not reside on the same datastore. In contrast, Host I/O Limits work against the storage group as a whole, which typically comprises multiple devices. In addition, child storage groups can be utilized so that individual LUNs can be singled out for a portion of the total limit. This means that a VM hierarchy can be established across datastores because each device can have a different Host I/O Limit but still be in the same storage group and thus bound by the shared parent limit.

The other problem to consider with SIOC is the type and number of disks. SIOC is set at the vmdk level, and therefore if there is more than one vmdk per VM, each one requires a separate IO limit. Testing has shown this makes it extremely difficult to achieve an aggregate IOPS number unless the access patterns of the application are known so well that the number of IOs to each vmdk can be determined. For example, if the desire is to allow 1000 IOPS for a VM with two vmdks, it may seem logical to limit each vmdk to 500 IOPS. However, if the application generates two IOs on one vmdk for every one IO on the other vmdk, the most IOPS that can be achieved is 750: the busier vmdk hits its 500 IOPS limit while the other is doing only 250. If Raw Device Mappings are used in addition to vmdks, the exercise becomes impossible as they are not supported with SIOC.
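The two-vmdk example can be generalized with a short Python sketch. It assumes the application issues IOs to its vmdks in a fixed ratio, which is a simplification, and the function name and parameters are hypothetical.

```python
from typing import Sequence


def max_aggregate_iops(limits: Sequence[float], io_ratio: Sequence[float]) -> float:
    """Highest total IOPS a VM can reach given per-vmdk limits.

    limits[i] is the IOPS limit on vmdk i, and io_ratio[i] is the
    relative number of IOs the application sends to vmdk i. The vmdk
    that reaches its limit first caps the whole workload.
    """
    if len(limits) != len(io_ratio):
        raise ValueError("limits and io_ratio must have the same length")
    active = [(lim, r) for lim, r in zip(limits, io_ratio) if r > 0]
    if not active:
        raise ValueError("at least one vmdk must receive IO")
    # Largest scale factor that keeps every vmdk at or below its limit.
    scale = min(lim / r for lim, r in active)
    return scale * sum(io_ratio)


# Two vmdks limited to 500 IOPS each, with a 2:1 IO pattern.
print(max_aggregate_iops([500, 500], [2, 1]))
```

With an even 1:1 pattern the same limits deliver the intended 1000 IOPS, which shows why the per-vmdk limits must match the application's actual access pattern to reach an aggregate target.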

This is not to say, however, that SIOC cannot be useful in the environment. If the VMs that require throttling will always share the same datastore, then using SIOC would be the only way to limit IO among them in the event there was contention. In such an environment, a Host I/O Limit could be set for the device backing the datastore so that the VM(s) that are not throttled could generate IOPs up to another limit if desired.

Note: As the PowerMax limits the IO when using Host I/O Limits, unless SIOC is set at the datastore level, individual VMs on a particular device will be free to generate their potential up to the Host I/O limit, regardless of latency.

[1] For information on how to limit IOPS at the storage level see Host I/O Limits.