Provisioning

The following sections will discuss PowerMax device provisioning through FC, iSCSI, NVMeoF and NFS. As FC, iSCSI and NVMeoF devices are used for VMFS datastores, they will be presented together. NFS datastores will be covered in their own section and use PowerMax File to demonstrate the functionality. As vVols are a unique implementation, they are not included here. Please refer to the white paper Using VMware vSphere Virtual Volumes 2.0 and VASA 3.0 with Dell PowerMax for more information.

Driver configuration in VMware ESXi

The drivers provided by VMware as part of the VMware ESXi distribution should be utilized when connecting VMware ESXi to Dell PowerMax storage. However, Dell E-Lab™ does perform extensive testing to ensure the BIOS, BootBIOS and the VMware supplied drivers work together properly with Dell storage arrays.

NVMeoF

When using FC-NVMe, different drivers and/or firmware may be required on the Gen 6 (32 Gb) HBA to support the protocol. In addition, modification of HBA parameters might be necessary. For instance, when using Emulex HBAs, the parameter lpfc_enable_fc4_type should be set to 3 and the host rebooted:

esxcli system module parameters set -p lpfc_enable_fc4_type=3 -m lpfc -f

Review the HBA/network card vendor and VMware documentation for the correct drivers and configuration in a customer environment.
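As a minimal sketch of the Emulex example above, the following ESXi shell fragment wraps the parameter change and a follow-up listing of the lpfc module parameters. The run helper and the DRY_RUN variable are illustrative assumptions, not part of esxcli. By default the commands are only printed, so they can be reviewed before being executed on a host.

```shell
#!/bin/sh
# Sketch only: DRY_RUN and run() are illustrative helpers, not ESXi features.
# DRY_RUN=1 (default) prints each command; DRY_RUN=0 executes it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Set lpfc_enable_fc4_type on the Emulex lpfc module ($1 = value, 3 in the text).
set_fc4_type() {
    run esxcli system module parameters set -p "lpfc_enable_fc4_type=$1" -m lpfc -f
}

# List the lpfc module parameters so the configured value can be confirmed.
list_lpfc_parameters() {
    run esxcli system module parameters list -m lpfc
}

set_fc4_type 3
list_lpfc_parameters
```

As stated above, the host must be rebooted before the new value takes effect. Other HBA vendors use different module and parameter names.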

Adding and removing Dell PowerMax devices to VMware ESXi hosts

The addition or removal of Dell PowerMax devices to and from VMware ESXi is a two-step process for FC and iSCSI, while for NVMeoF it is a single step:

- Appropriate changes need to be made to the Dell PowerMax storage array configuration. In addition to LUN masking, this may include creation and assignment of Dell PowerMax volumes to the Fibre Channel, iSCSI, or NVMe/TCP ports utilized by the VMware ESXi. The configuration changes can be performed using Dell Solutions Enabler or Unisphere for PowerMax from an independent storage management host. Devices presented through NVMe/TCP are immediately available to ESXi, but a second step is required for the other protocols.

- The second step of the process forces the VMware kernel to rescan the Fibre Channel bus or the iSCSI software adapter to detect changes in the environment. This can be achieved by one of the following two processes:

- Utilize the graphical user interface

- Utilize the command line utilities

The processes to discover changes to the storage environment using these tools are discussed in the next two subsections.

Note: When presenting devices to multiple ESXi hosts in a cluster, VMware relies on the Network Address Authority (NAA) ID or Extended Unique Identifier (EUI) and not the LUN ID. Therefore, the LUN number does not need to be consistent across hosts in a cluster. This means that a datastore created on one host in the cluster will be seen by the other hosts in the cluster even if the underlying LUN ID is different between the hosts. Therefore, the setting of Consistent LUNs is unnecessary at the host level, though some customers do require it for business reasons.[1] Consistent LUNs is not needed for NVMeoF since the NSID is already consistent (1:1 mapping).
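To illustrate the note, the LUN number a host uses for a device can be read from its path listing and compared with the device identifier. The sketch below is an assumption-laden helper, not a Dell or VMware tool: it expects the Device: and LUN: field labels typically printed by esxcli storage core path list, which should be checked against the output on the host in question.

```shell
#!/bin/sh
# Sketch: print "identifier LUN" pairs from `esxcli storage core path list`
# output read on stdin. The "Device:" and "LUN:" labels are assumed from
# typical ESXi output; verify them against the actual host output.
device_lun_pairs() {
    awk '
        $1 == "Device:" { dev = $2 }        # remember the NAA/EUI identifier
        $1 == "LUN:"    { print dev, $2 }   # pair it with the LUN of this path
    ' | sort -u
}

# On an ESXi host (not executed here):
#   esxcli storage core path list | device_lun_pairs
```

Running this on two hosts in a cluster may show the same identifier with different LUN numbers. Per the note above, the datastore is still recognized on both hosts.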

Using the vSphere Client

Changes to the storage environment can be detected using the vSphere Client by following the process listed below:

- In the vCenter inventory, right-click the VMware ESXi host (or, preferably, the vCenter cluster or datacenter object to rescan multiple ESXi hosts at once) on which you need to detect the changes.

- In the menu, select Storage -> Rescan Storage

- Selecting Rescan Storage opens a new window, shown in Figure 7, that provides two options: Scan for New Storage Devices and Scan for New VMFS Volumes. The two options allow users to customize the rescan to detect either changes to the storage area network or changes to the VMFS volumes. Scanning the storage area network is much slower than scanning for changes to VMFS volumes, so the storage area network should be scanned only if there are known changes to the environment.

- Click OK to initiate the rescan process on the VMware ESXi.

Figure 7 Rescanning options in the vSphere Client

Using VMware ESXi command line utilities

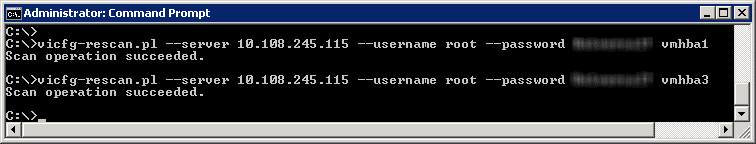

VMware offers the vCLI command vicfg-rescan to detect changes to the storage environment. The vCLI utilities take the VMkernel SCSI adapter name (vmhbax) as an argument. This command should be executed on all relevant VMkernel SCSI adapters if Dell PowerMax devices are presented to the VMware ESXi on multiple paths. Figure 8 displays an example using vicfg-rescan.

Note: The use of the remote CLI or the vSphere client is highly recommended, but in the case of network connectivity issues, ESXi still offers the option of using the CLI on the host (Tech Support Mode must be enabled). The command to scan all adapters is: esxcli storage core adapter rescan --all. Unlike vicfg-rescan there is no output from the esxcli command.

Figure 8 Using vicfg-rescan to rescan for changes to the SAN environment
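For the on-host esxcli variant mentioned in the note above, a minimal sketch follows. It rescans either the named adapters or all adapters, and separately refreshes VMFS volumes only. The adapter names vmhba1 and vmhba2 are hypothetical, and the run helper with its DRY_RUN switch is an illustrative assumption that prints commands by default instead of executing them.

```shell
#!/bin/sh
# Sketch only: DRY_RUN and run() are illustrative helpers, not ESXi features.
# DRY_RUN=1 (default) prints each command; DRY_RUN=0 executes it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Rescan the adapters given as arguments, or every adapter when none is given.
rescan_adapters() {
    if [ "$#" -eq 0 ]; then
        run esxcli storage core adapter rescan --all
        return
    fi
    for hba in "$@"; do
        run esxcli storage core adapter rescan --adapter "$hba"
    done
}

# Rescan for VMFS volumes only, without rescanning the adapters.
rescan_vmfs() {
    run esxcli storage filesystem rescan
}

rescan_adapters vmhba1 vmhba2   # hypothetical adapter names
rescan_vmfs
```

Because the esxcli rescan produces no output, listing the devices afterwards (for example with esxcli storage core device list) is one way to confirm that new devices were discovered.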

Creating VMFS volumes on VMware ESXi

VMware Virtual Machine File System (VMFS) volumes created utilizing the vSphere Client are automatically aligned on 64 KB boundaries. Therefore, Dell strongly recommends utilizing the vSphere Client to create and format VMFS volumes.

Note: A detailed description of track and sector alignment in x86 environments is presented in the section Partition alignment.

Creating a VMFS datastore using the vSphere Client

The vSphere Client offers a single process to create an aligned VMFS. The following section will walk through creating the VMFS datastore in the vSphere Client in a vSphere 7.0 environment.

Note: A datastore in a VMware environment can be an NFS file system, VMFS, or vVol. Therefore, the generic term datastore is utilized in the rest of the document. Furthermore, a group of VMware ESXi hosts sharing a set of datastores is referred to as a cluster. This is distinct from a datastore cluster, the details of which can be found in Datastore clusters.

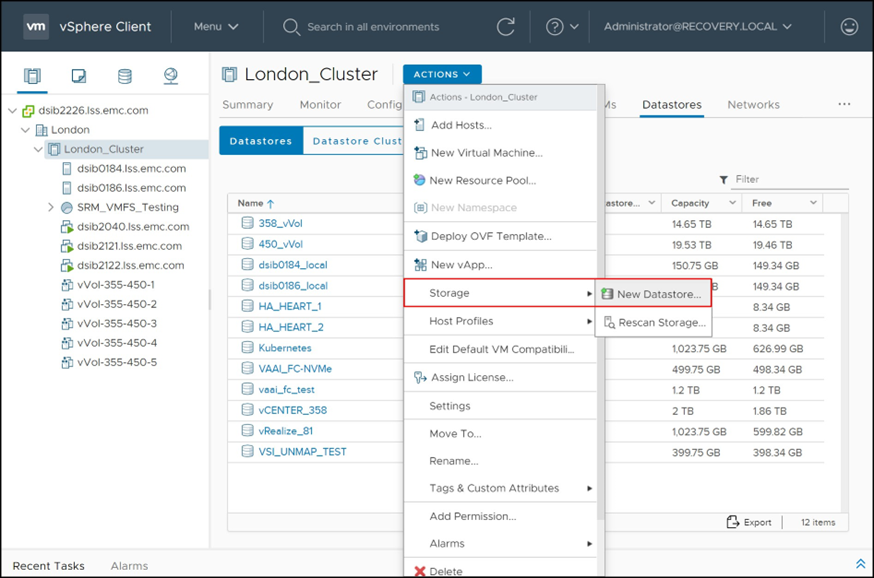

The user starts by selecting a cluster (or host) on the left-hand side of the vSphere Client. If shared storage is presented across a cluster of hosts, any host can be selected. A series of tabs is available on the right-hand side of the Client. Select the Datastores tab as in Figure 9. All available datastores on the VMware cluster are displayed here. In addition to the current state information, the pane also provides the options to manage the datastore information and create a new datastore. The wizard to create a new datastore can be launched by selecting the ACTIONS -> Storage -> New Datastore menu option, boxed in red in Figure 9.

Figure 9 Displaying and managing datastores in the vSphere Client

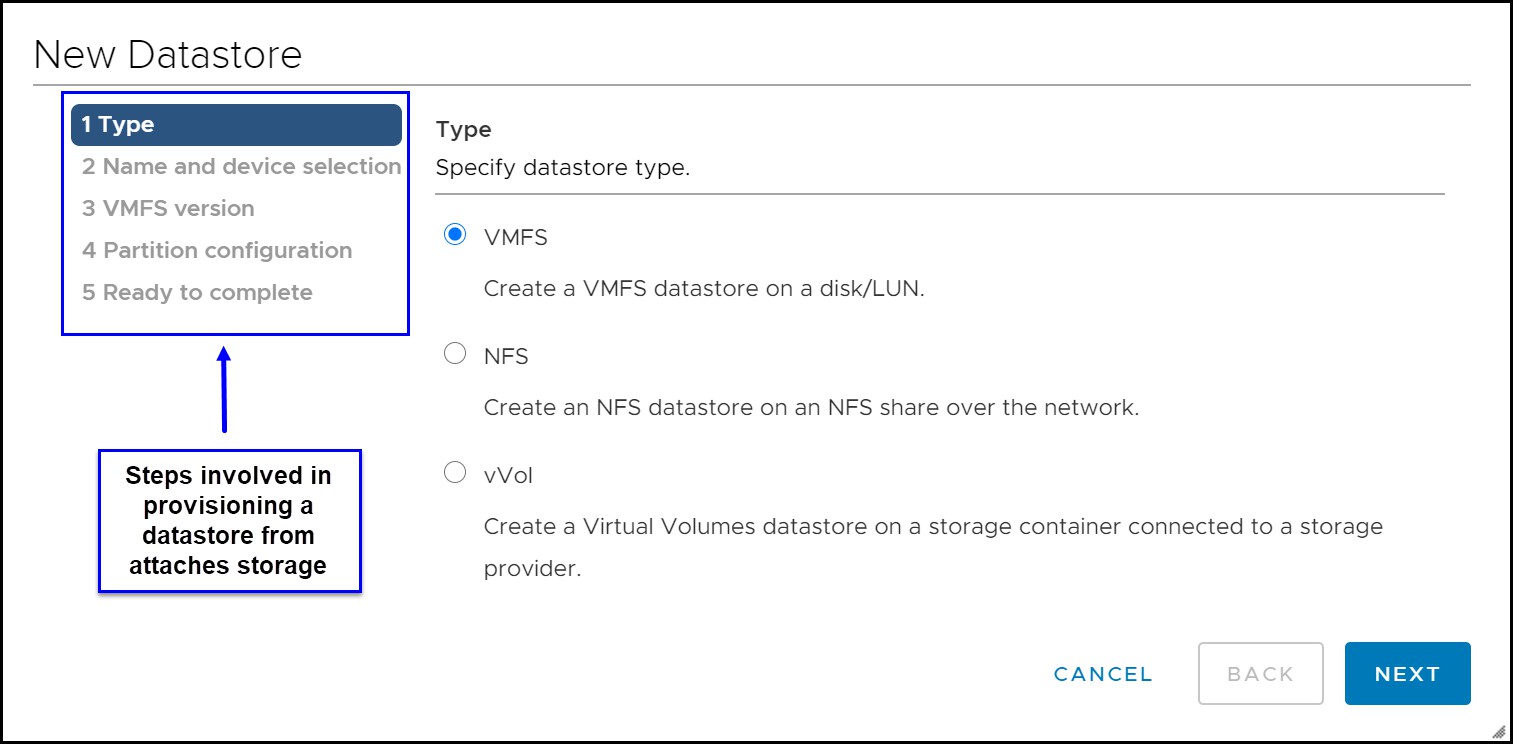

The New Datastore wizard on startup presents a summary of the required steps to provision a new datastore in vSphere, as seen in the highlighted box in Figure 10. Select the Type VMFS.

Figure 10 Provisioning a new VMFS datastore in the vSphere Client — Type

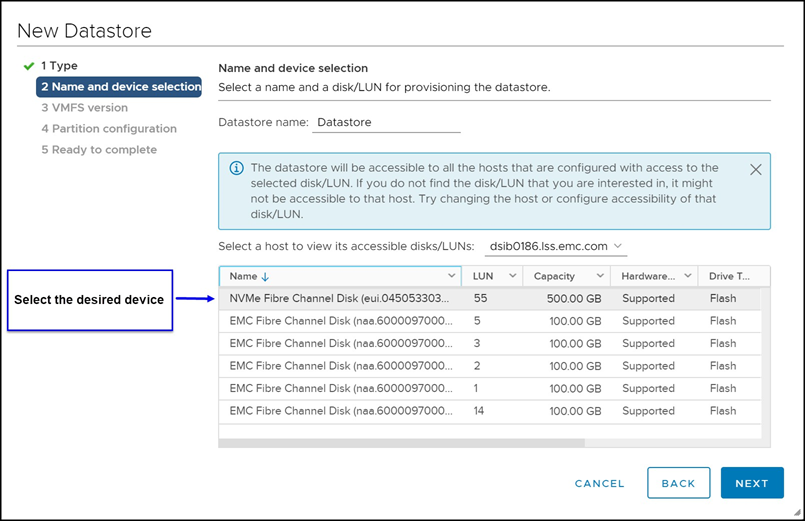

Selecting the NEXT button in the wizard presents all viable FC, NVMeoF, or iSCSI attached devices. The next step in the process involves selecting the appropriate device in the list provided by the wizard, supplying a name for the datastore, and selecting the NEXT button as in Figure 11.

Figure 11 Provisioning a new VMFS datastore in the vSphere Client — Disk/LUN

It is important to note that devices that have existing VMFS volumes are not presented on this screen.[2] This is independent of whether or not that device contains free space. However, devices with existing non-VMFS formatted partitions but with free space are visible in the wizard.

Note: The vSphere Client allows only one datastore on a device. Dell PowerMax storage arrays support non-disruptive expansion of storage LUNs. The excess capacity available after expansion can be utilized to expand the existing datastore on the LUN. The section Growing VMFS in VMware vSphere focuses on this feature of Dell PowerMax storage arrays. Dell does not recommend using multiple LUNs (extents) in a single datastore as it can cause a number of performance and management issues. It is also unnecessary as VMware supports very large datastores with a single device.

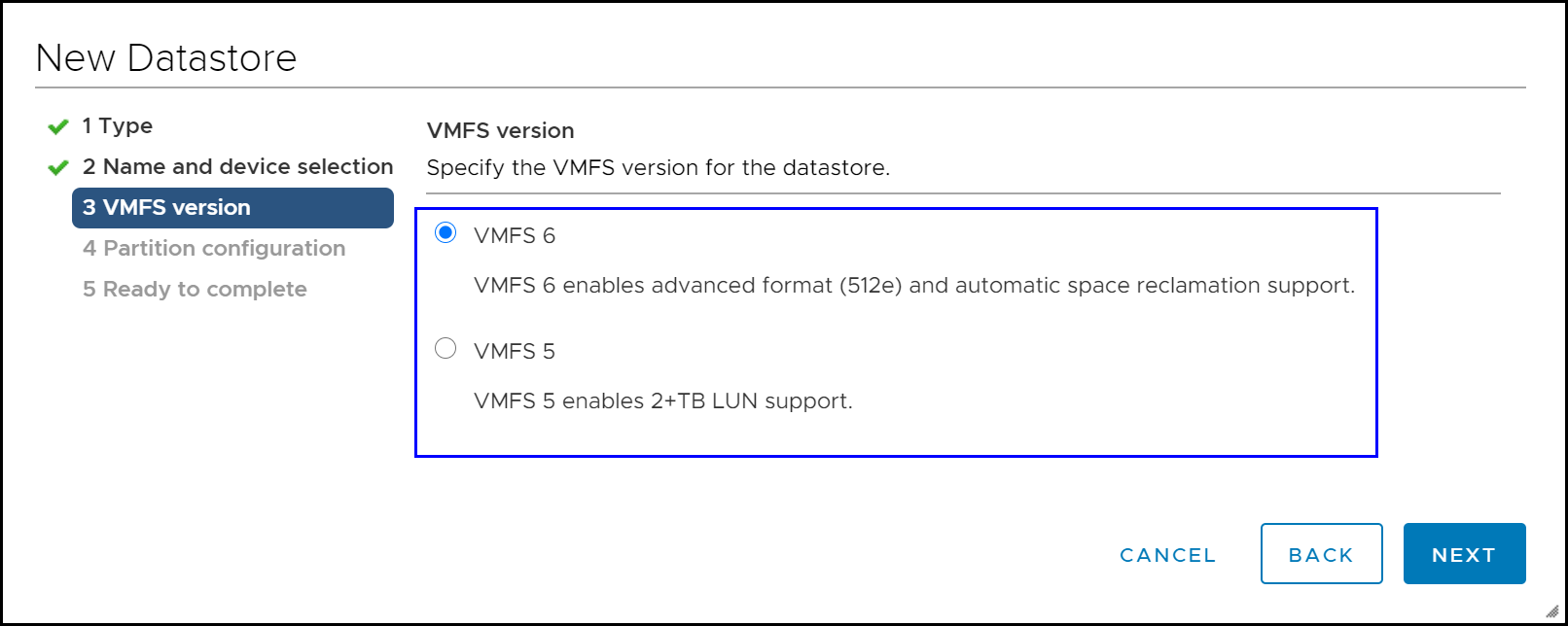

The next screen will prompt the user for the type of VMFS format, either the default VMFS 6 format or the older format VMFS 5 if older vSphere environments will access the datastore. This screen is shown in Figure 12.

Figure 12 Provisioning a new VMFS datastore in the vSphere Client — VMFS file system

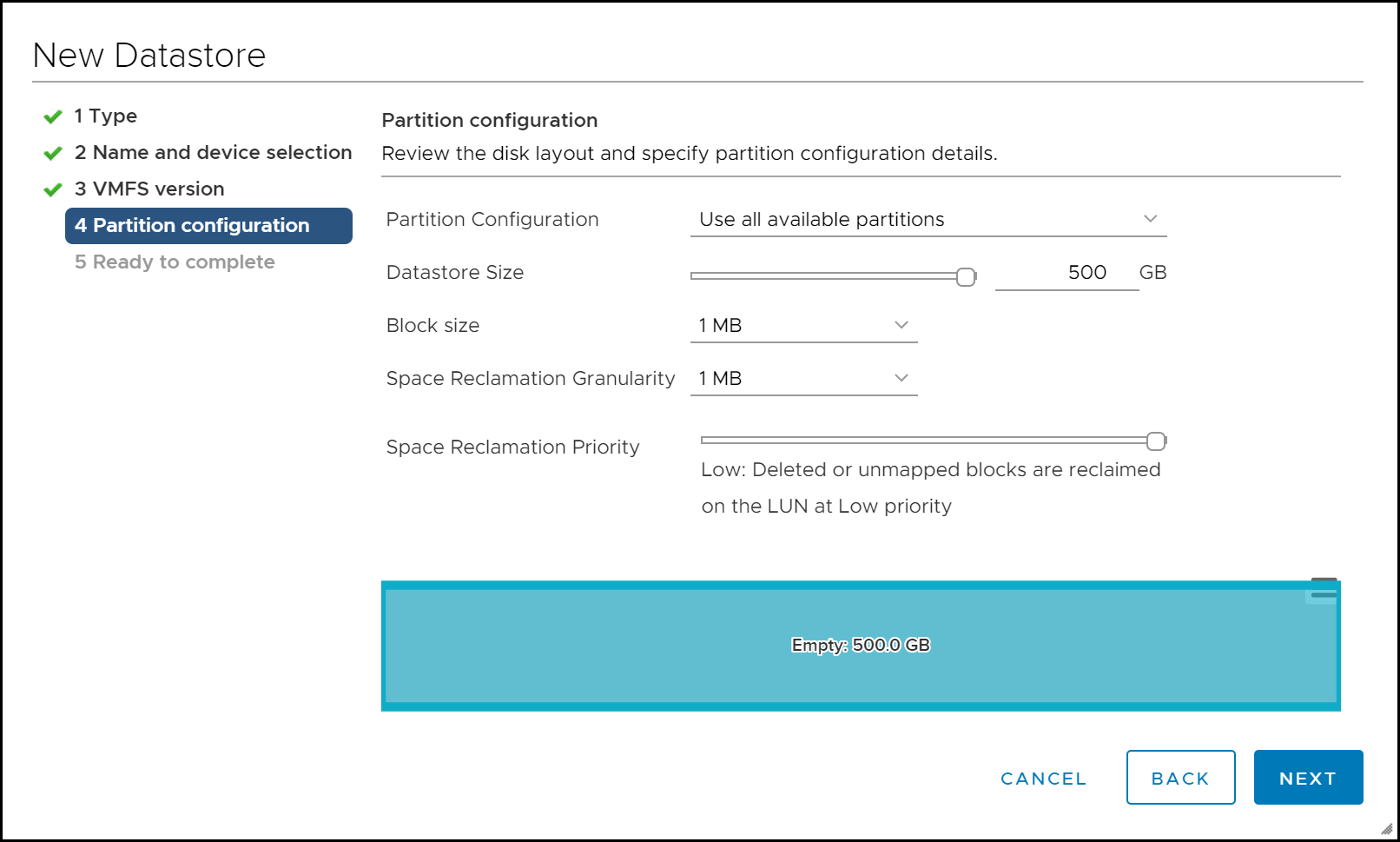

The user is then presented with the partition configuration. Here, if VMFS 6 has been chosen, automated UNMAP can be configured. In Figure 13 it is on by default and set to low priority. Although vSphere 6 and higher offer the ability to use a fixed size for UNMAP rather than priority, the vSphere Client does not permit setting it at creation time. In any case, Dell recommends using the defaults.

Figure 13 Provisioning a new VMFS datastore in the vSphere Client — Partition
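After the datastore exists, the automated UNMAP settings chosen in the wizard can be read back from the host. The following is a minimal sketch, assuming the esxcli storage vmfs reclaim config namespace available on ESXi releases that support VMFS 6. The datastore name PowerMax_DS01 is hypothetical, and the run helper with its DRY_RUN switch is an illustrative assumption that prints the command by default.

```shell
#!/bin/sh
# Sketch only: DRY_RUN and run() are illustrative helpers, not ESXi features.
# DRY_RUN=1 (default) prints each command; DRY_RUN=0 executes it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Show the space reclamation (UNMAP) settings of a VMFS 6 datastore.
# $1 = datastore name (volume label)
show_unmap_config() {
    run esxcli storage vmfs reclaim config get --volume-label "$1"
}

show_unmap_config PowerMax_DS01   # hypothetical datastore name
```

This only reads the configuration, which is consistent with the recommendation above to keep the defaults.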

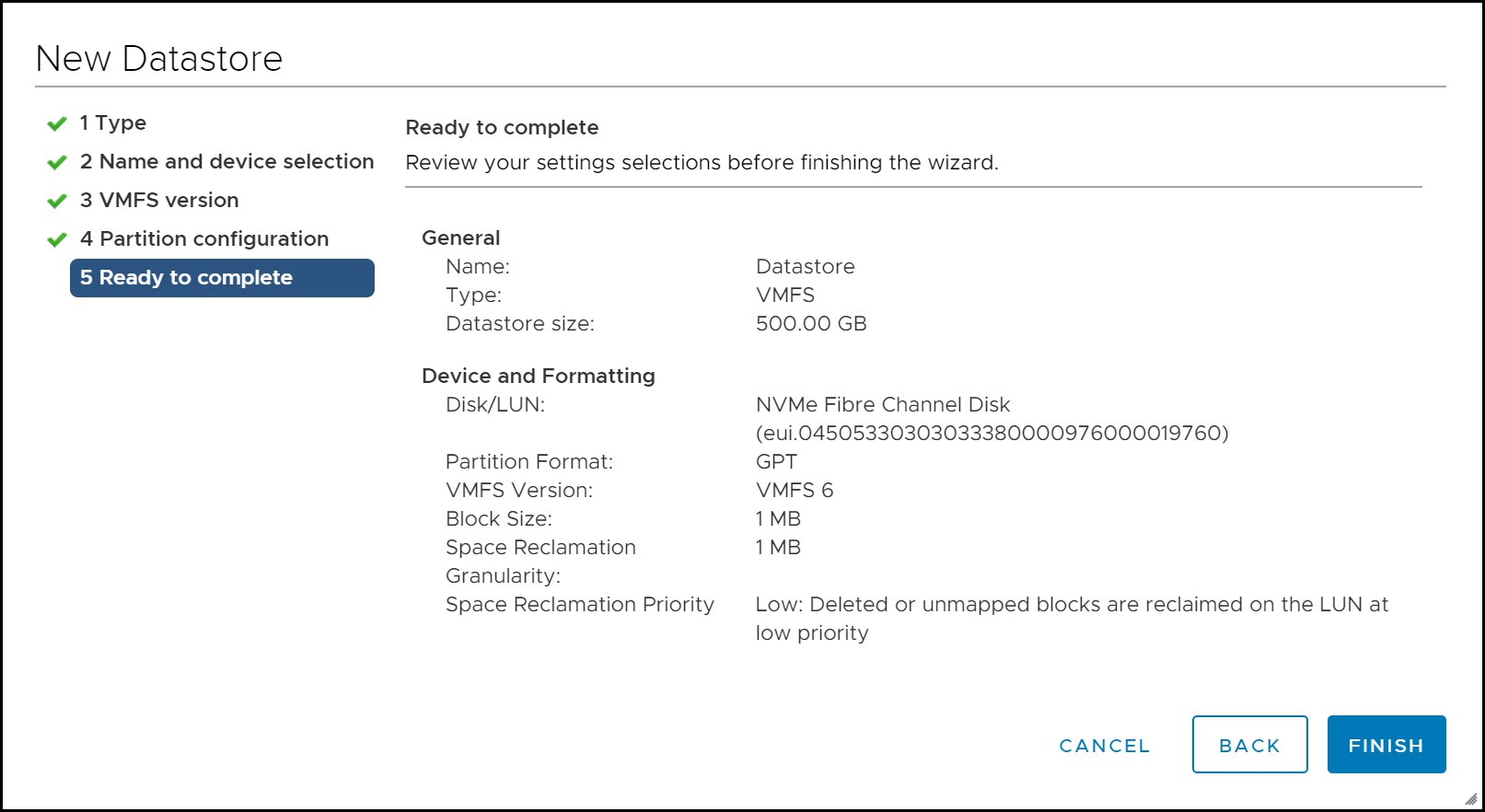

The final screen provides a summary. This is seen in Figure 14.

Figure 14 Provisioning a new VMFS datastore in the vSphere Client — Summary

Creating a VMFS datastore using command line utilities

VMware ESXi provides a command line utility, vmkfstools, to create VMFS.[3] The VMFS volume can be created on FC, NVMeoF, or iSCSI attached Dell PowerMax storage devices once the device has been partitioned with partedUtil for ESXi. Due to the complexity involved in utilizing command line utilities, VMware and Dell recommend use of the vSphere Client to create a VMware datastore on Dell PowerMax devices.
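For reference, the command line path can be sketched as follows, assuming the partedUtil and vmkfstools syntax that VMware documents for GPT-partitioned VMFS devices. The device path, last usable sector, and datastore name are hypothetical values. The start sector of 2048 (a 1 MB offset with 512-byte sectors, which is also a multiple of 64 KB) and the VMFS partition type GUID are taken from VMware's documentation. The run helper and DRY_RUN switch are illustrative assumptions. Because these commands overwrite the partition table, the sketch only prints them unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Sketch only: DRY_RUN and run() are illustrative helpers, not ESXi features.
# DRY_RUN=1 (default) prints each command, quoting arguments that contain
# spaces; DRY_RUN=0 executes it. These commands are destructive.
run() {
    if [ "${DRY_RUN:-1}" != "1" ]; then
        "$@"
        return
    fi
    line=""
    for arg in "$@"; do
        case "$arg" in
            *" "*) arg="\"$arg\"" ;;
        esac
        line="${line:+$line }$arg"
    done
    echo "$line"
}

VMFS_GUID="AA31E02A400F11DB9590000C2911D1B8"   # GPT partition type for VMFS
START_SECTOR=2048                               # 1 MB offset, 512-byte sectors

# Create one VMFS partition spanning the device, then format it as VMFS 6.
# $1 = device path, $2 = last usable sector, $3 = datastore name
# The last usable sector is the second number printed by:
#   partedUtil getUsableSectors <device path>
create_vmfs6() {
    run partedUtil setptbl "$1" gpt "1 $START_SECTOR $2 $VMFS_GUID 0"
    run vmkfstools -C vmfs6 -S "$3" "$1:1"
}

# Hypothetical device, sector count, and name:
create_vmfs6 /vmfs/devices/disks/naa.EXAMPLE 209715166 PowerMax_DS01
```

Checking the existing partition table first with partedUtil getptbl on the same device path helps avoid overwriting a device that is already in use.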

Creating an NFS datastore

Unlike VMFS, which is created on a device presented to the ESXi host, a network file system (NFS) must be created on the PowerMax and then mounted directly on the ESXi host(s). NFS creation is discussed briefly first, followed by NFS datastore creation with the vSphere Client.

Note: vSphere 6 and higher support both NFS 3 and 4.1. eNAS and PowerMax File also support both versions, but NFS 4.1 is not enabled by default on eNAS and must be configured before attempting to create an NFS 4.1 datastore.

Creating a Network File System on PowerMax File

When File is enabled on a PowerMax, access the System -> File Configuration -> FILE SYSTEMS screen. Select Create to start the wizard. Select the radio button for VMware File System in Figure 15.

Figure 15 Create File System wizard for PowerMax File - step 1

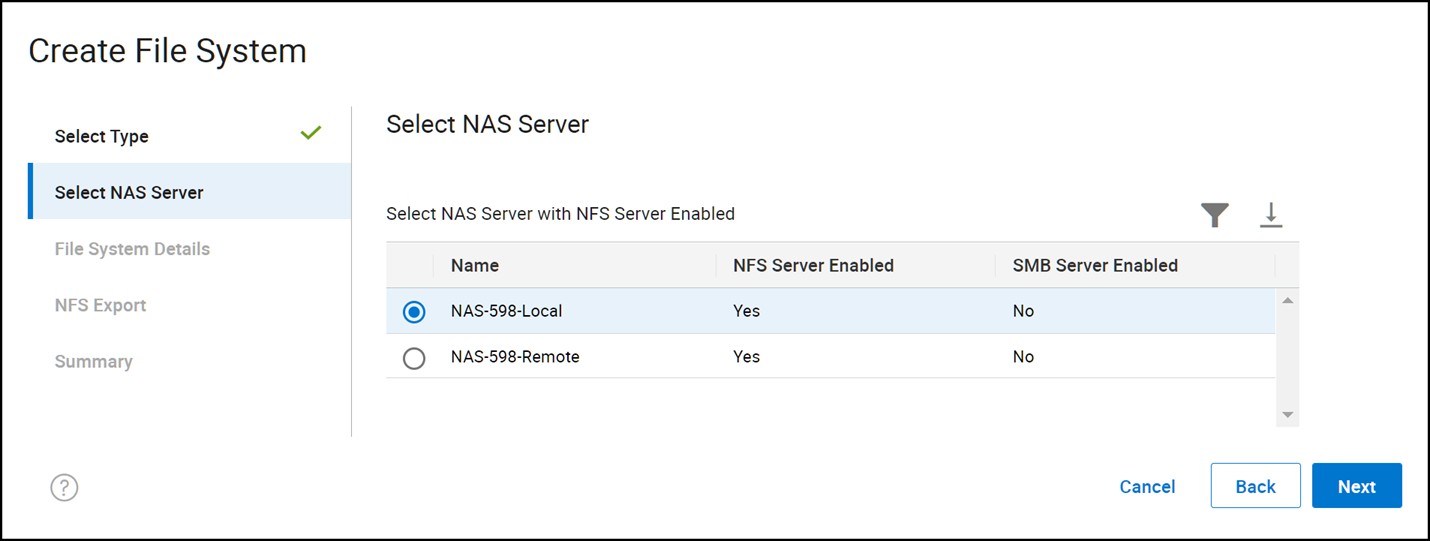

In the second step, select the appropriate NAS Server for the NFS. In this example in Figure 16, where replication is in use, the local NAS Server is selected. It supports both NFS 3 and 4.1 (assigned during NAS creation).

Figure 16 Create File System wizard for PowerMax File - step 2

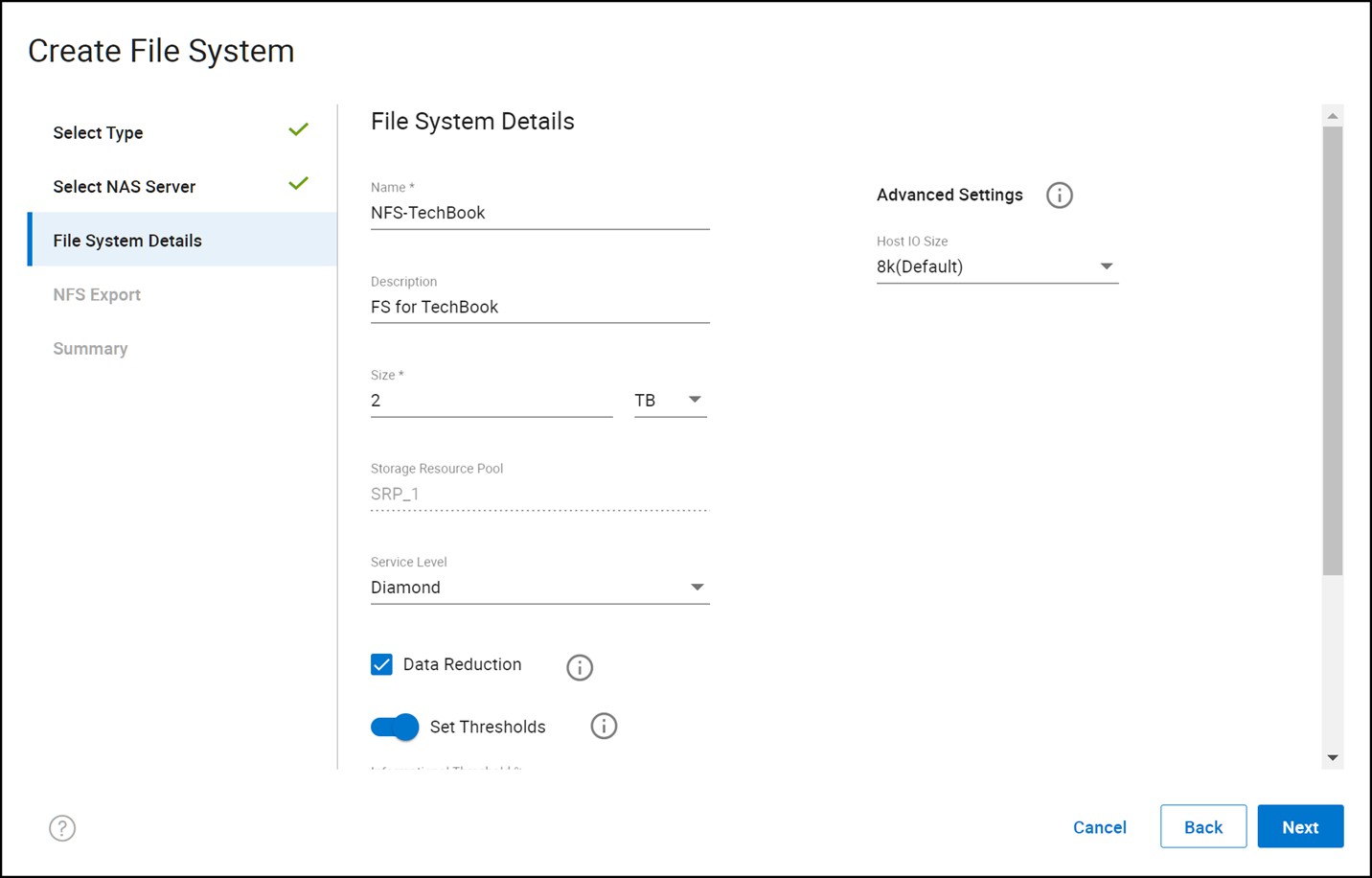

Next, supply the file system details in Figure 17. Dell does not recommend changing the Host IO Size from 8k unless the exact IO profile of the application accessing the NFS is known. If desired, change the Service Level and set Thresholds to allow alerting. Data Reduction is enabled by default but can be removed.

Figure 17 Create File System wizard for PowerMax File - step 3

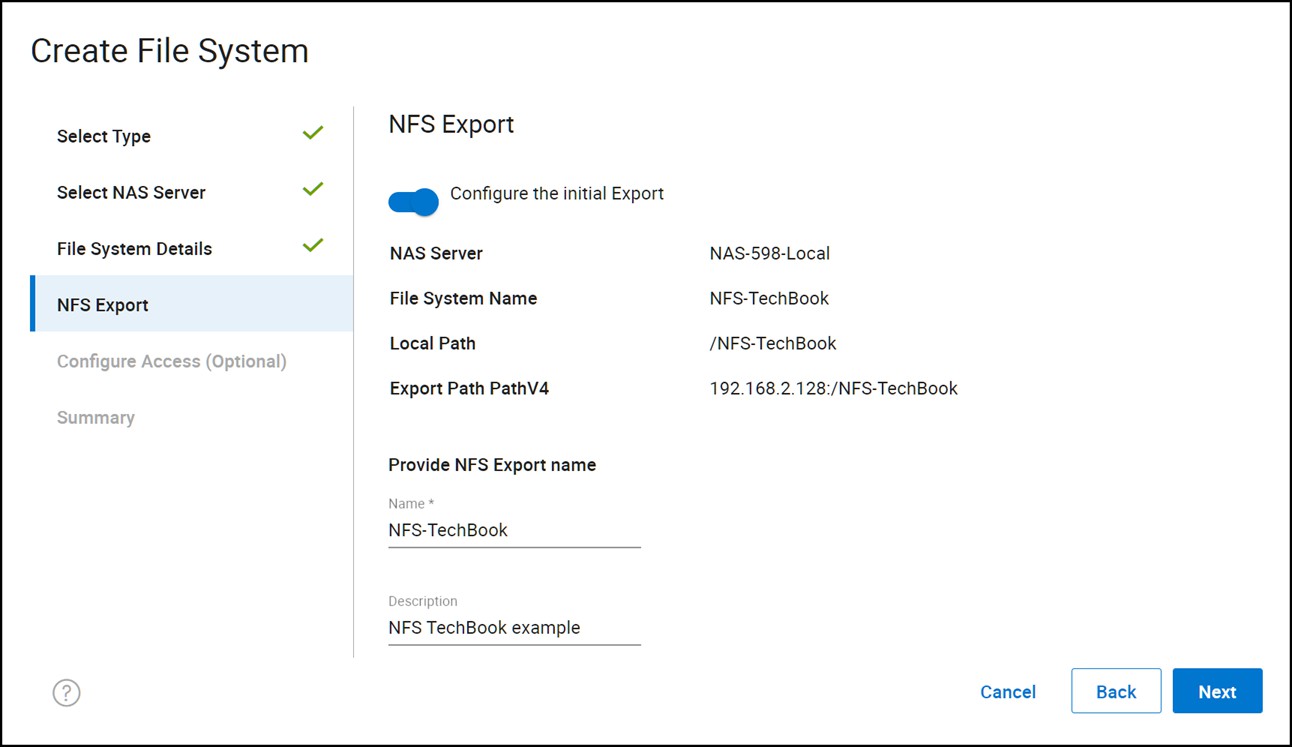

When creating the export in Figure 18, it is unnecessary to name the export the same as the file system, as has been done here. The export name is what will be provided in the vSphere Client when creating the NFS datastore along with the IP address.

Figure 18 Create File System wizard for PowerMax File - step 4

Lastly, configure access against the export. The PowerMax offers Kerberos for strong security when using NFS 4.1. Even if no added security is desired, it is still necessary to change the Default Access to Read/Write, allow Root. Failure to make this change will prevent ESXi from being able to fully access the file system since it mounts the NFS as the root user. The changes are shown in Figure 19.

Figure 19 Create File System wizard for PowerMax File - step 5

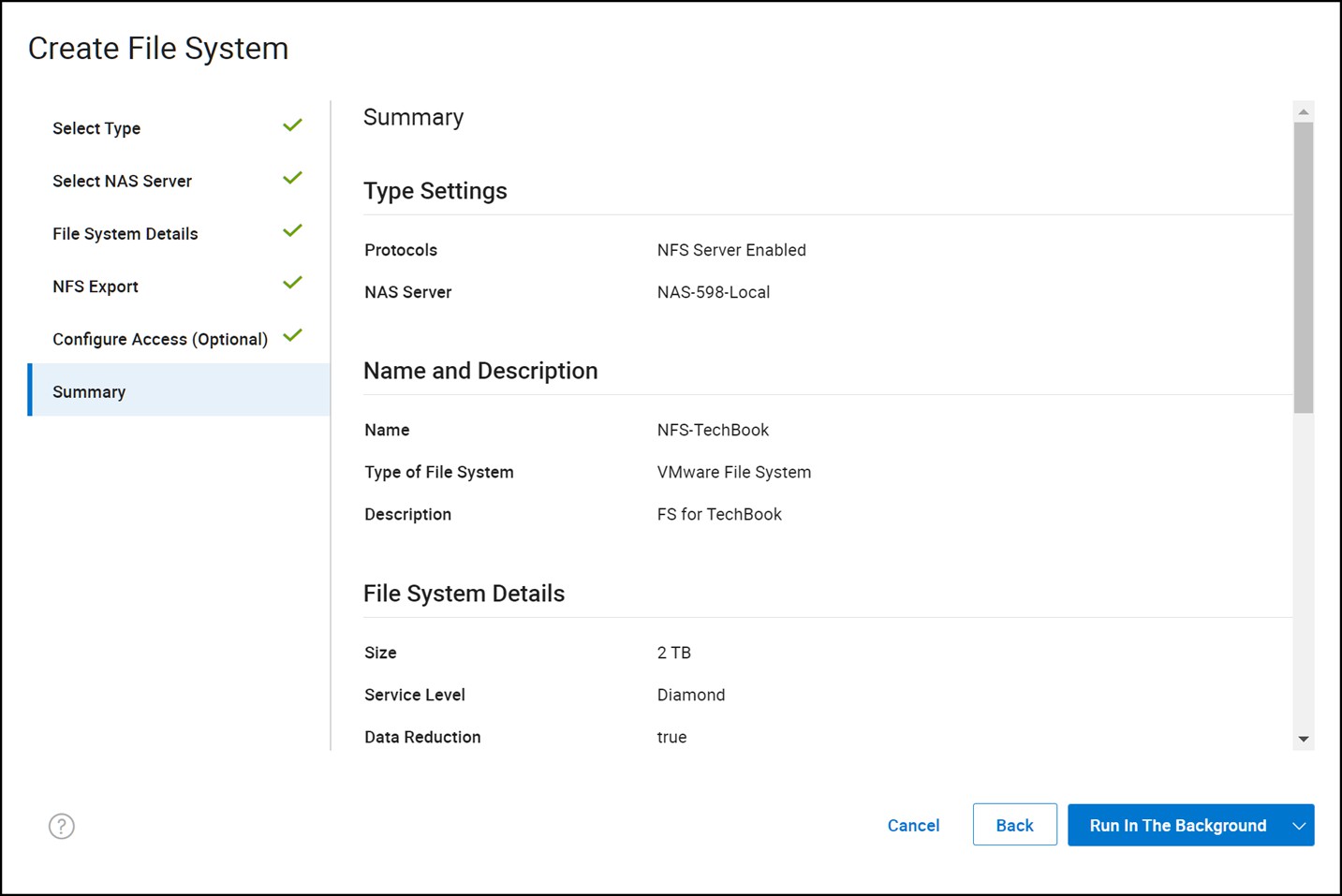

Review the summary page and create the NFS in Figure 20.

Figure 20 Create File System wizard for PowerMax File - step 6

Creating an NFS datastore using the vSphere Client

Begin by accessing the same wizard shown in Figure 9 to create a datastore.

The New Datastore wizard on startup presents a summary of the required steps to provision a new datastore in vSphere, as seen in the highlighted box in Figure 21. Select the Type NFS.

Figure 21 Provisioning a new NFS datastore in the vSphere Client — Type

Selecting the NEXT button in the wizard presents the two options for NFS version, NFS 3 and NFS 4.1, shown in Figure 22. Generally, NFS 4.1 is preferable as it has Kerberos support and multipathing; however, in this example NFS 3 is selected.

Figure 22 Provisioning a new NFS datastore in the vSphere Client — NFS version

In step 3 all the NFS details are entered. While the Folder and Server values must match the entries from Figure 17, the name of the datastore itself can be any value. In this example, in Figure 23, the same name is selected for continuity.

Figure 23 Provisioning a new NFS datastore in the vSphere Client — Name and configuration

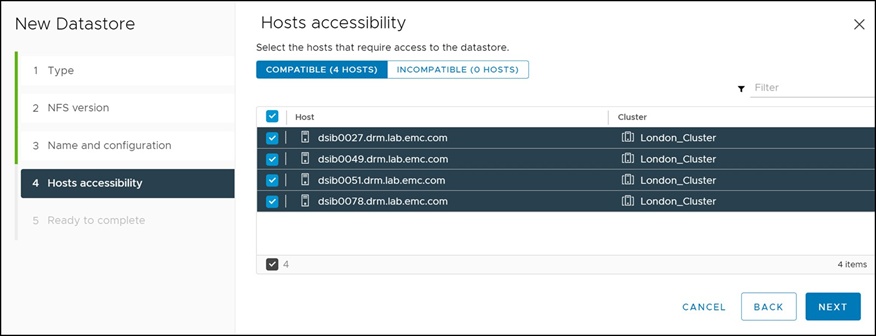

In the next screen in Figure 24, choose which hosts in the vSphere Cluster should mount the share. For any file system, the same NFS version should be used across any ESXi host accessing it. In other words, do not mount the NFS-TechBook NFS on one ESXi host using NFS 3, and then on a different ESXi host use NFS 4.1. This is not supported.

Figure 24 Provisioning a new NFS datastore in the vSphere Client — Host accessibility

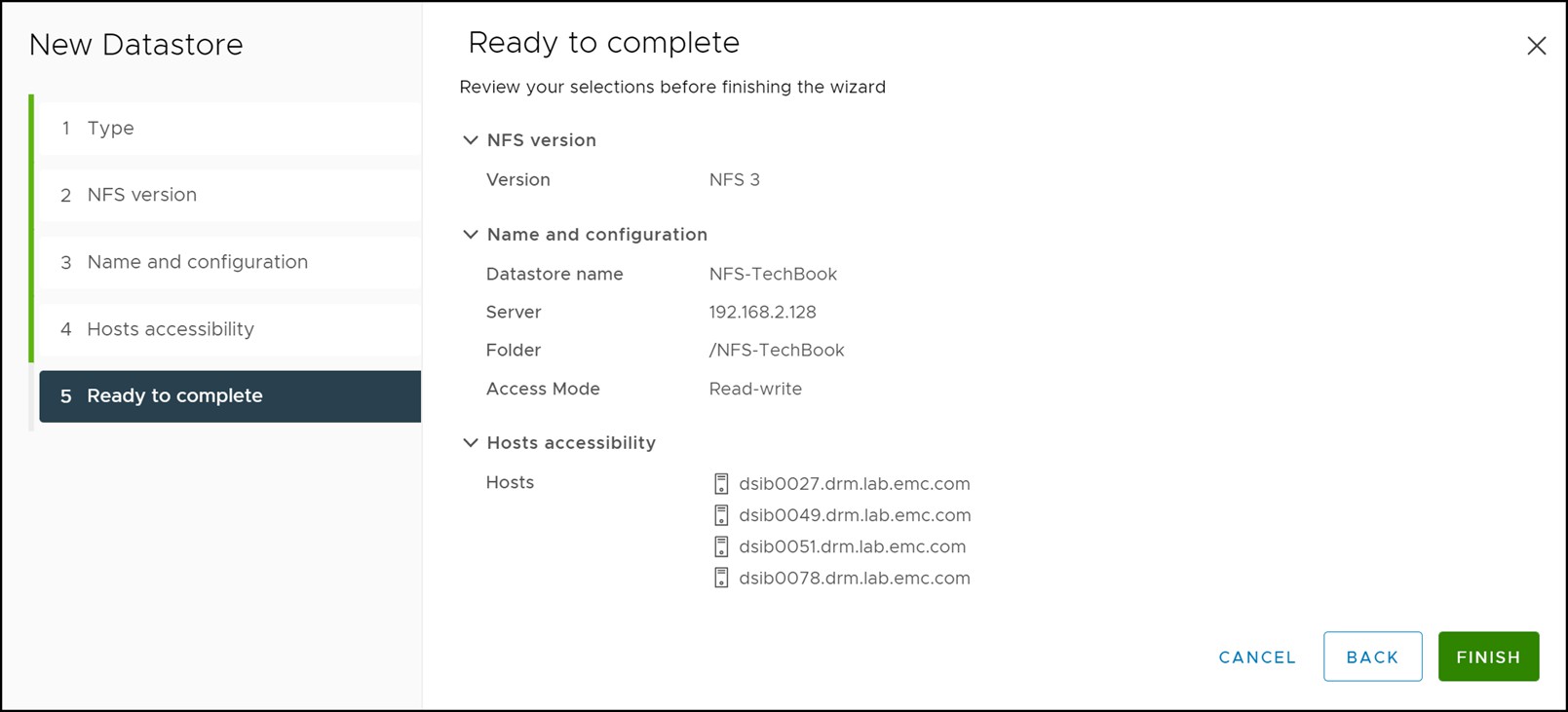

The final screen provides a summary. This is seen in Figure 25.

Figure 25 Provisioning a new NFS datastore in the vSphere Client — Summary

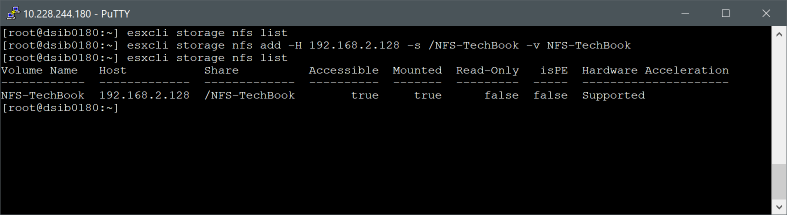

Creating an NFS datastore using command line utilities

VMware ESXi provides a command line utility, esxcli storage nfs add (esxcli storage nfs41 add for NFS 4.1), to create NFS datastores. An example is seen in Figure 26.

Figure 26 esxcli storage nfs datastore creation

Due to the complexity involved in utilizing command line utilities, VMware and Dell recommend use of the vSphere Client to create a VMware datastore on Dell PowerMax devices.
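For completeness, the esxcli approach described in this section can be sketched as a small wrapper that selects the nfs or nfs41 namespace from the requested NFS version. The server address 192.0.2.10 and the export path are hypothetical, and the run helper with its DRY_RUN switch is an illustrative assumption that prints commands by default instead of executing them.

```shell
#!/bin/sh
# Sketch only: DRY_RUN and run() are illustrative helpers, not ESXi features.
# DRY_RUN=1 (default) prints each command; DRY_RUN=0 executes it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Mount an NFS export as a datastore.
# $1 = NFS version (3 or 4.1), $2 = NAS server address,
# $3 = export path, $4 = datastore name
add_nfs_datastore() {
    case "$1" in
        3)   ns=nfs ;;
        4.1) ns=nfs41 ;;
        *)   echo "unsupported NFS version: $1" >&2; return 1 ;;
    esac
    run esxcli storage "$ns" add -H "$2" -s "$3" -v "$4"
}

# List mounted NFS 3 and NFS 4.1 datastores.
list_nfs_datastores() {
    run esxcli storage nfs list
    run esxcli storage nfs41 list
}

# Hypothetical server address and export path:
add_nfs_datastore 3 192.0.2.10 /NFS-TechBook NFS-TechBook
list_nfs_datastores
```

The same version argument should be used on every ESXi host that mounts a given export, since mixing NFS 3 and NFS 4.1 mounts of one file system is not supported.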

Upgrading VMFS volumes from VMFS 5 to VMFS 6

VMware does not support upgrading datastores from VMFS 5 to VMFS 6 because of the changes in metadata in VMFS 6 to make it 4k aligned. VMware offers a number of detailed use cases on how to move from VMFS 5 to VMFS 6 in VMware KB 2147824; however, for most environments a simple Storage vMotion of the VM from VMFS 5 to VMFS 6 will be sufficient. Note that it will be necessary to first upgrade the vCenter to a minimum of vSphere 6.5 as vSphere 6.0 does not support VMFS 6.
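When planning such a migration, it can help to identify which datastores on a host are still VMFS 5. The following sketch filters the output of esxcli storage filesystem list, assuming that each row begins with the mount point and contains the file system type as a separate VMFS-5 field. That layout is an assumption about typical output and should be verified on the host.

```shell
#!/bin/sh
# Sketch: print the mount point of every row whose fields include "VMFS-5".
# Reads `esxcli storage filesystem list` output on stdin; the column layout
# (mount point first, type as its own field) is assumed, not guaranteed.
vmfs5_mount_points() {
    awk '{
        for (i = 1; i <= NF; i++) {
            if ($i == "VMFS-5") { print $1; break }
        }
    }'
}

# On an ESXi host (not executed here):
#   esxcli storage filesystem list | vmfs5_mount_points
```

The virtual machines on the reported datastores are the candidates for Storage vMotion to a VMFS 6 datastore.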

[1] Microsoft Failover Cluster is one application that requires consistent LUN when using physical RDMs. Also, a known issue with datastore expansion in vCenter is related to Consistent LUN. It can be avoided by expanding the datastore on the ESXi host and rescanning the cluster.

[2] Devices that have incorrect VMFS signatures will appear in this wizard. Incorrect signatures are usually due to devices that contain replicated VMFS volumes or changes in the storage environment that result in changes to the SCSI personality of devices. For more information on handling these types of volumes, please refer to the section Cloning virtual machines using Storage APIs for Array Integration (VAAI).

[3] The vmkfstools vCLI can be used with ESXi. The vmkfstools vCLI supports most but not all of the options that the vmkfstools service console command supports. See VMware Knowledge Base article 1008194 for more information.