Automated unmap

Beginning with vSphere 6.5, VMware offers automated UNMAP capability at the VMFS 6 datastore level (VMFS 5 is not supported). The automation is handled by a background process (the UNMAP crawler) that keeps track of which LBAs can be unmapped and then issues those UNMAPs at various intervals. It is an asynchronous process. VMware estimates that if there is dead space in a datastore, automated UNMAP should reclaim it in under 12 hours.

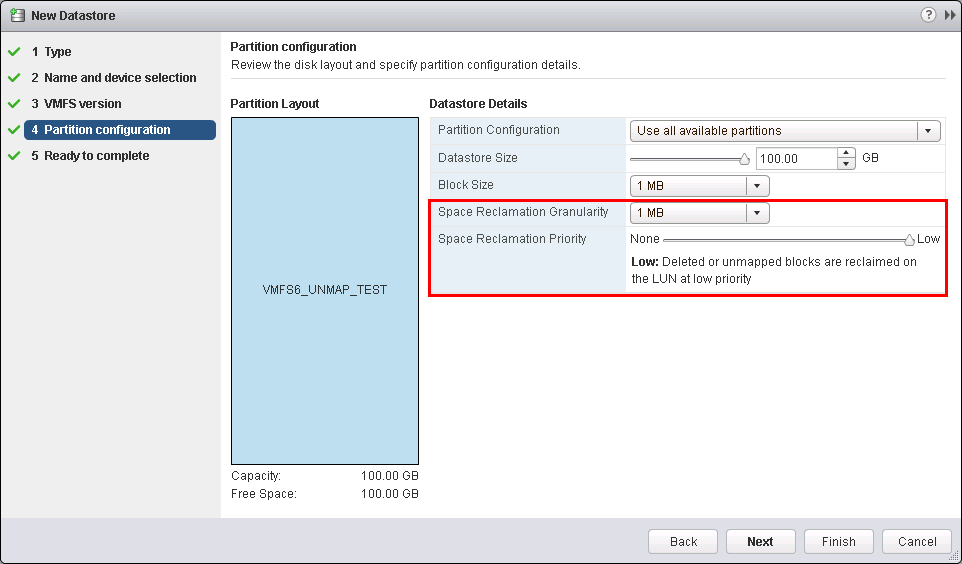

UNMAP is enabled by default when a VMFS 6 datastore is created through a vSphere Client wizard. Two parameters are available, only one of which can be modified: Space Reclamation Granularity and Space Reclamation Priority. The first parameter, Space Reclamation Granularity, designates the unit size at which VMware reclaims storage. It cannot be modified from the 1 MB value. The second parameter, Space Reclamation Priority, dictates how aggressive the UNMAP background process is when reclaiming storage. In vSphere 6.5 this parameter has only two values: None (off) and Low. It is set to Low by default, and Dell recommends leaving it at this setting. These parameters are shown in Figure 116.

Figure 116. Enabling automated UNMAP on a VMFS-6 datastore

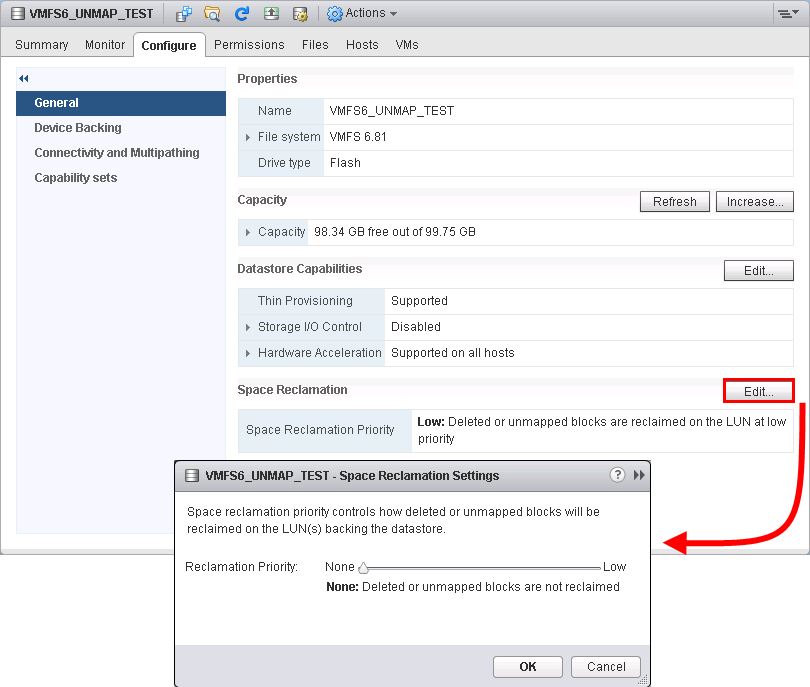

If it becomes necessary to enable or disable UNMAP on a VMFS 6 datastore after creation, the setting can be modified through the vSphere Client or the CLI. Within the datastore view in the Client, navigate to the Configure tab and the General sub-tab. There, the Space Reclamation section has an Edit button that can be used to make the desired change, as shown in Figure 117.

Figure 117. Modifying automated UNMAP on a VMFS 6 datastore

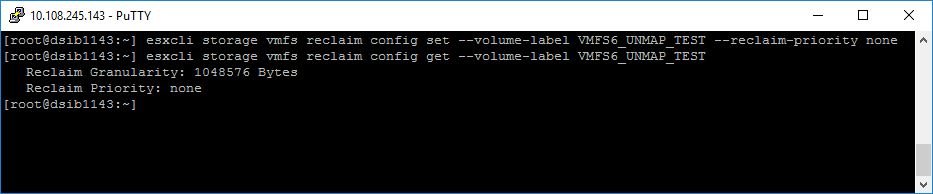

Changing the parameter is also possible through the CLI, as demonstrated in Figure 118.

Figure 118. Modifying automated UNMAP through CLI
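
Because the CLI change is only shown as a figure, the following is a minimal sketch of what it might look like. It assumes that turning automated UNMAP off corresponds to a reclaim priority of none and turning it on to a priority of low, matching the two values described above, and that the setting lives in the esxcli storage vmfs reclaim config namespace used later in this section. The function name unmap_toggle_cmd and the datastore label are illustrative only. The function prints the command rather than running it, so it can be reviewed before being issued on a host.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the esxcli command that turns
# automated UNMAP on or off for a VMFS 6 datastore.
# Assumption: "off" maps to priority none and "on" maps to priority low.
unmap_toggle_cmd() {
    label="$1"   # datastore label, for example RECLAIM_TEST_6A
    state="$2"   # on | off
    case "$state" in
        on)  priority=low ;;
        off) priority=none ;;
        *)   echo "usage: unmap_toggle_cmd <label> <on|off>" >&2; return 1 ;;
    esac
    echo "esxcli storage vmfs reclaim config set --volume-label=$label --reclaim-priority=$priority"
}

# Print the command that would disable automated UNMAP on the example datastore.
unmap_toggle_cmd RECLAIM_TEST_6A off
```

Running the printed command and then esxcli storage vmfs reclaim config get against the same datastore should confirm the new setting.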

Note that manual UNMAP using esxcli is still supported and can be run on a datastore whether or not it is configured with automated UNMAP. Some customers may choose to use manual UNMAP to control when the commands are issued and avoid any unwanted impact.

ESXi 6.7

Beginning with vSphere 6.7, VMware offers the ability not only to change the reclamation priority used for UNMAP, but also to change the method from priority to a fixed rate of bandwidth if desired. As explained above, prior to vSphere 6.7 automated UNMAP was either on, which meant low priority, or off. Now the priority can be changed to medium or high, each with its own bandwidth. Medium translates into 75 MB/s while high is 256 MB/s (even though the reported bandwidth indicates 0 MB/s). The priority, however, can only be changed through the CLI.

By default, the “Low” priority setting translates into reclaiming at a rate of 25 MB/s. To change it to medium priority, issue the following command, as seen in Figure 119.

esxcli storage vmfs reclaim config set --volume-label RECLAIM_TEST_6A --reclaim-priority medium

Figure 119. Modifying reclaim priority

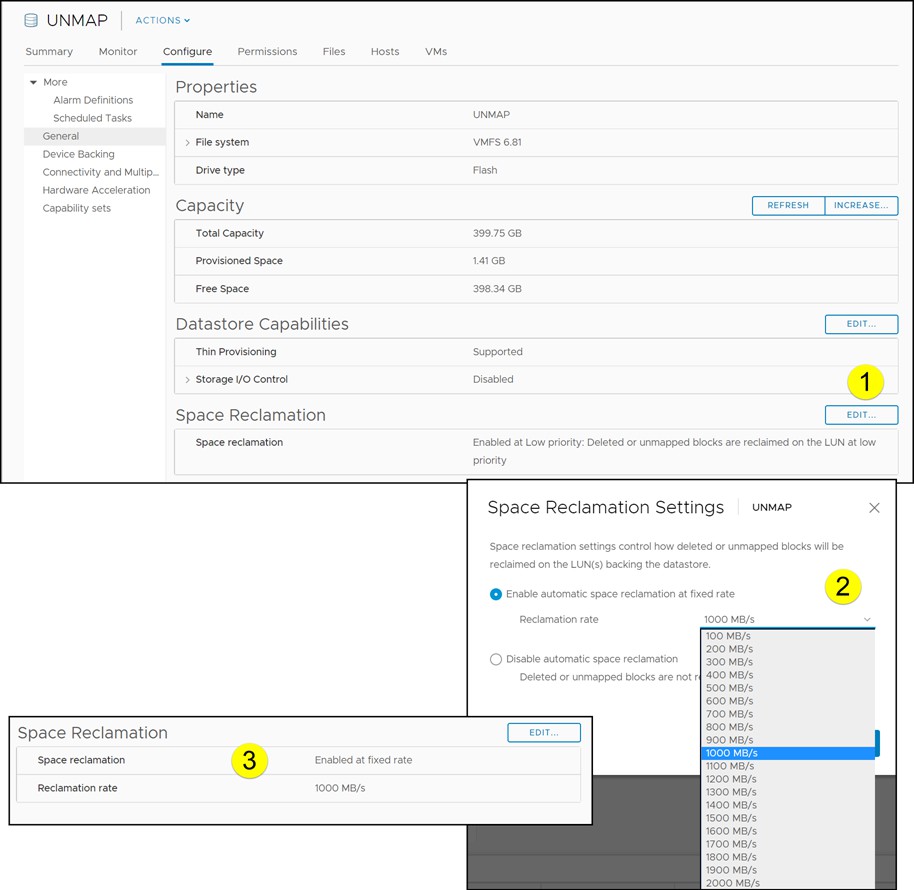

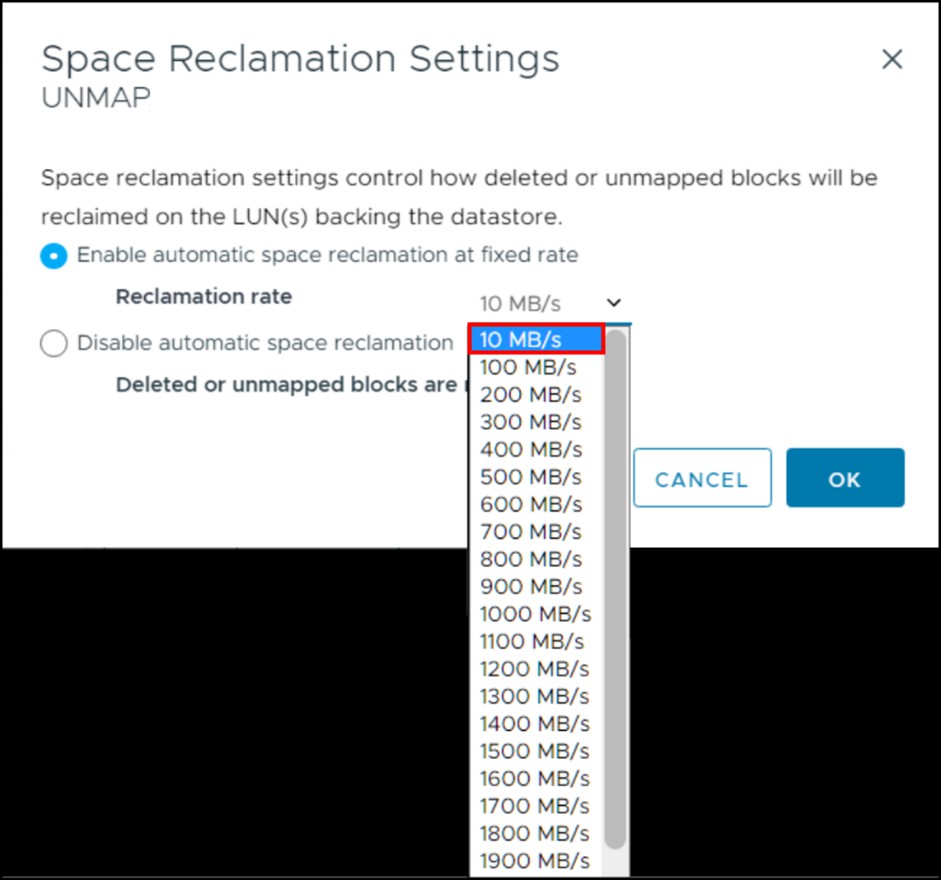
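
The priority-to-rate mapping quoted above can be captured in a small lookup, together with a rough best-case estimate of how long a given amount of dead space would take to reclaim at that rate. This is only an arithmetic sketch: the function names are hypothetical, the rates are the ones stated in this guide, and the estimate assumes the full rate is sustained, which real environments may not achieve.

```shell
#!/bin/sh
# Map a reclaim priority to the rate quoted in this guide (MB/s).
reclaim_rate_for_priority() {
    case "$1" in
        none)   echo 0 ;;
        low)    echo 25 ;;
        medium) echo 75 ;;
        high)   echo 256 ;;
        *)      echo "unknown priority: $1" >&2; return 1 ;;
    esac
}

# Best-case hours to reclaim <gb> GB of dead space at a given priority.
# Integer arithmetic, rounded up; assumes the quoted rate is sustained.
reclaim_hours_estimate() {
    gb="$1"
    rate=$(reclaim_rate_for_priority "$2") || return 1
    [ "$rate" -gt 0 ] || { echo "reclaim is disabled" >&2; return 1; }
    seconds=$(( (gb * 1024 + rate - 1) / rate ))
    echo $(( (seconds + 3599) / 3600 ))
}

reclaim_hours_estimate 1024 low      # prints 12
reclaim_hours_estimate 1024 medium   # prints 4
```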

To take advantage of the power of all-flash storage arrays, VMware also offers the ability to set a fixed rate of bandwidth. The user can set the value anywhere from 100 MB/s to a maximum of 2000 MB/s in multiples of 100. This capability, unlike priority, is offered in both the GUI and the CLI. In the following example in Figure 120, the reclaim method is changed to “fixed” at a bandwidth of 1000 MB/s. This interface also makes it clear that the priority cannot be changed in the GUI, as previously mentioned.

Figure 120. Modifying reclaim method to fixed

The CLI can also be used for the fixed method. For example, to change the reclaim rate to 200 MB/s on the datastore "RECLAIM_TEST_6A" issue the following:

esxcli storage vmfs reclaim config set --volume-label RECLAIM_TEST_6A --reclaim-method fixed -b 200

Here is the actual output in Figure 121 along with the config get command which retrieves the current value.

Figure 121. Changing reclaim priority in vSphere 6.7
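
The fixed-rate limits described above for vSphere 6.7 (100 to 2000 MB/s in multiples of 100) can be checked before a command is issued. The sketch below uses a hypothetical helper, fixed_reclaim_cmd, that validates the value and prints the resulting command without running it. It spells out --reclaim-bandwidth, the long form of the -b option, and does not account for lower values that later vSphere releases may accept.

```shell
#!/bin/sh
# Hypothetical helper: validate a fixed reclaim bandwidth against the
# vSphere 6.7 limits (100-2000 MB/s, multiples of 100) and print the command.
fixed_reclaim_cmd() {
    label="$1"   # datastore label
    mbps="$2"    # requested bandwidth in MB/s
    case "$mbps" in
        ''|0*|*[!0-9]*)
            echo "bandwidth must be a positive whole number" >&2
            return 1 ;;
    esac
    if [ "$mbps" -lt 100 ] || [ "$mbps" -gt 2000 ] || [ $(( mbps % 100 )) -ne 0 ]; then
        echo "bandwidth must be 100-2000 MB/s in multiples of 100" >&2
        return 1
    fi
    echo "esxcli storage vmfs reclaim config set --volume-label=$label --reclaim-method=fixed --reclaim-bandwidth=$mbps"
}

# Print the command for a 200 MB/s fixed rate on the example datastore.
fixed_reclaim_cmd RECLAIM_TEST_6A 200
```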

VMware has indicated that they do not advise changing the reclaim granularity.

Note: While an active UNMAP operation is underway, changing the bandwidth value will take longer than normal to complete.

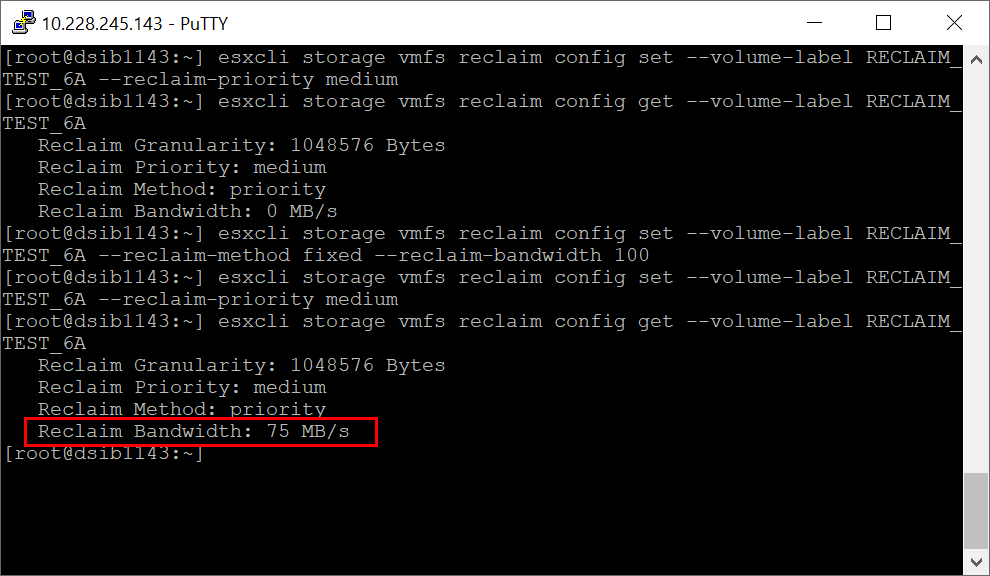

In Figure 119, the reclaim bandwidth shows as 0 MB/s even though the actual value for medium priority is 75 MB/s. VMware does not show the correct value unless the reclaim method is first set to fixed and then changed back to priority, as in Figure 122.

Figure 122. Showing the actual reclaim bandwidth of medium priority
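
The workaround described above might be sketched as the following command sequence. The exact commands in the figure are not reproduced here, so this is an assumption: it switches the method to fixed, switches it back to priority, and then reads the configuration. The helper name is hypothetical, and the commands are only printed so they can be reviewed before being run on a host.

```shell
#!/bin/sh
# Hypothetical helper: print the command sequence that toggles the reclaim
# method to fixed and back to priority, then reads the configuration.
# Nothing is executed; review the output before running it on an ESXi host.
show_priority_bandwidth_cmds() {
    label="$1"
    base="esxcli storage vmfs reclaim config"
    echo "$base set --volume-label=$label --reclaim-method=fixed"
    echo "$base set --volume-label=$label --reclaim-method=priority"
    echo "$base get --volume-label=$label"
}

show_priority_bandwidth_cmds RECLAIM_TEST_6A
```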

ESXi 8

While the default of low priority remains the preferred setting for automated UNMAP, some customers with extremely active arrays have found the value of 25 MB/s to produce too much load on the system. To help address these environments, VMware added a lower fixed value of 10 MB/s in vSphere 8. The new value is available in the drop-down options shown in Figure 123.

Figure 123. Fixed value of 10 MB/s in vSphere 8

NVMeoF

NVMeoF on the PowerMax supports both datastore and in-guest UNMAP.

Recommendations

Dell conducted numerous tests on UNMAP capabilities but found little difference in how quickly data is reclaimed on the array based on size or priority. This is consistent with manual UNMAP block options. However, at higher bandwidths and priority, if the array is already operating at close to peak, performance was impacted. Based on the testing, therefore, the recommendation is to continue to use the priority method at the low setting. By default, VMware keeps the UNMAP rate set to low priority to minimize the impact on VM IO across the array, without regard to the type of storage backing the datastores. If, for some reason, space needs to be reclaimed more quickly, a manual UNMAP can be issued.

Manual UNMAP

It is always possible to run manual UNMAP against VMware datastores whether or not automation is in use. VMware utilizes the esxcli command framework to unmap storage. The command is “unmap” and is under the esxcli storage vmfs namespace:

esxcli storage vmfs unmap <--volume-label=<str>|--volume-uuid=<str>> [--reclaim-unit=<long>]

Command options:

-n|--reclaim-unit=<long>: Number of VMFS blocks that should be unmapped per iteration.

-l|--volume-label=<str>: The label of the VMFS volume to unmap the free blocks.

-u|--volume-uuid=<str>: The uuid of the VMFS volume to unmap the free blocks.

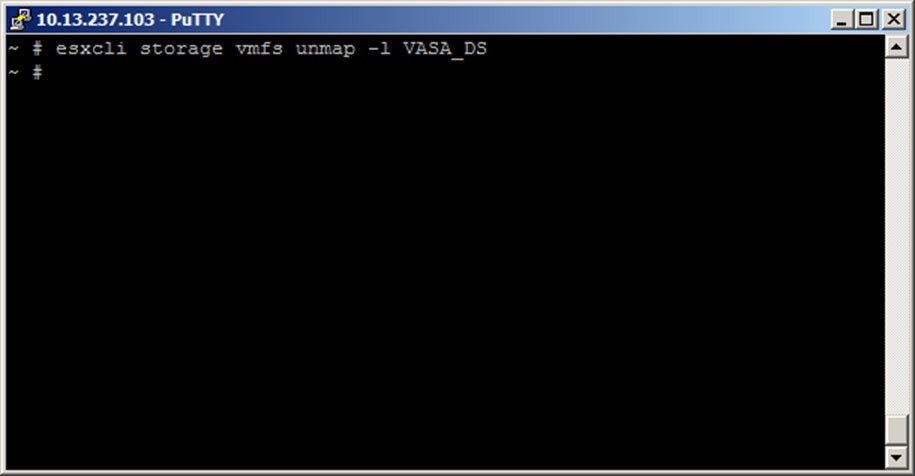

The command produces no output when run, as shown in Figure 124.

Figure 124. SSH session to ESXi server to reclaim dead space with esxcli storage vmfs unmap command

When the user runs an unmap command, dead space is reclaimed in multiple iterations. A user can provide the number of blocks (the default is 200) to be reclaimed during each iteration. VMware issues UNMAP to all free blocks, even if those blocks have never been written to.
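
As a rough illustration of the iteration behavior, the sketch below estimates how many iterations a manual UNMAP would need and prints the matching command. It assumes a 1 MB VMFS block size, so that free space in MB equals the number of free blocks. The function names and the free-space figure are hypothetical.

```shell
#!/bin/sh
# Estimate the number of iterations a manual UNMAP needs.
# Assumption: 1 MB VMFS blocks, so free space in MB equals free blocks.
unmap_iterations() {
    free_mb="$1"
    unit="${2:-200}"     # blocks per iteration; 200 is the default
    echo $(( (free_mb + unit - 1) / unit ))   # round up
}

# Print (do not run) the matching manual UNMAP command.
manual_unmap_cmd() {
    echo "esxcli storage vmfs unmap --volume-label=$1 --reclaim-unit=${2:-200}"
}

unmap_iterations 512000            # 500 GB free at the default unit: prints 2560
manual_unmap_cmd RECLAIM_TEST_6A   # prints the command with --reclaim-unit=200
```

A larger reclaim unit reduces the iteration count proportionally, for example 640 iterations at 800 blocks for the same free space, although that does not necessarily shorten the overall run time.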

This unmap command uses the UNMAP primitive to inform the array that blocks can be reclaimed. This enables a correlation between what the array reports as free space on a thin-provisioned datastore and what vSphere reports as free space.

Dell recommends using VMware’s default number of blocks per iteration (200), though it fully supports any value VMware does. Dell has not found a significant difference in the time it takes to unmap when the number of blocks per iteration is increased. If using manual UNMAP, Dell suggests reclaiming space during off-peak periods to avoid any impact on production environments.