MLOps and DevOps

The practice of development operations (DevOps) emerged from the challenges of deploying new or updated software applications when the software development and IT operations teams functioned almost independently. The situation is often described as one in which software developers build applications and "throw them over the wall" to the IT staff, who are expected to deploy the software and support users. To understand how this scenario connects to data science and machine learning, a data model must first be defined.

In 2018, the Wall Street Journal published an opinion editorial titled "Models Will Run the World" (Cohen, Steven A. and Granade, Matthew W. (August 2018) Models Will Run the World, https://www.wsj.com/articles/models-will-run-the-world-1534716720). The authors concluded that the impact of software development on business, commonly called digital transformation, was ready to move to its next phase of maturity: intelligent applications with embedded machine learning technology. Intelligent applications that incorporate the predictive power of these data analytics models are one of the most common forms of applied artificial intelligence (AI). The same concepts are at work in the transition from the previous industrial revolution to the current one. That transition is fueled by digital transformation and depends on developing intelligent cyber-physical systems that are based on AI principles.

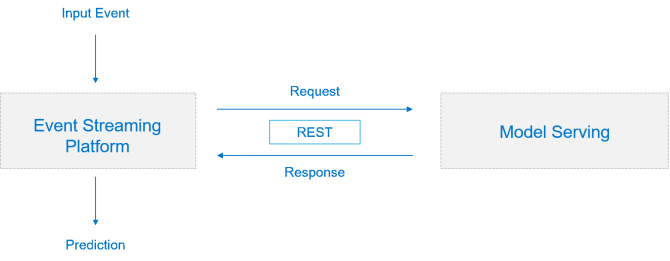

Machine Learning Operations (MLOps) is an attempt to build on the success of DevOps while recognizing that managing the deployment of production-class machine learning models has key differences. These differences depend on how tightly integrated the model is with the application or service that uses it. The following figure shows a loosely coupled application in which model serving is hosted on a separate platform from the service that uses the model. In this diagram, the service calling into the model is a process running unattended on an event streaming platform.

Figure 8: Loosely-coupled application

The languages and toolsets that are used for the streaming service and model hosting are decoupled using a lightweight REST interoperability layer. The requirement to deploy and manage two independent platforms adds to the complexity of this model. The complexity increases if this solution is deployed at an edge location where it is desirable to have the smallest maintenance footprint possible.
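The loosely coupled pattern can be illustrated with a minimal sketch that uses only the Python standard library. It is not the guide's implementation: the `/score` path, the feature names, and the fixed linear "model" are hypothetical stand-ins chosen for illustration. The point is that the streaming side only needs to know the REST contract (JSON in, JSON out), not the language or library used to host the model.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical stand-in for a trained model: a fixed linear score.
WEIGHTS = {"temperature": 0.5, "vibration": 2.0}
BIAS = -1.0


class ScoreHandler(BaseHTTPRequestHandler):
    """Model-serving side: accepts JSON features, returns a JSON score."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        features = json.loads(self.rfile.read(length))
        score = BIAS + sum(w * features[name] for name, w in WEIGHTS.items())
        body = json.dumps({"score": score}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging for the example


def start_model_server():
    """Start the model server on an ephemeral local port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), ScoreHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def score_event(host, port, event):
    """Streaming-service side: one REST call per event."""
    connection = http.client.HTTPConnection(host, port, timeout=5)
    try:
        payload = json.dumps(event).encode("utf-8")
        connection.request("POST", "/score", body=payload,
                           headers={"Content-Type": "application/json"})
        return json.loads(connection.getresponse().read())["score"]
    finally:
        connection.close()


if __name__ == "__main__":
    server = start_model_server()
    try:
        for event in [{"temperature": 2.0, "vibration": 0.25},
                      {"temperature": 4.0, "vibration": 1.5}]:
            print(event, "->", score_event("127.0.0.1", server.server_port, event))
    finally:
        server.shutdown()
        server.server_close()
```

Even in this toy form, two processes (here, two threads) must be started, monitored, and shut down, which hints at the maintenance cost of running two independent platforms at an edge site.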

The deployment of a tightly coupled application and model is at the other end of the spectrum. Java is still the language with the most support for integrating machine learning and application development. Several modeling frameworks support exporting trained models into Java classes that can be linked into an application project before compilation. Data scientists, however, typically use Python, R, or Scala for data preparation, model development, and model training.

Many Python and R machine learning libraries have features for exporting trained model objects that can be imported and used in other server environments. This approach requires carefully managing both the language and model library dependencies on the target platforms. Container virtualization and management tools make this job easier, but other challenges remain. Applications that are developed in compiled languages must access these model objects, which run in a separate process on the server. This approach adds more dependencies for the libraries that support the remote calls and incurs significant performance overhead. These examples are a few of the many issues that lead to unreliable and failed deployments of models into production environments. The challenges are especially complicated in distributed application environments, such as the Industrial Internet of Things (IIoT), where few support resources are available at the remote sites.
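The dependency problem can be made concrete with a small sketch, assuming a model is exported with Python's standard `pickle` module. The file names `model.pkl` and `manifest.json`, the helper functions, and the dictionary used as a stand-in for a trained model object are all hypothetical. A real deployment would also record the versions of the modeling libraries themselves.

```python
import json
import pickle
import sys
import tempfile
from pathlib import Path


def runtime_fingerprint():
    """Describe the parts of the runtime that the exported file depends on."""
    return {
        "python": f"{sys.version_info.major}.{sys.version_info.minor}",
        "pickle_protocol": pickle.DEFAULT_PROTOCOL,
    }


def export_model(model, directory):
    """Write the serialized model next to a manifest of its runtime."""
    directory = Path(directory)
    directory.mkdir(parents=True, exist_ok=True)
    (directory / "model.pkl").write_bytes(pickle.dumps(model))
    (directory / "manifest.json").write_text(json.dumps(runtime_fingerprint()))


def load_model(directory, current=None):
    """Refuse to load when the target runtime differs from the export runtime."""
    directory = Path(directory)
    expected = json.loads((directory / "manifest.json").read_text())
    current = current if current is not None else runtime_fingerprint()
    if expected != current:
        raise RuntimeError(f"runtime mismatch: exported with {expected}, "
                           f"running {current}")
    return pickle.loads((directory / "model.pkl").read_bytes())


if __name__ == "__main__":
    # Hypothetical stand-in for a trained model object.
    trained = {"weights": [0.5, 2.0], "bias": -1.0}
    with tempfile.TemporaryDirectory() as workdir:
        export_model(trained, workdir)
        print(load_model(workdir))
```

Failing fast on a mismatch is one possible design choice; it turns a subtle behavioral difference at a remote site into an explicit deployment error.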

After the model is trained with a framework that supports the creation of Java objects from trained models, the data scientist exports a model object (the structure and parameters of the model) to a text-formatted Java class. Before some frameworks added this feature, software developers would hand-code the model structure with embedded parameters before building the final application.
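As a rough illustration of what such an export produces, the following sketch renders the parameters of a simple linear model as the text of a Java class. The function `to_java_class`, the class name, and the generated layout are hypothetical and do not reproduce the output format of any particular framework.

```python
import math


def to_java_class(class_name, weights, bias):
    """Render linear-model parameters as the text of a Java class."""
    values = [float(w) for w in weights] + [float(bias)]
    if not all(math.isfinite(v) for v in values):
        raise ValueError("parameters must be finite to form valid Java literals")
    if not class_name.isidentifier():
        # Rough check only: Python and Java identifier rules are similar, not identical.
        raise ValueError("class_name must be a valid identifier")
    weight_literals = ", ".join(repr(float(w)) for w in weights)
    lines = [
        "public final class " + class_name + " {",
        "    private static final double[] WEIGHTS = {" + weight_literals + "};",
        "    private static final double BIAS = " + repr(float(bias)) + ";",
        "",
        "    public static double score(double[] features) {",
        "        double sum = BIAS;",
        "        for (int i = 0; i < WEIGHTS.length; i++) {",
        "            sum += WEIGHTS[i] * features[i];",
        "        }",
        "        return sum;",
        "    }",
        "}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical parameters standing in for a trained model.
    print(to_java_class("AnomalyModel", [0.5, 2.0], -1.0))
```

The generated text has no dependency on the training environment, so it can be added to the application project and compiled with the rest of the code. Generating it automatically also removes the transcription errors that hand-coding embedded parameters invites.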