
Troubleshooting


To troubleshoot and analyze the causes of packet loss in the Dell EMC RA-based RHOSP 16.1 deployment, a standalone KVM-based environment was set up to replicate the problem. In this isolated setup, we replicated the software versions and configurations supported by Red Hat OpenStack 16.1.

The debug analysis was done in collaboration with Intel and covered multiple test cases that were run incrementally on a standalone server to reproduce the packet loss.

Refer to Appendix B for the results of these test cases.

The test cases varied the use of single and dual OVS bridges, the number of TX and RX queues, NIC bonding, and VLAN transitions. These parameters were applied incrementally, both in isolation and in combination. Each parameter helped reduce packet drops, but scaling the RX queues was the major optimization that eliminated packet drops for jumbo frames.

The following section highlights the test results obtained on a Dell EMC PowerEdge R740xd in RHOSP 16.1 before and after scaling the RX queues. Based on the findings of the debug analysis, the RX queues were scaled in the OpenStack environment and 100% line rate was achieved. The RX queues were scaled on both the physical and virtual ports of the traffic sender and receiver nodes. Details of the RX queue scaling and its impact on network throughput are given below.

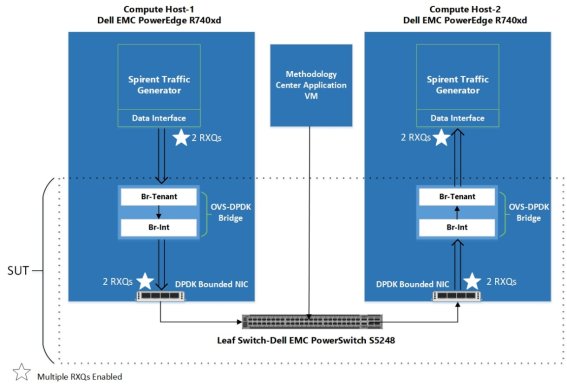

Figure 2 highlights the virtual and physical ports where multiple RX queues are enabled in an OpenStack environment.

Figure 2. Scaled RX queues on Dell Technologies OpenStack Solution

The detailed implementation of RX queue scaling is described in Appendix C.

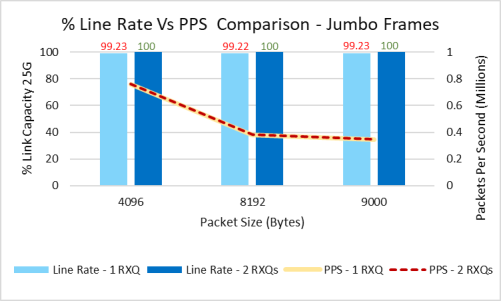

Table 1 shows the benchmarked throughput results for jumbo frames with one and two RX queues (RXQs) in RHOSP 16.1. In addition to scaled RX queues, other optimization considerations are mentioned in Learnings and Best-Known Practices.

Table 1. Line rate % and PPS performance comparison for jumbo frames

| Packet Size (B) | 1 RXQ: Line Rate (%) | 1 RXQ: Packets per Second | 2 RXQs: Line Rate (%) | 2 RXQs: Packets per Second |
|---|---|---|---|---|
| 4096 | 99.23 | 758570 | 100 | 759828 |
| 8129 | 99.22 | 380206 | 100 | 380845 |
| 9000 | 99.23 | 346724 | 100 | 346953 |
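As a rough cross-check of the packets-per-second figures in Table 1, the theoretical packet rate of a link at 100% line rate can be estimated from the frame size and the link speed. The sketch below is illustrative only and is not part of the original test procedure. It assumes the standard 20 bytes of per-frame Ethernet wire overhead (preamble, start-of-frame delimiter, and inter-frame gap) and the 25 GbE link speed of the NIC named in this section. The helper names `line_rate_pps` and `pps_gain` are hypothetical. This section does not state how the traffic generator counts frame bytes, so the calculated values are a reference point and need not match the measured values exactly.

```python
# Assumption: per-frame overhead on the wire that is not counted in the
# frame size: 7 B preamble + 1 B start-of-frame delimiter + 12 B
# inter-frame gap = 20 B.
WIRE_OVERHEAD_BYTES = 20

# 25 GbE, the speed of the NIC named in this section.
LINK_RATE_BPS = 25e9

# Measured packets per second from Table 1:
# packet size (B) -> (1 RXQ, 2 RXQs)
TABLE_1_PPS = {
    4096: (758570, 759828),
    8129: (380206, 380845),
    9000: (346724, 346953),
}


def line_rate_pps(frame_size_bytes, link_rate_bps=LINK_RATE_BPS):
    """Theoretical packets per second at 100% line rate for one frame size."""
    bits_on_wire = (frame_size_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_rate_bps / bits_on_wire


def pps_gain(pps_before, pps_after):
    """Additional packets per second delivered after a tuning change."""
    return pps_after - pps_before


if __name__ == "__main__":
    for size, (one_rxq, two_rxq) in TABLE_1_PPS.items():
        print(
            f"{size} B: theoretical {line_rate_pps(size):,.0f} pps, "
            f"measured {one_rxq} -> {two_rxq} "
            f"(+{pps_gain(one_rxq, two_rxq)} pps with 2 RXQs)"
        )
```

Running the script prints, for each packet size, the estimated line-rate packet rate next to the measured values for one and two RX queues, which makes the gain from the second RX queue easy to see.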

The following figure is a graphical representation of the results in Table 1.

Figure 3. Line rate % vs PPS comparison results for jumbo frames

Note: The recommended configurations are based on results from the 25 GbE Intel® Ethernet Network Adapter XXV710-DA2. Higher-speed NICs would require tuning additional parameters to achieve the best performance for jumbo frames.