
Installing the control-plane nodes


To install the control-plane nodes:

- Connect to the iDRAC of a control-plane node and open the virtual console.

- Ensure that the NIC specified in step 5.b.ii of Preparing and running the Ansible playbooks is enabled with PXE:

- In the iDRAC UI, click Configuration and select BIOS Settings.

- Expand Network Settings.

- Set PXE Device1 to Enabled.

- Expand PXE Device1 Settings.

- Set NIC in Slot 2 Port 1 Partition 1 as the interface.

- Scroll to the bottom of the Network Settings section and select Apply.

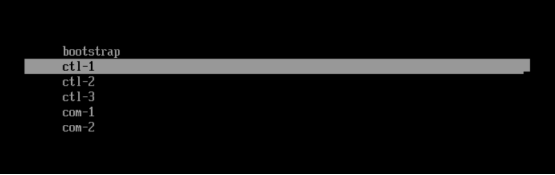

The system boots automatically into the PXE network and displays the PXE menu, as shown in the following figure:

Figure 6. iDRAC console PXE menu

- Select ctl-1 (the first control-plane node). After the installation completes, but before the node reboots into PXE again, ensure that the hard disk is placed above the PXE interface in the boot order:

- Press F2 to enter System Setup.

- Select System BIOS > Boot Settings > UEFI Boot Settings > UEFI Boot Sequence.

- Select PXE Device 1 and click - (minus) to move it down one position.

- Repeat the preceding step until PXE Device 1 is at the bottom of the boot sequence.

- Click OK, and then click Back.

- Click Finish and save the changes.

- Let the node boot from the hard drive on which the operating system is installed.

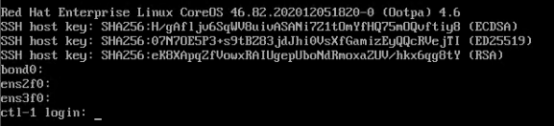

- After the node comes up, ensure that the hostname is displayed as ctl-1 in the iDRAC console, as shown in the following figure:

Figure 7. Control-plane node (ctl-1) iDRAC console

- Repeat the preceding steps for the remaining two control-plane nodes, selecting ctl-2 for the second control-plane node and ctl-3 for the third control-plane node.

- After all three control-plane nodes are installed and running, log in from the CSAH node to the bootstrap node as user core and check the status of the bootkube service:

```console
[core@bootstrap ~]$ journalctl -b -f -u release-image.service -u bootkube.service
-- Logs begin at Mon 2021-03-15 22:11:04 UTC. --
Mar 15 23:08:05 bootstrap.demo.lab bootkube.sh[18339]: I0315 23:08:05.513817 1 waitforceo.go:67] waiting on condition EtcdRunningInCluster in etcd CR /cluster to be True.
Mar 15 23:08:06 bootstrap.demo.lab bootkube.sh[18339]: I0315 23:08:06.629107 1 waitforceo.go:64] Cluster etcd operator bootstrapped successfully
Mar 15 23:08:06 bootstrap.demo.lab bootkube.sh[18339]: I0315 23:08:06.629469 1 waitforceo.go:58] cluster-etcd-operator bootstrap etcd bootkube.service complete
```

- Ensure that the bootkube.service output reports that the service is complete, as in the last line of the preceding output.
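If you prefer to script the bootkube check instead of watching the journal, the following is a minimal sketch. The function names bootkube_complete and wait_for_bootkube are hypothetical and not part of the guide's playbooks. The sketch assumes key-based SSH from the CSAH node to the bootstrap node as user core, assumes the bootstrap node resolves as bootstrap.demo.lab (the name that appears in the log lines above), and uses arbitrary default polling values.

```shell
# Hypothetical helper (not part of the guide's playbooks): succeeds when the
# journal text on stdin contains the bootkube completion message.
bootkube_complete() {
  grep -qF 'bootkube.service complete'
}

# Hypothetical helper: poll the bootstrap node from the CSAH node until
# bootkube reports completion. Assumes key-based SSH as user core and that the
# bootstrap node resolves as bootstrap.demo.lab (name taken from the logs).
# Optional arguments: host, number of attempts, seconds between attempts.
wait_for_bootkube() {
  local host tries delay i
  host=${1:-bootstrap.demo.lab}
  tries=${2:-60}
  delay=${3:-30}
  i=1
  while [ "$i" -le "$tries" ]; do
    # -b limits the journal to the current boot; --no-pager disables the pager.
    if ssh "core@${host}" journalctl -b -u bootkube.service --no-pager | bootkube_complete; then
      echo "bootkube.service complete on ${host}"
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  echo "Timed out waiting for bootkube.service on ${host}" >&2
  return 1
}
```

For example, after sourcing the functions on the CSAH node, running wait_for_bootkube bootstrap.demo.lab polls every 30 seconds for up to 60 attempts. The match uses grep -F so that the dot in bootkube.service is treated literally.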

Completing the bootstrap setup

To complete the bootstrap process:

- On the CSAH node, as user core, run the following command from /home/core:

```console
[core@csah-pri ~]$ ./openshift-install --dir=openshift wait-for bootstrap-complete --log-level debug
DEBUG OpenShift Installer 4.6.20
DEBUG Built from commit 9c86c823fff234c104f574eaf25953485edfe4b1
INFO Waiting up to 20m0s for the Kubernetes API at https://api.ocp.demo.lab:6443...
INFO API v1.19.0+2f3101c up
INFO Waiting up to 30m0s for bootstrapping to complete...
DEBUG Bootstrap status: complete
INFO It is now safe to remove the bootstrap resources
INFO Time elapsed: 0s
```

- Validate the status of the control-plane nodes:

```console
[core@csah-pri ~]$ oc get nodes
NAME             STATUS   ROLES    AGE     VERSION
ctl-1.demo.lab   Ready    master   3h18m   v1.19.0+8d12420
ctl-2.demo.lab   Ready    master   175m    v1.19.0+8d12420
ctl-3.demo.lab   Ready    master   175m    v1.19.0+8d12420
```

Note: In a 3-node cluster, each control-plane node also has the worker role, so the ROLES column displays master,worker.

- Run oc get co to view the cluster operator status.

Note: In a cluster with five or more nodes, the compute nodes must be in the Ready state before the cluster operators display True in the AVAILABLE column.
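The two validation steps above can be scripted as filters over the oc output. The following is a minimal sketch; count_ready_masters and unavailable_operators are hypothetical helper names, and the sketch assumes the default table layouts shown in this guide (NAME STATUS ROLES AGE VERSION for nodes, and NAME VERSION AVAILABLE PROGRESSING DEGRADED SINCE for cluster operators). Reading from stdin lets you test the checks against saved output.

```shell
# Hypothetical helpers (not part of the guide): each one reads oc table output
# on stdin, so it can be used in a pipe or tested against saved output.

# Print the number of nodes that are Ready and carry the master role.
# Expected input: oc get nodes (columns NAME STATUS ROLES AGE VERSION).
# The role match also accepts master,worker as shown in a 3-node cluster.
count_ready_masters() {
  awk 'NR > 1 && $2 ~ /^Ready(,|$)/ && $3 ~ /(^|,)master(,|$)/ { n++ }
       END { print n + 0 }'
}

# Print the NAME of every cluster operator whose AVAILABLE value is not True.
# Expected input: oc get co. The value is read at the character offset of the
# AVAILABLE header instead of by field number, because the VERSION column can
# be blank for an operator that has not reported a version yet.
unavailable_operators() {
  awk 'NR == 1 { col = index($0, "AVAILABLE"); next }
       col > 0 { split(substr($0, col), f, " "); if (f[1] != "True") print $1 }'
}
```

On the CSAH node, oc get nodes | count_ready_masters should print 3 once all control-plane nodes are Ready, and oc get co | unavailable_operators prints nothing once every cluster operator reports True.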