
Cloud-native infrastructure


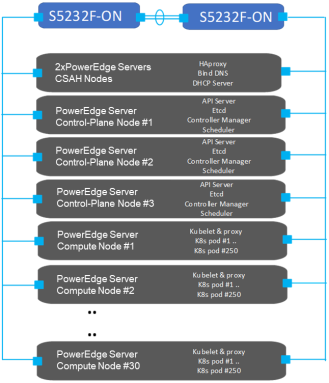

A cloud-native infrastructure must accommodate a large, scalable mix of service-oriented applications and their dependent components. These applications and components are generally microservices-based. The key to sustaining their operation is to have the right platform infrastructure and a sustainable management and control plane. The reference design that this guide describes helps you specify infrastructure requirements for building an on-premises OpenShift Container Platform 4.6 solution. The following figure shows this design:

Figure 3. OpenShift Container Platform 4.6 standard cluster design

OpenShift Container Platform terminology

This architectural design recognizes four host types that make up every OpenShift Container Platform cluster: the bootstrap node, the control-plane nodes, the compute nodes, and the storage nodes.

The deployment process also requires a node called the Cluster System Admin Host (CSAH) node. For a description of the deployment process, see the Red Hat OpenShift Container Platform 4.6 on Dell EMC Infrastructure Implementation Guide.

Note: Red Hat official documentation does not refer to a CSAH node in the deployment process.

CSAH nodes

The CSAH nodes are not part of the cluster, but they are required for OpenShift cluster administration and operation. CSAH nodes also provide DHCP, PXE, DNS, and HAProxy services for cluster operation. Although a single CSAH node can be used for development and testing, it does not provide resilient load balancing. For resilient load balancing, Dell Technologies recommends using two CSAH nodes running HAProxy and Keepalived. Further, Dell Technologies strongly discourages logging in directly to a control-plane node to manage the cluster. The OpenShift CLI tool, oc, and the authentication tokens that are required to administer the OpenShift cluster are installed on both CSAH nodes as part of the deployment process. For redundancy, store backups of the OpenShift authentication credentials outside the cluster.
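To illustrate the load-balancing role of the CSAH nodes, the following minimal Python sketch renders an HAProxy configuration fragment for the front ends that an OpenShift 4.x user-provisioned installation typically balances: the Kubernetes API (port 6443), the machine config server (port 22623), and ingress HTTP and HTTPS (ports 80 and 443). The `render_haproxy` helper, the host names and IP addresses, and the choice of TCP mode with round-robin balancing are illustrative assumptions, not the configuration that the Dell deployment process generates. In a two-CSAH design, Keepalived would float a virtual IP address between the two nodes, and both nodes would carry the same HAProxy configuration.

```python
# Hypothetical helper: renders HAProxy frontend/backend stanzas for an
# OpenShift 4.x cluster. Host names and addresses are placeholders.

# (frontend name, port, which node pool serves it)
SERVICES = [
    ("api", 6443, "control_plane"),             # Kubernetes API server
    ("machine-config", 22623, "control_plane"),  # machine config server
    ("ingress-http", 80, "compute"),             # router pods, HTTP
    ("ingress-https", 443, "compute"),           # router pods, HTTPS
]


def render_haproxy(control_plane, compute, bootstrap=None):
    """Return an HAProxy config fragment as a string.

    control_plane and compute are lists of (hostname, ip) tuples. The
    optional bootstrap (hostname, ip) is added only to the API and
    machine config backends, and is removed after installation.
    """
    lines = []
    for name, port, pool in SERVICES:
        members = list(control_plane if pool == "control_plane" else compute)
        if bootstrap and pool == "control_plane":
            members.append(bootstrap)
        lines += [
            f"frontend {name}",
            f"    bind *:{port}",
            "    mode tcp",
            f"    default_backend {name}-be",
            f"backend {name}-be",
            "    mode tcp",
            "    balance roundrobin",
        ]
        # One health-checked server line per node in the pool.
        lines += [f"    server {host} {ip}:{port} check" for host, ip in members]
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_haproxy(
        [("cp0", "10.0.0.10"), ("cp1", "10.0.0.11"), ("cp2", "10.0.0.12")],
        [("w0", "10.0.0.20"), ("w1", "10.0.0.21")],
        bootstrap=("bootstrap", "10.0.0.5"),
    ))
```

Once the bootstrap process finishes, calling the helper without the `bootstrap` argument yields the steady-state configuration.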

Note: Control-plane nodes are deployed using immutable infrastructure, further driving the preference for an administration host that is external to the cluster.

Bootstrap node (VM)

The CSAH nodes manage the installation and operation of the container ecosystem cluster. Installation begins with the creation of a bootstrap VM on the primary CSAH node; the bootstrap VM is used to install the control-plane components on the control-plane nodes. The initial minimum cluster can consist of either three nodes that run both the control plane and applications, or three control-plane nodes and at least two compute nodes. OpenShift Container Platform requires three control-plane nodes in both scenarios.
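The two minimum topologies in the preceding paragraph can be expressed as a simple validation rule. The following Python function is a hypothetical pre-installation check, not part of the OpenShift installer; its name, arguments, and messages are illustrative only.

```python
def classify_topology(control_plane_nodes, compute_nodes, control_plane_schedulable):
    """Describe a planned cluster topology, or raise ValueError if unsupported.

    Encodes the minimums described in this guide: exactly three
    control-plane nodes, plus either zero compute nodes (control plane
    also runs applications) or at least two compute nodes.
    """
    if control_plane_nodes != 3:
        raise ValueError("OpenShift Container Platform requires three control-plane nodes")
    if compute_nodes == 0:
        if not control_plane_schedulable:
            raise ValueError("with no compute nodes, the control-plane nodes must run applications")
        return "three-node cluster: control plane and applications share the same nodes"
    if compute_nodes < 2:
        raise ValueError("a cluster with separate compute nodes needs at least two of them")
    return f"standard cluster: 3 control-plane nodes and {compute_nodes} compute nodes"
```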

Basic node configuration

Node components are installed and run on every node in the cluster, that is, on control-plane nodes and compute nodes. The components are responsible for all node run-time operations. The key components are:

- Kubelet: An agent that runs on each node and carries out the declarations or actions that are submitted to the cluster through the Kubernetes API. Kubelet performs node service functions to ensure that running pods comply with their PodSpecs and remain healthy. Kubelet does not manage containers or pods that were not created by Kubernetes.

- Kube-proxy: An instance of kube-proxy runs on every node of the cluster and implements the Kubernetes network services on that node. Kube-proxy also manages network connectivity and traffic routing by using the packet-filtering facilities of the host operating system.

- Container run-time: A container run-time engine (CRE) must be deployed on each node in a Kubernetes cluster, and it must comply with the Kubernetes Container Runtime Interface (CRI) specification. OpenShift Container Platform uses CRI-O as its container run-time, and this choice cannot be changed.
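One way to confirm these node components on a running cluster is to inspect the node objects that `oc get nodes -o json` returns: each node's `status.nodeInfo.containerRuntimeVersion` field reports the container run-time (for CRI-O, a string that begins with `cri-o://`), and `status.conditions` includes the `Ready` condition that the kubelet reports. The following minimal Python sketch assumes that the JSON output has already been parsed into a dictionary; the `check_nodes` function and the sample data are hypothetical.

```python
import json


def check_nodes(node_list):
    """Return a list of (node name, problem) tuples; an empty list means no problems.

    node_list is the parsed output of `oc get nodes -o json`,
    that is, a NodeList object with an "items" array.
    """
    problems = []
    for node in node_list.get("items", []):
        name = node["metadata"]["name"]
        status = node.get("status", {})
        # The run-time version string is prefixed with the run-time name.
        runtime = status.get("nodeInfo", {}).get("containerRuntimeVersion", "")
        if not runtime.startswith("cri-o://"):
            problems.append((name, f"unexpected container run-time: {runtime!r}"))
        # The kubelet sets the Ready condition to "True" when the node is healthy.
        ready = any(
            c.get("type") == "Ready" and c.get("status") == "True"
            for c in status.get("conditions", [])
        )
        if not ready:
            problems.append((name, "kubelet does not report Ready"))
    return problems


if __name__ == "__main__":
    sample = json.loads("""{"items": [
      {"metadata": {"name": "worker-0"},
       "status": {"nodeInfo": {"containerRuntimeVersion": "cri-o://1.19.0"},
                  "conditions": [{"type": "Ready", "status": "True"}]}}]}""")
    print(check_nodes(sample))  # prints []
```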

Control-plane nodes

Nodes that implement control-plane infrastructure management are called control-plane nodes. Three control-plane nodes establish a unified control plane for the operation of an OpenShift cluster. The control plane operates outside the application container workloads and is responsible for ensuring the overall continued viability, health, availability, and integrity of the container ecosystem. Removing control-plane nodes is not allowed.

OpenShift Container Platform also deploys additional control-plane infrastructure to manage OpenShift-specific cluster components.

The control plane provides the following functions:

- API Server: The API server exposes the Kubernetes control-plane API for other platform services (such as the web console) to consume, and it provides the API endpoints for managing cluster resources.

- Etcd: A highly available and consistent key-value store used to maintain Kubernetes cluster data. The etcd daemon runs on each control-plane node and requires a majority consensus to achieve quorum (the formula for quorum is $\lfloor n/2 \rfloor + 1$, where n is the number of control-plane nodes). For production clusters, at least three control-plane nodes are required, each running an etcd daemon. This requirement means that at least two control-plane nodes must be operating to achieve quorum.

- Scheduler: The Kubernetes scheduler assigns new pods to a node based on resource requirements (for example, CPU, RAM, and GPU) and on affinity and anti-affinity rules.

- Controller manager: The controller manager runs all controller processes. Although each controller is logically independent, the controllers are compiled into a single executable and run as a single process to reduce complexity. The controllers include the node, replication, endpoints, service account, and token controllers.

- OpenShift API server: The OpenShift API server validates and configures the data for OpenShift resources such as projects, routes, and templates. The OpenShift API server is managed by the OpenShift API server operator.

- OpenShift controller manager: The OpenShift controller manager watches etcd for changes to OpenShift objects such as project, route, and template controller objects, and then uses the API to enforce the specified state. The OpenShift controller manager is managed by the OpenShift controller manager operator.

- OpenShift OAuth API server: The OpenShift OAuth API server validates and configures the data to authenticate to OpenShift Container Platform, such as users, groups, and OAuth tokens. The OpenShift OAuth API server is managed by the cluster authentication operator.

- OpenShift OAuth server: Users request tokens from the OpenShift OAuth server to authenticate themselves to the API. The OpenShift OAuth server is managed by the cluster authentication operator.
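The etcd quorum rule can be checked numerically. This short Python sketch computes the quorum size, $\lfloor n/2 \rfloor + 1$, and the number of member failures that an etcd cluster of n members can tolerate, $n - (\lfloor n/2 \rfloor + 1)$; the function names are illustrative.

```python
def etcd_quorum(members: int) -> int:
    """Majority of members needed for etcd to reach consensus: floor(n/2) + 1."""
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """Number of members that can fail while a majority remains available."""
    return members - etcd_quorum(members)


for n in range(1, 6):
    print(n, etcd_quorum(n), tolerated_failures(n))
# 1 1 0
# 2 2 0
# 3 2 1
# 4 3 1
# 5 3 2
```

With the three control-plane nodes that OpenShift Container Platform requires, two members form a quorum and the cluster survives the loss of one member. A fourth member would raise the quorum to three without tolerating any additional failure, which is why etcd clusters are normally sized with an odd number of members.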

Backup and disaster recovery

Even though OpenShift Container Platform is resilient to node failure, take regular backups of the etcd data store. Because an etcd backup is a blocking action, take backups during off-peak hours in production environments. A restore must use an etcd backup that was taken from the same z-stream release as the cluster (for example, a 4.6.2 cluster must be restored from a 4.6.2 backup), so take a new etcd backup after every update, including updates within a minor version such as from 4.6.2 to 4.6.3. Do not take the first etcd backup until 24 hours after the cluster is installed, when the initial certificate rotation has occurred; otherwise, the backup may contain expired certificates. For more information, see the Red Hat documentation about backing up etcd.
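The timing rules for etcd backups can be expressed as a simple pre-flight check. The following Python sketch is a hypothetical helper, not part of OpenShift tooling: it advises against a backup during the first 24 hours after installation and outside an off-peak window, and it checks that a backup's release matches the running cluster release before a restore, based on the Red Hat guidance that a restore must use a backup from the same z-stream release. The 01:00 to 05:00 off-peak window is an assumed example value.

```python
from datetime import datetime, time, timedelta

# Assumed off-peak window for this example; adjust to your environment.
OFF_PEAK_START = time(1, 0)
OFF_PEAK_END = time(5, 0)
# The initial certificate rotation occurs about 24 hours after installation.
CERT_ROTATION_DELAY = timedelta(hours=24)


def can_back_up(installed_at: datetime, now: datetime) -> bool:
    """Return True if taking an etcd backup at `now` follows the timing guidance."""
    if now - installed_at < CERT_ROTATION_DELAY:
        return False  # the backup could contain certificates that are about to expire
    return OFF_PEAK_START <= now.time() < OFF_PEAK_END


def usable_for_restore(backup_version: str, cluster_version: str) -> bool:
    """A restore should use a backup taken from the same release, for example 4.6.2."""
    return backup_version == cluster_version
```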

Because of etcd quorum requirements, if a majority of the control-plane nodes fail and quorum is lost, restoring a previous cluster state is the only option for cluster recovery. If a majority of the control-plane nodes are still operating, quorum is maintained, but no redundancy exists to survive a further node failure. In this case, replace the unhealthy etcd members by following the steps in the Red Hat documentation for replacing an unhealthy etcd member.
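For an etcd cluster, the recovery decision in the preceding paragraph comes down to comparing the number of healthy members with the quorum size, $\lfloor n/2 \rfloor + 1$. The following Python function is a hypothetical illustration of that logic, not a Red Hat tool; the actual recovery procedures are in the Red Hat documentation.

```python
def recovery_action(total_members: int, healthy_members: int) -> str:
    """Suggest a recovery path for an etcd cluster (illustrative only)."""
    quorum = total_members // 2 + 1
    if healthy_members == total_members:
        return "healthy: no action required"
    if healthy_members >= quorum:
        # Quorum holds, but another failure could lose it.
        return "quorum intact: replace the unhealthy etcd member(s)"
    # Without a majority, etcd cannot reach consensus.
    return "quorum lost: restore from a previous etcd backup"
```

For the three-member etcd cluster in OpenShift Container Platform, `recovery_action(3, 2)` points to member replacement, and `recovery_action(3, 1)` points to a restore.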

Compute plane

In an OpenShift cluster, application containers are deployed to compute nodes by default. The term “compute node” is arbitrary: compute nodes have no special requirements, and applications can also run on control-plane nodes if required. Cluster nodes advertise their resources and resource utilization so that the scheduler can allocate containers and pods to them and maintain a reasonable workload distribution. The Kubelet service runs on all nodes in a Kubernetes cluster. This service receives container deployment requests, ensures that the requested containers are instantiated and put into operation, and starts and stops container workloads. The service proxy (kube-proxy) on each node handles service traffic between pods that are running across compute nodes.
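The resource-based placement described in the preceding paragraph can be illustrated with a greatly simplified model. The following Python sketch filters out nodes whose free CPU or memory cannot satisfy a pod's requests, then picks the feasible node with the most CPU left over. This is a toy under stated assumptions, not the kube-scheduler algorithm, which evaluates many more filter and scoring plug-ins (including affinity rules); the `Node` type, node names, and capacities are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    cpu_free_millicores: int  # allocatable CPU minus existing pod requests
    mem_free_mib: int         # allocatable memory minus existing pod requests


def pick_node(nodes, cpu_request_m, mem_request_mib):
    """Return the name of the chosen node, or None if no node fits."""
    # Filter step: keep only nodes that can satisfy both requests.
    feasible = [
        n for n in nodes
        if n.cpu_free_millicores >= cpu_request_m and n.mem_free_mib >= mem_request_mib
    ]
    if not feasible:
        return None  # the pod would remain Pending
    # Score step: spread load by preferring the most free CPU after placement.
    best = max(feasible, key=lambda n: n.cpu_free_millicores - cpu_request_m)
    return best.name


nodes = [Node("worker-0", 1500, 4096), Node("worker-1", 3000, 1024), Node("worker-2", 2500, 8192)]
print(pick_node(nodes, 1000, 2048))  # worker-2
```

In this example, worker-1 has the most free CPU but is filtered out because it cannot satisfy the 2048 MiB memory request.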

Logical constructs called MachineSets define compute node resources. MachineSets can be used to match the requirements of a pod deployment to a suitable compute node. OpenShift Container Platform supports defining multiple machine types, each of which defines a compute node target type.

Compute nodes can be added to or deleted from a cluster if doing so does not compromise the viability of the cluster. If the control-plane nodes are not designated as schedulable, at least two viable compute nodes must always be operating to run router pods that manage ingress networking traffic. Further, enough compute platform resources must be available to sustain the overall cluster application container workload.
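The removal rules in the preceding paragraph can be sketched as a check that runs before a compute node is drained. In the following Python function, which is hypothetical, only CPU capacity is modeled; a real check would also consider memory, storage, and pod disruption budgets. When the control-plane nodes are schedulable, the sketch skips the two-node router rule but does not count control-plane capacity.

```python
def can_remove_compute_node(compute_cpu_m, node, workload_cpu_m,
                            control_plane_schedulable=False):
    """Return (ok, reason) for removing `node` from the compute pool.

    compute_cpu_m maps compute node name -> allocatable CPU in millicores.
    workload_cpu_m is the total CPU requested by application workloads.
    """
    remaining = {n: cpu for n, cpu in compute_cpu_m.items() if n != node}
    # Router pods need at least two compute nodes when the control plane is not schedulable.
    if not control_plane_schedulable and len(remaining) < 2:
        return False, "fewer than two compute nodes would remain for router pods"
    # The remaining nodes must still sustain the application workload.
    if sum(remaining.values()) < workload_cpu_m:
        return False, "remaining compute nodes cannot sustain the workload"
    return True, "ok"
```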

Storage nodes

Storage can be provisioned either from dedicated nodes or from nodes that are shared with compute services. Provisioning occurs on disk drives that are locally attached to servers that have been added to the cluster as compute nodes. For more information, see Dell storage options.

The Red Hat Data Services portfolio of solutions includes persistent software-defined storage (SDS) and data services that are integrated with and optimized for OpenShift Container Platform. As part of the portfolio, Red Hat OpenShift Data Foundation (formerly known as OpenShift Container Storage) delivers resilient and persistent SDS and data services based on Ceph, Rook, and NooBaa technologies.

OpenShift Data Foundation

Running as a Kubernetes service, OpenShift Data Foundation is engineered, tested, and certified to provide data services for OpenShift Container Platform on any infrastructure. OpenShift Data Foundation can be deployed within an OpenShift Container Platform cluster on existing worker nodes, infrastructure nodes, or dedicated nodes. Alternatively, OpenShift Data Foundation can be decoupled and managed as a separate, independently scalable data store, delivering data for one or many OpenShift Container Platform clusters. To streamline configuration options, Red Hat and Intel® have jointly developed three workload-optimized configurations for OpenShift Data Foundation external data nodes: edge, capacity, and performance. These configurations are optimized for Dell PowerEdge R750 servers, as described in Appendix A. It is also possible to use existing compute nodes if they meet OpenShift Data Foundation hardware requirements.
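When existing compute nodes are considered for OpenShift Data Foundation, a quick inventory check against the hardware requirements can help. In the following Python sketch, the minimum node count and the per-node CPU and memory thresholds are placeholder assumptions, not official requirements; take the actual values from the Red Hat documentation for your release. The sketch selects candidate nodes by the `cluster.ocs.openshift.io/openshift-storage` label, which OpenShift Container Storage 4.6 uses to mark storage nodes; the node records themselves are hypothetical.

```python
# Placeholder thresholds (assumptions): replace them with the values from the
# OpenShift Data Foundation / OpenShift Container Storage documentation.
MIN_NODES = 3
MIN_CPU_CORES = 16
MIN_MEMORY_GIB = 64

STORAGE_LABEL = "cluster.ocs.openshift.io/openshift-storage"


def eligible_storage_nodes(nodes):
    """Return the names of labeled nodes that meet the placeholder thresholds.

    Each node is a dict with "name", "labels", "cpu_cores", and "memory_gib" keys.
    """
    return [
        n["name"] for n in nodes
        if STORAGE_LABEL in n["labels"]
        and n["cpu_cores"] >= MIN_CPU_CORES
        and n["memory_gib"] >= MIN_MEMORY_GIB
    ]


def enough_storage_nodes(nodes):
    """True if at least MIN_NODES labeled nodes meet the thresholds."""
    return len(eligible_storage_nodes(nodes)) >= MIN_NODES
```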

You can start the deployment of OpenShift Data Foundation from the embedded OperatorHub when you are logged in to OpenShift Container Platform as the cluster administrator. For more information, see the OpenShift Container Storage 4.6 documentation.

Note: At the time of publication of this guide, some Red Hat documentation and the operator and product interface of OpenShift Data Foundation may still use the product name OpenShift Container Storage for OpenShift Data Foundation.