Deployment process

Dell Technologies has simplified the process of bootstrapping the OpenShift Container Platform 4.3 cluster. To use the simplified process, ensure that:

- The cluster is provisioned with network switches, servers, and storage.

- The cluster has Internet connectivity, which is required to install OpenShift Container Platform 4.3.
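The connectivity prerequisite can be checked before starting the installation. The following is a minimal sketch, not part of the Dell playbooks: the endpoint list (`quay.io` and `registry.redhat.io` on port 443) is an assumption, because this guide does not list the hosts that must be reachable, and the function names are hypothetical.

```python
import socket

# Assumed endpoints: this guide does not state which hosts must be reachable.
ENDPOINTS = [("quay.io", 443), ("registry.redhat.io", 443)]


def can_reach(host, port, timeout=5.0, connect=socket.create_connection):
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        conn = connect((host, port), timeout)
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
    conn.close()
    return True


def unreachable(endpoints, connect=socket.create_connection):
    """Return the subset of (host, port) pairs that could not be reached."""
    return [(h, p) for h, p in endpoints if not can_reach(h, p, connect=connect)]
```

Calling `unreachable(ENDPOINTS)` from the CSAH node returns an empty list when every assumed endpoint accepts a connection. The `connect` parameter exists only so that the check can be exercised without network access.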

The deployment procedure begins with initial switch provisioning. See the OpenShift 4.3 Deployment Guide for sample switch configurations.

Switch provisioning enables preparation and installation of the CSAH node, which involves:

- Installation of Red Hat Enterprise Linux 7.6+

- Subscription to the necessary repositories

- Creation of an Ansible user account with sudo privileges

- Cloning of the Ansible playbook repository from the Dell ESG container repository on GitHub

- Running an Ansible playbook to initiate the installation process

Dell Technologies has generated Ansible playbooks that fully prepare the CSAH node. Before the installation of the OpenShift Container Platform 4.3 cluster begins, the Ansible playbook sets up a PXE server, DHCP server, DNS server, and HTTP server. The playbook also creates ignition files to drive installation of the bootstrap, master, and worker nodes, and it configures HAProxy so that the installation infrastructure is ready for the next step. The playbook presents a list of node types that must be deployed in top-down order.
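As an illustration of the top-down ordering, the sketch below sorts an inventory of nodes by role. It assumes that the list presented by the playbook runs bootstrap, then master, then worker, which matches the order in which the node types are named in this guide. The node names and the `deployment_order` function are hypothetical and are not taken from the Dell playbooks.

```python
# Assumed order: bootstrap first, then master, then worker.
ROLE_ORDER = {"bootstrap": 0, "master": 1, "worker": 2}


def deployment_order(nodes):
    """Sort (name, role) pairs by role; original order is kept within a role."""
    unknown = sorted({role for _, role in nodes if role not in ROLE_ORDER})
    if unknown:
        raise ValueError(f"unknown node roles: {unknown}")
    # sorted() is stable, so nodes that share a role keep their input order.
    return sorted(nodes, key=lambda node: ROLE_ORDER[node[1]])


# Hypothetical inventory used only to demonstrate the ordering.
inventory = [
    ("worker-1", "worker"),
    ("master-1", "master"),
    ("bootstrap", "bootstrap"),
    ("master-2", "master"),
]
plan = deployment_order(inventory)
```

Here `plan` lists the bootstrap node first, then both master nodes, then the worker node.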

Note: For enterprise sites, consider deploying appropriately hardened DHCP and DNS servers. Similarly, consider using a resilient multiple-node HAProxy configuration. The Ansible playbook for this design deploys a single HAProxy instance. The CSAH Ansible playbooks in this guide are provided for reference only at the implementation stage.

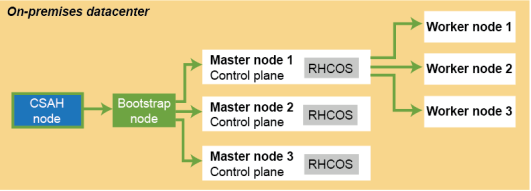

The Ansible playbook creates an install-config file that is used to control deployment of the bootstrap node. For more information, see the Dell EMC Ready Stack: Red Hat OpenShift Container Platform 4.3 Deployment Guide. An ignition configuration control file starts the bootstrap node, as shown in the following figure:

Figure 2. OpenShift Container Platform 4.3 installation workflow: Creating the bootstrap, master, and worker nodes

Note: An installation that is driven by ignition configuration generates security certificates that expire after 24 hours. You must install the cluster before the certificates expire, and the cluster must operate in a viable (nondegraded) state so that the first certificate rotation can be completed.
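To make the 24-hour window concrete, the following sketch computes the installation deadline from the time at which the ignition configuration was generated. The function names and the sample timestamp are hypothetical. The sketch assumes that the 24 hours are counted from the moment of generation.

```python
from datetime import datetime, timedelta, timezone

# Certificate lifetime stated in the note above.
CERT_LIFETIME = timedelta(hours=24)


def install_deadline(generated_at):
    """Return the time by which the cluster installation must be complete."""
    return generated_at + CERT_LIFETIME


def time_remaining(generated_at, now):
    """Return the time left before expiry; negative if already expired."""
    return install_deadline(generated_at) - now


# Hypothetical generation time, used only for illustration.
generated = datetime(2020, 5, 1, 9, 0, tzinfo=timezone.utc)
deadline = install_deadline(generated)
```

A negative result from `time_remaining` means that the certificates have expired and the ignition files must be regenerated before the installation is retried.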

The cluster bootstrapping process includes the following phases:

- After startup, the bootstrap node creates the resources that are needed to start the master nodes. Do not interrupt this process.

- The master nodes pull resource information from the bootstrap node to reach a viable state. This resource information is used to form the etcd control plane cluster.

- The bootstrap node instantiates a temporary Kubernetes control plane that uses the new etcd cluster.

- The temporary control plane loads the application workload control plane onto the master nodes.

- The temporary control plane is shut down, handing control over to the now viable control plane operating on the master nodes.

- OpenShift Container Platform components are pulled into the control of the master nodes.

- The bootstrap node is shut down.

- The master nodes (control plane) now drive creation and instantiation of the worker nodes.

- The control plane adds operator-based services to complete the deployment of the OpenShift Container Platform ecosystem.
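The phases listed above form a strict sequence, which can be sketched as an ordered enumeration. This is an illustrative model only: the phase names are hypothetical labels for the steps in this section and do not correspond to identifiers in the OpenShift installer.

```python
from enum import IntEnum


class Phase(IntEnum):
    """Hypothetical labels for the bootstrapping phases, in order."""
    BOOTSTRAP_CREATES_RESOURCES = 1
    MASTERS_FORM_ETCD = 2
    TEMP_CONTROL_PLANE_STARTED = 3
    WORKLOAD_CONTROL_PLANE_LOADED = 4
    TEMP_CONTROL_PLANE_SHUT_DOWN = 5
    COMPONENTS_ON_MASTERS = 6
    BOOTSTRAP_SHUT_DOWN = 7
    WORKERS_CREATED = 8
    OPERATORS_ADDED = 9


def next_phase(current):
    """Return the phase that follows current, or None after the last phase."""
    phases = list(Phase)
    index = phases.index(current)
    return phases[index + 1] if index + 1 < len(phases) else None


def is_valid_sequence(observed):
    """Return True if observed phases start at the first phase and skip none."""
    observed = list(observed)
    return observed == list(Phase)[:len(observed)]
```

For example, `next_phase(Phase.BOOTSTRAP_SHUT_DOWN)` returns `Phase.WORKERS_CREATED`, which reflects that the worker nodes are created only after the bootstrap node has been shut down.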

The cluster is now viable and can be placed into service in readiness for Day 2 operations. You can expand the cluster by adding worker nodes. For instructions, see the OpenShift 4.3 Deployment Guide.