
Cloud-native infrastructure


A cloud-native infrastructure must accommodate a large, scalable mix of service-oriented applications and their dependent components. These applications and components are generally based on microservices, and sustaining their operation depends on having the right platform infrastructure and a sustainable management and control plane. This reference design helps you specify the infrastructure requirements for building a private OpenShift Container Platform 4.3 solution.

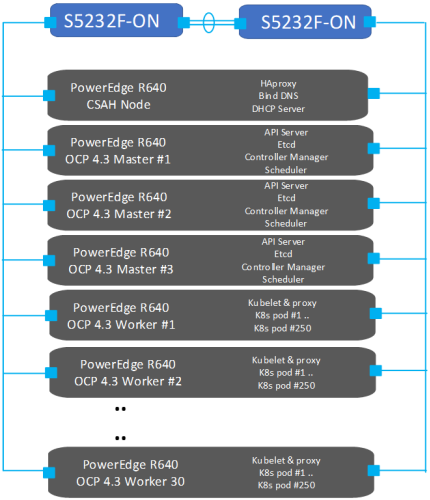

The following figure shows the solution design:

Figure 1. OpenShift Container Platform 4.3 cluster design

Our design recognizes four host types that make up every OpenShift Container Platform cluster: the bootstrap node, master nodes, worker nodes, and storage nodes. The deployment process also uses a Cluster System Admin Host (CSAH) node that is unique to the Dell EMC design.

Note: Red Hat online documentation does not refer to the CSAH node.

CSAH node

The CSAH node is not part of the cluster, but it is required for OpenShift cluster administration. Dell Technologies strongly discourages logging in to a master node to manage the cluster. Although the OpenShift CLI administration tools are deployed onto the master nodes, the authentication tokens that are needed to administer the OpenShift cluster are installed only on the CSAH node, as part of the deployment process.

Note: Master nodes are deployed using immutable infrastructure (Red Hat Enterprise Linux CoreOS, or RHCOS), which further drives the preference for an administration host that is external to the cluster.

Bootstrap node

The CSAH node is used to manage the installation and operation of the container ecosystem cluster. Installation of the cluster begins with the creation of a bootstrap node, which is needed only during the bring-up phase of the installation. The bootstrap node is necessary to create the persistent control plane that the master nodes then manage. Dell Technologies recommends provisioning a dedicated host for administration of the OpenShift Container Platform cluster. The initial minimum cluster consists of three master nodes and at least two worker nodes. After this cluster becomes operational, you can redeploy the bootstrap node as a worker node.
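The bring-up rules above (three masters, at least two workers, and a bootstrap node that can be reused only after the cluster is operational) can be expressed as a small check. This is a minimal sketch for illustration only; `ClusterState`, `meets_initial_minimum`, and `redeploy_bootstrap_as_worker` are hypothetical names, not part of any OpenShift tool.

```python
from dataclasses import dataclass, replace

# Minimums stated in this design for the initial cluster.
MIN_MASTERS = 3
MIN_WORKERS = 2


@dataclass(frozen=True)
class ClusterState:
    """Hypothetical summary of the cluster during installation."""
    masters: int
    workers: int
    bootstrap_present: bool = True  # bootstrap node is still allocated
    operational: bool = False       # control plane is running on the masters


def meets_initial_minimum(state: ClusterState) -> bool:
    """True if the cluster has the minimum master and worker counts."""
    return state.masters >= MIN_MASTERS and state.workers >= MIN_WORKERS


def redeploy_bootstrap_as_worker(state: ClusterState) -> ClusterState:
    """Return the state after the bootstrap host is reused as a worker."""
    if not state.operational:
        raise ValueError("bootstrap node is still needed during bring-up")
    if not state.bootstrap_present:
        raise ValueError("bootstrap node has already been redeployed")
    return replace(state, workers=state.workers + 1, bootstrap_present=False)


if __name__ == "__main__":
    initial = ClusterState(masters=3, workers=2, operational=True)
    print(meets_initial_minimum(initial))  # True
    print(redeploy_bootstrap_as_worker(initial))
```

The state object is immutable, so each step returns a new snapshot instead of mutating the previous one.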

Master nodes

Nodes that implement control plane infrastructure management are called master nodes. At least three master nodes are required to establish the control plane for an OpenShift cluster. The control plane operates separately from the application container workloads and is responsible for ensuring the overall continued viability, health, and integrity of the container ecosystem.

Master nodes provide an application programming interface (API) for overall resource management. These nodes run etcd, the API server, and the Controller Manager Server. Removing master nodes from a cluster is not allowed.
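One way to see why the design requires three master nodes is the etcd quorum arithmetic. etcd uses the Raft consensus protocol, which needs a majority of voting members to accept writes. The short calculation below is an illustrative sketch, not Dell or Red Hat tooling; the function names are hypothetical.

```python
def quorum(members: int) -> int:
    """Smallest majority of an etcd cluster with `members` voting members."""
    if members < 1:
        raise ValueError("an etcd cluster needs at least one member")
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """Members that can fail while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in (1, 2, 3, 4, 5):
        print(f"{n} members: quorum={quorum(n)}, tolerates {tolerated_failures(n)} failure(s)")
```

With three members, a quorum of two survives the loss of one master. Two members tolerate no failures, and a fourth member adds no extra tolerance over three, which is consistent with three being the practical minimum.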

Worker nodes

In an OpenShift cluster, application containers are deployed to run on worker nodes. Worker nodes advertise their resources and resource utilization so that the scheduler can allocate containers and pods to these nodes and maintain a reasonable workload distribution. The Kubelet service runs on each worker node. This service receives container deployment requests and ensures that the requests are instantiated and put into operation. The Kubelet service also starts and stops container workloads and manages a service proxy that handles communication between pods that are running across different worker nodes.
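The idea of worker nodes advertising resources so that the scheduler can place pods can be sketched as a two-step filter-and-score loop. This is a deliberately simplified model, assuming CPU and memory are the only resources and that "most headroom left" is the scoring rule; it is not the actual Kubernetes scheduler algorithm, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkerNode:
    name: str
    cpu_alloc_m: int   # allocatable CPU advertised by the node, millicores
    mem_alloc_mi: int  # allocatable memory advertised by the node, MiB
    cpu_used_m: int = 0
    mem_used_mi: int = 0


@dataclass
class PodRequest:
    cpu_m: int
    mem_mi: int


def fits(node: WorkerNode, pod: PodRequest) -> bool:
    """Filter step: the node has enough unreserved CPU and memory."""
    return (node.cpu_alloc_m - node.cpu_used_m >= pod.cpu_m
            and node.mem_alloc_mi - node.mem_used_mi >= pod.mem_mi)


def free_fraction_after(node: WorkerNode, pod: PodRequest) -> float:
    """Score step: average share of CPU and memory left free after placement."""
    cpu = (node.cpu_alloc_m - node.cpu_used_m - pod.cpu_m) / node.cpu_alloc_m
    mem = (node.mem_alloc_mi - node.mem_used_mi - pod.mem_mi) / node.mem_alloc_mi
    return (cpu + mem) / 2


def schedule(pod: PodRequest, nodes: list[WorkerNode]) -> Optional[WorkerNode]:
    """Pick the feasible node with the most headroom, then record the usage."""
    candidates = [n for n in nodes if fits(n, pod)]
    if not candidates:
        return None  # the pod stays pending until capacity is available
    best = max(candidates, key=lambda n: free_fraction_after(n, pod))
    best.cpu_used_m += pod.cpu_m
    best.mem_used_mi += pod.mem_mi
    return best


if __name__ == "__main__":
    workers = [
        WorkerNode("worker-0", 4000, 16384, cpu_used_m=3000, mem_used_mi=8000),
        WorkerNode("worker-1", 4000, 16384, cpu_used_m=1000, mem_used_mi=4000),
    ]
    chosen = schedule(PodRequest(cpu_m=500, mem_mi=1024), workers)
    print(chosen.name if chosen else "pending")  # worker-1
```

Preferring the node with the most remaining headroom spreads pods across workers, which is one simple way to approximate the "reasonable workload distribution" described above.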

Logical constructs called MachineSets define worker node resources. MachineSets can be used to match the requirements of a pod deployment to a suitable worker node. OpenShift Container Platform supports defining multiple machine types, each of which defines a worker node target type.
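Matching a pod to a machine type is typically done through node labels: a MachineSet can apply labels to the nodes it creates, and a pod can request nodes with specific labels, much like a Kubernetes `nodeSelector`. The sketch below models only that equality match. The machine type names and the `example.com/tier` label are hypothetical; only `node-role.kubernetes.io/worker` is a standard label.

```python
# Hypothetical machine types and the node labels each one would apply.
MACHINE_TYPES: dict[str, dict[str, str]] = {
    "worker-general": {"node-role.kubernetes.io/worker": "", "example.com/tier": "general"},
    "worker-highmem": {"node-role.kubernetes.io/worker": "", "example.com/tier": "highmem"},
}


def selector_matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """nodeSelector-style equality match: every key/value pair must be present."""
    return all(key in labels and labels[key] == value for key, value in selector.items())


def candidate_machine_types(selector: dict[str, str],
                            machine_types: dict[str, dict[str, str]]) -> list[str]:
    """Names of machine types whose nodes would satisfy the selector."""
    return sorted(name for name, labels in machine_types.items()
                  if selector_matches(selector, labels))


if __name__ == "__main__":
    print(candidate_machine_types({"example.com/tier": "highmem"}, MACHINE_TYPES))
    # ['worker-highmem']
```

An empty selector matches every machine type, which mirrors a pod that states no placement requirement.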

Worker nodes can be added to or deleted from a cluster if doing so does not compromise the viability of the cluster. At least two viable worker nodes must always be operating. Further, enough compute platform resources must be available to sustain the overall cluster application container workload.
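The two removal conditions above (at least two workers remain, and the remaining compute still covers the workload) can be checked before a worker is deleted. This is a minimal sketch under a simplifying assumption: capacity and demand are single aggregate numbers in the same unit (for example, CPU millicores), ignoring per-node fragmentation and placement rules. The function name is hypothetical.

```python
MIN_WORKERS = 2  # at least two viable worker nodes must always be operating


def can_remove_worker(capacity: dict[str, int], demand: int, node: str) -> bool:
    """Return True if `node` can be deleted without breaking the design rules.

    `capacity` maps each worker to the compute it contributes and `demand`
    is the total the application workload needs, in the same unit.
    """
    if node not in capacity:
        raise KeyError(f"unknown worker node: {node}")
    remaining = {name: cap for name, cap in capacity.items() if name != node}
    if len(remaining) < MIN_WORKERS:
        return False
    return sum(remaining.values()) >= demand


if __name__ == "__main__":
    workers = {"worker-0": 8000, "worker-1": 8000, "worker-2": 8000}
    print(can_remove_worker(workers, demand=14000, node="worker-2"))  # True
    print(can_remove_worker(workers, demand=18000, node="worker-2"))  # False
```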

Storage Array

Storage can be provisioned from dedicated Dell EMC storage arrays, such as PowerMax and Isilon, that are integrated with the cluster by using CSI drivers. The necessary switch configuration must be in place so that these external storage arrays can be discovered. Dell Technologies has developed CSI drivers for these storage arrays, and the drivers are available in OperatorHub.
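After a CSI driver is installed, applications usually request storage through a standard Kubernetes PersistentVolumeClaim that names a StorageClass backed by that driver. The sketch below builds such a claim as JSON. The StorageClass name `isilon`, the claim name, and the size are placeholders, not values defined by this guide; use the StorageClass that was actually created for your driver.

```python
import json


def pvc_manifest(name: str, storage_class: str, size: str,
                 access_mode: str = "ReadWriteOnce",
                 namespace: str = "default") -> dict:
    """Build a PersistentVolumeClaim that requests a volume from a StorageClass."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "accessModes": [access_mode],
            "resources": {"requests": {"storage": size}},
            # Placeholder: the StorageClass created for your CSI driver.
            "storageClassName": storage_class,
        },
    }


if __name__ == "__main__":
    # "isilon" is a hypothetical StorageClass name used for illustration.
    print(json.dumps(pvc_manifest("app-data", "isilon", "10Gi"), indent=2))
```

Because `oc apply` accepts JSON, the printed manifest could be applied with `oc apply -f -`, assuming the named StorageClass exists in the cluster.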

Note: Dell EMC CSI drivers do not support RHCOS-based worker nodes as of this release.