
Architecture overview


Solution overview

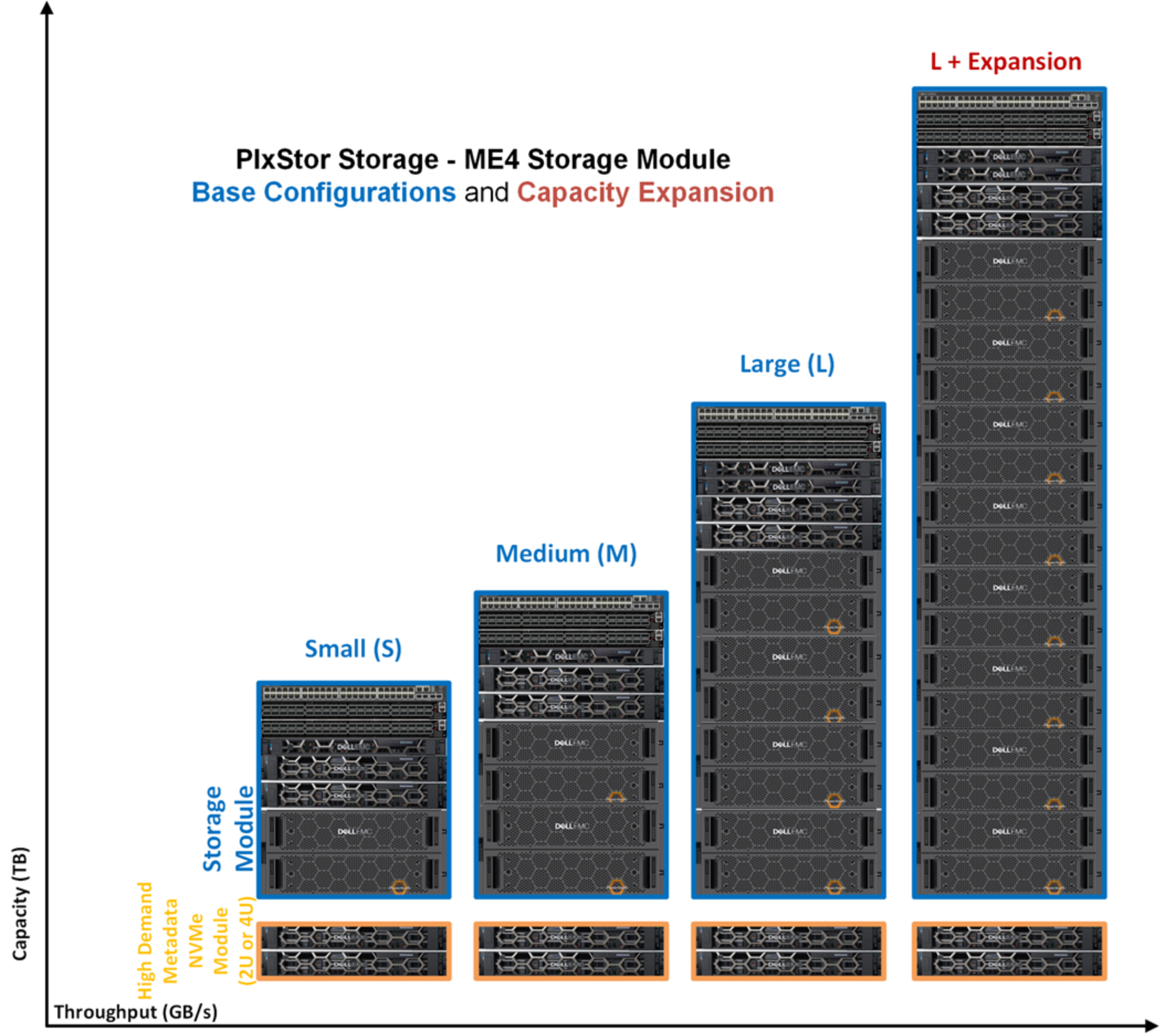

The Dell Validated Design for HPC pixstor Storage uses a modular approach to meet customer requirements. The basic building block is a storage module, which is available in three base configurations: Small, Medium, and Large, with an optional capacity expansion for the Large configuration. Also, an optional High Demand MetaData (HDMD) NVMe module is available for high metadata performance or if a very large number of files is required. These configurations can be used as scale-out building blocks to meet different capacity and performance goals as shown in the following figure:

Figure 1. HPC pixstor storage - Storage module configurations
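The building-block idea can be illustrated with a small data model. The following is a minimal sketch, not part of the pixstor software: the class names `ModuleSize`, `StorageModule`, and `Solution` are hypothetical, and the only rules encoded are the ones stated above (three base sizes, capacity expansion offered only for Large, and an optional HDMD module).

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Tuple


class ModuleSize(Enum):
    """Base storage module configurations named in the overview."""
    SMALL = "Small"
    MEDIUM = "Medium"
    LARGE = "Large"


@dataclass(frozen=True)
class StorageModule:
    """Hypothetical model of one storage module (illustration only)."""
    size: ModuleSize
    capacity_expansion: bool = False  # optional, offered for Large only

    def __post_init__(self) -> None:
        if self.capacity_expansion and self.size is not ModuleSize.LARGE:
            raise ValueError(
                "capacity expansion is only offered for the Large configuration"
            )


@dataclass(frozen=True)
class Solution:
    """Hypothetical scale-out solution built from storage modules."""
    storage_modules: Tuple[StorageModule, ...]
    hdmd_module: bool = False  # optional High Demand MetaData module

    def __post_init__(self) -> None:
        if not self.storage_modules:
            raise ValueError("at least one storage module is required")

    def summary(self) -> Dict[str, int]:
        """Count modules per configuration label."""
        counts: Dict[str, int] = {}
        for module in self.storage_modules:
            label = module.size.value
            if module.capacity_expansion:
                label += " + expansion"
            counts[label] = counts.get(label, 0) + 1
        return counts
```

For example, `Solution((StorageModule(ModuleSize.LARGE, True), StorageModule(ModuleSize.SMALL)), hdmd_module=True)` describes a two-module system with the optional HDMD module, while `StorageModule(ModuleSize.SMALL, capacity_expansion=True)` raises a `ValueError`.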

Introduction

Today’s HPC environments have increased demands for high-speed storage. Storage has become the bottleneck in many workloads due to higher core count CPUs, larger and faster memory, a faster PCIe bus, and increasingly faster networks. Parallel File Systems (PFS) typically address these high-demand HPC requirements. They provide concurrent access to a single file or a set of files from multiple nodes, efficiently and securely distributing data to multiple LUNs across several storage servers.

PFS are usually based on spinning media to provide the highest capacity at the lowest cost. Often, however, the speed and latency of spinning media cannot keep up with the demands of many modern HPC workloads. The use of flash technology in the form of burst buffers, faster tiers, or even fast scratch (local or distributed) can mitigate this issue. The solution for HPC pixstor Storage offers storage nodes based on PowerVault ME4084 arrays as a cost-effective, high-capacity tier, NVMe nodes to cover high-bandwidth, low-latency demands, and the optional HDMD module, while remaining flexible, scalable, efficient, and reliable.

Frequently, some machines cannot access data using the native PFS client software, and other protocols such as NFS or SMB must be used instead. Examples include customers who need to access data from workstations or laptops running Microsoft Windows or Apple macOS but are restricted from installing the PFS client software, and research or production systems that only offer connectivity using standard protocols. The Dell Validated Design for HPC pixstor Storage uses gateway nodes to provide such connectivity with scalable performance in an efficient and reliable way.

Storage solutions frequently need to move data to and from other storage devices (local or remote). This is the case when the pixstor gateway is not the most appropriate choice, or when it is highly desirable to integrate those devices as another tier of the pixstor system (for example, object storage, cloud storage, or tape libraries). Under those circumstances, the pixstor solution can provide tiered access to other storage devices using other enterprise protocols, including cloud protocols. It does so through ngenea nodes running arcastream proprietary software, which allows that level of integration while remaining cost effective.

Note: Because arcastream changed its branding to all lower-case characters, we have modified instances of “arcastream,” “pixstor,” and “ngenea” accordingly.

Architecture

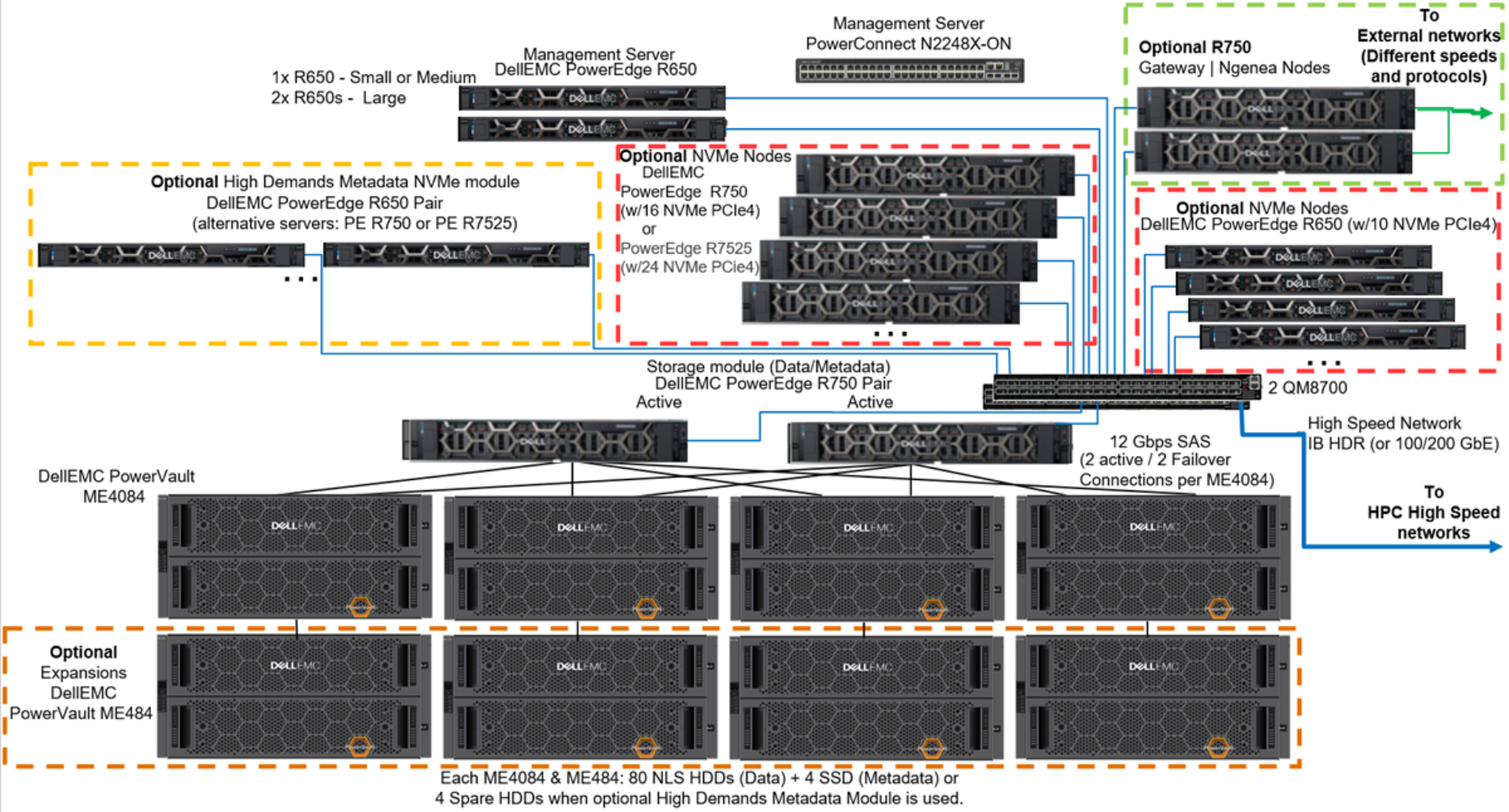

The following figure shows the architecture for the new generation of the Dell Validated Design for HPC pixstor Storage, which uses PowerEdge R650, R750, and R7525 servers and PowerVault ME4084 storage arrays, with the new pixstor 6.0 software from our partner company arcastream.

Figure 2. Solution architecture

Optional PowerVault ME484 EBOD arrays can increase the capacity of the solution. The figure shows such capacity expansion as SAS additions to the existing PowerVault ME4084 storage arrays.

The pixstor software includes the widely used GPFS, also known as Spectrum Scale, as the PFS component. GPFS is considered software-defined storage due to its flexibility and scalability. In addition, the pixstor software includes many other arcastream software components, such as advanced analytics, simplified administration and monitoring, efficient file search, and advanced gateway capabilities.

The main components of the pixstor solution include:

- Management servers—PowerEdge R650 servers provide UI and CLI access for managing and monitoring the pixstor solution. They also provide advanced search capabilities by compiling some metadata information in a database, which speeds up searches and prevents them from loading the metadata Network Shared Disks (NSDs).

- Storage module—The storage module is the main building block for the pixstor storage solution. Each module includes:

  - One pair of storage servers

  - One, two, or four backend storage arrays (ME4084) with optional capacity expansions (ME484)

  - NSDs contained in the backend storage arrays

- Storage server (SS)—The storage server is an essential storage module component. HA pairs of PowerEdge R750 servers (failover domains) are connected to ME4084 arrays using SAS 12 Gbps cables to manage data NSDs and provide access to NSDs using redundant high-speed network interfaces. For the standard pixstor configuration, these servers have a dual role: they act as data servers and also manage the metadata NSDs (using SSDs that replace all spare HDDs).

- Backend storage—Backend storage is a storage module component that stores the file system data (ME4084) shown in Figure 2.

- Capacity expansion storage—Optional PowerVault ME484 capacity expansions (in the lower dotted orange square in Figure 2) are connected behind ME4084 arrays using SAS 12 Gbps cables to expand the capacity of a storage module. For pixstor solutions, each ME4084 array is restricted to use only one ME484 expansion for performance and reliability (despite ME4084 arrays officially supporting up to three ME484 expansions).

- Network Shared Disks (NSDs)—NSDs are backend block devices (that is, RAID 6 LUNs from ME4 arrays or RAID 10 NVMe devices) that store data, metadata, or both. In the pixstor solution, file system data and metadata are stored in different NSDs. Data NSDs use spinning media (NLS SAS3 HDDs) or NVMe. Metadata NSDs use SSDs in the standard configuration and can use NVMe devices for high metadata demands. Metadata includes directories, filenames, permissions, timestamps, and the location of data in other NSDs.

- NVMe nodes—An NVMe node is the main component of the optional NVMe Tier Modules (in the dotted red squares in Figure 2). Pairs of 15G PowerEdge servers in HA (failover domains) provide a high-performance flash-based tier for the pixstor solution. For this release, two PowerEdge servers were benchmarked as part of the NVMe tier. You can use PowerEdge R650 servers with 10 NVMe direct attached drives, PowerEdge R750 servers with 16 NVMe direct attached drives, or PowerEdge R7525 servers with 24 NVMe direct attached drives. To maintain homogeneous performance across all the NVMe nodes, which allows data to be striped across the nodes in the tier, mixing different server models in the same NVMe tier is not supported. However, multiple NVMe tiers, each on homogeneous servers and each using different filesets as access points, are supported.

These PowerEdge servers support NVMe PCIe4 devices. Mixing NVMe PCIe4 devices with lower-performing PCIe3 devices is not recommended for the solution and is not supported within the same NVMe tier. Additional pairs of NVMe nodes can scale out performance and capacity for this NVMe tier. Capacity can be increased by selecting higher-capacity NVMe devices supported on the servers or by adding more pairs of servers.

An important difference from previous pixstor releases is that NVMesh is no longer a component of the solution. For HA purposes, an alternative based on GPFS replication was implemented for each NVMe server pair.

- High Demand Metadata Server (HDMD)—An HDMD server is a component of the optional HDMD module (in the dotted yellow square in Figure 2). Pairs of PowerEdge R650 NVMe servers with up to 10 NVMe devices each in HA (failover domains) manage the metadata NSDs and provide access using redundant high-speed network interfaces. Other servers supported as NVMe nodes can be used instead of the PowerEdge R650 server.

- Native client software—Native client software is installed on the clients to allow access to the file system. The file system must be mounted for access and appears as a single namespace.

- Gateway nodes—The optional gateway nodes (in the dotted green square in Figure 2) are PowerEdge R750 servers (the same hardware as ngenea nodes but using different software) in a Samba Clustered Trivial Database (CTDB) cluster. They provide NFS or SMB access to clients that do not have or cannot have the native client software installed.

- ngenea nodes—The optional ngenea nodes (in the dotted green square in Figure 2) are PowerEdge R750 servers (the same hardware as the gateway nodes but using different software) that offer access to external storage devices that can be used as another tier in the same single namespace (for example, object storage, cloud storage, or tape libraries) using enterprise protocols, including cloud protocols.

- Management switch—A PowerConnect N2248X-ON Gigabit Ethernet switch connects the different servers and storage arrays. It interconnects all the components and is used for administration of the solution.

- High-speed network switch—Mellanox QM8700 switches provide high-speed access using InfiniBand (IB) HDR and HDR100. For Ethernet solutions, the Mellanox SN3700 switch is used.
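The NVMe tier rules in the component list (nodes deployed in HA pairs, one server model per tier, no mixing of PCIe3 and PCIe4 devices in a tier, and a per-model drive count) lend themselves to a simple configuration check. The following is a minimal sketch under stated assumptions, not a pixstor tool: `NvmeNode` and `validate_nvme_tier` are hypothetical names, the drive counts listed above are treated as upper bounds, and each node is assumed to carry devices of a single PCIe generation.

```python
from dataclasses import dataclass
from typing import List

# Direct-attached NVMe drive counts per server model, taken from the
# component list. Treating them as upper bounds is an assumption.
MAX_NVME_DRIVES = {"R650": 10, "R750": 16, "R7525": 24}


@dataclass(frozen=True)
class NvmeNode:
    """Hypothetical description of one NVMe node (illustration only)."""
    model: str     # "R650", "R750", or "R7525"
    drives: int    # number of NVMe drives installed
    pcie_gen: int  # 3 or 4; assumes one generation per node


def validate_nvme_tier(nodes: List[NvmeNode]) -> List[str]:
    """Return rule violations for one NVMe tier; an empty list means it passes."""
    if not nodes:
        return ["tier has no nodes"]

    problems: List[str] = []

    # NVMe nodes are deployed as HA pairs (failover domains).
    if len(nodes) % 2 != 0:
        problems.append("nodes must be deployed in HA pairs")

    # Mixing server models in the same tier is not supported.
    models = {node.model for node in nodes}
    if len(models) > 1:
        problems.append(
            "mixed server models in one tier: " + ", ".join(sorted(models))
        )

    # Mixing PCIe3 and PCIe4 devices in the same tier is not supported.
    if len({node.pcie_gen for node in nodes}) > 1:
        problems.append("mixed PCIe3 and PCIe4 NVMe devices in one tier")

    # Per-node checks: known model and drive count within the assumed limit.
    for index, node in enumerate(nodes):
        limit = MAX_NVME_DRIVES.get(node.model)
        if limit is None:
            problems.append(f"node {index}: unsupported model {node.model}")
        elif not 1 <= node.drives <= limit:
            problems.append(
                f"node {index}: {node.drives} drives outside 1..{limit} "
                f"for {node.model}"
            )
    return problems
```

A tier made of two `NvmeNode("R650", 10, 4)` entries passes with an empty list. Because multiple homogeneous tiers are supported, a deployment with both R650 and R7525 servers would be checked by calling `validate_nvme_tier` once per tier rather than on the combined node list.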