
SAN-attached storage


Because an ME5 Series storage system has a limited number of host ports, attaching the storage system and hosts to a SAN allows more hosts to connect to a single storage system concurrently. While this can significantly improve the utilization of the storage system, administrators should carefully balance the total workload driven by all connected hosts against the resources available on the storage system.

Fibre Channel protocol

When connecting to the storage system through FC switches, use Fibre Channel zones to segment the fabric, restrict access, and isolate traffic. A zone contains paths between initiators (server HBAs) and targets (storage array front-end ports). Zones can be defined by the physical ports on the Fibre Channel switches (port zoning) or by the WWNs of the end devices (name zoning).

Zoning Fibre Channel switches for Linux hosts is essentially no different from zoning any other hosts to the ME5 Series storage system. The following list includes key zoning rules and recommendations.

- Use name zoning, which offers better flexibility because server HBAs and storage array ports are not tied to specific physical ports on the switch. See Identify Linux FC port WWNs and Identify ME5 host port FC WWNs for instructions on gathering the WWNs.

- Use the point-to-point protocol to connect to the fabric switches.

- The ME5 storage system and the Linux hosts should be connected to two different Fibre Channel switches (fabrics) for high availability and redundancy.

- When defining the zones, it is a best practice to use single-initiator (Linux host port), multiple-target (ME5 host ports) zones. For example, for each Fibre Channel HBA port on the server, create a zone that includes the server HBA port WWN and all the storage port WWNs on the storage system.

Note: Dell Technologies recommends using name zoning and creating single-initiator, multiple-target zones.
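The single-initiator, multiple-target rule can be sketched as a small planning helper that lays out the members of one zone per host HBA port. This is only a minimal sketch: the function name `plan_zone`, the zone name, and the output layout are hypothetical and are not the syntax of any particular switch CLI. The WWNs come from the example outputs in this section, written in colon-separated form, and the choice of ports A0 and B0 as the targets on this fabric is an assumption for illustration.

```shell
#!/bin/bash
# plan_zone ZONE_NAME INITIATOR_WWN TARGET_WWN...
# Prints the members of one zone: a single initiator plus every listed target.
# The output layout is illustrative only, not a switch command.
plan_zone() {
    if [ "$#" -lt 3 ]; then
        echo "usage: plan_zone ZONE_NAME INITIATOR_WWN TARGET_WWN..." >&2
        return 1
    fi
    local zone=$1 initiator=$2 target
    shift 2
    printf 'zone %s\n' "$zone"
    printf '  initiator %s\n' "$initiator"
    for target in "$@"; do
        printf '  target    %s\n' "$target"
    done
}

# Example: one zone for the first HBA port of a host (hypothetical zone name),
# with ME5 ports A0 and B0 assumed to be the targets on this fabric.
plan_zone linux01_hba1_me5 21:00:f4:e9:d4:56:13:92 \
    20:70:00:c0:ff:f0:3b:1e 24:70:00:c0:ff:f0:3b:1e
```

Repeating the call once per HBA port, each with the storage ports reachable on that port's fabric, gives the full zone plan to enter on the switches.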

Identify Linux FC port WWNs

The Linux host FC initiator WWNs are required for FC zoning and creating the host connection on the ME5 storage system.

Create and run the following bash shell script to identify the HBA WWNs on the Linux host. The fcshow.sh script is provided as an example to extract the host HBA information.

# cat fcshow.sh

#!/bin/bash
# Print WWPN, state, speed, and port type for each FC HBA port found in sysfs.
fmt="%-10s %-20s %-10s %-10s %-28s %-s\n"
printf "$fmt" "Host Port" "WWPN" "State" "Cur Speed" "Supported Speeds" "Port Type"
printf "%115s\n" "" | tr ' ' -
for host in /sys/class/fc_host/host*
do
    [ -e "$host" ] || continue    # no FC HBA ports present
    port_name=$(cat "$host/port_name")
    port_state=$(cat "$host/port_state")
    port_speed=$(cat "$host/speed")
    port_type=$(cat "$host/port_type")
    supported_speeds=$(cat "$host/supported_speeds")
    printf "$fmt" "${host##*/}" "$port_name" "$port_state" "$port_speed" "$supported_speeds" "$port_type"
done

# bash fcshow.sh

Host Port WWPN State Cur Speed Supported Speeds Port Type

-------------------------------------------------------------------------------------------------------------------

host11 0x2100f4e9d4561392 Online 16 Gbit 8 Gbit, 16 Gbit, 32 Gbit NPort (fabric via point-to-point)

host12 0x2100f4e9d4561393 Online 16 Gbit 8 Gbit, 16 Gbit, 32 Gbit NPort (fabric via point-to-point)
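The WWPNs reported by sysfs carry a `0x` prefix and no separators, while zoning tools commonly display WWNs as colon-separated bytes. The following helper is a minimal sketch of that conversion; the function name `format_wwn` is hypothetical, and whether a given switch expects the colon-separated form is an assumption to verify against the switch documentation.

```shell
#!/bin/bash
# format_wwn WWN
# Converts a sysfs-style WWN such as 0x2100f4e9d4561392 to colon-separated
# bytes. Accepts the value with or without the 0x prefix.
format_wwn() {
    local hex=${1#0x}    # drop the 0x prefix if present
    if ! printf '%s' "$hex" | grep -Eq '^[0-9A-Fa-f]{16}$'; then
        echo "format_wwn: expected 16 hex digits, got '$1'" >&2
        return 1
    fi
    # Append a colon after every byte, then remove the trailing colon.
    printf '%s\n' "$hex" | sed 's/../&:/g; s/:$//'
}

format_wwn 0x2100f4e9d4561392    # prints 21:00:f4:e9:d4:56:13:92
```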

Identify ME5 host port FC WWNs

Locate the ME5 host port WWN information in the PowerVault Manager or by running the interactive CLI command. See Dell PowerVault ME5 Series Storage System CLI Reference Guide on Dell.com/support for a detailed explanation of all available CLI commands.

Identify WWNs using PowerVault Manager

- In PowerVault Manager, click Settings > Peer Connections.

Figure 1. Display Port WWN information in PowerVault Manager

- Detailed information about each port can also be found under Maintenance > Hardware > Rear View. Click a specific port to show the details.

Figure 2. Display detailed port information in PowerVault Manager

Identify WWNs using interactive CLI commands

- Log in to the ME5 storage system controller using ssh.

# ssh manage@{controller-IP-address}

- Run the following CLI command to show the host-port information.

# show ports detail


Ports Media Target ID Status Speed(A) Speed(C) Health Reason Action

----------------------------------------------------------------------------------------

A0 FC(P) 207000c0fff03b1e Up 16Gb Auto OK

Topo(C) PID SFP Status Part Number Supported Speeds

------------------------------------------------------------------

PTP N/A OK FTLF8532P4BNV-E5 8G,16G,32G

A1 FC(P) 217000c0fff03b1e Up 16Gb Auto OK

Topo(C) PID SFP Status Part Number Supported Speeds

------------------------------------------------------------------

PTP N/A OK FTLF8532P4BNV-E5 8G,16G,32G

…… Truncated for brevity

Ports Media Target ID Status Speed(A) Speed(C) Health Reason Action

----------------------------------------------------------------------------------------

B0 FC(P) 247000c0fff03b1e Up 16Gb Auto OK

Topo(C) PID SFP Status Part Number Supported Speeds

------------------------------------------------------------------

PTP N/A OK FTLF8532P4BNV-E5 8G,16G,32G

B1 FC(P) 257000c0fff03b1e Up 16Gb Auto OK

Topo(C) PID SFP Status Part Number Supported Speeds

------------------------------------------------------------------

PTP N/A OK FTLF8532P4BNV-E5 8G,16G,32G

…… Truncated for brevity
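When the array has many ports, it can help to reduce the `show ports detail` output to just the port names and target WWNs needed for zoning. The following is a minimal sketch that assumes the CLI output has been saved or pasted as text and that FC port lines keep the column order shown above (port, media, target ID). The function name `list_fc_targets` is hypothetical.

```shell
#!/bin/bash
# list_fc_targets: reads "show ports detail" output on stdin and prints
# "<port> <target WWN>" for each FC host port line.
list_fc_targets() {
    # A port line starts with a controller letter and port number (A0, B1, ...)
    # followed by a media type beginning with "FC".
    awk '$1 ~ /^[A-Z][0-9]+$/ && $2 ~ /^FC/ { print $1, $3 }'
}

# Example using lines copied from the output above.
list_fc_targets <<'EOF'
Ports Media Target ID Status Speed(A) Speed(C) Health Reason Action
A0 FC(P) 207000c0fff03b1e Up 16Gb Auto OK
Topo(C) PID SFP Status Part Number Supported Speeds
PTP N/A OK FTLF8532P4BNV-E5 8G,16G,32G
B0 FC(P) 247000c0fff03b1e Up 16Gb Auto OK
EOF
```

For the sample input, the helper prints one line each for A0 and B0 with the corresponding target ID.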

iSCSI protocol

The following iSCSI network best practices are recommended:

- Use dual network switches to provide network-level redundancy.

- Use two dedicated iSCSI networks or VLANs to isolate iSCSI traffic between the hosts and the ME5 Series storage system from other public or application traffic.

- Use two dedicated HBA ports on the Linux host for HBA and path redundancy.

- Use 25 Gb network HBAs and switches for better performance.

- Use auto-negotiation on all interfaces so that they negotiate full duplex at the maximum speed of the connected port.

- Enable flow control on all servers and switch ports that handle iSCSI traffic. A minimum of receive (RX) flow control should be enabled for all switch interfaces used by the servers and storage systems for iSCSI traffic.

- Enable symmetric flow control for all server interfaces used for iSCSI traffic.

- Disable unicast storm control on iSCSI switches.

- Disable multicast at the switch level for all iSCSI VLANs, or enable multicast storm control where multicast cannot be disabled.

- If using jumbo frames for improved performance, make sure that every device on the transmission path has jumbo frames enabled and supports a maximum transmission unit (MTU) of 9000 bytes.

- If the switches do not support both flow control and jumbo frames simultaneously, Dell Technologies recommends choosing flow control over jumbo frames.

See Dell PowerVault ME5 Series Storage System Deployment Guide for additional iSCSI best-practice information.
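On the Linux host side, pause-frame flow control is typically inspected and set with `ethtool`. The following minimal sketch only prints the commands for review rather than running them. It assumes that `ethtool` is installed and that the NIC driver supports pause frames; the function name `flow_control_cmds` and the interface names `eth1` and `eth2` are examples.

```shell
#!/bin/bash
# flow_control_cmds IFACE...
# Prints (does not run) the ethtool commands that show the current pause
# settings and then enable symmetric (RX and TX) flow control per interface.
flow_control_cmds() {
    local iface
    for iface in "$@"; do
        printf 'ethtool -a %s\n' "$iface"                 # show pause settings
        printf 'ethtool -A %s rx on tx on\n' "$iface"     # enable RX and TX pause
    done
}

flow_control_cmds eth1 eth2
```

Settings made with `ethtool -A` do not survive a reboot on their own, so they normally also need to be recorded in the distribution's network configuration.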

Identify ME5 iSCSI host port IP addresses

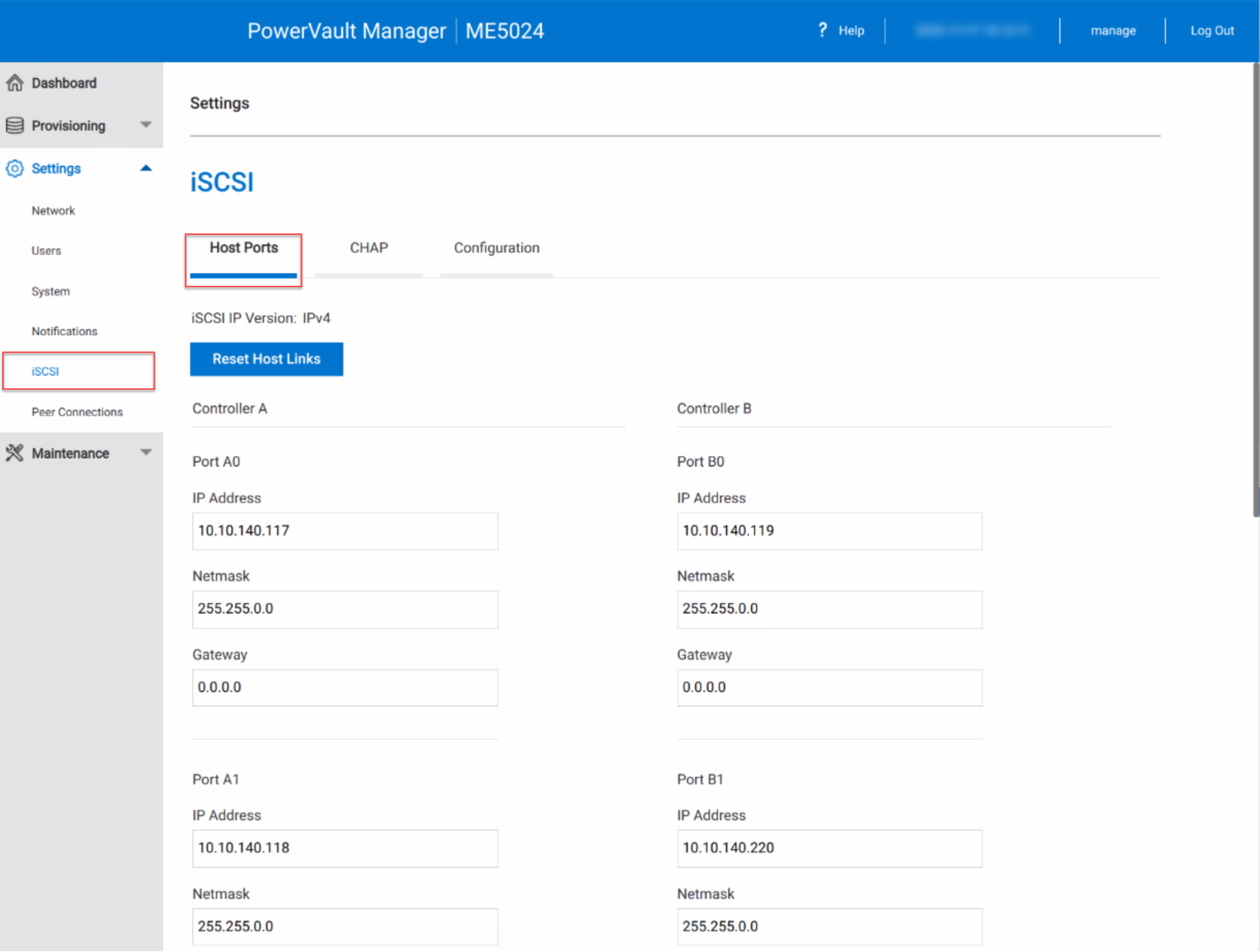

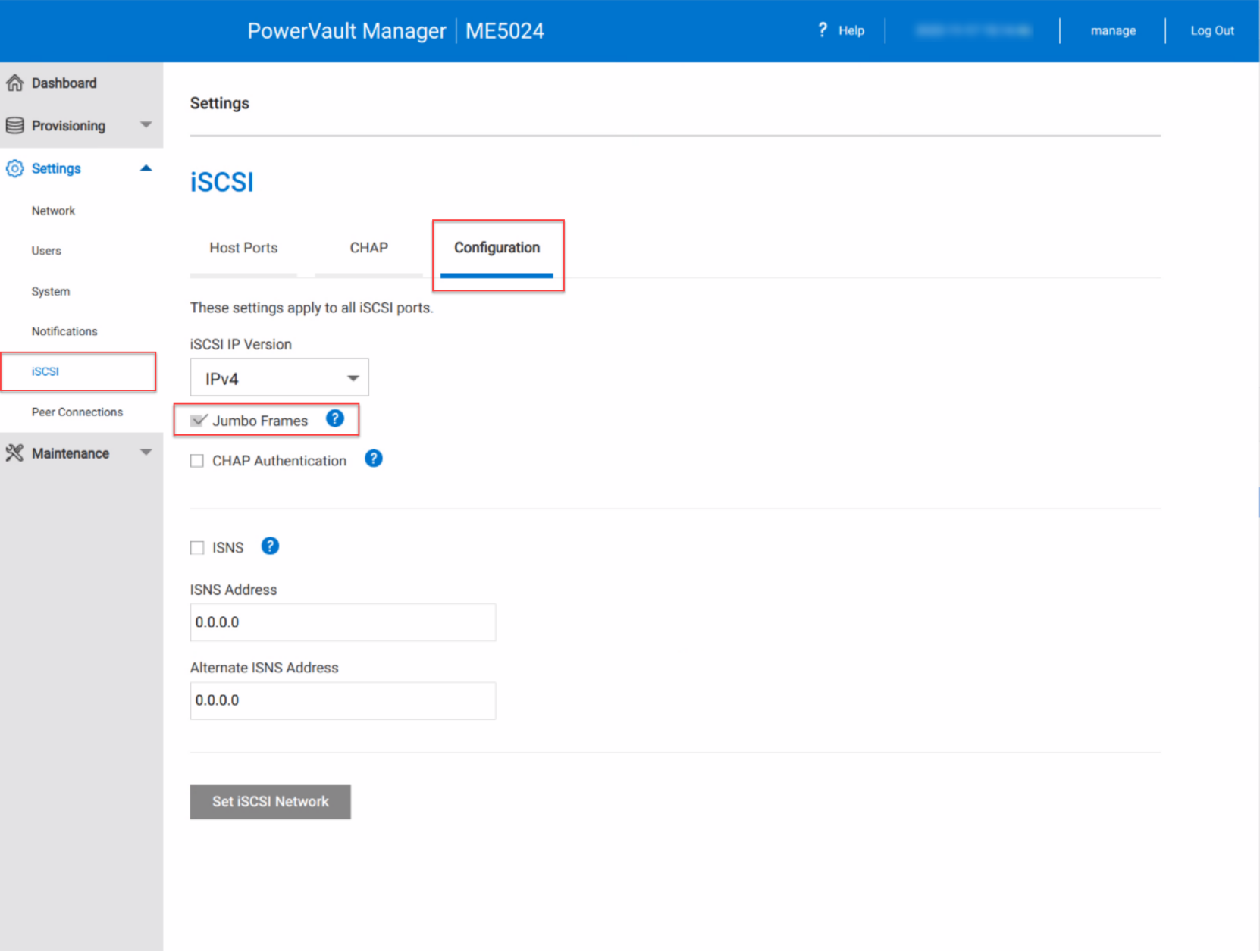

The iSCSI port network information can be found under Settings > iSCSI > Host Ports and Configuration in PowerVault Manager. See Figure 3 and Figure 4.

Figure 3. PowerVault Manager iSCSI Host Ports setting

Figure 4. PowerVault Manager iSCSI Jumbo Frames setting

Alternatively, use the ME5 interactive CLI command to display the port information.

- Log in to the ME5 storage system controller using ssh.

# ssh manage@{controller-IP-address}

- Run the following CLI command to show all host-port information. The truncated output below shows one iSCSI port on each controller.

# show ports detail

Ports Media Target ID Status Speed(A) Health Reason Action

---------------------------------------------------------------------------------------------------------

A0 iSCSI iqn.1988-11.com.dell:01.array.bc305bf03b18 Up 10Gb OK

Port Details

------------

IP Version: IPv4

IP Address: 10.10.xxx.xxx

Gateway: 0.0.0.0

Netmask: 255.255.0.0

MAC: 00:C0:FF:5C:B0:23

SFP Status: OK

Part Number: D0R73

10G Compliance: Special

Ethernet Compliance: N/A

Cable Technology: Passive

Cable Length: 2

…… Truncated for brevity

B0 iSCSI iqn.1988-11.com.dell:01.array.bc305bf03b18 Up 10Gb OK

Port Details

------------

IP Version: IPv4

IP Address: 10.10.xxx.xxx

Gateway: 0.0.0.0

Netmask: 255.255.0.0

MAC: 00:C0:FF:5C:AC:1F

SFP Status: OK

Part Number: D0R73

10G Compliance: Special

Ethernet Compliance: N/A

Cable Technology: Passive

Cable Length: 2

…… Truncated for brevity
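The port-to-IP mapping is what the later discovery step needs, so it can be convenient to pull just those two fields out of the `show ports detail` output. The following is a minimal sketch, assuming the output has been saved or pasted as text and keeps the layout shown above. The function name `list_iscsi_port_ips` is hypothetical, and the addresses in the example are documentation placeholders, not values from a real array.

```shell
#!/bin/bash
# list_iscsi_port_ips: reads "show ports detail" output on stdin and prints
# "<port> <IP address>" for each iSCSI host port.
list_iscsi_port_ips() {
    awk '
        # Port header line, for example: A0 iSCSI iqn... Up 10Gb OK
        $2 == "iSCSI" { port = $1 }
        # First "IP Address:" line that follows a port header
        $1 == "IP" && $2 == "Address:" && port != "" {
            print port, $3
            port = ""
        }
    '
}

# Example with placeholder addresses.
list_iscsi_port_ips <<'EOF'
A0 iSCSI iqn.1988-11.com.dell:01.array.bc305bf03b18 Up 10Gb OK
IP Version: IPv4
IP Address: 192.0.2.10
B0 iSCSI iqn.1988-11.com.dell:01.array.bc305bf03b18 Up 10Gb OK
IP Version: IPv4
IP Address: 192.0.2.11
EOF
```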

Configure Linux host network adapter

The Linux host requires at least one HBA port configured on the iSCSI network. For redundancy, configure dual iSCSI networks on the Linux host and the ME5 storage system. The following table shows an example of the redundant iSCSI network.

Table 1. Redundant iSCSI networks configuration

| iSCSI networks | Linux network interfaces | ME5 iSCSI ports |
|---|---|---|
| VLAN 1 | eth1 | A0, B0 |
| VLAN 2 | eth2 | A1, B1 |

If using jumbo frames, ensure that the Linux iSCSI network interfaces have the proper setting for the MTU size. Typically, the network interface configuration files are in the /etc/sysconfig/network-scripts or /etc/sysconfig/network directory. An example of the interface file is shown below.

DEVICE=eth1

STARTMODE=onboot

USERCONTROL=no

BOOTPROTO=static

NETMASK=255.255.0.0

IPADDR=10.10.xxx.xxx

MTU=9000

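To test the interface with the new setting, use ping with the -M do -s {packet size} arguments, which prohibit fragmentation and set the ICMP payload size. For a 9000-byte MTU, the largest payload is 8972 bytes (9000 minus 20 bytes of IPv4 header and 8 bytes of ICMP header). Replace {ME5-iSCSI-port-IP} with the IP address of an ME5 iSCSI port.

# ping -M do -s 8972 -c 3 {ME5-iSCSI-port-IP}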
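The payload size given to `ping -s` depends on the MTU under test, because the IPv4 and ICMP headers also have to fit in the frame. The following helper is a minimal sketch of that calculation for IPv4 without IP options; the function name `ping_payload_for_mtu` is hypothetical.

```shell
#!/bin/bash
# ping_payload_for_mtu MTU
# Prints the largest ICMP payload that fits in one unfragmented IPv4 packet:
# MTU minus 20 bytes of IPv4 header (no options) minus 8 bytes of ICMP header.
ping_payload_for_mtu() {
    local mtu=$1
    case $mtu in
        ''|*[!0-9]*)
            echo "ping_payload_for_mtu: MTU must be a positive integer" >&2
            return 1 ;;
    esac
    if [ "$mtu" -le 28 ]; then
        echo "ping_payload_for_mtu: MTU too small for IPv4 and ICMP headers" >&2
        return 1
    fi
    echo $(( 10#$mtu - 28 ))    # 10# forces base 10 even with leading zeros
}

ping_payload_for_mtu 9000    # prints 8972
ping_payload_for_mtu 1500    # prints 1472
```

If the ping with the computed size fails while a smaller size succeeds, some device on the path is likely not passing frames of the configured MTU.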

Configure Linux iSCSI initiator

To configure the host iSCSI initiator, use the following steps to make the recommended settings and activate the iSCSI transport.

- Install and enable iSCSI software on the Linux host.

Red Hat

# dnf install iscsi-initiator-utils

# systemctl enable iscsi --now

SUSE

# zypper install open-iscsi

# systemctl enable iscsi --now

- If the Linux host is created from a VM template that has the iSCSI software pre-installed, it inherits the same iSCSI qualified name (IQN) from the template. You must create a unique IQN for the host before creating connections to the ME5 storage system. To generate a new IQN, run the following command and replace the IQN in the /etc/iscsi/initiatorname.iscsi file.

# iscsi-iname

# vi /etc/iscsi/initiatorname.iscsi

InitiatorName={output of iscsi-iname}

- Edit the /etc/iscsi/iscsid.conf file and set the following values. These values dictate the failover timeout and queue depth. The values shown serve as a starting point and might require adjustment depending on the environment.

node.session.timeo.replacement_timeout = 5

node.session.cmds_max = 1024

node.session.queue_depth = 128

- Perform iSCSI discovery against one of the storage iSCSI port IPs. This step sets up the nodedb in the /var/lib/iscsi directory. See the section Identify ME5 iSCSI host port IP addresses to identify the iSCSI host port IPs on the storage system.

# iscsiadm -m discovery -t st -p {ME5_A0_IP} --discover

- Log in to the discovered iSCSI qualified name.

# iscsiadm -m node -L all

- Verify that the Linux host has a node record for every ME5 storage system iSCSI port. The following command lists the node records in the nodedb, and each line in the output represents one ME5 storage system iSCSI port. To list the sessions that are currently logged in, run iscsiadm -m session.

# iscsiadm -m node

{ME5-A0-IP}:3260,1 iqn.1988-11.com.dell:01.array.bc305bf03b18

{ME5-A1-IP}:3260,3 iqn.1988-11.com.dell:01.array.bc305bf03b18

{ME5-B0-IP}:3260,2 iqn.1988-11.com.dell:01.array.bc305bf03b18

{ME5-B1-IP}:3260,4 iqn.1988-11.com.dell:01.array.bc305bf03b18
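Two of the steps above lend themselves to scripting when several hosts are configured: setting the iscsid.conf values and checking that every expected portal appears in the node list. The following is a minimal sketch under stated assumptions: the function names `set_iscsid_option` and `missing_portals` are hypothetical, the values are plain numbers as in the example settings, and the portals use the default port 3260 shown in the output above.

```shell
#!/bin/bash
# set_iscsid_option FILE KEY VALUE
# Sets "KEY = VALUE" in an iscsid.conf-style file: rewrites every existing
# line for KEY (commented out or not), or appends a line if none exists.
set_iscsid_option() {
    local file=$1 key=$2 value=$3 key_re tmp
    key_re=$(printf '%s' "$key" | sed 's/\./\\./g')    # match dots literally
    if grep -Eq "^[#[:space:]]*${key_re}[[:space:]]*=" "$file"; then
        tmp=$(mktemp) || return 1
        sed -E "s|^[#[:space:]]*${key_re}[[:space:]]*=.*|${key} = ${value}|" \
            "$file" > "$tmp" && cat "$tmp" > "$file"    # keeps file ownership
        rm -f "$tmp"
    else
        printf '%s = %s\n' "$key" "$value" >> "$file"
    fi
}

# missing_portals EXPECTED_IP...
# Reads "iscsiadm -m node" output on stdin and prints each expected portal IP
# that has no node record. Returns non-zero if any portal is missing.
missing_portals() {
    local records ip rc=0
    records=$(cat)
    for ip in "$@"; do
        # A record line starts with "<ip>:3260,<portal group tag>".
        if ! printf '%s\n' "$records" |
            awk -v p="${ip}:3260," 'index($0, p) == 1 { found = 1 }
                                    END { exit !found }'; then
            echo "$ip"
            rc=1
        fi
    done
    return $rc
}
```

A typical use would be `set_iscsid_option /etc/iscsi/iscsid.conf node.session.queue_depth 128` run as root, followed later by `iscsiadm -m node | missing_portals` with the four ME5 port IPs as arguments. Working on a copy of iscsid.conf first is a sensible precaution.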

Linux TCP settings and other tuning considerations

Kernel parameters that can be tuned for performance are found in the /proc/sys/net/core and /proc/sys/net/ipv4 directories. Like most other modern operating system platforms, Linux can efficiently auto-tune TCP buffers. However, by default, some settings such as buffer size are conservatively low. Experimenting with the following kernel parameters can lead to improved network performance, and subsequently improve iSCSI performance.

When you have determined the optimal values, set them permanently by entering them in the /etc/sysctl.conf file, and then either reboot the server or run sysctl -p to load the file immediately.

Table 2. Linux system parameters

| Parameter | Value | Description |
|---|---|---|
| net.core.rmem_max | 134217728 | Maximum receive buffer size used by each TCP socket |
| net.core.wmem_max | 134217728 | Maximum send buffer size used by each TCP socket |
| net.core.netdev_max_backlog | 300000 | Maximum number of packets queued on the input side when an interface receives packets faster than the kernel can process them |
| net.ipv4.tcp_rmem | 4096 87380 134217728 | Auto-tuned TCP buffer limits: min, default, and max size of the receive buffer used by each TCP socket |
| net.ipv4.tcp_wmem | 4096 65536 134217728 | Auto-tuned TCP buffer limits: min, default, and max size of the send buffer used by each TCP socket |
| net.ipv4.tcp_moderate_rcvbuf | 1 | Enables auto-tuning of the TCP receive buffer size |
| net.bridge.bridge-nf-call-iptables | 0 | netfilter: bridged IPv4 traffic is not passed to iptables |
| net.bridge.bridge-nf-call-arptables | 0 | netfilter: bridged ARP traffic is not passed to arptables |
| net.bridge.bridge-nf-call-ip6tables | 0 | netfilter: bridged IPv6 traffic is not passed to ip6tables |
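The Table 2 values can be kept together in one file so that they are easy to review and revert. The following minimal sketch prints them in sysctl.conf syntax; the function name `emit_iscsi_sysctl` is hypothetical, and the values are the starting points from the table, not tuned results.

```shell
#!/bin/bash
# emit_iscsi_sysctl: prints the Table 2 starting values in sysctl.conf syntax.
emit_iscsi_sysctl() {
    cat <<'EOF'
net.core.rmem_max = 134217728
net.core.wmem_max = 134217728
net.core.netdev_max_backlog = 300000
net.ipv4.tcp_rmem = 4096 87380 134217728
net.ipv4.tcp_wmem = 4096 65536 134217728
net.ipv4.tcp_moderate_rcvbuf = 1
net.bridge.bridge-nf-call-iptables = 0
net.bridge.bridge-nf-call-arptables = 0
net.bridge.bridge-nf-call-ip6tables = 0
EOF
}

emit_iscsi_sysctl
```

One option is to redirect the output into a file under /etc/sysctl.d (the file name is up to the administrator) and load it with `sysctl --system` as root. The net.bridge keys exist only while the bridge netfilter kernel module is loaded, so those three lines can report errors on hosts that do not use bridging.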

Configure hosts in PowerVault Manager

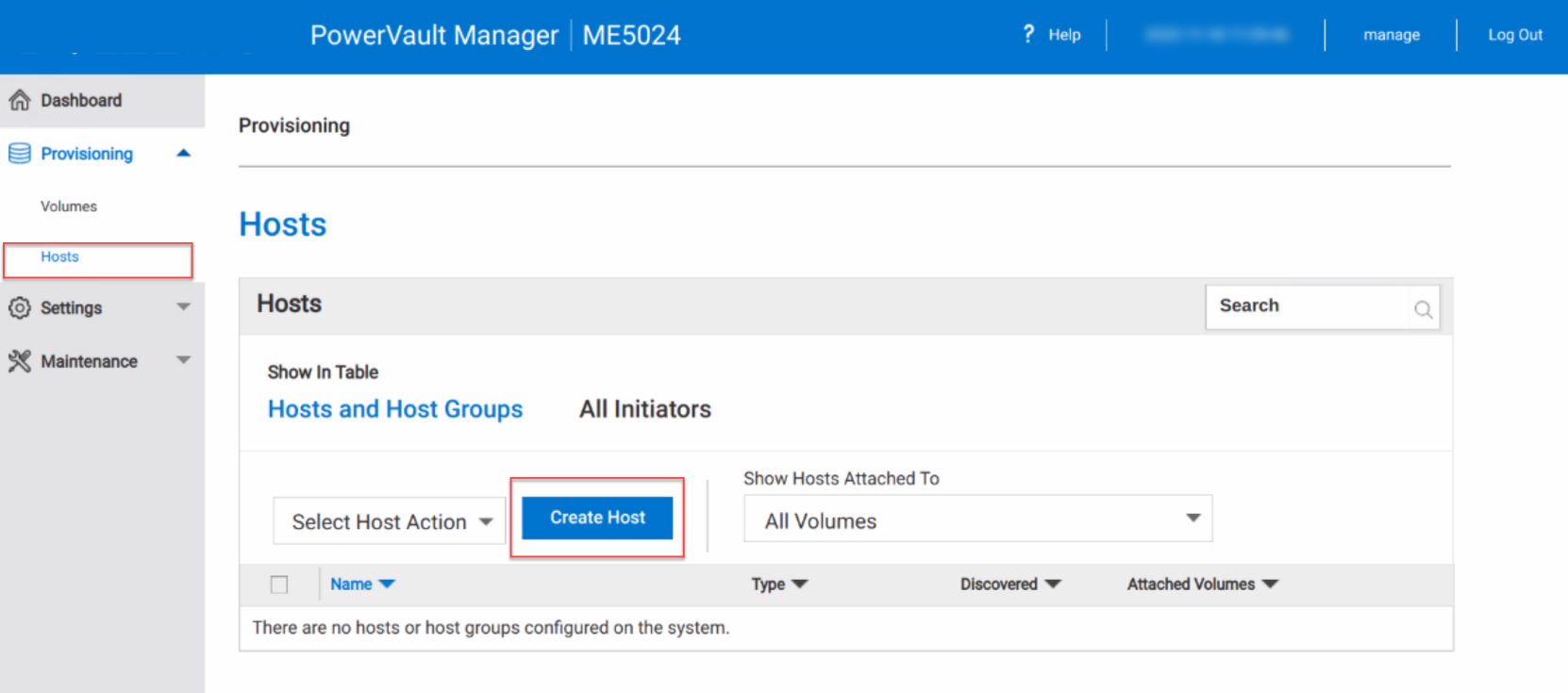

After configuring the FC zones or iSCSI initiators, add the Linux hosts on the ME5 storage system.

- In PowerVault Manager, go to Provisioning > Hosts. The Create Host button is available for selection under the Hosts table.

Note: If the Create Host button remains gray instead of blue, it means that the storage system does not have any visible connections to the host initiators. Verify the host connections, FC zones, iSCSI initiator configuration, and switch configuration and correct the issues.

Figure 5. Create Host in PowerVault Manager

- In the Create Host dialog window, all available host initiators are listed for selection. Confirm and select the Linux host initiators to be added. See the sections Identify Linux FC port WWNs and Configure Linux iSCSI initiator for how to collect the host initiator information.

Figure 6. Create Host from iSCSI initiators

Figure 7. Create Host from FC initiators

- Follow the rest of the wizard to confirm the selection and complete the operation.