vVol storage and virtual machine compute

For optimal virtualization performance, it is important to account for where a virtual machine’s compute and storage are placed. This section provides recommendations for using PowerStore storage with external compute and with internal compute (AppsON).

vSphere Virtual Volumes storage with external compute

For optimal performance, keep all vVols for a VM together on a single appliance. When provisioning a new VM, PowerStore groups all its vVols onto the same appliance. In a multi-appliance cluster, the appliance that has the highest amount of free capacity is selected. This selection is maintained even if the provisioning results in a capacity imbalance between appliances afterwards. If all vVols for a VM cannot fit on a single appliance due to space, system limits, or health issues, the remaining vVols are provisioned onto the appliance with the next-highest amount of free capacity.
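The placement behavior described above can be sketched as a simple greedy routine. This is only an illustrative model, not PowerStore's actual resource balancer: the `Appliance` class, the `place_vvols` function, and the capacity figures are hypothetical, and the sketch considers free space only (it ignores system limits and health checks).

```python
from dataclasses import dataclass


@dataclass
class Appliance:
    name: str        # hypothetical appliance name
    free_gb: float   # hypothetical free capacity


def place_vvols(vvol_sizes_gb, appliances):
    """Return the appliance name chosen for each vVol, in order."""
    # Rank appliances from most to least free capacity.
    ranked = sorted(appliances, key=lambda a: a.free_gb, reverse=True)
    free = {a.name: a.free_gb for a in ranked}
    placement = []
    current = 0
    for size in vvol_sizes_gb:
        # Spill to the next-ranked appliance only when the current one
        # cannot hold this vVol.
        while current < len(ranked) and free[ranked[current].name] < size:
            current += 1
        if current == len(ranked):
            raise ValueError("no appliance can hold the remaining vVols")
        name = ranked[current].name
        free[name] -= size
        placement.append(name)
    return placement


cluster = [Appliance("appliance-1", 500.0), Appliance("appliance-2", 800.0)]
print(place_vvols([100, 200, 50], cluster))   # all vVols fit on one appliance
print(place_vvols([600, 300, 100], cluster))  # remaining vVols spill over
```

In the second call, the first vVol lands on the appliance with the most free capacity and the rest spill to the next one, which is the split situation that a later vVol migration would consolidate.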

When provisioning a VM from a template or cloning an existing VM, PowerStore places the new vVols onto the same appliance as the source template or VM. This action enables the new VM to take advantage of data reduction to increase storage efficiency. For VM templates that are frequently deployed, it is recommended to create one template per appliance and evenly distribute VMs between appliances by selecting the appropriate template.
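One way to follow the one-template-per-appliance recommendation is to pick, for each deployment, the template on the appliance that currently holds the fewest VMs. The sketch below assumes hypothetical template names and VM counts that an administrator would track separately; it does not call any PowerStore or vSphere API.

```python
from collections import Counter


def pick_template(templates_by_appliance, vm_counts):
    """Return (appliance, template) for the least-populated appliance."""
    # Ties are broken alphabetically by appliance name.
    appliance = min(
        templates_by_appliance,
        key=lambda a: (vm_counts.get(a, 0), a),
    )
    return appliance, templates_by_appliance[appliance]


# Hypothetical inventory: one copy of the template per appliance.
templates = {"appliance-1": "tmpl-a1", "appliance-2": "tmpl-a2"}
counts = Counter({"appliance-1": 3, "appliance-2": 1})

for _ in range(4):
    appliance, template = pick_template(templates, counts)
    counts[appliance] += 1  # the new VM's vVols follow the template

print(dict(counts))
```

Because a clone's vVols are placed on the same appliance as the source, choosing the template effectively chooses the storage appliance.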

When taking a snapshot of an existing VM, new vVols are created to store the snapshot data. These new vVols are stored on the same appliance as the source vVols. In situations where the source vVols are spread across multiple appliances, the vVols created by the snapshot operation also become spread. vVol migrations can be used to consolidate a VM’s vVols onto the same appliance.

In this configuration, PowerStore provides storage and an external hypervisor provides compute. The external hypervisor connects to the storage system through an IP or FC network. Because I/O from the external hypervisor always traverses the SAN to reach the storage system, no further considerations are needed for compute placement.

vVol storage with internal compute (AppsON)

On PowerStore X model appliances, AppsON enables customers to run their applications using the internal ESXi nodes. When using AppsON, using the same appliance for a virtual machine’s storage and compute minimizes latency and network traffic. In a single-appliance cluster, compute and storage for AppsON VMs are always collocated, and no further considerations are needed for compute placement.

When a multi-appliance PowerStore X cluster is configured, an ESXi cluster containing all the PowerStore X model ESXi nodes is also created in vSphere. From a vSphere perspective, each PowerStore X model ESXi node is weighted equally, so it is possible for a VM’s storage and compute to be separated. This configuration is not ideal because it increases latency and network traffic. For example, if a virtual machine’s compute is running on Node A on appliance-1 but its storage resides on appliance-2, I/O must traverse the top-of-rack (TOR) switches for the compute node to communicate with the storage appliance.

For optimal performance, it is recommended to keep all vVols for a VM together on a single appliance. When provisioning a new VM, PowerStore groups all its vVols onto the same appliance. This grouping is maintained even if the provisioning results in a capacity imbalance between appliances afterwards. If all vVols for a VM cannot fit on a single appliance due to space, system limits, or health issues, the remaining vVols are provisioned onto the appliance with the next-highest amount of free capacity.

When provisioning a new AppsON VM, the administrator can control the vVol storage placement. When deploying a VM to the vSphere cluster, the VM’s vVols are placed on the appliance with the highest amount of free capacity. When deploying a VM to a specific host within the vSphere cluster, its vVols are stored on the appliance to which the node belongs.
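The two deployment targets lead to different storage placement, which the following sketch models. The function name, host names, and capacity values are hypothetical; the logic only restates the rule above and is not taken from PowerStore code.

```python
def appson_storage_appliance(target, node_to_appliance, free_gb):
    """Return the appliance expected to hold a new AppsON VM's vVols."""
    if target in node_to_appliance:
        # Deployed to a specific host: storage follows that node's appliance.
        return node_to_appliance[target]
    # Deployed to the vSphere cluster: appliance with the most free capacity.
    return max(free_gb, key=free_gb.get)


# Hypothetical two-appliance cluster with two internal ESXi nodes each.
nodes = {
    "esxi-a1-node-a": "appliance-1",
    "esxi-a1-node-b": "appliance-1",
    "esxi-a2-node-a": "appliance-2",
    "esxi-a2-node-b": "appliance-2",
}
free = {"appliance-1": 500.0, "appliance-2": 800.0}

print(appson_storage_appliance("appson-cluster", nodes, free))
print(appson_storage_appliance("esxi-a1-node-b", nodes, free))
```

Deploying to a specific host is therefore the way to steer storage toward a particular appliance, even when another appliance has more free capacity.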

When deploying a new AppsON VM using a template or cloning an existing VM, PowerStore places the new vSphere Virtual Volumes onto the same appliance as the source template or VM. This action enables the new VM to take advantage of data reduction to increase storage efficiency. For VM templates that are frequently deployed, it is recommended to create one template per appliance and evenly distribute VMs between appliances by selecting the appropriate template.

Regardless of how the VM is deployed, the compute node is always determined by VMware DRS when the VM is initially powered on. If DRS chooses a compute node that is not local to the storage appliance that holds the VM’s vVols, compute and storage are not collocated. DRS can also move a virtual machine later, which can separate its compute from its storage after initial placement.

When taking a snapshot of an existing AppsON VM, new vVols are created to store the snapshot data. These new vVols are stored on the same appliance as the source vVols. In situations where the source vVols are spread across multiple appliances, the vVols created by the snapshot operation also become spread. vVol migrations can be used to consolidate a VM’s vVols onto the same appliance.

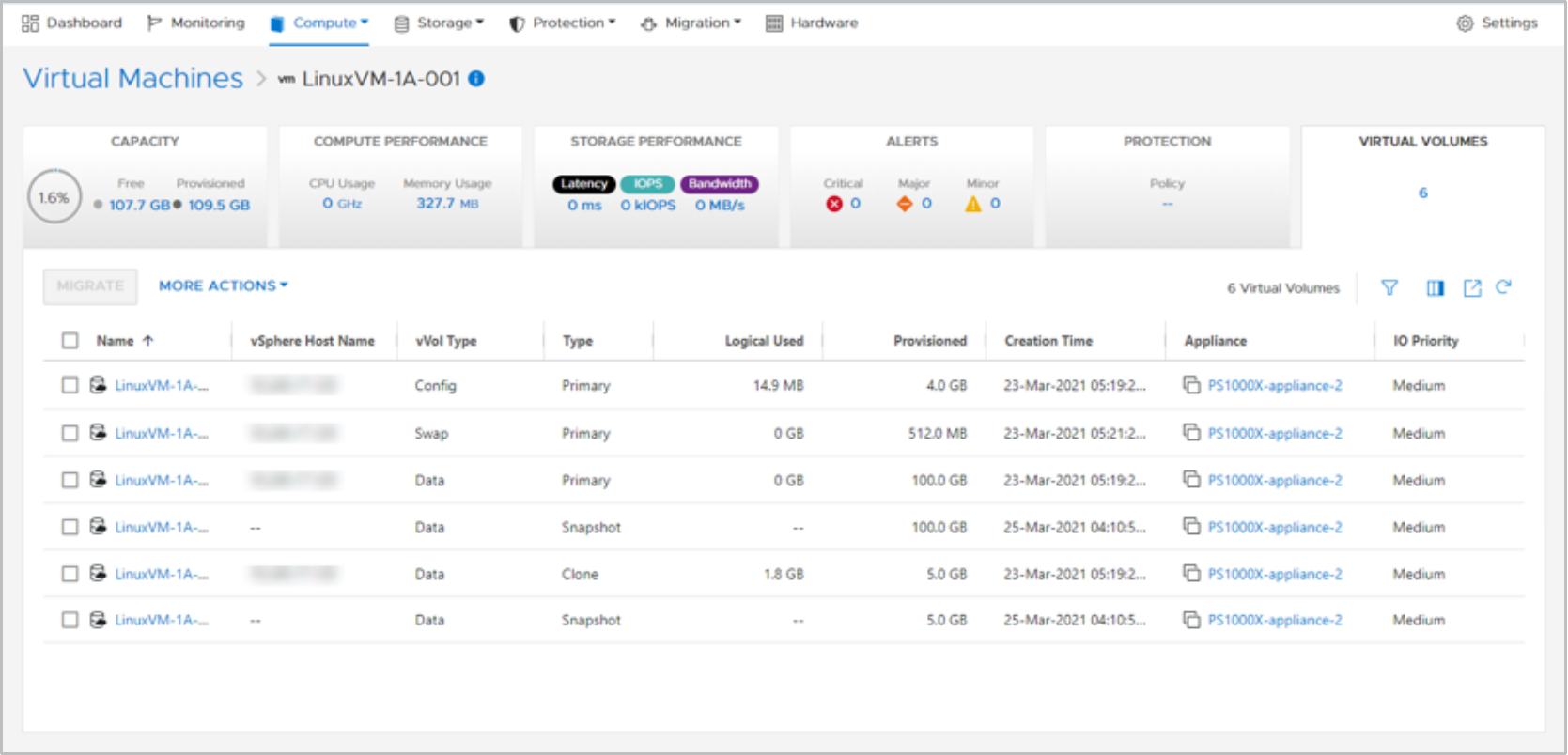

To confirm compute and storage collocation for an AppsON virtual machine, go to Compute > Virtual Machines > Virtual Machine details > Virtual Volumes. The vSphere Host Name column displays the vSphere name of the compute node for that vVol. The Appliance column displays the name of the storage appliance where that vVol is being stored. The following figure displays an optimal configuration:

Figure 25. Virtual Volumes page for a virtual machine

For an optimal configuration, store all vVols for a specific virtual machine on a single appliance. Also, the compute node for these vVols should be one of the two nodes of the appliance that is being used for storage. If there are any discrepancies, vSphere vMotion and PowerStore vVol migration can be used to move compute or storage to create an aligned configuration.
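The alignment check described above can be expressed as a small routine over the rows of the Virtual Volumes page. This is a sketch under assumptions: the row dictionaries, their keys, and the host and appliance names are hypothetical stand-ins for values read manually from the vSphere Host Name and Appliance columns.

```python
def collocation_issues(vvol_rows, appliance_nodes):
    """Return a list of human-readable alignment problems (empty if aligned)."""
    issues = []
    appliances = {row["appliance"] for row in vvol_rows}
    if len(appliances) > 1:
        issues.append(
            "vVols span multiple appliances: " + ", ".join(sorted(appliances))
        )
    for row in vvol_rows:
        # The compute node should be one of the two nodes of the
        # appliance that stores this vVol.
        local_nodes = appliance_nodes.get(row["appliance"], set())
        if row["host"] not in local_nodes:
            issues.append(
                f'{row["name"]}: compute on {row["host"]} '
                f'is not local to {row["appliance"]}'
            )
    return issues


appliance_nodes = {
    "appliance-1": {"esxi-a1-node-a", "esxi-a1-node-b"},
    "appliance-2": {"esxi-a2-node-a", "esxi-a2-node-b"},
}
aligned = [
    {"name": "config", "host": "esxi-a1-node-a", "appliance": "appliance-1"},
    {"name": "data", "host": "esxi-a1-node-a", "appliance": "appliance-1"},
]
split = [
    {"name": "config", "host": "esxi-a1-node-a", "appliance": "appliance-1"},
    {"name": "data", "host": "esxi-a1-node-a", "appliance": "appliance-2"},
]
print(collocation_issues(aligned, appliance_nodes))
print(collocation_issues(split, appliance_nodes))
```

An empty list corresponds to the optimal configuration; any reported issue points to either a vMotion (to move compute) or a vVol migration (to move storage).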

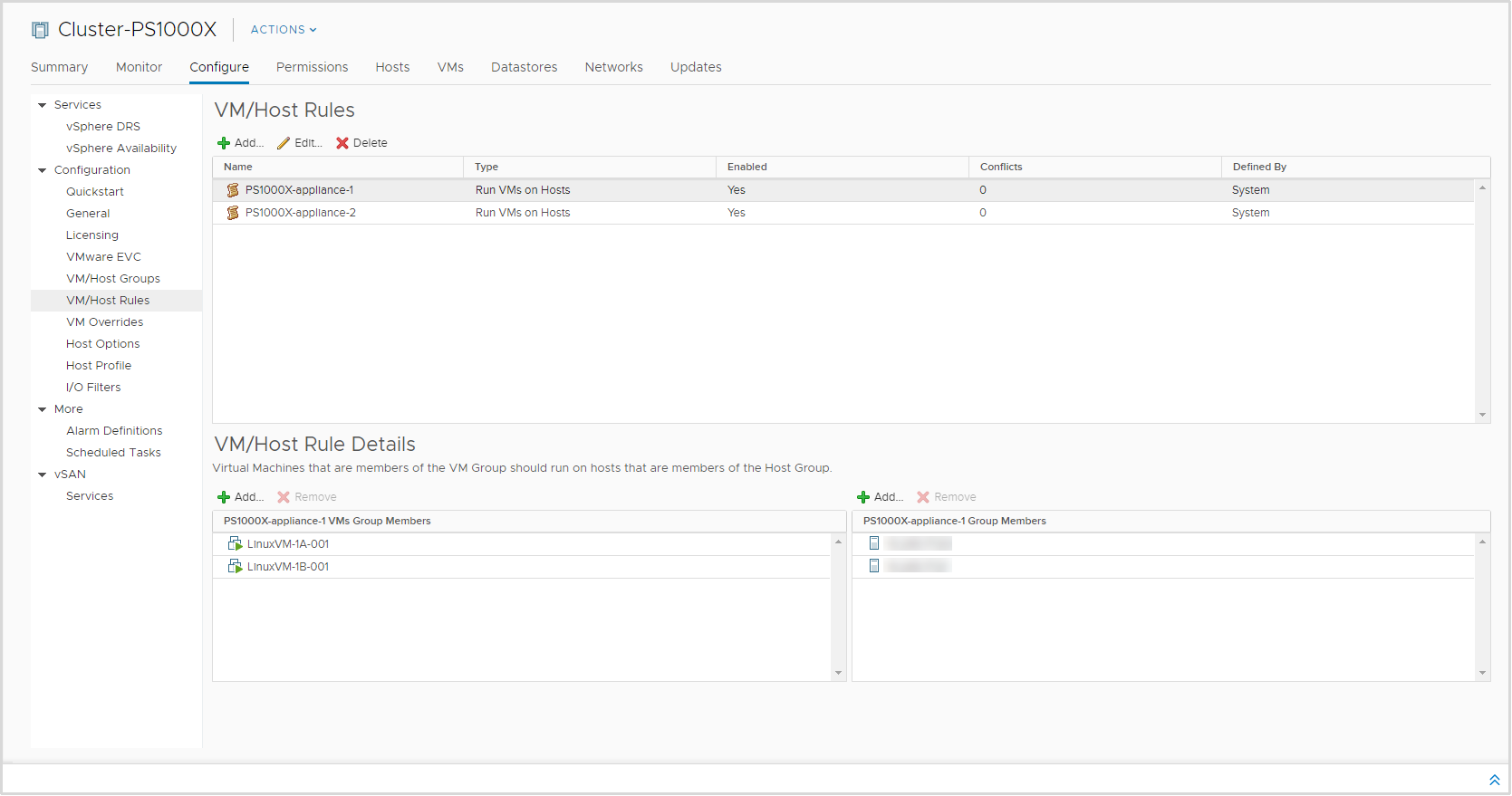

Starting with PowerStoreOS 2.0, PowerStore automatically creates a host group, VM group, and VM/host affinity rule that ties them together in VMware vSphere. The host group contains the two internal ESXi hosts, and one host group is created per appliance. The VM group is initially empty, and one VM group is created per appliance.

Administrators should manually add the relevant virtual machines into the VM group based on where their storage resides. The affinity rule states that the VMs in the group should run on the hosts of the specified appliance. This rule ensures that each VM runs on a compute node that has direct local access to its storage. These groups and rules are automatically added and removed as appliances are added to and removed from the cluster.

To manage the affinity rules, go to Cluster > Configure > VM/Host Rules in the vSphere Web Client. When a host group is selected, the two internal ESXi nodes for that appliance are displayed in the members list, as shown in Figure 26. Any VMs that have storage residing on this appliance can be added in the VM group, as shown in the following figure. If VM storage is migrated to another appliance within the cluster, update these rules to reflect the new configuration.
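Because VM group membership is maintained manually, it can help to compute which changes are needed after a storage migration. The sketch below works on plain dictionaries with hypothetical VM and appliance names; it does not talk to vSphere, and applying the resulting changes in the VM/Host Rules page is left to the administrator.

```python
def desired_vm_groups(vm_to_appliance):
    """Group VM names by the appliance that holds their storage."""
    groups = {}
    for vm, appliance in sorted(vm_to_appliance.items()):
        groups.setdefault(appliance, []).append(vm)
    return groups


def group_changes(current, desired):
    """Return (action, vm, appliance) tuples that turn current into desired."""
    changes = []
    for appliance in sorted(set(current) | set(desired)):
        have = set(current.get(appliance, []))
        want = set(desired.get(appliance, []))
        for vm in sorted(want - have):
            changes.append(("add", vm, appliance))
        for vm in sorted(have - want):
            changes.append(("remove", vm, appliance))
    return changes


# Hypothetical state: vm-b's storage was migrated to appliance-2,
# but it is still listed in the VM group for appliance-1.
current_groups = {"appliance-1": ["vm-a", "vm-b"], "appliance-2": []}
storage = {"vm-a": "appliance-1", "vm-b": "appliance-2"}

for change in group_changes(current_groups, desired_vm_groups(storage)):
    print(change)
```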

Figure 26. Host/VM Rules