
Invoking H2O Driverless AI from cnvrg.io MLOps Platform


As shown in Figure 1, AutoML enables automatic model building. However, it does not cover the complete life cycle of a machine learning application, and it does not support every scenario and use case. For example, AutoML supports only supervised and unsupervised learning; it does not support reinforcement learning.

To build models for such complex use cases and to maintain a complete life cycle of AI models, enterprises rely on an MLOps platform. MLOps is a defined process and life cycle for machine learning data, models, and code. The MLOps life cycle begins with data extraction and preparation, in which the dataset is massaged into a structure that can effectively feed the model. MLOps platforms provide constant monitoring to ensure that the process is running smoothly. MLOps enables data scientists to build complex pipelines that allow for continuous learning. Automatic retraining can be implemented to help adjust the deployed process and improve the accuracy with each iteration.

Enterprises that have multiple ongoing AI projects to support progress towards their business intelligence goals can use both MLOps and AutoML platforms to their respective strengths. Dell Technologies has worked closely with cnvrg.io to deliver MLOps for AI and machine learning adopters through a jointly engineered and tested solution to help organizations capitalize on the benefits of MLOps for machine learning and AI workloads. The Optimize Machine Learning Through MLOps with Dell Technologies and cnvrg.io White Paper and Design Guide provide guidance for architecting, deploying, and operating MLOps in the data center.

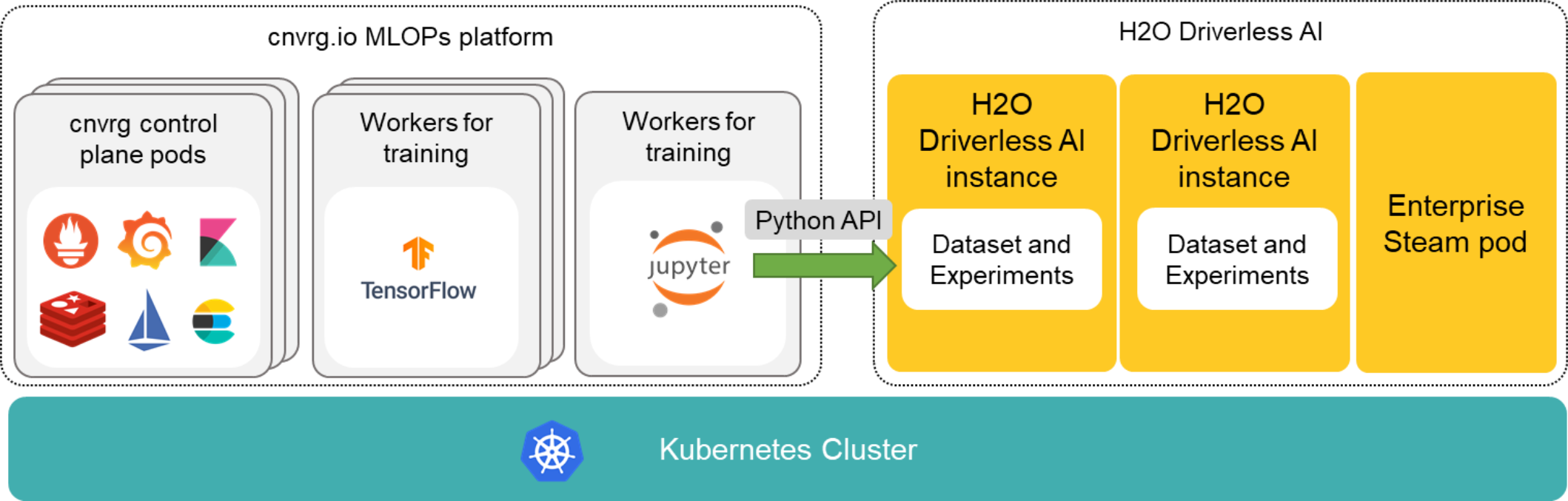

Data scientists can use the H2O Driverless AI Python API to access its capabilities from an MLOps platform, such as cnvrg.io, as shown in the following figure. Both cnvrg.io and H2O Driverless AI are deployed on Kubernetes. The cnvrg.io platform consists of control plane pods and worker pods. The control plane pods are the pods in the cnvrg.io management plane, such as the application server and Sidekiq. Machine learning workloads run as worker pods (or workers). A cnvrg.io workspace is an interactive environment for developing and running code. You can run popular notebooks, interactive development environments, Python scripts, and more. From this workspace, you can invoke Python APIs to access H2O Driverless AI.

Figure 4. Invoking an H2O Driverless AI instance from a cnvrg.io workspace
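As an illustration of invoking the Python API from a workspace, the following minimal sketch opens a client session. It assumes that the `driverlessai` Python client package is installed in the workspace image and that the H2O Driverless AI service is reachable from the worker pod. The environment variable names (`DAI_ADDRESS`, `DAI_USERNAME`, `DAI_PASSWORD`) and the helper names are hypothetical and chosen only for this example.

```python
import os


def connection_settings(env=os.environ):
    """Collect connection settings from environment variables.

    DAI_ADDRESS, DAI_USERNAME, and DAI_PASSWORD are hypothetical variable
    names used only in this sketch. A missing variable raises KeyError.
    """
    return {
        "address": env["DAI_ADDRESS"],
        "username": env["DAI_USERNAME"],
        "password": env["DAI_PASSWORD"],
    }


def connect(settings):
    """Open a client session against a running H2O Driverless AI instance."""
    # Imported lazily so that connection_settings() can be used (and tested)
    # even where the driverlessai package is not installed.
    import driverlessai

    return driverlessai.Client(
        address=settings["address"],
        username=settings["username"],
        password=settings["password"],
    )
```

In a workspace notebook, `dai = connect(connection_settings())` would return the client object that later calls use. Keeping credentials in environment variables rather than in the notebook is a design choice of this sketch, not a requirement of either platform.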

Data scientists can perform complex data cleaning and preparation in cnvrg.io. The data can then be made available to an H2O Driverless AI instance through an NFS share. Using the APIs, data scientists can perform automated feature engineering and model development, and then evaluate the performance of the models that H2O Driverless AI generates. If that performance does not satisfy them, or if H2O Driverless AI does not support their use case, they can proceed with developing their own models in cnvrg.io.
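The workflow above can be sketched as follows. This is a minimal example under stated assumptions: `dai` is a connected `driverlessai` client object, the prepared file sits at a path that the H2O Driverless AI server can read (for example, on the NFS share), and the dictionary returned by the experiment's `metrics()` call contains a `val_score` entry. The function names and the acceptance threshold are hypothetical.

```python
def train_on_shared_dataset(dai, dataset_path, target_column,
                            task="classification"):
    """Import a prepared dataset and run a Driverless AI experiment on it.

    dataset_path is the file location as seen by the Driverless AI server,
    for example a path on the NFS share (assumption of this sketch).
    """
    # data_source="file" asks the server to read from its own file system
    # instead of uploading the file from the workspace.
    dataset = dai.datasets.create(data=dataset_path, data_source="file")
    # create() waits for the experiment to finish before returning.
    return dai.experiments.create(
        train_dataset=dataset,
        target_column=target_column,
        task=task,
    )


def is_good_enough(metrics, threshold, higher_is_better=True):
    """Decide whether the validation score meets a chosen threshold.

    metrics is assumed to be a dict with a "val_score" entry. Some scorers
    (such as AUC) improve upward, others (such as RMSE) improve downward.
    """
    score = metrics["val_score"]
    return score >= threshold if higher_is_better else score <= threshold
```

A data scientist might call `is_good_enough(experiment.metrics(), 0.9)` and fall back to manual model development in cnvrg.io when it returns `False`.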

Data practitioners can also push a model generated by H2O Driverless AI to production in cnvrg.io. Using the APIs, they can download all the artifacts created by the experiment. Artifacts include scoring pipelines, autodocumentation, prediction CSVs, and logs. The scoring pipelines can then be packaged as a Docker container and hosted in the cnvrg.io platform. The container exposes a REST API that can be used for predictions. For instructions, see Appendix A.
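The two API-driven steps in this paragraph can be sketched as follows. The first function assumes `experiment` is a finished experiment object from the `driverlessai` client. The second only builds an HTTP request for the hosted container: the endpoint URL and the `{"row": ...}` payload shape are hypothetical placeholders, because the actual request format depends on how the scoring pipeline is packaged (see Appendix A).

```python
import json
import urllib.request


def download_artifacts(experiment, dst_dir):
    """Download every artifact the finished experiment offers into dst_dir."""
    # With no `only` filter, the client fetches all available artifacts and
    # returns a mapping of artifact name to local file path.
    return experiment.artifacts.download(dst_dir=dst_dir, overwrite=True)


def build_scoring_request(endpoint_url, row):
    """Build a JSON POST request for a prediction endpoint.

    The payload layout {"row": {...}} is a placeholder for this sketch, not
    the documented schema of the deployed container.
    """
    body = json.dumps({"row": row}).encode("utf-8")
    return urllib.request.Request(
        endpoint_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would send it. Separating request construction from sending keeps the payload easy to inspect before any network call is made.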

Note: H2O Driverless AI cannot further optimize models developed manually. Similarly, models selected by H2O Driverless AI cannot be further tuned and optimized manually in cnvrg.io.

Automated feature engineering, model development, and productizing a model from H2O Driverless AI can now be part of a complex machine learning pipeline in cnvrg.io, allowing enterprises to build continuous learning that strengthens their AI models.