Storage best practices

The following list highlights default settings that we recommend maintaining for optimal storage performance:

- For mission critical Oracle databases, we recommend separating the following database components into different ASM disk groups:

  - GRID disk group for Oracle Grid Infrastructure and voting disks

  - DATA disk group for database files

  - REDO disk group for online redo logs

  - TEMP disk group for temp files

  - FRA disk group for flashback logs and archive logs

- Follow Oracle ASM best practices to create ASM disk groups for GRID, DATA, REDO, FRA, and TEMP.

- Keep block multi-queue (MQ), also known as blk-mq, enabled. It is enabled by default in the Red Hat Enterprise Linux 8 operating system.

- Keep the Red Hat Enterprise Linux 8 default I/O scheduler setting, Deadline (reported as mq-deadline when blk-mq is in use).

- Configure multiple I/O controllers for the VM and distribute the storage volumes and VM hard disks across them to balance the I/O load.
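To confirm that a block device is still using the default scheduler, you can read its sysfs scheduler file, where the active scheduler is the name shown in square brackets. The following is a minimal Python sketch of that check. The function names and the sample string are assumptions made for illustration and are not part of the tested configuration.

```python
import re
from pathlib import Path


def active_scheduler(scheduler_text: str) -> str:
    """Return the scheduler marked with square brackets, e.g. 'mq-deadline'."""
    match = re.search(r"\[([^\]]+)\]", scheduler_text)
    if match is None:
        raise ValueError(f"no active scheduler marked in {scheduler_text!r}")
    return match.group(1)


def read_scheduler(device: str) -> str:
    """Read the active scheduler of a block device such as 'sdb' from sysfs."""
    path = Path("/sys/block") / device / "queue" / "scheduler"
    return active_scheduler(path.read_text())


if __name__ == "__main__":
    # Sample file content (an assumption for illustration, not captured output).
    sample = "[mq-deadline] kyber bfq none"
    print(active_scheduler(sample))
```

On a live system, `read_scheduler("sdb")` would perform the same check against the real device, assuming a device named `sdb` exists.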

In addition to these default storage settings, we applied the following storage configurations in different tests to explore how they affected performance:

- Multiple volume ASM Disk Groups

- Setting FINE grained striping of REDO and FRA

- SAN zoning to include all storage nodes and front-end ports

- Set PowerStore Performance Policy

- Set PowerStore Node Affinity

Multiple volume ASM Disk Groups

For this tuning practice, we used four volumes for each ASM database disk group (DATA, REDO, and FRA).

The following list describes the tasks that we performed to present the additional volumes to the Red Hat Enterprise Linux guest OS of the Oracle database VM before adding them to the ASM disk groups:

- Presented these volumes to the ESXi host and created the corresponding datastores based on these volumes

- Created the hard disks from these datastores and presented these hard disks to the Red Hat Enterprise Linux guest OS as block devices

- Created a single partition on each of these devices

- Created udev rules to assign the Linux 0660 permission and ownership of these devices to the grid user. The udev rules file contains one entry per device. For example, the following entry assigns the 0660 permission and grid ownership to the device with SCSI ID '368ccf09800cd6a0eaf106e5a2155cf92' and creates the symbolic link '/dev/oracleasm/disks/ora-data2' that points to the device:

KERNEL=="sd[a-z]*[1-9]", SUBSYSTEM=="block", OPTIONS:="nowatch", PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", RESULT=="368ccf09800cd6a0eaf106e5a2155cf92", SYMLINK+="oracleasm/disks/ora-data2", OWNER="grid", GROUP="asmadmin", MODE="0660"

- Ran the Linux command # udevadm trigger to apply all these udev rules.
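Because the rules file needs one entry per device, the entries can be generated from a mapping of SCSI IDs to symbolic link names. The following Python sketch shows one way to do this. The `udev_rule` helper and the `devices` mapping are hypothetical, and only the single SCSI ID quoted in this guide is used. The other IDs would have to be read from the actual devices.

```python
# Rule layout copied from the example entry in this guide; keys are comma-separated.
RULE_TEMPLATE = (
    'KERNEL=="sd[a-z]*[1-9]", SUBSYSTEM=="block", OPTIONS:="nowatch", '
    'PROGRAM=="/usr/lib/udev/scsi_id -g -u -d /dev/$parent", '
    'RESULT=="{scsi_id}", SYMLINK+="oracleasm/disks/{name}", '
    'OWNER="grid", GROUP="asmadmin", MODE="0660"'
)


def udev_rule(scsi_id: str, name: str) -> str:
    """Build one udev rule entry for the device with the given SCSI ID."""
    return RULE_TEMPLATE.format(scsi_id=scsi_id, name=name)


# Hypothetical mapping of SCSI ID to symbolic link name; extend with one item per device.
devices = {"368ccf09800cd6a0eaf106e5a2155cf92": "ora-data2"}

if __name__ == "__main__":
    for scsi_id, name in devices.items():
        print(udev_rule(scsi_id, name))
```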

The following table shows the volume groups, volumes, datastores, and device names, and the ASM disk groups that were created after performing these tasks.

Table 16. Multiple Volume ASM disk groups

VolumeGroup

VolumeName

Volume Size

(GB)

Datastore

Device Name

Disk Group (Baseline)

Salt_VM_OS

Salt_VM_OS-001

300

Salt_OS_VM_1

/dev/sda

NA

Salt_VM_Grid

Grid_VM1-001

50

Salt_VM1_Grid_C1

/dev/oracleasm/disks/ora-ocr1

GRID

Grid_VM1-002

50

Salt_VM1_Grid_C2

/dev/oracleasm/disks/ora-ocr2

Grid_VM1-003

50

Salt_VM1_Grid_C3

/dev/oracleasm/disks/ora-ocr3

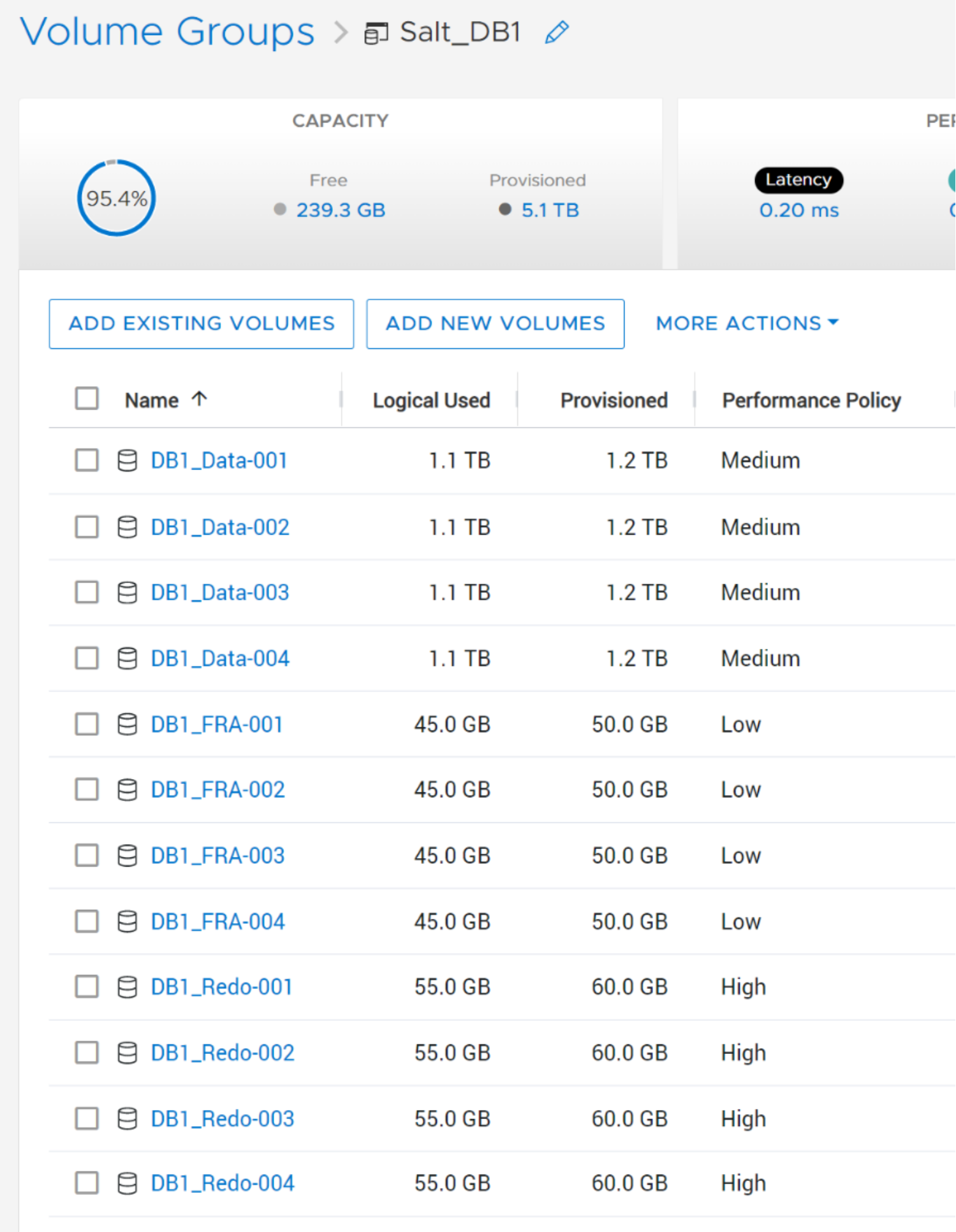

Salt_DB1

DB1_Data-001

1200

Salt_VM1_Data1

/dev/oracleasm/disks/ora-data1

DATA

DB1_Data-002

1200

Salt_VM1_Data2

/dev/oracleasm/disks/ora-data2

DB1_Data-003

1200

Salt_VM1_Data3

/dev/oracleasm/disks/ora-data3

DB1_Data-004

1200

Salt_VM1_Data4

/dev/oracleasm/disks/ora-data4

DB1_Redo-001

60

Salt_VM1_Redo1

/dev/oracleasm/disks/ora-redo1

REDO

DB1_Redo-002

60

Salt_VM1_Redo2

/dev/oracleasm/disks/ora-redo2

DB1_Redo-003

60

Salt_VM1_Redo3

/dev/oracleasm/disks/ora-redo3

DB1_Redo-004

60

Salt_VM1_Redo4

/dev/oracleasm/disks/ora-redo4

DB1_Fra-001

50

Salt_VM1_FRA1

/dev/oracleasm/disks/ora-fra1

FRA

DB1_Fra-002

50

Salt_VM1_FRA2

/dev/oracleasm/disks/ora-fra2

DB1_Fra-003

50

Salt_VM1_FRA3

/dev/oracleasm/disks/ora-fra3

DB1_Fra-004

50

Salt_VM1_FRA4

/dev/oracleasm/disks/ora-fra4

Salt_VM_Temp

Salt_Temp-001

500

Salt_VM1_Temp

/dev/oracleasm/disks/ora-temp

TEMP

Note: Before adding the three additional volumes to a disk group, ensure that all the volumes in that disk group are the same size.

Run the following SQL statements in the ASM instance to add three volumes to each of the ASM disk groups DATA, REDO, and FRA:

SQL> alter diskgroup DATA add disk

'/dev/oracleasm/disks/ora-data2' NAME DATA_0001,

'/dev/oracleasm/disks/ora-data3' NAME DATA_0002,

'/dev/oracleasm/disks/ora-data4' NAME DATA_0003;

SQL> alter diskgroup REDO add disk

'/dev/oracleasm/disks/ora-redo2' NAME REDO_0001,

'/dev/oracleasm/disks/ora-redo3' NAME REDO_0002,

'/dev/oracleasm/disks/ora-redo4' NAME REDO_0003;

SQL> alter diskgroup FRA add disk

'/dev/oracleasm/disks/ora-fra2' NAME FRA_0001,

'/dev/oracleasm/disks/ora-fra3' NAME FRA_0002,

'/dev/oracleasm/disks/ora-fra4' NAME FRA_0003;

You can run these SQL statements while the database is running. However, it is preferable to run them outside the peak hours of the database workload, because adding disk volumes can trigger a rebalance of data onto the new volumes, which may cause significant performance overhead. The ASM_POWER_LIMIT initialization parameter sets the rebalancing power of the system. Its default value is 1, and its maximum value is 1024. The higher the value, the faster a rebalance operation may complete, but the more performance overhead the rebalance causes.
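The rebalance power can also be set for a single operation by appending a REBALANCE POWER clause to the ALTER DISKGROUP statement instead of relying on ASM_POWER_LIMIT. The following Python sketch builds such a statement as text. The helper name `add_disk_statement` and the power value of 4 are assumptions for illustration and were not part of the tested configuration. The 0 to 1024 range check mirrors the limit described above.

```python
def add_disk_statement(disk_group, disk_paths, first_index=1, power=None):
    """Build an ALTER DISKGROUP ... ADD DISK statement as a string.

    Disk names follow the <DISKGROUP>_000N pattern used in this guide.
    If power is given, a REBALANCE POWER clause is appended.
    """
    entries = [
        f"  '{path}' NAME {disk_group}_{first_index + i:04d}"
        for i, path in enumerate(disk_paths)
    ]
    lines = [f"ALTER DISKGROUP {disk_group} ADD DISK", ",\n".join(entries)]
    if power is not None:
        if not 0 <= power <= 1024:
            raise ValueError("rebalance power must be between 0 and 1024")
        lines.append(f"  REBALANCE POWER {power}")
    return "\n".join(lines) + ";"


if __name__ == "__main__":
    data_disks = [
        "/dev/oracleasm/disks/ora-data2",
        "/dev/oracleasm/disks/ora-data3",
        "/dev/oracleasm/disks/ora-data4",
    ]
    # power=4 is a hypothetical value chosen only for this example.
    print(add_disk_statement("DATA", data_disks, power=4))
```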

To monitor the status of the rebalancing operation, run the following SQL query:

SQL> select INST_ID, OPERATION, STATE, POWER, SOFAR, EST_WORK, EST_RATE, EST_MINUTES from GV$ASM_OPERATION;

INST_ID OPERA STAT POWER SOFAR EST_WORK EST_RATE EST_MINUTES

---------- ----- ---- ---------- ---------- ---------- ---------- -----------

1 REBAL WAIT 1 0 0 0 0

1 REBAL RUN 1 14185 179546 7085 23

1 REBAL DONE 1 0 0 0 0

This example shows three rebalancing operations: the first is waiting, the second is running, and the third one is complete.

For the running operation, EST_WORK represents the total number of allocation units that must be moved, and SOFAR shows how many have already been moved. EST_MINUTES represents the estimated number of minutes remaining until the operation completes.
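As a worked example, the values in the running row of the sample output can be turned into a completion percentage and a remaining-time estimate. This Python sketch assumes that EST_RATE is expressed in allocation units per minute. The function name is hypothetical.

```python
def rebalance_progress(sofar, est_work, est_rate):
    """Return (percent complete, estimated minutes left) for a running rebalance.

    sofar    -- allocation units already moved (SOFAR)
    est_work -- total allocation units to move (EST_WORK)
    est_rate -- allocation units moved per minute (EST_RATE)
    """
    if est_work <= 0 or est_rate <= 0:
        raise ValueError("est_work and est_rate must be positive")
    percent_done = 100.0 * sofar / est_work
    minutes_left = (est_work - sofar) / est_rate
    return percent_done, minutes_left


if __name__ == "__main__":
    # Values taken from the RUN row of the sample output above.
    percent_done, minutes_left = rebalance_progress(14185, 179546, 7085)
    print(f"{percent_done:.1f}% done, about {minutes_left:.0f} minutes left")
```

With these inputs, the remaining-time estimate rounds to 23 minutes, which agrees with the EST_MINUTES value in the sample output.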

Setting FINE grained striping of REDO and FRA

To set FINE-grained striping for the REDO and FRA disk groups, run these SQL statements in the ASM instance:

ALTER DISKGROUP REDO ALTER TEMPLATE ONLINELOG ATTRIBUTES (FINE);

ALTER DISKGROUP FRA ALTER TEMPLATE ARCHIVELOG ATTRIBUTES (FINE);

With these settings, fine-grained striping writes data to the ASM disks in the REDO and FRA disk groups in 128 KB units in a round-robin fashion: the first 128 KB goes to the first disk, the next 128 KB goes to the next disk, and so on. The fine-grained stripe size is always 128 KB in any configuration, which provides lower I/O latency for small I/O operations.
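The round-robin placement described above can be illustrated with a small Python model. This is a simplified sketch of the description in this section only. It does not reproduce how ASM actually lays out allocation units and extents, and the function name and the four-disk count are assumptions taken from the four-volume disk groups used in this test.

```python
STRIPE_SIZE = 128 * 1024  # fine-grained stripe size in bytes (128 KB)


def disk_for_offset(offset: int, disk_count: int) -> int:
    """Return the zero-based index of the disk that holds the given byte offset
    under simple round-robin striping in STRIPE_SIZE units."""
    return (offset // STRIPE_SIZE) % disk_count


if __name__ == "__main__":
    # Show where the first six 128 KB chunks of a file land on four disks.
    for chunk in range(6):
        offset = chunk * STRIPE_SIZE
        print(f"offset {offset // 1024:4d} KB -> disk {disk_for_offset(offset, 4)}")
```

In this model, a write of 512 KB to a four-disk group touches every disk once, which is the behavior the striping is meant to achieve for redo and archive log I/O.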

SAN zoning to include all nodes and front-end ports

For the baseline configuration, we used two HBA ports of each R750 server and two storage front-end modules per node. For the best practices test case, we added the remaining two HBA ports of each R750 server and two storage front-end modules per node. Figure 13 shows the four HBA ports paired with the eight PowerStore front-end ports. This configuration not only ensures high availability against any component failure, but also increases the SAN network bandwidth by providing more I/O paths through the four HBA ports of each server and the eight PowerStore front-end ports.

Figure 13. SAN FC Zoning for multiple I/O paths

PowerStore Performance Policy

Volume performance can be managed in PowerStore by setting the performance policy to high, medium, or low. Selecting a performance policy sets a relative priority for the volume by using a share-based system that prioritizes volumes with a higher performance policy when resources are constrained. The performance policy does not affect system behavior unless some volumes have a low performance policy setting while other volumes are set to medium or high.

In the baseline case, all the volumes were set to the medium performance policy, which is the default setting. For the test case, we changed the performance policy of all REDO log volumes to high and set the FRA volumes to low. We did not change the performance policy of the DATA volumes.

The following figure shows the performance policy for the volumes of VM1.

Figure 14. Volume Performance Policy settings