
Software architecture and configuration


Software architecture overview

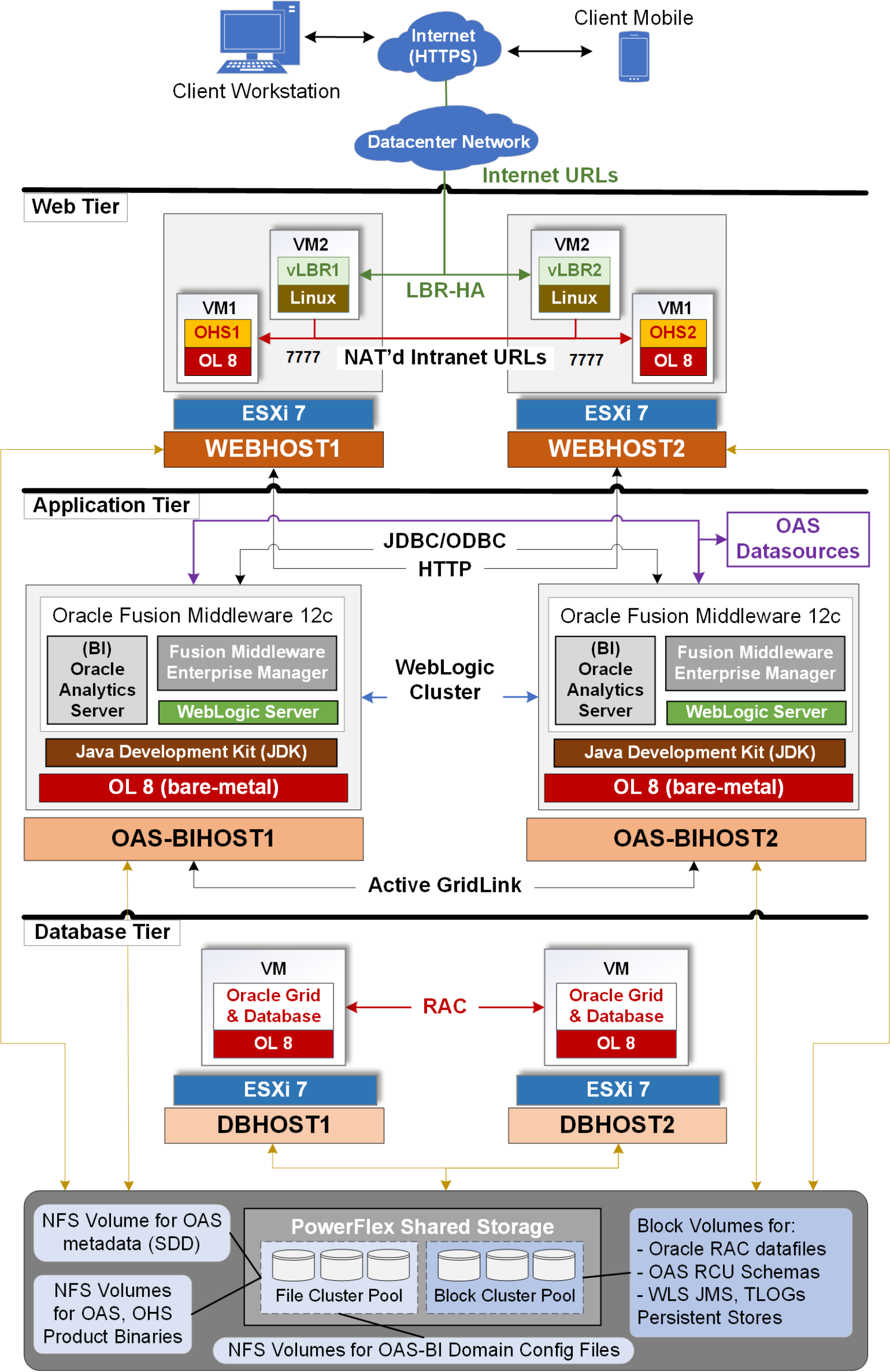

Figure 3 shows the software products required and deployed across the three tiers of the OAS solution stack.

Figure 3. Software architecture overview of OAS platform deployment in an enterprise topology

Web tier software design and configuration

As noted in the solution introduction, the load balancers (LBRs) in the web tier can be either hardware or virtual devices. A web server such as Oracle HTTP Server (OHS) is a lightweight software product that can be deployed inside a virtual machine. Because neither the virtual load balancers (vLBRs) nor the Oracle HTTP Servers are compute-intensive, our design deploys them in dedicated virtual machines (VMs). For high availability (HA) of both the OHS instances and the vLBRs, we created two VMs for each and distributed each pair across two ESXi 7.0 web hosts (WEBHOST1 and WEBHOST2), as shown in Figure 3.
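The HA intent here amounts to an anti-affinity rule: the two members of each pair must never share an ESXi host, or a single host failure takes out both. The following Python sketch checks a placement map against that rule. The OHS placement follows this guide; the host assignment of the two vLBR VMs is an assumption (the guide states only that they are spread across the two web hosts), and the data structures and function name are illustrative.

```python
# Illustrative VM-to-host placement for the web tier.
# OHS1/OHS2 placement follows this guide; the vLBR placement is an assumption.
PLACEMENT = {
    "VM2-vLBR1": "WEBHOST1",
    "VM2-vLBR2": "WEBHOST2",
    "OHS1": "WEBHOST1",
    "OHS2": "WEBHOST2",
}

# Each tuple is an HA pair whose members must run on different ESXi hosts.
HA_PAIRS = [("VM2-vLBR1", "VM2-vLBR2"), ("OHS1", "OHS2")]


def anti_affinity_violations(placement, pairs):
    """Return the HA pairs whose two VMs are placed on the same host."""
    return [(a, b) for a, b in pairs if placement[a] == placement[b]]


if __name__ == "__main__":
    print(anti_affinity_violations(PLACEMENT, HA_PAIRS))  # prints []
```

In vSphere, the same intent can be enforced up front with DRS anti-affinity rules rather than checked after the fact.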

Progress Kemp Technologies Virtual LoadMaster load balancer: In our design, we deployed Virtual LoadMaster (VLM) from Progress Kemp Technologies as the load balancer. VLM is available as a virtual appliance (VMware OVF file). Using VMware vCenter, we deployed two VLM instances as two VMs (VM2-vLBR1 and VM2-vLBR2) across the two ESXi web hosts. VLM supports high availability, and we configured the two VLM load balancers to operate in an active-passive model. VLM provides all the features and configuration options that an OAS enterprise solution requires. For details on VLM installation, see the Virtual LoadMaster VMware Installation Guide; for details on HA configuration, see the LoadMaster HA Guide.
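In an active-passive pair, only the active unit serves traffic while the passive unit monitors it and takes over when the active unit stops responding. The Python sketch below models that takeover with a missed-heartbeat counter. The class, the threshold of three missed heartbeats, and the tick-based timing are hypothetical; this is a conceptual model, not how VLM implements HA internally (see the LoadMaster HA Guide for the product's actual behavior).

```python
class ActivePassivePair:
    """Toy active-passive model: the passive node takes over after
    `max_missed` consecutive missed heartbeats from the active node."""

    def __init__(self, active, passive, max_missed=3):
        self.active = active
        self.passive = passive
        self.max_missed = max_missed
        self.missed = 0

    def tick(self, heartbeat_received):
        """Process one heartbeat interval; return the node now serving traffic."""
        if heartbeat_received:
            self.missed = 0
        else:
            self.missed += 1
            if self.missed >= self.max_missed:
                # Fail over: the passive node becomes active and vice versa.
                self.active, self.passive = self.passive, self.active
                self.missed = 0
        return self.active


if __name__ == "__main__":
    pair = ActivePassivePair("VM2-vLBR1", "VM2-vLBR2")
    # Three missed heartbeats trigger a failover on the fourth interval.
    print([pair.tick(hb) for hb in [True, False, False, False, True]])
```

The counter is reset on every received heartbeat, so a single dropped packet does not cause a failover; only a sustained loss of heartbeats does.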

Note: Progress Kemp Technologies also offers Hardware LoadMaster load balancers. Customers can choose to deploy the hardware version if they prefer. The configuration, features, and functionality offered by both the hardware and virtual LoadMaster load balancers are identical.

Oracle HTTP Server (OHS) web server: In our design, we deployed Oracle HTTP Server (OHS) as the web server. Starting with Oracle FMW 12c, OHS can be set up either as part of the application tier (WLS) domain or as a separate stand-alone domain. In our design, we installed and configured OHS in the web tier as two separate stand-alone domains. The OHS software was installed in two Oracle Linux 8 VMs: the OHS1 VM running on WEBHOST1 and the OHS2 VM running on WEBHOST2. Deploying each OHS instance as a separate stand-alone unit makes it easier to configure and maintain, and it requires fewer resources to run.
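In this topology, the active load balancer forwards requests to the two OHS instances, and an instance that fails its health check should be taken out of rotation. The Python sketch below shows round-robin selection that skips unhealthy back ends. Round robin is only one possible scheduling method; the method and health checks configured on VLM in this design are not specified here, and the class is hypothetical.

```python
from itertools import cycle


class RoundRobinPool:
    """Round-robin back-end selection that skips servers marked unhealthy."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._ring = cycle(self.servers)

    def mark_down(self, server):
        self.healthy.discard(server)

    def mark_up(self, server):
        self.healthy.add(server)

    def next_server(self):
        # Try each server at most once per call so an all-down pool fails fast.
        for _ in range(len(self.servers)):
            server = next(self._ring)
            if server in self.healthy:
                return server
        raise RuntimeError("no healthy back-end servers")


if __name__ == "__main__":
    pool = RoundRobinPool(["OHS1", "OHS2"])
    print([pool.next_server() for _ in range(4)])  # alternates OHS1, OHS2
    pool.mark_down("OHS2")
    print([pool.next_server() for _ in range(3)])  # only OHS1 while OHS2 is down
```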

For details on the software products and versions used in the web tier, refer to Table 12 in the Appendix.

Application tier software design and configuration

In the OAS enterprise solution, the application tier consists of at least two physical hosts (OAS-BIHOST1 and OAS-BIHOST2) on which the Oracle FMW products, including WLS and OAS, are installed and the BI domain is configured to run as a cluster. In our design and testing, we deployed two PowerFlex R760 compute-only nodes (SDCs) running bare-metal Oracle Linux 8 as the two OAS-BIHOSTs. The application tier software deployment includes installing the following products:

- Java Development Kit (JDK) 8 software.

- Oracle Fusion Middleware (FMW) Infrastructure (12.2.1.4.0) distribution software which includes FMW binaries, FMW Enterprise Manager (EM), WebLogic Server (WLS), and Oracle JRF software – all in one distribution.

- Oracle Analytics Server (OAS) 7.0.0 software.
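Before installation, it can help to confirm that each application-tier host has the versions listed above. The Python sketch below compares a hypothetical inventory against those versions; the inventory keys are hypothetical, and how the inventory is collected (for example, by parsing java -version output) is left out. Java 8 reports its version in the legacy 1.8.0_nnn form, so the sketch normalizes that scheme to a major version.

```python
# Versions taken from the application-tier software list in this guide.
REQUIRED_JAVA_MAJOR = 8
REQUIRED_PRODUCTS = {
    "fmw_infrastructure": "12.2.1.4.0",  # hypothetical inventory key
    "oas": "7.0.0",                      # hypothetical inventory key
}


def java_major(version):
    """Return the major version from strings like '1.8.0_381' or '17.0.9'."""
    parts = version.split(".")
    # Releases before Java 9 use the legacy '1.<major>' scheme.
    return int(parts[1]) if parts[0] == "1" else int(parts[0])


def check_prereqs(java_version, products):
    """Return a list of human-readable problems; empty means all versions match."""
    problems = []
    if java_major(java_version) != REQUIRED_JAVA_MAJOR:
        problems.append(f"JDK {java_version} found, JDK {REQUIRED_JAVA_MAJOR} required")
    for name, required in REQUIRED_PRODUCTS.items():
        found = products.get(name)
        if found != required:
            problems.append(f"{name}: {found or 'missing'} found, {required} required")
    return problems
```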

For details on the software products and versions used in the application tier, see Table 13 in the Appendix.

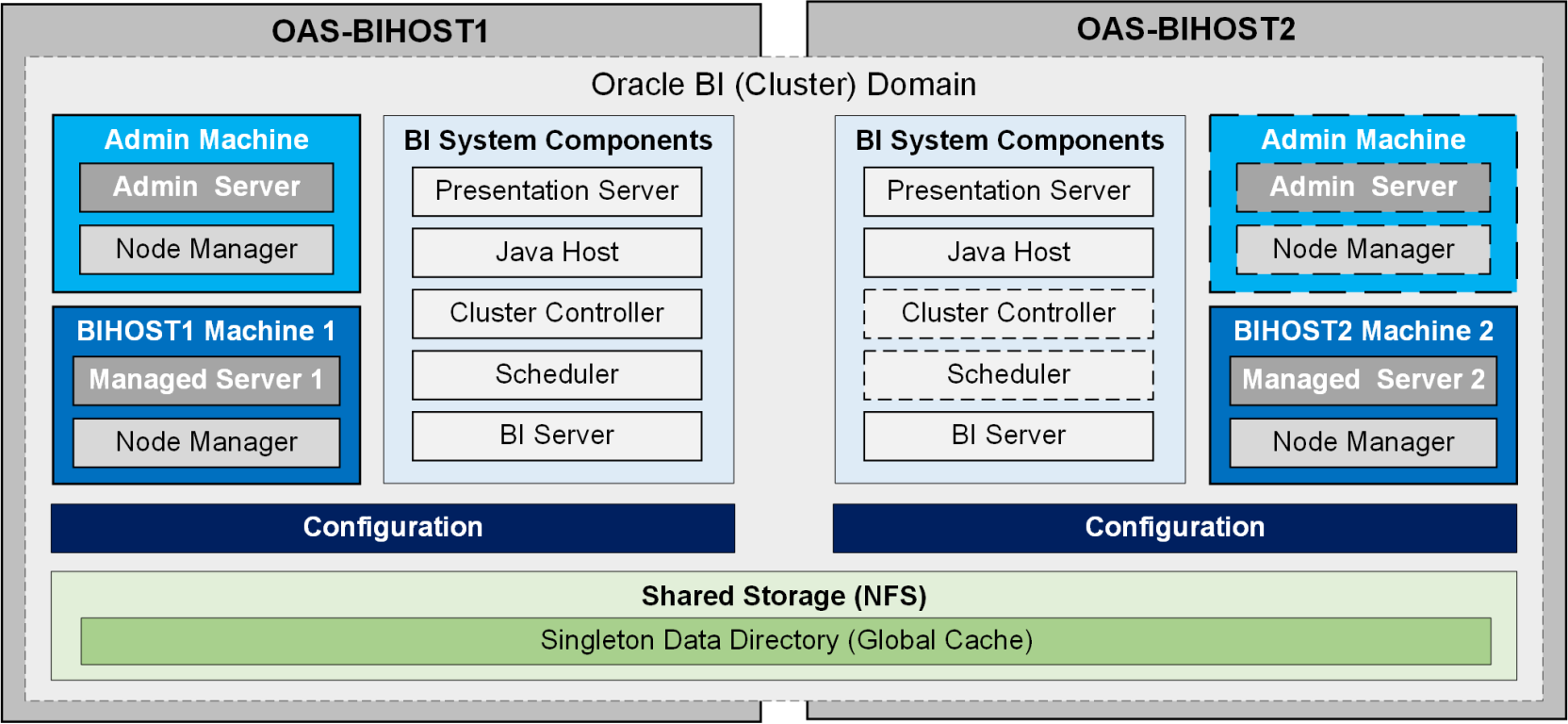

Following Oracle’s best practices and recommendations for deploying OAS as an enterprise solution, especially in production environments, our design and deployment focused on high availability, redundancy, and scalability of the application tier components. Figure 4 shows the highly available, horizontally scaled-out OAS-BI architecture that we deployed. As the figure shows, installing and configuring the OAS platform involves creating or extending an Oracle WLS domain, referred to in this guide as the Oracle BI domain. In an enterprise topology, Oracle recommends deploying two or more OAS-BIHOSTs to form a clustered BI domain, also referred to as a horizontally scaled-out or clustered OAS configuration.

Figure 4. OAS-BI highly available, horizontal scale-out architecture

Note: Unlike the Managed Servers in the domain, the Administration (Admin) Server uses an active-passive HA configuration, because only one Admin Server can run within a clustered BI domain at a time (shown as a dotted box on OAS-BIHOST2 in Figure 4). Similarly, the two BI System Components (Cluster Controller and Scheduler) are shown as dotted boxes on OAS-BIHOST2 because they are non-scalable components.
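The rule in this note can be stated as an invariant over the running cluster: the Admin Server, Cluster Controller, and Scheduler are each active on exactly one host, while every host runs a Managed Server. The Python sketch below checks a snapshot against that invariant. The component names (bi_server1, cluster_controller, and so on) and the snapshot format are hypothetical; in a real domain the state would come from the WebLogic and OAS administration tooling.

```python
# Hypothetical snapshot of the components running on each OAS-BIHOST.
RUNNING = {
    "OAS-BIHOST1": {"AdminServer", "bi_server1", "cluster_controller", "scheduler"},
    "OAS-BIHOST2": {"bi_server2"},
}

# Components that must be active on exactly one host (active-passive).
SINGLETONS = {"AdminServer", "cluster_controller", "scheduler"}


def check_cluster(running, singletons=SINGLETONS, managed_prefix="bi_server"):
    """Return a list of invariant violations; an empty list means the snapshot is valid."""
    errors = []
    for component in sorted(singletons):
        hosts = sorted(h for h, comps in running.items() if component in comps)
        if len(hosts) != 1:
            errors.append(f"{component} active on {len(hosts)} hosts: {hosts}")
    for host, comps in sorted(running.items()):
        # Managed Servers scale out, so every host should run one.
        if not any(c.startswith(managed_prefix) for c in comps):
            errors.append(f"no Managed Server on {host}")
    return errors
```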

A clustered OAS deployment requires shared storage to enable high availability and failover of its components. Shared storage is also required for the OAS Global Cache, which improves performance. In a clustered OAS-BI domain, the Oracle WebLogic Server Java Message Service (JMS) and JTA transaction log (TLog) persistent stores must reside on shared storage so that they are available to and accessible from every OAS-BIHOST. The JMS and TLog persistent stores can be either file-based or JDBC-accessible stores. In our design, we placed them in JDBC-accessible stores in the back-end Oracle RAC database, because the stores then benefit from the availability and recoverability of the RAC database instead of depending on a shared file system. For more details on the shared storage design and best practices that we implemented, see Shared storage architecture and configuration.

As part of its high availability architecture, OAS supports failover of its domain components, the Admin Server and the Managed Servers, between the hosts that are members of the cluster. Failover of the Admin Server is manual, whereas failover of the Managed Servers between hosts is automatic. Automatic failover of the Managed Servers is provided by the Whole Server Migration (WSM) feature of WLS, which requires the network and storage on the hosts to be configured in a specific way. In our design, we implemented WSM and manual failover of the Admin Server; for details, see Application tier network configuration and Persistent stores for JMS, TLOGs, and DB leasing.
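Automatic migration is safe only if exactly one host can claim a failed Managed Server at a time. WebLogic coordinates this through leasing, and the database leasing used in this design is covered in the section referenced above. The Python sketch below illustrates the general idea of a database lease using SQLite: a host holds a lease only while it keeps renewing it, and another host may take over only after the lease expires. The table layout, the 30-second lease length, and the function names are hypothetical and do not reflect WebLogic's actual leasing schema or algorithm.

```python
import sqlite3

LEASE_SECONDS = 30  # hypothetical lease duration


def open_lease_db(path=":memory:"):
    """Open a lease database in autocommit mode so transactions are explicit."""
    conn = sqlite3.connect(path, isolation_level=None)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS lease ("
        "server TEXT PRIMARY KEY, host TEXT NOT NULL, expires REAL NOT NULL)"
    )
    return conn


def acquire_or_renew(conn, server, host, now):
    """Claim or renew the lease on `server` for `host`; return True on success.

    Succeeds when no lease exists, the current lease has expired,
    or `host` already holds it.
    """
    conn.execute("BEGIN IMMEDIATE")  # take the write lock before reading
    try:
        row = conn.execute(
            "SELECT host, expires FROM lease WHERE server = ?", (server,)
        ).fetchone()
        if row is not None and row[0] != host and row[1] > now:
            conn.execute("ROLLBACK")
            return False  # another host holds a live lease
        conn.execute(
            "INSERT OR REPLACE INTO lease (server, host, expires) VALUES (?, ?, ?)",
            (server, host, now + LEASE_SECONDS),
        )
        conn.execute("COMMIT")
        return True
    except Exception:
        if conn.in_transaction:
            conn.execute("ROLLBACK")
        raise


def holder(conn, server):
    """Return the host currently recorded as holding the lease, or None."""
    row = conn.execute("SELECT host FROM lease WHERE server = ?", (server,)).fetchone()
    return row[0] if row else None
```

For example, if OAS-BIHOST1 stops renewing the lease on a Managed Server, OAS-BIHOST2 can claim it only after the recorded expiry time has passed, which prevents both hosts from running the same server at once.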

Oracle strongly recommends creating a dedicated user and group that own the entire clustered OAS platform, including all of its directories and subdirectories. Following this best practice, we created the oracle user and the oinstall group to deploy and configure the entire OAS platform in the application tier. For details on the directory structure and permissions in our design, see Directory structure and variables design. For complete, detailed steps to deploy the horizontally scaled-out OAS architecture, see the OAS Enterprise Deployment Guide.
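A simple way to verify this ownership model after installation is to walk the installation tree and list anything not owned by the dedicated user and group. The Python sketch below does that. It is Unix-only (it relies on the pwd and grp modules), the function name is hypothetical, and the directory to scan is whatever root your directory design uses; for example, foreign_owned("/path/to/oas/root"), where the path is a placeholder.

```python
import grp
import os
import pwd


def foreign_owned(root, user="oracle", group="oinstall"):
    """Return paths under `root` (including `root`) not owned by `user`:`group`.

    Symbolic links are checked themselves, not followed.
    """
    uid = pwd.getpwnam(user).pw_uid
    gid = grp.getgrnam(group).gr_gid
    paths = [root]
    for dirpath, dirnames, filenames in os.walk(root):
        paths.extend(os.path.join(dirpath, name) for name in dirnames + filenames)
    bad = []
    for path in paths:
        st = os.lstat(path)
        if st.st_uid != uid or st.st_gid != gid:
            bad.append(path)
    return bad
```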

Database tier software design and configuration

Installing OAS and other Oracle FMW products (such as WLS) in the application tier requires a specific set of schemas to be created in a supported database. The schemas are created with the Oracle FMW Repository Creation Utility (RCU). For enterprise deployments, Oracle recommends a highly available database such as an Oracle Real Application Clusters (RAC) database to store the Oracle FMW schemas. Therefore, for maximum availability, load balancing, and seamless integration with the Oracle FMW products, including OAS, we deployed Oracle 19c Grid Infrastructure and an Oracle 19c RAC database in the database tier.

A database instance running on each RAC node does not need all the CPU cores (up to 192 physical cores in a dual-socket server) or the large memory capacity (up to 12 TB) that a current-generation server can offer. One option is to start with a low-end, single-socket server with an eight-core CPU and a small memory footprint. However, in an enterprise production environment, scaling physical servers up or out later to keep pace with business growth can be an administrative burden. Even if the challenges of adding more RAC nodes or servers can be overcome, the additional cost of Oracle RAC licensing can be a deterrent.

A good alternative is to start with a virtualized two-node Oracle RAC setup and allocate as many virtual CPUs and as much memory to each database instance VM as it requires. This approach makes it possible to quickly spin up additional RAC node VMs as business and application needs grow, without additional spending on expensive Oracle licensing. Therefore, in our design, we deployed the Oracle RAC database in Oracle Linux 8 VMs across two ESXi-based virtualized servers (DBHOST1 and DBHOST2). This maximizes hardware resource utilization and, more importantly, keeps Oracle licensing costs low while leaving room for future growth in an enterprise environment. A virtualized database tier also provides the flexibility to deploy additional Oracle databases in additional VMs for other Oracle application products without adding hardware resources.

One of the prerequisites for installing OAS in the application tier is an available service in the Oracle database. Oracle recommends creating a new service for each application product, OAS in our case, rather than using the database's default service. This isolates the services used by different application products, so that throughput and performance can be prioritized accordingly. Following this best practice, we created a separate service specifically for OAS:

srvctl add service -db oasracdb -service oasedg.delllabs.net -preferred oasracdb1,oasracdb2
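Because OAS connects to this dedicated service rather than to the default database service, the same service name appears both in the srvctl command and in the connection string used by the application tier. The Python sketch below builds both from one set of parameters so they cannot drift apart. The function names, the SCAN host name, and the listener port 1521 are assumptions for illustration; the JDBC thin URL uses the standard //host:port/service_name form.

```python
import shlex


def srvctl_add_service(db, service, preferred):
    """Build the srvctl command that creates an application-specific RAC service."""
    return shlex.join([
        "srvctl", "add", "service",
        "-db", db,
        "-service", service,
        "-preferred", ",".join(preferred),
    ])


def jdbc_thin_url(host, service, port=1521):
    """Oracle JDBC thin URL that connects by service name rather than SID."""
    return f"jdbc:oracle:thin:@//{host}:{port}/{service}"


if __name__ == "__main__":
    print(srvctl_add_service("oasracdb", "oasedg.delllabs.net", ["oasracdb1", "oasracdb2"]))
    # The SCAN host name below is hypothetical.
    print(jdbc_thin_url("oasracdb-scan.delllabs.net", "oasedg.delllabs.net"))
```

Adding a service with srvctl does not start it; the service still has to be started (for example, with srvctl start service) before OAS can use it.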

Oracle FMW also supports other databases for storing its schemas; a complete list is available in Oracle Fusion Middleware 12c Certifications. However, for enterprise deployments, Oracle strongly recommends using Oracle GridLink data sources to connect to an Oracle RAC database. In our design, we used GridLink data sources to connect OAS to the Oracle RAC database, as described briefly in Persistent stores for JMS, TLOGs, and DB leasing.

For details on the software products and versions used in the database tier, see Table 14 in the Appendix.