
Shared storage architecture and configuration


Shared storage overview

Highly available shared storage is one of the key elements of a resilient, scalable OAS deployment in an enterprise topology. An OAS enterprise topology deployed as a scale-out clustered solution ideally requires:

- NAS-based storage (NFS volumes):

- (Web and application tier) To store the product binaries.

- (Application tier) To store the BI domain configuration files.

- (Application tier) To store security certificates in Java KeyStores.

- (Application tier) To store the Shared Data Directory (SDD) or Global Cache.

- SAN-based storage (block-based volumes):

- (Database tier) To store Oracle RAC Database datafiles and schemas.

- (Application tier) To store OAS Repository Creation Utility (RCU) Database schemas in Oracle Database.

- (Application tier) To store WLS Java Messaging Service (JMS) and Transaction Logs (TLogs) in a JDBC-based persistent store.

Dell software-defined PowerFlex storage suits an OAS enterprise deployment well because it provides both the NAS-based and the SAN-based storage listed above. A key advantage of PowerFlex is that you can start small with both the NAS-based storage (PowerFlex File nodes) and the SAN-based storage (PowerFlex SDS). You can then scale out each one independently as capacity and performance demands grow.

PowerFlex File (NAS) storage design

Table 5 shows all the NFS v3 volumes that we created on the PowerFlex File nodes and configured on the two OAS-BI hosts and the two OHS VMs. The following NFS mount options were configured for all the NFS volumes in the /etc/fstab configuration file:

rw,bg,hard,nointr,tcp,vers=3,timeo=600,rsize=32768,wsize=32768,_netdev

Table 5. PowerFlex NFS volumes configured on OAS-BIHOSTs and OAS-OHS-VMs

| NFS Volume # | NFS Volume Name | Volume Used For | Mounted On Server(s) | Mount Directory Path |
|---|---|---|---|---|
| NFS Volume 1 | bihost1-products | Product binaries | OAS-BIHOST1 | /u01/oracle/products/ |
| NFS Volume 2 | bihost2-products | Product binaries | OAS-BIHOST2 | /u01/oracle/products/ |
| NFS Volume 3 | bihosts-AServer | Admin Server domain configuration files, Java KeyStore | OAS-BIHOST1, OAS-BIHOST2 | /u01/oracle/config/ |
| NFS Volume 4 | bihosts-runtime | Runtime files, SDD (Global Cache) | OAS-BIHOST1, OAS-BIHOST2 | /u01/oracle/runtime/ |
| NFS Volume 5 | ohs1-product-domain | Product binaries, Domain config files | OAS-OHS1-VM | /u01/oracle/ |
| NFS Volume 6 | ohs2-product-domain | Product binaries, Domain config files | OAS-OHS2-VM | /u01/oracle/ |
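The following Python sketch shows one way to render per-host /etc/fstab entries from the volumes in Table 5 using the mount options above. The NAS server name powerflex-nas.example.com and the export path /<volume name> are hypothetical placeholders, not values from this design. Substitute the NFS server and export paths defined on your PowerFlex File nodes. The sketch also converts the timeo option, which the nfs(5) man page expresses in tenths of a second.

```python
"""Sketch: render /etc/fstab lines for the PowerFlex NFS volumes in Table 5.

Hypothetical (not from the guide): the NAS server name and the
"/<volume name>" export path format.
"""

# Mount options used for every NFS volume in this design.
NFS_OPTIONS = "rw,bg,hard,nointr,tcp,vers=3,timeo=600,rsize=32768,wsize=32768,_netdev"

# Hypothetical NAS server name; replace with your PowerFlex File NAS server.
NAS_SERVER = "powerflex-nas.example.com"

# (volume name, hosts that mount it, mount directory), taken from Table 5.
VOLUMES = [
    ("bihost1-products", ["OAS-BIHOST1"], "/u01/oracle/products/"),
    ("bihost2-products", ["OAS-BIHOST2"], "/u01/oracle/products/"),
    ("bihosts-AServer", ["OAS-BIHOST1", "OAS-BIHOST2"], "/u01/oracle/config/"),
    ("bihosts-runtime", ["OAS-BIHOST1", "OAS-BIHOST2"], "/u01/oracle/runtime/"),
    ("ohs1-product-domain", ["OAS-OHS1-VM"], "/u01/oracle/"),
    ("ohs2-product-domain", ["OAS-OHS2-VM"], "/u01/oracle/"),
]


def fstab_lines(host: str) -> list[str]:
    """Return the fstab entries that the given host should carry."""
    lines = []
    for name, hosts, mount_dir in VOLUMES:
        if host in hosts:
            # fstab fields: device, mount point, fs type, options, dump, fsck pass
            lines.append(f"{NAS_SERVER}:/{name} {mount_dir.rstrip('/')} nfs {NFS_OPTIONS} 0 0")
    return lines


def timeo_seconds(options: str) -> float:
    """Return the NFS retry timeout in seconds ('timeo' is in tenths of a second)."""
    for opt in options.split(","):
        if opt.startswith("timeo="):
            return int(opt.split("=", 1)[1]) / 10
    raise ValueError("timeo is not set")


if __name__ == "__main__":
    for line in fstab_lines("OAS-BIHOST1"):
        print(line)
    print("timeo =", timeo_seconds(NFS_OPTIONS), "seconds")
```

With these options, timeo=600 gives a 60-second timeout before the client retries a request, and the hard option makes the client keep retrying rather than return an I/O error to OAS.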

OAS Admin Server and Managed Servers storage design

The Admin Server setup in the OAS platform uses an active-passive high availability configuration. To support failover of the Admin Server in the event of a host failure, its domain configuration files need to be stored on a shared storage device. Table 5 shows the required shared NFS volume (#3) we created in the PowerFlex File nodes for storing the Admin Server domain configuration files.

Note: Although the Admin Server's NFS volume is mounted on both OAS-BI hosts, the Admin Server is active only on the host where its VIP is currently running. The Admin Server's VIP is configured manually to run on only one host at a time. If the host on which it is active (for example, OAS-BIHOST1) fails, the VIP is manually reconfigured to run on the other host (for example, OAS-BIHOST2).

Unlike the Admin Server's configuration, the Managed Servers' configuration data should be stored on local storage for better performance. Therefore, after the initial Admin Server domain is created on shared storage, we use the WLS pack and unpack utilities to copy this domain configuration to local storage and start the Managed Servers locally from that copy. Because the Admin Server and Managed Server domains are separate, each has its own Node Manager, which starts, stops, and monitors that domain's services and components. Oracle FMW 12c supports this per-domain Node Manager configuration, which is shown in our enterprise design in Figure 4. For other key advantages of using a per-domain Node Manager, see About the Node Manager Configuration in a Typical Enterprise Deployment.
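The pack and unpack step can be sketched as follows. This Python snippet only builds and prints the commands and does not run them. The paths come from Table 7. The template file name and location are hypothetical, and the flags follow the pattern in Oracle's FMW 12c enterprise deployment guides, so verify them against your installed version before use.

```python
"""Sketch: build (not run) the WLS pack/unpack commands that copy the
Admin Server domain on shared NFS into a Managed Server domain on local
storage. The template path/name is hypothetical; verify flags for your version.
"""
import shlex

# Paths from Table 7.
ORACLE_COMMON_HOME = "/u01/oracle/products/fmw/oracle_common"
ASERVER_HOME = "/u01/oracle/config/domains/bi_domain"
MSERVER_HOME = "/u02/oracle/config/domains/bi_domain"
APPLICATION_HOME = "/u01/oracle/config/applications/bi_domain"

# Hypothetical template location on shared storage so both hosts can read it.
TEMPLATE = "/u01/oracle/config/bi_domain_template.jar"


def pack_cmd() -> list[str]:
    # Run once on the host where the Admin Server is active;
    # -managed=true creates a template for Managed Server hosts.
    return [
        f"{ORACLE_COMMON_HOME}/common/bin/pack.sh",
        "-managed=true",
        f"-domain={ASERVER_HOME}",
        f"-template={TEMPLATE}",
        "-template_name=bi_domain_template",
    ]


def unpack_cmd() -> list[str]:
    # Run on each OAS-BIHOST to create the local Managed Server domain.
    return [
        f"{ORACLE_COMMON_HOME}/common/bin/unpack.sh",
        f"-domain={MSERVER_HOME}",
        f"-template={TEMPLATE}",
        "-overwrite_domain=true",
        f"-app_dir={APPLICATION_HOME}",
    ]


if __name__ == "__main__":
    print(shlex.join(pack_cmd()))
    print(shlex.join(unpack_cmd()))
```

The domain is packed from ASERVER_HOME on NFS Volume 3 and unpacked into MSERVER_HOME on the local RAID 1 virtual disk. As a result, the Managed Servers start from a local copy while the Admin Server keeps its configuration on shared storage.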

In our storage design, we created a RAID 1 virtual disk (VD) on two local NVMe drives within each OAS-BIHOST for storing the Managed Server domain configuration files, as shown in Table 6:

Table 6. Local virtual storage disk for storing Managed Server domain configuration files.

| Local Volume | Block Device Name in OS | Used For | Mounted On Server | Mount Directory Path |
|---|---|---|---|---|
| RAID 1 VD | /dev/sda1 | Managed Server 1 domain configuration files | OAS-BIHOST1 | /u02/oracle/config/ |
| RAID 1 VD | /dev/sda1 | Managed Server 2 domain configuration files | OAS-BIHOST2 | /u02/oracle/config/ |

Directory structure and variables design

Table 7 shows the directory variables and the corresponding directory paths that we configured on the OAS and OHS hosts:

Table 7. OAS and OHS directory variables and their corresponding directory paths.

| Directory Variable Name | Directory Path |
|---|---|
| Directories and variables configured on OAS-BIHOSTs (Application tier) | |
| ORACLE_BASE | /u01/oracle/ |
| Variables and directories configured on NFS Volumes 1 and 2 mounted on /u01/oracle/products/ in their respective OAS-BIHOST1 and OAS-BIHOST2 servers | |
| JAVA_HOME | /u01/oracle/products/jdk/ |
| ORACLE_HOME | /u01/oracle/products/fmw/ |
| ORACLE_COMMON_HOME | /u01/oracle/products/fmw/oracle_common |
| WL_HOME | /u01/oracle/products/fmw/wlserver/ |
| (OAS) PROD_DIR | /u01/oracle/products/fmw/bi/ |
| EM_DIR | /u01/oracle/products/fmw/em/ |
| Variables and directories configured on NFS Volume 3 mounted on /u01/oracle/config/ | |
| SHARED_CONFIG_DIR | /u01/oracle/config/ |
| ASERVER_HOME | /u01/oracle/config/domains/bi_domain/ |
| APPLICATION_HOME | /u01/oracle/config/applications/bi_domain/ |
| KEYSTORE_HOME | /u01/oracle/config/keystores/ |
| Variables and directories configured on NFS Volume 4 mounted on /u01/oracle/runtime/ | |
| ORACLE_RUNTIME | /u01/oracle/runtime/ |
| SDD_HOME (Global Cache) | /u01/oracle/runtime/domains/bi_domain/ |
| Variables and directories configured on Local VD mounted on /u02/oracle/config/ | |
| PRIVATE_CONFIG_DIR | /u02/oracle/config/ |
| MSERVER_HOME | /u02/oracle/config/domains/bi_domain/ |
| Directories and variables configured on OAS-OHS-VMs (Web tier) | |
| Variables and directories configured on NFS Volumes 5 and 6 mounted on /u01/oracle/ in their respective OAS-OHS1-VM and OAS-OHS2-VM servers | |
| ORACLE_BASE | /u01/oracle/ |
| ORACLE_HOME | /u01/oracle/products/fmw/ |
| JAVA_HOME | /u01/oracle/products/jdk/ |
| OHS_HOME | /u01/oracle/config/domains/ohs_domain/ |
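The following sketch makes the split between shared and local storage explicit. It maps several OAS-BIHOST1 directory variables from Table 7 to the volume that backs each one, using longest-prefix matching against the mount points in Tables 5 and 6. The mount table is hard-coded here for illustration. On a live host you would read the actual mounts from the OS instead.

```python
"""Sketch: map OAS-BIHOST1 directory variables (Table 7) to backing storage."""
import posixpath

# Mount points on OAS-BIHOST1 from Tables 5 and 6 (no trailing slashes).
MOUNTS = {
    "/u01/oracle/products": "NFS Volume 1 (bihost1-products)",
    "/u01/oracle/config": "NFS Volume 3 (bihosts-AServer)",
    "/u01/oracle/runtime": "NFS Volume 4 (bihosts-runtime)",
    "/u02/oracle/config": "Local RAID 1 VD",
}

# A subset of the application-tier variables from Table 7.
DIRS = {
    "JAVA_HOME": "/u01/oracle/products/jdk/",
    "ORACLE_HOME": "/u01/oracle/products/fmw/",
    "ASERVER_HOME": "/u01/oracle/config/domains/bi_domain/",
    "KEYSTORE_HOME": "/u01/oracle/config/keystores/",
    "SDD_HOME": "/u01/oracle/runtime/domains/bi_domain/",
    "MSERVER_HOME": "/u02/oracle/config/domains/bi_domain/",
}


def backing_storage(path: str) -> str:
    """Return the storage of the mount that is the longest prefix of path."""
    path = posixpath.normpath(path)
    best = None
    for mount in MOUNTS:
        # Match whole path components only, so /u01/oracle/configs is not /u01/oracle/config.
        if path == mount or path.startswith(mount + "/"):
            if best is None or len(mount) > len(best):
                best = mount
    return MOUNTS[best] if best else "local root filesystem"


if __name__ == "__main__":
    for name, path in DIRS.items():
        print(f"{name:14} {path:40} -> {backing_storage(path)}")
```

The two domain homes, ASERVER_HOME and MSERVER_HOME, share the same relative layout (domains/bi_domain/) but resolve to different storage. This is what allows the Admin Server to fail over while the Managed Servers run from local disk.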

We recommend creating a separate OS user that owns the entire OAS platform in the application tier. We therefore created a new user, oracle, and a new group, oinstall, as follows (the oracle user is also a member of the dba group):

uid=1001(oracle) gid=54325(oinstall) groups=54325(oinstall),54326(dba)

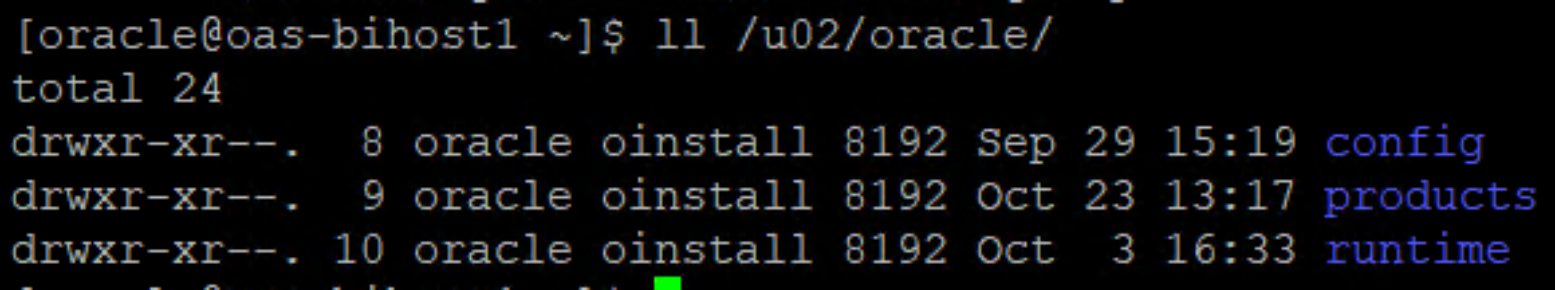

Figure 8 shows the recommended ownership (oracle.oinstall) and permission (754) settings for all OAS platform directories and their subdirectories.

Figure 8. Recommended OAS directory ownership and permission settings
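Mode 754 grants read, write, and execute to the owner, read and execute to the group, and read only to others. The sketch below applies that ownership and mode to every directory under a root path you choose. It assumes that it runs as root on a Linux host where the oracle user and oinstall group already exist. The function names are hypothetical, and files are left untouched because Figure 8 describes directory settings.

```python
"""Sketch: apply and describe the Figure 8 directory settings (run as root)."""
import grp
import os
import pwd
import stat

# Figure 8: owner rwx, group r-x, others r--.
OAS_DIR_MODE = 0o754


def describe(mode: int) -> str:
    """Render directory permission bits the way `ls -l` does."""
    return stat.filemode(stat.S_IFDIR | mode)


def apply_oas_dir_settings(root: str, user: str = "oracle", group: str = "oinstall") -> None:
    """Set owner, group, and mode 754 on root and all of its subdirectories."""
    uid = pwd.getpwnam(user).pw_uid
    gid = grp.getgrnam(group).gr_gid
    for dirpath, _dirnames, _filenames in os.walk(root):
        os.chown(dirpath, uid, gid)
        os.chmod(dirpath, OAS_DIR_MODE)


if __name__ == "__main__":
    print(describe(OAS_DIR_MODE))  # drwxr-xr--
```

For example, apply_oas_dir_settings("/u01/oracle/config") would cover the Admin Server domain, applications, and keystore directories on NFS Volume 3.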

Persistent stores for JMS, TLOGs, and DB leasing

In addition to the required network configuration, Whole Server Migration (WSM) requires that JMS and TLogs be accessible from both the original and the failover OAS-BI hosts. This means the JMS and TLogs persistent stores must be on shared storage. OAS supports either file-based stores or JDBC-accessible stores for the JMS and TLogs persistent stores.

Placing the JMS and TLogs persistent stores on JDBC-accessible stores rather than file-based stores has several advantages, including:

- Oracle RAC Database setup in our database tier provides a convenient way to create and configure JDBC-accessible stores.

- Using Oracle JDBC stores provides the consistency, data protection, high availability, and load balancing features of Oracle RAC Database.

- JDBC stores support Oracle’s GridLink data sources which provide failover between the Oracle RAC nodes.

WSM functionality also requires the configuration of a leasing datasource that is used by the OAS-BI hosts to store alive timestamps.

To meet these WSM requirements and follow best practices, we configured Oracle GridLink data sources for JMS, TLogs, and database leasing in our design. For details on how to set this up, see Using JDBC Persistent Stores for TLOGs and JMS in an Enterprise Deployment and Creating a GridLink Data Source for Leasing.
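The idea behind database leasing can be illustrated with a simplified, in-memory model. This is not WebLogic's implementation or its leasing table schema, and the server names and lease period are hypothetical. In this model, each server periodically renews an "alive" timestamp. A server whose timestamp is older than the lease period is treated as failed and becomes a candidate for migration to the surviving host.

```python
"""Simplified model of lease-based liveness (not WebLogic's implementation)."""
from dataclasses import dataclass, field


@dataclass
class LeaseTable:
    """Toy stand-in for a database leasing table (hypothetical schema)."""
    lease_seconds: float
    alive: dict[str, float] = field(default_factory=dict)

    def renew(self, server: str, now: float) -> None:
        # Each running server periodically records an "alive" timestamp.
        self.alive[server] = now

    def expired(self, now: float) -> list[str]:
        # Servers that have not renewed within the lease period.
        return sorted(s for s, t in self.alive.items() if now - t > self.lease_seconds)


if __name__ == "__main__":
    leases = LeaseTable(lease_seconds=30)
    leases.renew("WLS_BI1", now=0)
    leases.renew("WLS_BI2", now=0)
    leases.renew("WLS_BI1", now=25)  # WLS_BI2 stops renewing (its host failed)
    print(leases.expired(now=40))    # ['WLS_BI2']
```

Keeping these timestamps in the Oracle RAC Database through a GridLink data source means the liveness record survives the loss of either OAS-BI host, which a store on a failed host's local disk could not guarantee.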

Storage design for Oracle RAC Database

Table 8 below shows the thin volumes we created in the PowerFlex block-based storage for the Oracle RAC Database.

Table 8. Oracle RAC Database volumes created in PowerFlex block-based storage (SDS)

| (Example) Volume Name | Type | Size | Compressed |
|---|---|---|---|
| OAS-RAC-VM-OS | Thin | 512 GB | No |
| OAS-RAC-OCR1 | Thin | 48 GB | No |
| OAS-RAC-OCR2 | Thin | 48 GB | No |
| OAS-RAC-OCR3 | Thin | 48 GB | No |
| OAS-RAC-GIMR | Thin | 48 GB | No |
| OAS-RAC-DATA1 | Thin | 512 GB | No |
| OAS-RAC-DATA2 | Thin | 512 GB | No |
| OAS-RAC-DATA3 | Thin | 512 GB | No |
| OAS-RAC-DATA4 | Thin | 512 GB | No |
| OAS-RAC-FRA | Thin | 192 GB | No |
| OAS-RAC-TEMP | Thin | 512 GB | No |
| OAS-RAC-REDO1-1 | Thin | 56 GB | No |
| OAS-RAC-REDO1-2 | Thin | 56 GB | No |
| OAS-RAC-REDO2-1 | Thin | 56 GB | No |
| OAS-RAC-REDO2-2 | Thin | 56 GB | No |
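The following sketch totals the provisioned capacity in Table 8 by volume role. Because the volumes are thin, the result is logical capacity; PowerFlex consumes physical capacity only as data is written. The role is derived from the example volume names, so adjust the parsing if your naming convention differs.

```python
"""Sketch: total the thin-provisioned RAC volume capacity in Table 8 by role."""
from collections import defaultdict

# Example volume names and sizes in GB from Table 8 (all thin, uncompressed).
VOLUMES_GB = {
    "OAS-RAC-VM-OS": 512,
    "OAS-RAC-OCR1": 48,
    "OAS-RAC-OCR2": 48,
    "OAS-RAC-OCR3": 48,
    "OAS-RAC-GIMR": 48,
    "OAS-RAC-DATA1": 512,
    "OAS-RAC-DATA2": 512,
    "OAS-RAC-DATA3": 512,
    "OAS-RAC-DATA4": 512,
    "OAS-RAC-FRA": 192,
    "OAS-RAC-TEMP": 512,
    "OAS-RAC-REDO1-1": 56,
    "OAS-RAC-REDO1-2": 56,
    "OAS-RAC-REDO2-1": 56,
    "OAS-RAC-REDO2-2": 56,
}


def role(volume: str) -> str:
    """Derive a role from an example name, e.g. 'OAS-RAC-REDO1-2' -> 'REDO'."""
    suffix = volume.removeprefix("OAS-RAC-")
    # Strip trailing instance numbers and dashes; 'VM-OS' is left unchanged.
    return suffix.rstrip("0123456789-")


def totals_by_role(volumes: dict[str, int]) -> dict[str, int]:
    totals: dict[str, int] = defaultdict(int)
    for name, size_gb in volumes.items():
        totals[role(name)] += size_gb
    return dict(totals)


if __name__ == "__main__":
    for r, gb in totals_by_role(VOLUMES_GB).items():
        print(f"{r:6} {gb:5} GB")
    print(f"total  {sum(VOLUMES_GB.values()):5} GB provisioned")
```

In total, 3,680 GB is provisioned across the 15 volumes, of which 2,048 GB is for data files. The 512 GB OS volume holds one 256 GB virtual disk for each of the two RAC VMs.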

Each PowerFlex block-based volume was mapped to, and made visible on, both ESXi-based database hosts, DBHOST1 and DBHOST2. In vCenter, a VMFS 6 datastore (for example, FlexDS-OAS-RAC-VM-OS) was created on top of the OAS-RAC-VM-OS PowerFlex volume. In the settings of each RAC VM (OAS-RAC-VM1 and OAS-RAC-VM2), a 256 GB virtual disk was created on the FlexDS-OAS-RAC-VM-OS datastore, and the OL 8 guest OS was installed on it. All the other PowerFlex volumes for the Oracle RAC Database were mapped directly to the first RAC VM (OAS-RAC-VM1) as Raw Device Mappings (RDMs), or raw disks. Next, the same RDM disks were added to the second RAC VM (OAS-RAC-VM2) as existing hard disks. The sharing property of each Oracle Database disk is set to the 'Multi-writer' option. This option disables the default protection that lets only one VM open a disk for writing, so both RAC VMs can write to the shared raw disks concurrently. Oracle RAC itself coordinates that concurrent access to prevent data corruption.

Note: PowerFlex 4.0 does not support Clustered VMFS Datastores. Hence, the Oracle Database disks need to be attached as RDM or raw devices to the RAC VMs.
