
Security considerations


Selecting a training model

Select a model from the catalog that fits your platform and business needs. Training processes can be GPU-intensive, so it is important to select a model that fits your hardware specifications.

When you open the model card for a trainable model, you see two cards: Train and Deploy. Training the model consists of three tasks: loading the model, training it on the selected data, and then redeploying the newly trained model.

Perform the following steps:

- Select the Train card at the top of the page.

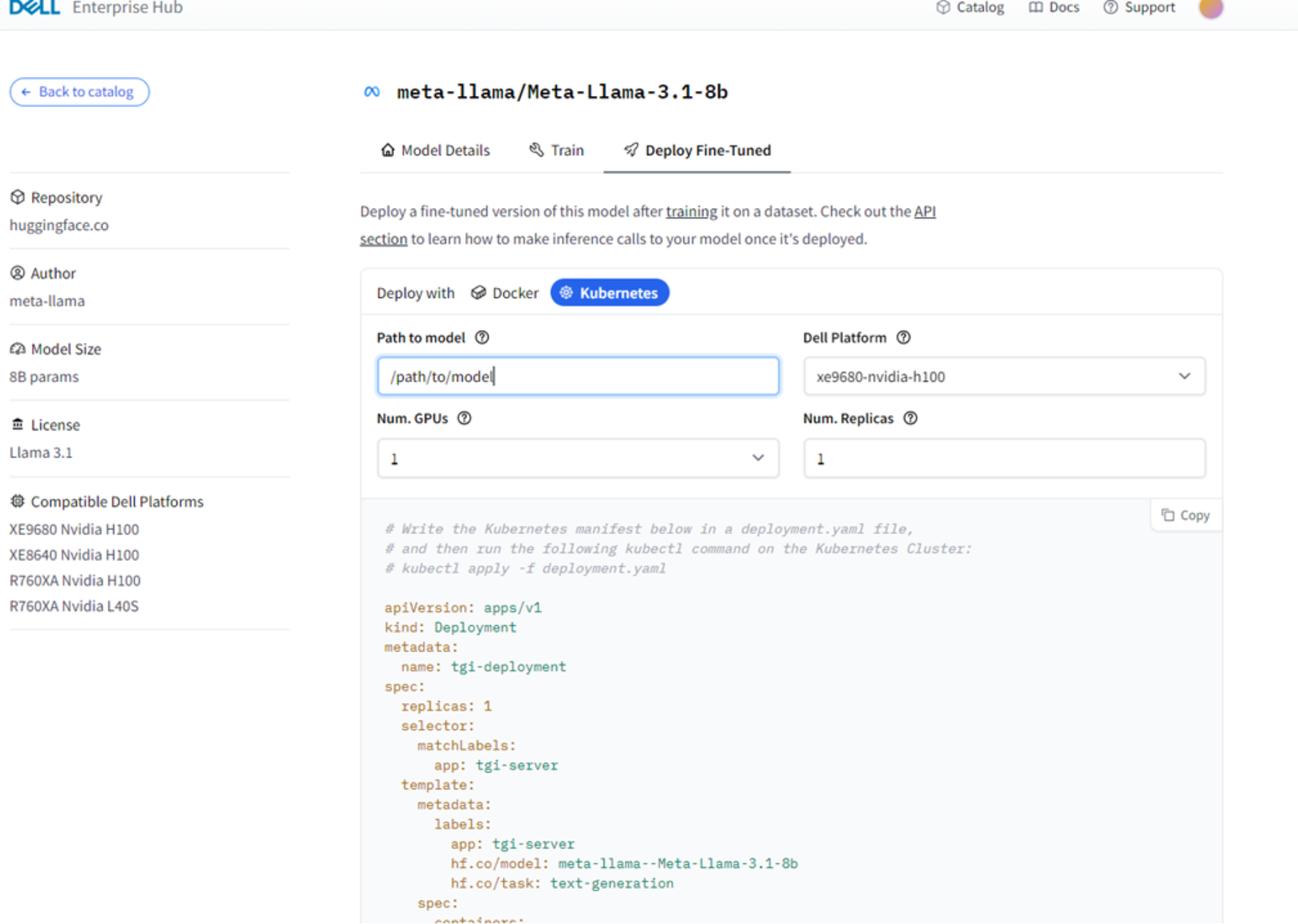

A model card opens that is similar to the sample card shown in the following figure:

Figure 4. Model card example on the Train tab with Kubernetes selected

- On the Train tab, provide the following information:

  - Dell Platform

  - Number of GPUs

  - Path to dataset: The path on your system to the dataset that you want to use to train the model.

  - Path to target directory: The path where the fine-tuned model is saved and stored. From there, you can use the Kubernetes or Docker code to deploy the fine-tuned model to your machine.

- To ensure that your training data is compatible with the AutoTrain tool, map the columns of your data so that AutoTrain knows the purpose of each column.

Column mapping links each input to its corresponding output so that the model knows how to process the input data and what to expect from the output. For more information, see Understanding Column Mapping. All files must be in CSV format, but the columns that each dataset requires depend on your use case. Depending on the trainer, the data must contain the following named columns:

- SFT/generic training: A single column named text and containing all the data.

- Reward trainer: Two columns: text and rejected_text.

- DPO/ORPO trainer: Three columns: prompt, text, and rejected_text.
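As an illustration of these layouts, the following minimal Python sketch writes a small DPO/ORPO-style CSV file using only the standard library. The example rows, the helper name `write_dpo_csv`, and the underscore spelling `rejected_text` for the rejected-text column are assumptions made for this sketch; confirm the exact column names that your trainer expects.

```python
import csv
import io

# Assumed column layout for a DPO/ORPO dataset:
# the prompt, the preferred answer (text), and the rejected answer.
DPO_COLUMNS = ["prompt", "text", "rejected_text"]

# Hypothetical example rows, for illustration only.
rows = [
    {
        "prompt": "What is 2 + 2?",
        "text": "2 + 2 equals 4.",
        "rejected_text": "2 + 2 equals 5.",
    },
    {
        "prompt": "Name a primary color.",
        "text": "Red is a primary color.",
        "rejected_text": "Brown is a primary color.",
    },
]


def write_dpo_csv(stream, records):
    """Write records to a text stream as CSV with a header row."""
    # QUOTE_ALL keeps commas inside prompts or answers from splitting a field.
    writer = csv.DictWriter(stream, fieldnames=DPO_COLUMNS, quoting=csv.QUOTE_ALL)
    writer.writeheader()
    writer.writerows(records)


buffer = io.StringIO()
write_dpo_csv(buffer, rows)
csv_text = buffer.getvalue()
print(csv_text)
```

To write to disk instead, open the target file with `open(path, "w", newline="")` and pass it as the stream. An SFT dataset follows the same pattern with the single column text.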

Regardless of which trainer you are using, you must ensure accurate mapping for correct training. To do this:

- Verify the column names.

- Ensure that the correct data format is used.

- Update mappings for new datasets as needed.
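These checks can be automated before a training run. The following is a minimal sketch, not part of AutoTrain itself: the function name `check_columns` is hypothetical, and the spelling `rejected_text` for the rejected-text column is an assumption that you should adjust to match your data.

```python
import csv
import io

# Required columns per trainer, following the list above.
# The spelling "rejected_text" is an assumption; change it to match your data.
REQUIRED_COLUMNS = {
    "sft": ["text"],
    "reward": ["text", "rejected_text"],
    "dpo": ["prompt", "text", "rejected_text"],
    "orpo": ["prompt", "text", "rejected_text"],
}


def check_columns(stream, trainer):
    """Return a list of problems found in a CSV text stream for a trainer."""
    required = REQUIRED_COLUMNS[trainer]
    reader = csv.DictReader(stream)
    header = reader.fieldnames or []

    # Step 1: verify the column names.
    problems = [f"missing column: {name}" for name in required if name not in header]
    if problems:
        return problems

    # Step 2: verify that every required field contains data.
    for row_number, row in enumerate(reader, start=1):
        for name in required:
            if not (row.get(name) or "").strip():
                problems.append(f"row {row_number}: empty value in '{name}'")
    return problems


# Example: a DPO dataset that lacks the rejected-text column.
sample = io.StringIO("prompt,text\nWhat is 2 + 2?,4\n")
print(check_columns(sample, "dpo"))
```

An empty list means that the file passed both checks. Run the check again whenever you switch to a new dataset or trainer.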

The following code snippet shows a sample Kubernetes Job manifest for training a model with the SFT trainer:

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: autotrain-dell-sft
spec:
  template:
    metadata:
      name: autotrain-dell
      labels:
        app: autotrain-dell
        hf.co/model: meta-llama-meta-llama-3.1-8b
    spec:
      nodeSelector:
        kubernetes.io/hostname: node032
        # nvidia.com/gpu.product: NVIDIA-L40S
      containers:
        - name: trl-container
          image: registry.dell.huggingface.co/enterprise-dell-training-meta-llama-meta-llama-3.1-8b
          args:
            - "--model=/app/model"
            - "--project-name=fine-tune"
            - "--data-path=/app/data"
            - "--text-column=text"
            - "--trainer=sft"
            - "--epochs=3"
            - "--mixed_precision=bf16"
            - "--batch-size=2"
            - "--peft"
            - "--quantization=int4"
            - "--merge_adapter"
          env:
            - name: ACCELERATE_LOG_LEVEL
              value: "INFO"
            - name: TRANSFORMERS_LOG_LEVEL
              value: "INFO"
            - name: TQDM_POSITION
              value: "-1"
          resources:
            requests:
              nvidia.com/gpu: 2
            limits:
              nvidia.com/gpu: 2
          volumeMounts:
            - mountPath: /dev/shm
              name: dshm
            - name: data-mount
              mountPath: /app/data
              readOnly: true
            - name: output-mount
              mountPath: /app/autotrain
              readOnly: false
      volumes:
        - name: dshm
          emptyDir:
            medium: Memory
            sizeLimit: 32Gi
        - name: data-mount
          nfs:
            server: f600-21.ai.lab
            path: /ifs/data/huggingface/phase-2/autotrain-example-datasets
        - name: output-mount
          nfs:
            server: f600-21.ai.lab
            path: /ifs/data/huggingface/phase-2/fine_tunned_model/llama-3.1-8b/760xa/2xL40S
      restartPolicy: "Never"
```
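Before submitting a manifest like this one, it can help to confirm that every volume mount refers to a declared volume and that any GPU request matches the GPU limit, because Kubernetes requires the two values to be equal when both are set. The following is a minimal Python sketch that assumes the manifest has already been loaded into a dictionary (for example, with a YAML parser). The helper name `check_job` and the abbreviated dictionary are illustrative only and are not part of Dell Enterprise Hub or Kubernetes.

```python
GPU_RESOURCE = "nvidia.com/gpu"


def check_job(manifest):
    """Return a list of consistency problems found in a Job manifest dict."""
    problems = []
    pod_spec = manifest["spec"]["template"]["spec"]
    declared = {volume["name"] for volume in pod_spec.get("volumes", [])}

    for container in pod_spec.get("containers", []):
        name = container["name"]

        # Every volumeMount must point at a volume declared in the pod spec.
        for mount in container.get("volumeMounts", []):
            if mount["name"] not in declared:
                problems.append(f"{name}: mount '{mount['name']}' has no matching volume")

        # If a GPU request is given, it must equal the GPU limit.
        resources = container.get("resources", {})
        requested = resources.get("requests", {}).get(GPU_RESOURCE)
        limit = resources.get("limits", {}).get(GPU_RESOURCE)
        if requested is not None and requested != limit:
            problems.append(f"{name}: GPU request {requested} does not match limit {limit}")

    return problems


# Abbreviated dictionary form of the sample manifest (illustrative only).
job = {
    "spec": {
        "template": {
            "spec": {
                "containers": [
                    {
                        "name": "trl-container",
                        "resources": {
                            "requests": {GPU_RESOURCE: 2},
                            "limits": {GPU_RESOURCE: 2},
                        },
                        "volumeMounts": [
                            {"name": "dshm", "mountPath": "/dev/shm"},
                            {"name": "data-mount", "mountPath": "/app/data"},
                            {"name": "output-mount", "mountPath": "/app/autotrain"},
                        ],
                    }
                ],
                "volumes": [
                    {"name": "dshm"},
                    {"name": "data-mount"},
                    {"name": "output-mount"},
                ],
            }
        }
    }
}

print(check_job(job))
```

An empty list indicates that the mounts and GPU counts are consistent. The check does not verify that the NFS paths exist or that the node has the requested GPUs.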

Because AutoTrain is embedded in the container, no additional configuration is required. When the model is deployed, it is immediately ready for training. For this example, the Dell AI Solutions team used the Alpaca dataset from Hugging Face.

- Ensure that your dataset is located at the path that you specified in the Path to dataset field.

The model begins training and reports summarized accuracy and precision results.

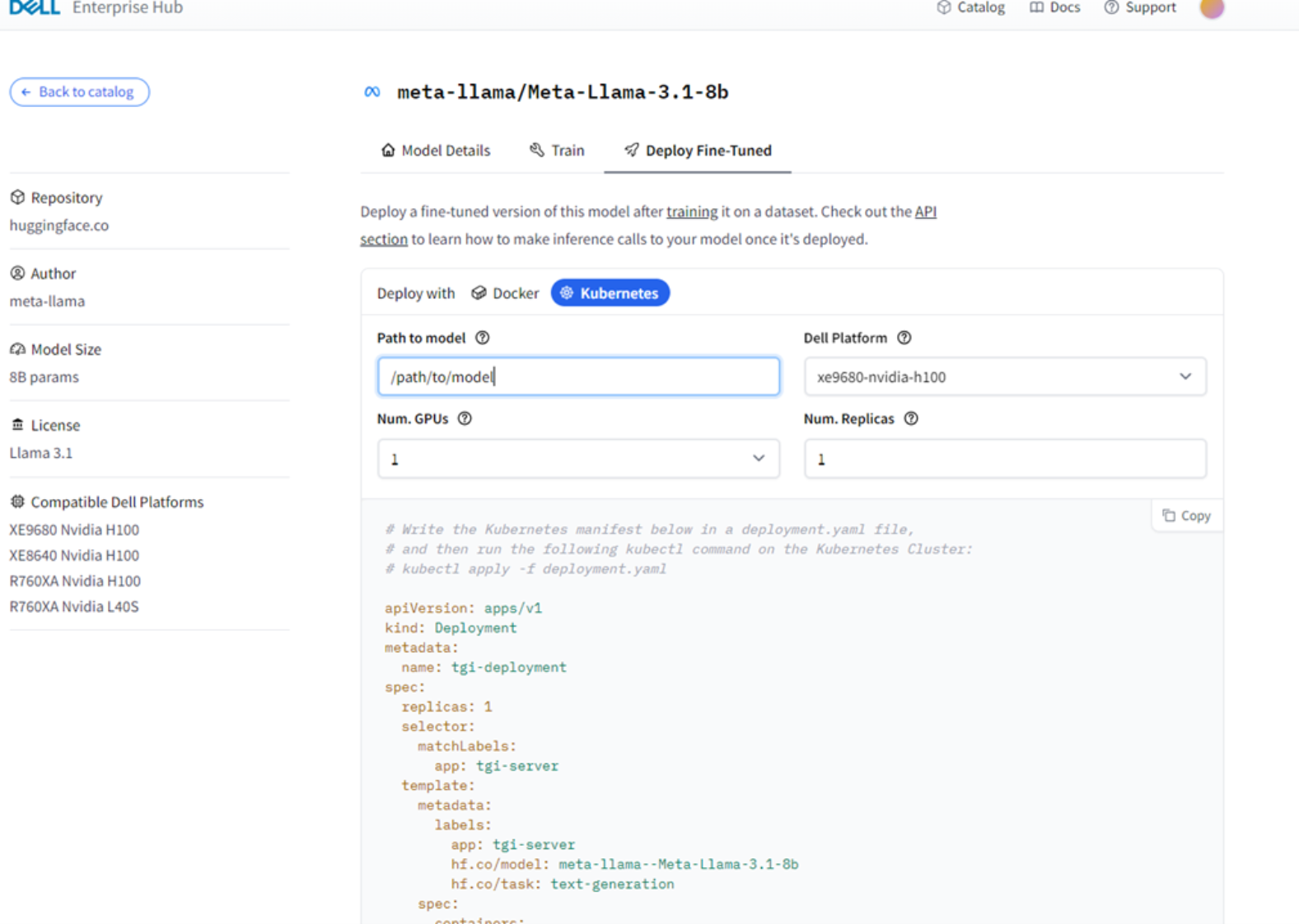

- Return to the model page and click Deploy Fine-Tuned at the top of the page.

Figure 5. Model card example with the Deploy Fine-Tuned tab and Kubernetes selected

- Verify that you have provided the correct path to the trained model and hardware specifications.

- Deploy the fine-tuned model with Kubernetes or Docker to begin using this model.