
Concurrency


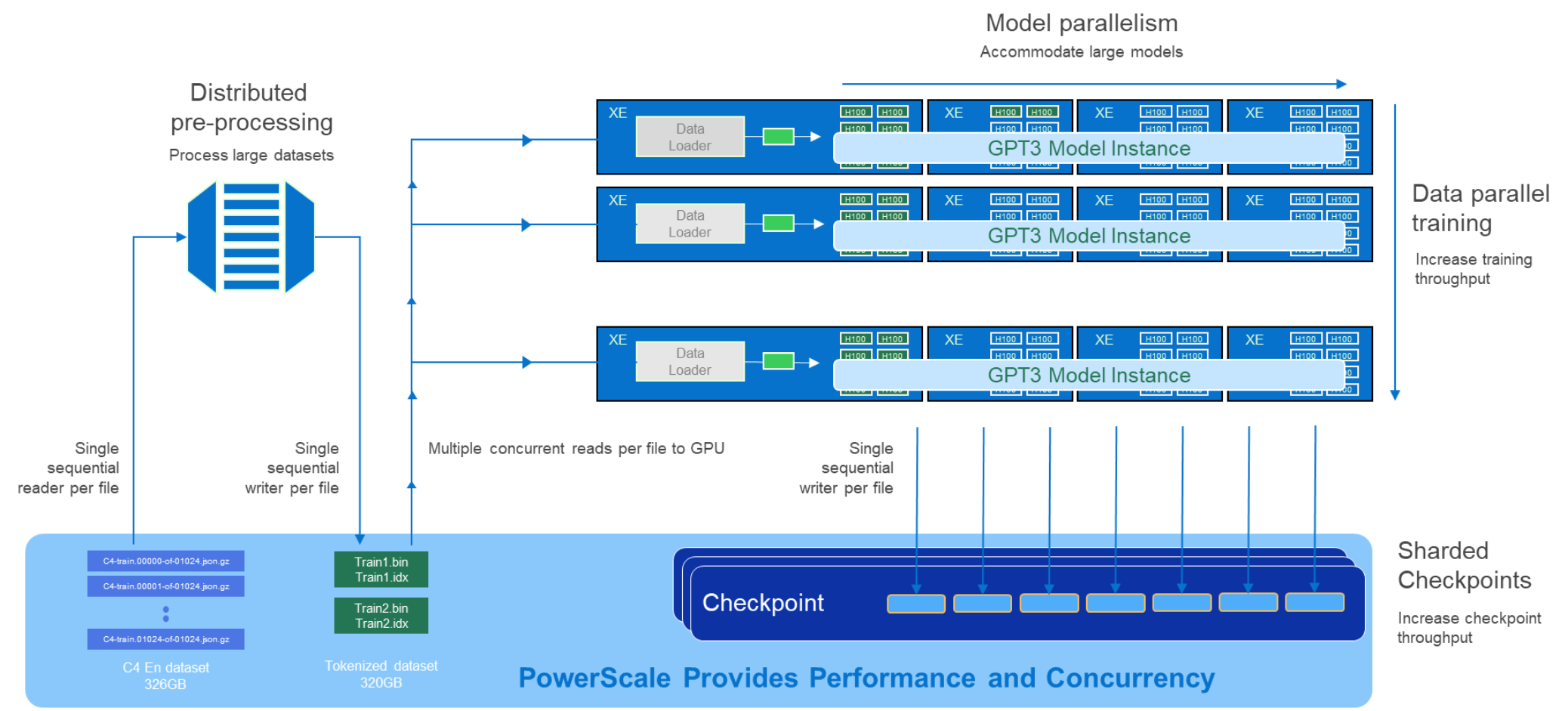

The AI development lifecycle spans data preparation, training, evaluation, retraining, and production inferencing. Every phase of that lifecycle depends on concurrent I/O. During training and retraining, GPUs read data from storage and write checkpoints back to it. In large GPU clusters, this translates into hundreds, and sometimes thousands, of simultaneous reads and writes against the storage system. To meet this level of demand, the storage platform must handle both sustained concurrency and transient concurrency peaks without becoming a bottleneck.
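To make this access pattern concrete, the following is a minimal Python sketch, not a benchmark and not a Dell-provided tool. It assumes a local temporary directory stands in for a shared file system mount, and the rank count, file names, and shard size are hypothetical. Each "rank" (one per GPU) reads its own data shard at the same time as every other rank, then all ranks write checkpoint files concurrently.

```python
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor


def read_shard(path: str) -> int:
    # Read one training shard, as a data-loader worker on one GPU would.
    with open(path, "rb") as f:
        return len(f.read())


def write_checkpoint(directory: str, rank: int, payload: bytes) -> str:
    # Each rank writes its own checkpoint file (hypothetical naming scheme).
    path = os.path.join(directory, f"ckpt_rank{rank:04d}.bin")
    with open(path, "wb") as f:
        f.write(payload)
    return path


def simulate_step(root: str, num_ranks: int, shard_bytes: int = 1024):
    # Assumption: `root` stands in for a shared storage mount; here it is local.
    data_dir = os.path.join(root, "data")
    ckpt_dir = os.path.join(root, "checkpoints")
    os.makedirs(data_dir, exist_ok=True)
    os.makedirs(ckpt_dir, exist_ok=True)

    # Prepare one synthetic shard per rank.
    shards = []
    for rank in range(num_ranks):
        path = os.path.join(data_dir, f"shard{rank:04d}.bin")
        with open(path, "wb") as f:
            f.write(os.urandom(shard_bytes))
        shards.append(path)

    with ThreadPoolExecutor(max_workers=num_ranks) as pool:
        # Phase 1: all ranks read their shards concurrently.
        bytes_read = sum(pool.map(read_shard, shards))
        # Phase 2: all ranks write their checkpoints concurrently.
        payload = b"\0" * shard_bytes
        ckpts = list(
            pool.map(lambda r: write_checkpoint(ckpt_dir, r, payload), range(num_ranks))
        )
    return bytes_read, ckpts


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        total, ckpts = simulate_step(root, num_ranks=64)
        print(total, len(ckpts))  # 65536 64
```

Scaling `num_ranks` from 64 into the thousands mirrors how cluster size multiplies the number of simultaneous requests the storage system must serve during both phases.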

Dell PowerScale was originally designed and optimized for industries whose workloads require extreme read and write concurrency. That market experience positions PowerScale well to meet the needs of the emerging AI infrastructure market.

Figure 3. Architecture of workload characteristics