
Storage


Dell Technologies offers a wide range of general-purpose and HPC storage solutions. For an overview of the Dell Technologies HPC solution portfolio, visit https://delltechnologies.com/hpc. HPC environments typically use three tiers of storage (scratch, operational, and archival), which differ in size, performance, and persistence.

Scratch storage tends to persist only for the duration of a single simulation. It may be used to hold temporary data that does not fit in the compute system’s main memory. HPC applications may be considered “I/O bound” if access to storage impedes the progress of the simulation. For these I/O-bound workloads, the most cost-effective solution is typically to provide sufficient direct-attached local storage on the compute nodes.

Where the application may require a shared file system across the compute cluster, a high-performance shared file system may be better suited than local direct-attached storage. Otherwise, direct-attached local storage typically offers the best overall price/performance and is considered best practice for most computer-aided engineering (CAE) simulations. For this reason, the recommended configurations include local storage with performance and capacity appropriate for a wide range of production workloads. If anticipated workload requirements exceed the performance or capacity of the recommended local storage configurations, scratch storage should be sized carefully based on the workload.

Operational storage is typically defined as storage used to retain project results and other data, such as home directories, that users access daily over an extended period. This data usually consists of simulation input and results files. Access to it consists largely of sequential transfers from scratch storage and of users analyzing the data, often remotely. Because this data persists for an extended period, some or all of it may be backed up at regular intervals, with the interval chosen by weighing the cost of archiving the data against the cost of regenerating it if needed.

Archival data is assumed to persist for the very long term, and its integrity is considered critical. For many modestly sized HPC systems, using the existing enterprise archival storage may make the most sense, because archival storage performance tends not to impede HPC activities. Our experience working with customers indicates that there is no ‘one size fits all’ solution for operational and archival storage. Many customers rely on their corporate enterprise storage for archival purposes and deploy a high-performance operational storage system dedicated to the HPC environment.

Operational storage is typically sized based on the number of expected users. For fewer than 30 users, a single NFS storage server, such as the Dell EMC PowerEdge R740xd, is often an appropriate choice. A suitably equipped storage server might include:

- Dell EMC PowerEdge R740xd server

- Dual Intel® Xeon® Silver 4210 processors

- 96 GB of memory (12 x 8 GB 2666 MT/s DIMMs)

- PERC H740P RAID controller

- 2 x 480 GB mixed-use SATA SSDs in RAID-1 (for OS)

- 12 x 12 TB 3.5-inch NL-SAS HDDs in RAID-6 (for data)

- Dell EMC iDRAC9 Express

- 2 x 750 W power supply units (PSUs)

- ConnectX-6 HDR100 InfiniBand Adapter

- Site-specific high-speed Ethernet adapter (optional)

This server configuration provides 144 TB of raw storage. For customers expecting 25 to 100 users, an operational storage solution such as the Dell EMC PowerScale A200 scale-out NAS may be appropriate.
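The 144 TB figure is raw capacity. Because the data drives are in RAID-6, two drives' worth of capacity goes to parity, so usable space is lower. The following is a minimal back-of-the-envelope sketch, assuming all 12 data drives form a single RAID-6 group and ignoring file system overhead. The function names are hypothetical helpers for illustration, not part of any Dell tool.

```python
# Hypothetical capacity helpers for the example NFS server above.
# Assumption: one RAID-6 group spans all data drives; file system overhead is ignored.

def raw_capacity_tb(num_drives: int, drive_tb: float) -> float:
    """Total raw capacity in decimal TB, before any RAID overhead."""
    return num_drives * drive_tb


def raid6_usable_tb(num_drives: int, drive_tb: float) -> float:
    """Usable capacity of one RAID-6 group: two drives' worth is used for parity."""
    if num_drives < 4:
        raise ValueError("RAID-6 requires at least 4 drives")
    return (num_drives - 2) * drive_tb


def tb_to_tib(tb: float) -> float:
    """Convert decimal terabytes (10**12 bytes) to binary tebibytes (2**40 bytes)."""
    return tb * 10**12 / 2**40


if __name__ == "__main__":
    drives, size_tb = 12, 12.0  # 12 x 12 TB NL-SAS HDDs from the configuration list
    raw = raw_capacity_tb(drives, size_tb)
    usable = raid6_usable_tb(drives, size_tb)
    print(f"raw: {raw:.0f} TB, RAID-6 usable: {usable:.0f} TB "
          f"(~{tb_to_tib(usable):.1f} TiB as reported by most operating systems)")
```

For this configuration the sketch gives 144 TB raw and 120 TB usable before file system overhead; the TiB conversion helps explain why the operating system reports a smaller number than the drive labels suggest.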

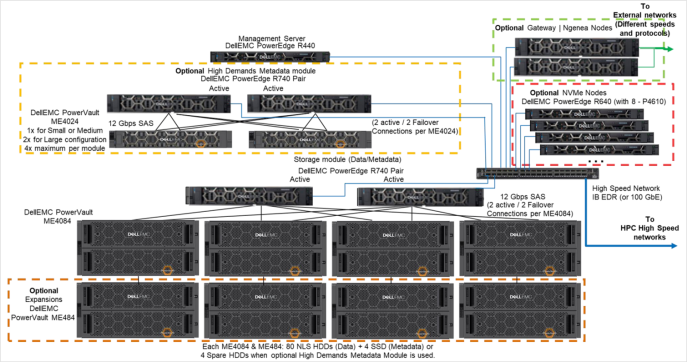

For customers who need a shared high-performance parallel file system, the Validated Design for HPC PixStor Storage solution shown in Figure 2 is appropriate. This solution can scale to multiple petabytes of storage.

Figure 2. Validated Design for PixStor Storage
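The sizing guidance in this section can be summarized as a small decision helper. This is a hypothetical sketch that only restates the thresholds given in the text. Because the stated ranges overlap (fewer than 30 users for a single NFS server, 25 to 100 users for PowerScale A200), 25 to 29 users yields both options. Above 100 users the text gives no user-count recommendation, so the helper returns nothing unless a parallel file system is requested.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageOption:
    name: str
    rationale: str


def candidate_operational_storage(num_users: int,
                                  needs_parallel_fs: bool = False) -> list[StorageOption]:
    """Hypothetical helper: list the options this section suggests considering.

    Thresholds mirror the text and are guidelines, not hard limits.
    An empty list means the guidance does not cover the case and the
    storage should be sized for the specific workload.
    """
    if num_users < 1:
        raise ValueError("num_users must be positive")
    options: list[StorageOption] = []
    if needs_parallel_fs:
        options.append(StorageOption("Validated Design for HPC PixStor Storage",
                                     "shared high-performance parallel file system"))
    if num_users < 30:
        options.append(StorageOption("Single NFS server (e.g. PowerEdge R740xd)",
                                     "fewer than 30 users"))
    if 25 <= num_users <= 100:
        options.append(StorageOption("PowerScale A200 scale-out NAS",
                                     "25 to 100 users"))
    return options


if __name__ == "__main__":
    for users in (10, 27, 60, 200):
        names = [opt.name for opt in candidate_operational_storage(users)]
        print(users, names or ["size for the specific workload"])
```

Returning a list rather than a single answer keeps the overlap in the original guidance visible instead of silently picking one option.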