IIoT and the edge

A concrete definition of the edge is elusive given the complex landscape of IoT environments. Rigid definitions of IoT that imply a universal architecture built from technologies like digital sensors, networks, and AI are unhelpful because they hinder the analysis of the alternative system architectures needed for unique use cases like Industrial IoT (IIoT). (Boyes, H., Hallaq, B., Cunningham, J., & Watson, T. (2018). The industrial internet of things (IIoT): An analysis framework. Computers in Industry, 101, 1-12.) The only point of universal agreement is that when the things are stationary, they define the outer boundary of the edge.

For IIoT, the edge was initially defined as a band of technology that includes sensors and controllers along with some gateways, switches, and even routers for data collection and communication. More recently, edge devices have also come to be associated with some types of data processing technology used for analytics. How broad this new band is, and what it includes, is up to each group of architects designing systems.

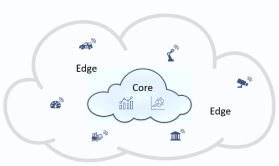

For most use cases, it is advantageous to explicitly define the boundaries of the edge. That definition helps clarify the connections needed to reach other layers such as a core or cloud data center. The IIoT use case in this document, using the Confluent Platform, uses two zones: the edge and the core.

This document shows how data that is generated at the edge flows to the core, and how data models developed in the core flow back to the edge. Anyone designing cyber-physical systems for IIoT applications should be wary of jumping on the "all analytics are moving to the edge" bandwagon too quickly. There are many good reasons to design for the smallest possible edge footprint, especially for organizations that have many geographically dispersed manufacturing facilities. The use case described here shows how applying sophisticated data filtering and analytics close to the data sources can have significant operational benefits.

Dell EMC also designed this solution to take advantage of centralized investment in corporate IT data centers and data science personnel. This design follows a pattern of keeping the edge thin by concentrating big data analytics technology and skilled staff in a central core data center. (Sahal, R., Breslin, J. G., & Ali, M. I. (2020). Big data and stream processing platforms for Industry 4.0 requirements mapping for a predictive maintenance use case. Journal of Manufacturing Systems, 54, 138-151. https://doi.org/10.1016/J.JMSY.2019.11.004)

This use case demonstrates hosting an anomaly detection model that runs inference against near real-time data at the edge. Data for model training was moved from the edge to the core, where a data science team used it for model development. New versions of the fully trained model are then moved back to the edge as needed. This procedure involved:

- Selecting and routing data from the edge to the core for training.

- Designing a method for moving the trained model artifact back to the edge.

The next two chapters describe how this procedure was accomplished.
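As a rough illustration of the edge-to-core-to-edge loop described above, the following Python sketch models the two steps in memory. It is not the Confluent Platform implementation used in this solution: every name in it (`Reading`, `ModelArtifact`, `select_for_core`, `train_in_core`, `EdgeNode`) is hypothetical, and the "model" is a simple mean-plus-three-standard-deviations threshold standing in for a real trained anomaly detection model.

```python
import statistics
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass(frozen=True)
class Reading:
    """One sensor measurement produced at the edge (hypothetical shape)."""
    sensor_id: str
    value: float


@dataclass(frozen=True)
class ModelArtifact:
    """Stand-in for a trained model: a version number and one threshold."""
    version: int
    threshold: float


def select_for_core(readings: Iterable[Reading], wanted: Set[str]) -> List[Reading]:
    """Step 1: select only the readings worth routing to the core."""
    return [r for r in readings if r.sensor_id in wanted]


def train_in_core(training_data: List[Reading], version: int) -> ModelArtifact:
    """Core side: placeholder training (mean + 3 population std deviations)."""
    values = [r.value for r in training_data]
    threshold = statistics.fmean(values) + 3 * statistics.pstdev(values)
    return ModelArtifact(version=version, threshold=threshold)


class EdgeNode:
    """Edge side: holds the current model and scores incoming readings."""

    def __init__(self) -> None:
        self.model: Optional[ModelArtifact] = None

    def deploy(self, artifact: ModelArtifact) -> bool:
        """Step 2: accept a model artifact only if it is newer than the current one."""
        if self.model is None or artifact.version > self.model.version:
            self.model = artifact
            return True
        return False

    def is_anomaly(self, reading: Reading) -> bool:
        """Score one reading against the deployed model."""
        if self.model is None:
            raise RuntimeError("no model has been deployed to this edge node")
        return reading.value > self.model.threshold


if __name__ == "__main__":
    edge_data = [
        Reading("press-1", 9.0),
        Reading("temp-9", 70.0),   # not selected for training in this sketch
        Reading("press-1", 10.0),
        Reading("press-1", 11.0),
    ]
    training_set = select_for_core(edge_data, {"press-1"})
    node = EdgeNode()
    node.deploy(train_in_core(training_set, version=1))
    print(node.is_anomaly(Reading("press-1", 13.0)))
```

The version check in `deploy` reflects only the idea that new model versions are moved to the edge as needed; how artifacts are actually transported and versioned in this solution is covered in the following chapters.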