Hardware infrastructure

This section describes the Dell EMC recommended server and network configurations for Confluent Platform. Topics include:

- Edge configurations

- Core configurations

- Network configurations

The Dell EMC PowerEdge XE2420 Edge Server can be used as an alternative to the Dell EMC PowerEdge R640. The Dell EMC PowerEdge XE2420 provides durability, speed, storage capacity, management capability, and serviceability for industrial environments. Contact your Dell EMC sales representative for more information.

Edge configurations

Edge configurations are run on bare metal in each edge or factory location. The configuration consists of a minimum of four machines, and supports an entire edge facility. The cluster design can be scaled up for extra capacity.

Control Center Node

The Control Center Node is used to host Confluent Control Center. The Dell EMC recommended hardware configuration is listed in the following table.

Table 3: Control Center Node configuration

| Machine function | Component |
|---|---|
| Platform | Dell EMC PowerEdge R640 |
| Chassis | 2.5" chassis with up to eight hard drives and three PCIe slots |
| Processor | Intel Xeon Gold 5218 2.3 GHz, 16C/32T, 10.4 GT/s, 22 MB cache, Turbo, HT (125 W), DDR4-2666 |
| RAM | Six 8 GB RDIMM, 2666 MT/s, single rank |
| Network daughter card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, rNDC |
| Boot configuration | From RAID controller |
| Storage controller | Dell EMC PERC H330 RAID controller, mini card |
| Disk - SSD | Two 480 GB SSD SATA mixed use 6 Gbps 512 2.5" hot-plug AG drive, 3 DWPD, 2628 TBW |
| Drive configuration | C7, unconfigured RAID for HDDs or SSDs (mixed drive types allowed) |

Platform Node

The Platform Node configuration is used for multiple roles, including Kafka Connect, the Confluent Schema Registry, and the Confluent RESTful API Proxy. The Dell EMC recommended configuration is listed in the following table.

Table 4: Platform Node configuration

| Machine function | Component |
|---|---|
| Platform | Dell EMC PowerEdge R640 |
| Chassis | 2.5" chassis with up to eight hard drives and three PCIe slots |
| Processor | Intel Xeon Gold 6226 2.7 GHz, 12C/24T, 10.4 GT/s, 19.25 MB cache, Turbo, HT (125 W), DDR4-2933 |
| RAM | Six 8 GB RDIMM, 2666 MT/s, single rank |
| Network daughter card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, rNDC |
| Boot configuration | From SSD |
| Storage controller | Dell EMC PERC H330 RAID controller, mini card |
| Disk - SSD | Two 240 GB SSD SATA mixed use 6 Gbps 512e 2.5" hot-plug S4610 drive |
| Drive configuration | C7, unconfigured RAID for HDDs or SSDs (mixed drive types allowed) |

KSQL Node

The KSQL Node is used to host Confluent KSQL. The Dell EMC recommended configuration is listed in the following table.

Table 5: KSQL Node configuration

| Machine function | Component |
|---|---|
| Platform | Dell EMC PowerEdge R640 |
| Chassis | 2.5" chassis with up to eight hard drives and three PCIe slots |
| Processor | One Intel Xeon Gold 6226 2.7 GHz, 12C/24T, 10.4 GT/s, 19.25 MB cache, Turbo, HT (125 W), DDR4-2933 |
| RAM | Six 8 GB RDIMM, 2666 MT/s, single rank |
| Network daughter card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, rNDC |
| Boot configuration | From RAID controller |
| Storage controller | Dell EMC PERC H330 RAID controller, mini card |
| Disk - SSD | Two 480 GB SSD SATA mixed use 6 Gbps 512 2.5" hot-plug AG drive, 3 DWPD, 2628 TBW |
| Drive configuration | C7, unconfigured RAID for HDDs or SSDs (mixed drive types allowed) |

Broker Node

The Broker Node is used to host the Kafka Broker, and provides a balance of compute and storage. The Dell EMC recommended configuration is listed in the following table.

Table 6: Broker Node configuration

| Machine function | Component |
|---|---|
| Platform | Dell EMC PowerEdge R640 |
| Chassis | 2.5" chassis with up to 10 hard drives, two 2.5" SATA/SAS drives and one PCIe slot, two CPUs only |
| Processor | Two Intel Xeon Gold 5218 2.3 GHz, 16C/32T, 10.4 GT/s, 22 MB cache, Turbo, HT (125 W), DDR4-2666 |
| RAM | Twelve 8 GB RDIMM, 2666 MT/s, single rank |
| Network daughter card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, rNDC |
| Boot configuration | From BOSS controller |
| Storage controller | Dell EMC PERC H740P RAID controller, 8 GB NV cache, mini card |
| Boot optimized storage cards | BOSS controller card with two 240 GB M.2 sticks (RAID 1), LP |

Note: The Kafka partitions should use a reliable storage layer: RAID 5, RAID 6, or RAID 10.

Dell EMC recommends RAID 6 for the best performance and reliability with the Dell EMC PERC H740P storage controller. RAID 5 provides slightly larger storage capacity but lower redundancy. RAID 10 has lower performance than RAID 6 during normal I/O operations, and when running with a degraded RAID array.
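The capacity and redundancy trade-off between these RAID levels can be worked through with standard arithmetic. The following is a minimal sketch, not a sizing tool: the drive count and drive size are hypothetical values chosen for illustration (the Broker Node table does not list data drives), and the figures ignore controller and file system overhead.

```python
# Usable capacity and guaranteed fault tolerance for arrays of identical drives.
# Drive count and size below are hypothetical, for illustration only.

# Number of drive failures each level is guaranteed to survive.
# RAID 10 can survive more if the failures land in different mirror pairs.
GUARANTEED_FAILURES = {"RAID 5": 1, "RAID 6": 2, "RAID 10": 1}


def raid_usable_tb(level: str, drives: int, drive_tb: float) -> float:
    """Return usable capacity in TB, ignoring formatting overhead."""
    if level == "RAID 5":
        if drives < 3:
            raise ValueError("RAID 5 needs at least three drives")
        return (drives - 1) * drive_tb  # one drive's worth of parity
    if level == "RAID 6":
        if drives < 4:
            raise ValueError("RAID 6 needs at least four drives")
        return (drives - 2) * drive_tb  # two drives' worth of parity
    if level == "RAID 10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, four or more")
        return (drives // 2) * drive_tb  # every drive is mirrored
    raise ValueError(f"unsupported RAID level: {level}")


if __name__ == "__main__":
    for level in ("RAID 5", "RAID 6", "RAID 10"):
        usable = raid_usable_tb(level, drives=8, drive_tb=2.0)
        print(f"{level}: {usable:.1f} TB usable, "
              f"survives {GUARANTEED_FAILURES[level]} drive failure(s)")
```

With eight hypothetical 2 TB drives, RAID 5 gives up one drive of capacity to parity, RAID 6 gives up two, and RAID 10 gives up half the array.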

Core configurations

The core runs Confluent Platform on Red Hat OpenShift Container Platform, and is designed to scale to support multiple edge facilities. These configurations provide a solid base for a modern analytics environment. The OpenShift environment is large enough to support additional machine learning and MLOps capabilities. The OpenShift cluster is based on the Dell EMC Ready Stack for Red Hat OpenShift Container Platform 4.3.

The minimum OpenShift deployment consists of seven nodes:

- One CSAH Node

- Three Master Nodes

- Three Worker Nodes

Table 7 describes the node types.

CSAH Node

The CSAH Node is used to manage the operation and installation of the container ecosystem cluster. It runs the infrastructure services required for the OpenShift platform, including HAProxy, DNS, DHCP, TFTP, a web server, and a PXE server.

Configuration details are described in Table 8.

Master Node

Master Nodes provide an application programming interface (API) for overall resource management. These nodes run etcd, the API server, and the Controller Manager Server.

Configuration details are described in Table 8.

Worker Node

Worker Nodes are where the application containers are deployed. A minimum of two Worker Nodes must always be operating.

There are two alternative configurations:

- Dell EMC PowerEdge R640 (Table 9)

- Dell EMC PowerEdge R740xd (Table 10)

Table 7: OpenShift node descriptions

| Type | Description | Count | Notes |
|---|---|---|---|
| CSAH Node | Dell EMC PowerEdge R640 server | One | Runs the infrastructure services required for the OpenShift platform including HAProxy, DNS, DHCP, TFTP, web server, and PXE server. |
| Master Nodes | Dell EMC PowerEdge R640 server | Three | Run the OpenShift control plane services. |
| Worker Nodes | Dell EMC PowerEdge R640 or Dell EMC PowerEdge R740xd server | Three or more | Run the OpenShift container services. |
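The node counts in Table 7 can be checked against a planned inventory before deployment. The sketch below is illustrative only: the `shortfalls` helper and the example inventory are hypothetical, and the minimum counts are taken directly from Table 7.

```python
from typing import Dict

# Minimum node counts from Table 7.
MINIMUM_NODES: Dict[str, int] = {
    "CSAH Node": 1,
    "Master Nodes": 3,
    "Worker Nodes": 3,
}


def shortfalls(inventory: Dict[str, int]) -> Dict[str, int]:
    """Return how many nodes of each type are missing from the inventory."""
    missing = {}
    for role, minimum in MINIMUM_NODES.items():
        have = inventory.get(role, 0)
        if have < minimum:
            missing[role] = minimum - have
    return missing


if __name__ == "__main__":
    print("Minimum deployment size:", sum(MINIMUM_NODES.values()))
    # Hypothetical plan that is one Worker Node short.
    plan = {"CSAH Node": 1, "Master Nodes": 3, "Worker Nodes": 2}
    print("Missing nodes:", shortfalls(plan))
```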

Table 8: OpenShift Dell EMC PowerEdge R640 CSAH Node/Master Nodes configuration

| Machine function | Component |
|---|---|
| Platform | Dell EMC PowerEdge R640 |
| Chassis | 2.5" chassis with up to 10 hard drives, eight NVMe drives, and three PCIe slots, two CPUs only |
| Processor | Two Intel Xeon Gold 6238R 2.2 GHz, 28C/56T |
| RAM | 192 GB RAM |
| Network daughter card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, rNDC |
| Boot configuration | From SSD |
| Storage controller | Dell EMC HBA330 controller, 12 Gbps mini card |
| Disk - NVMe | One to eight Dell 1.6 TB, NVMe, mixed use Express Flash, 2.5" SFF drive, U.2, P4610 with carrier |
| Disk - SSD | Two 960 GB SSD SAS read intensive 12 Gbps |
| Drive configuration | RAID 1, JBOD |

Table 9: OpenShift Dell EMC PowerEdge R640 Worker Nodes configuration

| Machine function | Component |
|---|---|
| Platform | Dell EMC PowerEdge R640 |
| Chassis | 2.5" chassis with up to 10 hard drives, eight NVMe drives, and three PCIe slots, two CPUs only |
| Processor | Two Intel Xeon Gold 6238R 2.2 GHz, 28C/56T |
| RAM | 192 GB RAM |
| Network daughter card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, rNDC |
| Boot configuration | From SSD |
| Storage controller | Dell EMC HBA330 controller, 12 Gbps mini card |
| Disk - NVMe | One to eight Dell 1.6 TB, NVMe, mixed use Express Flash, 2.5" SFF drive, U.2, P4610 with carrier |
| Disk - SSD | Two 960 GB SSD SAS read intensive 12 Gbps |
| Drive configuration | RAID 1, JBOD |

Table 10: OpenShift Dell EMC PowerEdge R740xd Worker Nodes configuration

| Machine function | Component |
|---|---|
| Platform | Dell EMC PowerEdge R740xd |
| Chassis | Chassis with up to 24 2.5" hard drives, including a maximum of 12 NVMe drives |
| Processor | Two Intel Xeon Gold 6252 2.1 GHz, 24C/48T |
| RAM | 192 GB RAM |
| Network daughter card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, rNDC |
| Additional network card | Mellanox ConnectX-4 LX dual port 10/25 GbE SFP28, network adapter |
| Boot configuration | From SSD |
| Storage controller | Dell EMC HBA330 controller, 12 Gbps mini card |
| Disk - NVMe | One to 12 Dell NVMe drives (1.6 TB, 3.2 TB, or 6.4 TB), mixed use Express Flash, 2.5" SFF drive, U.2, P4610 with carrier, CK |
| Disk - SSD | One to 24 SSD SAS drives (800 GB, 1.92 TB, or 3.84 TB), mixed use 12 Gbps 512e 2.5" hot-plug AG drive with carrier |
| Drive configuration | RAID 1, JBOD |

Network configurations

Edge Analytics for Industry 4.0 with Confluent Platform uses two networks: the Edge Cluster network for an edge or factory facility, and the Core Cluster network for the core cluster in a data center.

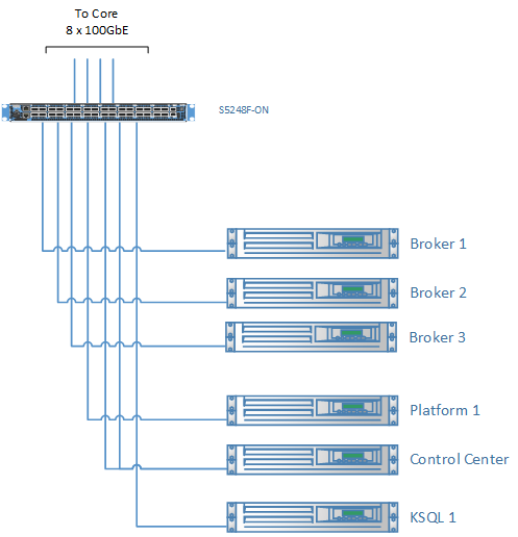

Edge Cluster network

Dell EMC recommends the Dell EMC PowerSwitch S5248F-ON as the rack level switch for deployments. It is a high-density 25/10/1 GbE form factor switch that provides 4.0 Tbps (full-duplex) nonblocking, cut-through switching fabric and delivers line-rate performance under full load.

Networking requirements vary based on the deployment scenario. For single-rack cluster deployments, a single switch is adequate and can support 40 nodes, with spare connections for integration. The following figure illustrates a typical single-rack scenario.

Figure 13: Single-rack Edge cluster network connections
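The single-rack sizing above comes down to a port budget. The following sketch shows the arithmetic under stated assumptions: it assumes the switch exposes 48 node-facing 25 GbE ports and that each node uses one of its two NIC ports on that switch. Neither figure is given in this guide, so check both against the switch specification and the actual cabling plan.

```python
def spare_ports(switch_ports: int, nodes: int, ports_per_node: int = 1) -> int:
    """Return the number of node-facing switch ports left after cabling all nodes."""
    used = nodes * ports_per_node
    if used > switch_ports:
        raise ValueError(
            f"{nodes} nodes x {ports_per_node} port(s) needs {used} ports, "
            f"but the switch has only {switch_ports}"
        )
    return switch_ports - used


if __name__ == "__main__":
    # Assumed values: 48 node-facing ports, one connection per node.
    print("Spare ports with 40 nodes:", spare_ports(48, 40))
    # Dual-connecting every node to the same switch halves the node capacity.
    print("Maximum dual-connected nodes:", 48 // 2)
```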

Multi-rack deployments are often integrated into existing network infrastructure. In multi-rack scenarios, nodes from each function should be distributed across racks to increase resiliency and avoid single points of failure. In some application scenarios, using the RESTful API and a load balancer using session affinity (sticky sessions) may be required. In other cases, IP rotation or simple load balancing are adequate.
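The difference between session affinity and simple load balancing can be sketched in a few lines. This is an illustration of the two policies, not a load balancer implementation: the back-end addresses and session identifiers are hypothetical, and a hardware load balancer would typically use cookies or source addresses instead of the hash shown here. Session affinity matters when a client holds state on one back end, for example a consumer instance created through the RESTful API, because later requests must reach the same instance.

```python
import hashlib
from itertools import cycle
from typing import Iterator, List

# Hypothetical REST Proxy instances spread across racks.
BACKENDS: List[str] = [
    "rest-proxy-rack1:8082",
    "rest-proxy-rack2:8082",
    "rest-proxy-rack3:8082",
]


def pick_sticky(session_id: str, backends: List[str]) -> str:
    """Session affinity: the same session always maps to the same back end."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


def round_robin(backends: List[str]) -> Iterator[str]:
    """Simple load balancing: rotate through the back ends in order."""
    return cycle(backends)


if __name__ == "__main__":
    # Repeated requests from one session land on one back end.
    print([pick_sticky("session-42", BACKENDS) for _ in range(3)])
    # Stateless requests are spread evenly.
    rotation = round_robin(BACKENDS)
    print([next(rotation) for _ in range(4)])
```

Note that the hash-based mapping changes for most sessions when the back-end list changes, which is one reason production load balancers use other affinity mechanisms.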

Dell EMC recommends the Dell EMC PowerSwitch S3148-ON for iDRAC or management network interfaces. See the following table.

Table 11: Network configurations

| Function | Recommended switch hardware |
|---|---|
| Top of rack or cluster | Dell EMC PowerSwitch S5248F-ON |
| Management | Dell EMC PowerSwitch S3148-ON |
| Load balancing | Hardware load balancer or IP rotation |

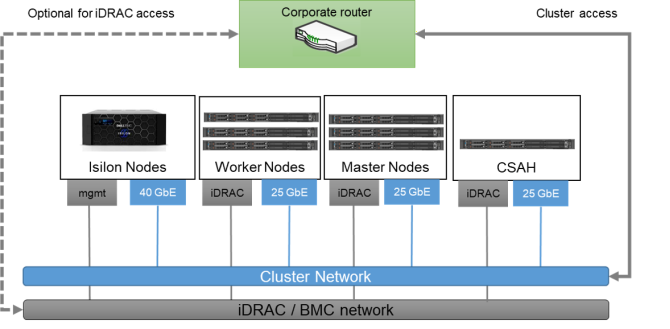

Core Cluster network

Dell EMC networking products are designed for ease of use and to enable resilient network creation. Red Hat OpenShift Container Platform 4.4.9 introduces advanced networking features that support high-performance container networking and monitoring. The Dell EMC recommended design applies the following principles:

- Meet the network capacity and segregation requirements of the container pod.

- Configure dual-homing of the OpenShift Platform Node to two Virtual Link Trunked (VLT) switches.

- Create a scalable and resilient network fabric to increase cluster size.

- Provide monitoring and tracing of container communications.

Container network capacity and segregation

Container networking takes advantage of the high speed (25/100 GbE) network interfaces of the Dell Technologies server portfolio. In addition, pods can attach to more networks using available Container Networking Interface (CNI) plug-ins to meet network capacity requirements.

Extra networks are useful when network traffic isolation is required. Networking applications such as Container Network Functions (CNFs) have control traffic and data traffic. These different traffic types have different processing, security, and performance characteristics.
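One common way to attach pods to an extra network is a `NetworkAttachmentDefinition` resource processed by the Multus CNI plug-in. This guide does not name a specific mechanism, so treat the following as a sketch under that assumption. The network name, host interface, and subnet are hypothetical, and the macvlan and host-local plug-in choices are only one possible combination.

```python
import json
from typing import Any, Dict


def network_attachment(name: str, master_interface: str, subnet: str) -> Dict[str, Any]:
    """Build a NetworkAttachmentDefinition manifest for a macvlan network."""
    cni_config = {
        "cniVersion": "0.3.1",
        "type": "macvlan",           # CNI plug-in that creates the interface
        "master": master_interface,  # host NIC port carrying the extra network
        "mode": "bridge",
        "ipam": {"type": "host-local", "subnet": subnet},
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name},
        # The CNI configuration is embedded as a JSON string.
        "spec": {"config": json.dumps(cni_config)},
    }


if __name__ == "__main__":
    # Hypothetical data-traffic network on a second NIC port.
    manifest = network_attachment("data-traffic", "ens2f1", "192.168.50.0/24")
    print(json.dumps(manifest, indent=2))
    # A pod would request this network with the annotation:
    #   k8s.v1.cni.cncf.io/networks: data-traffic
```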

Figure 14: Core Cluster network organization

Dell EMC recommends the switches listed in the following table for the Core Cluster network.

| Switch function | Recommended switch |
|---|---|
| Leaf (Top of Rack) | Dell EMC PowerSwitch S5248F-ON |
| Spine | Dell EMC PowerSwitch Z9100-ON |
| iDRAC network and Out of Band (OOB) management | Dell EMC PowerSwitch S3148-ON |

Note: A typical installation uses two leaf switches per rack for high availability.

The Core Cluster network organization is shown in Figure 14.