
Software design


AI or Solution Software

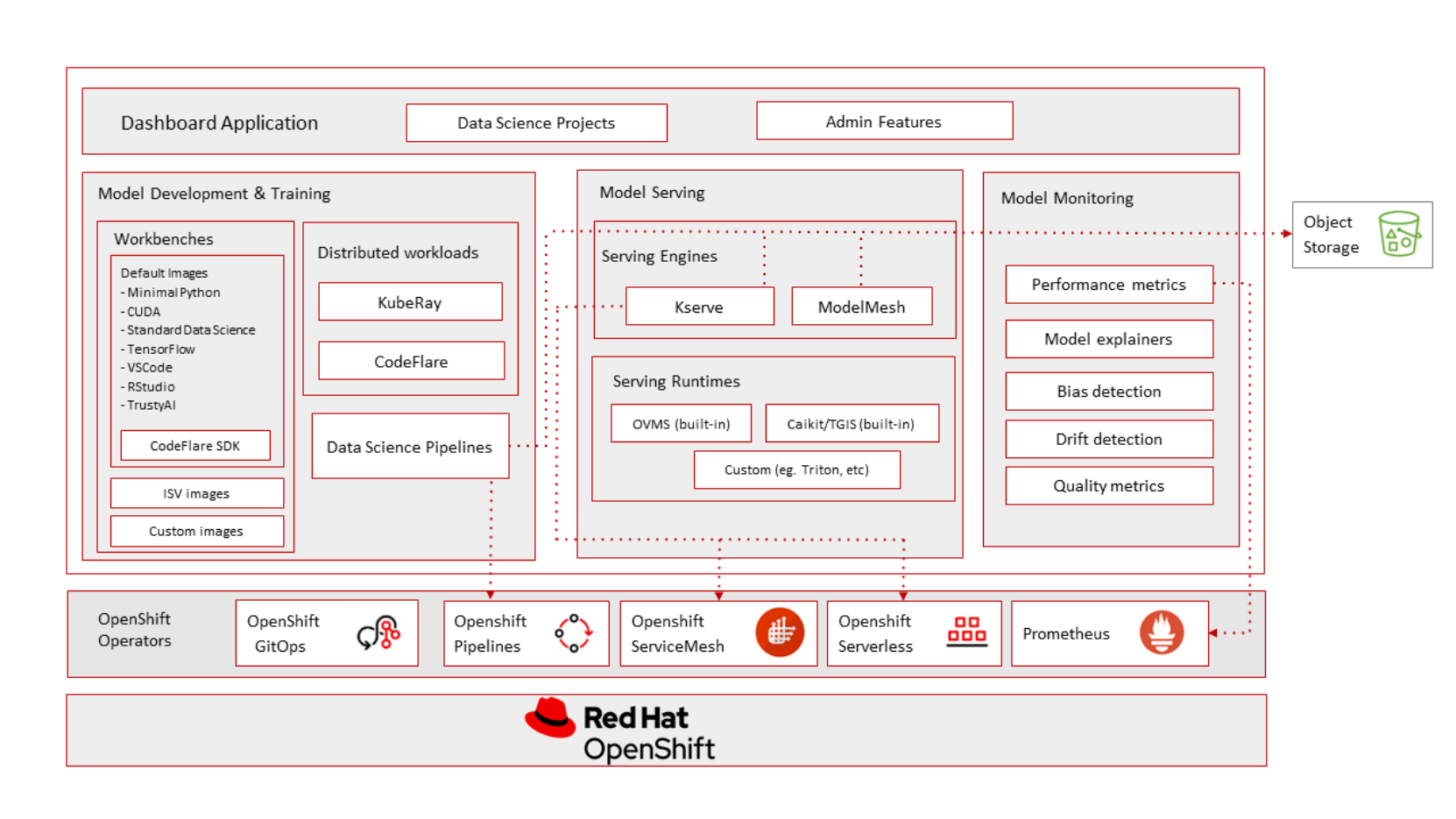

Red Hat OpenShift AI enables AI adoption with an integrated MLOps platform for building, tuning, deploying, and monitoring AI-enabled applications and models at scale.

Figure 2. Red Hat OpenShift AI Architecture

The following software component versions were used and are discussed in further detail below.

| Software component | Version or detail |
|---|---|
| Red Hat OpenShift | 4.15.5 |
| Red Hat OpenShift AI | 2.11 |
| NVIDIA GPU Operator | 23.9.2 |
| Distributed workloads components (managed by Red Hat OpenShift AI)[2] | CodeFlare, KServe, Ray, Kueue, Workbenches, Dashboard |
| Workbench notebook image | Red Hat Standard Data Science Notebook Image, version 2024.1 (Python 3.9) |
| LLM | meta-llama/CodeLlama-70b |
| Dataset | cognitivecomputations/dolphin-coder |
| vLLM | 0.5.0-post1 |
| Visual Studio Code | 1.90.0 |
| Continue plugin | 0.8.43 |

Jupyter notebooks provided by Red Hat OpenShift AI serve as a sandbox for developing and running the Python code used for model training.

From a notebook, the CodeFlare framework lets users configure workloads directly (for example, creating a Ray cluster) and simplifies task orchestration and monitoring. It also supports automated resource scaling and efficient node utilization, including for GPU resources.

The Ray cluster consists of a head pod and a scalable set of worker pods on which the distributed Python training code runs.
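When training runs across Ray worker pods, each GPU typically acts as one data-parallel rank, so the number of pods and GPUs per pod directly sets the effective batch size. The following is a minimal sketch assuming pure DeepSpeed data parallelism, where DeepSpeed requires `train_batch_size` to equal `train_micro_batch_size_per_gpu * gradient_accumulation_steps * number of GPUs`. The helper names, the worker and GPU counts, and the batch settings are hypothetical and are not the configuration used in this paper.

```python
"""Sketch: effective batch size for data-parallel training on Ray worker pods.

Assumptions (hypothetical): only worker pods hold GPUs, each GPU runs one
DeepSpeed data-parallel rank, and no model or pipeline parallelism is used.
"""


def world_size(num_workers: int, gpus_per_worker: int) -> int:
    # Every GPU across all worker pods is one data-parallel rank.
    return num_workers * gpus_per_worker


def deepspeed_batch_config(micro_batch_per_gpu: int, grad_accum_steps: int,
                           num_workers: int, gpus_per_worker: int) -> dict:
    # DeepSpeed checks that train_batch_size equals
    # micro batch per GPU * gradient accumulation steps * number of GPUs.
    ranks = world_size(num_workers, gpus_per_worker)
    return {
        "train_batch_size": micro_batch_per_gpu * grad_accum_steps * ranks,
        "train_micro_batch_size_per_gpu": micro_batch_per_gpu,
        "gradient_accumulation_steps": grad_accum_steps,
    }


if __name__ == "__main__":
    # Hypothetical layout: 2 worker pods with 4 GPUs each.
    cfg = deepspeed_batch_config(micro_batch_per_gpu=1, grad_accum_steps=8,
                                 num_workers=2, gpus_per_worker=4)
    print(cfg["train_batch_size"])  # 1 * 8 * (2 * 4) = 64
```

Scaling the Ray cluster out (more worker pods) raises the effective batch size unless the micro batch or gradient accumulation steps are lowered to compensate.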

As described in this GitHub page, the distributed fine-tuning code for the Code Llama 70B model, which uses the LoRA (Low-Rank Adaptation) method, is based on DeepSpeed, an open-source deep learning optimization library for PyTorch.
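LoRA keeps a pretrained weight matrix W (d × k) frozen and learns a low-rank update, so the adapted layer computes W x + (alpha / r) B A x, with B of shape d × r and A of shape r × k. The following is a minimal pure-Python sketch of that forward pass and of the parameter savings. It is not the DeepSpeed or PyTorch code referenced above. The function names, the 8192 × 8192 layer size, the rank r = 16, and alpha are illustrative assumptions, not the hyperparameters used in this paper.

```python
"""Minimal LoRA sketch in pure Python (no PyTorch or DeepSpeed).

Adapted layer: h = W x + (alpha / r) * B (A x), with W frozen (d x k),
B trainable (d x r), A trainable (r x k). Sizes below are hypothetical.
"""
import random

Matrix = list[list[float]]


def matvec(m: Matrix, x: list[float]) -> list[float]:
    # Multiply a matrix (stored as a list of rows) by a vector.
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: list[float],
                 alpha: float, r: int) -> list[float]:
    base = matvec(W, x)                 # frozen path: W x
    delta = matvec(B, matvec(A, x))     # low-rank path: B (A x), never forms B @ A
    scale = alpha / r
    return [b + scale * d for b, d in zip(base, delta)]


def lora_param_counts(d: int, k: int, r: int) -> tuple[int, int]:
    # Returns (frozen parameters in W, trainable parameters in A and B).
    return d * k, r * (d + k)


if __name__ == "__main__":
    d, k, r, alpha = 4, 3, 2, 4.0
    rng = random.Random(0)
    W = [[rng.gauss(0.0, 1.0) for _ in range(k)] for _ in range(d)]
    A = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(r)]
    B = [[0.0] * r for _ in range(d)]  # B starts at zero: adapter is a no-op at init
    x = [1.0, 2.0, 3.0]
    assert lora_forward(W, A, B, x, alpha, r) == matvec(W, x)

    # Hypothetical 8192 x 8192 projection adapted with rank 16.
    frozen, trainable = lora_param_counts(8192, 8192, 16)
    print(frozen, trainable, f"{trainable / frozen:.2%}")  # 67108864 262144 0.39%
```

Because only A and B receive gradients, optimizer state is needed only for the small adapter matrices, which is what makes fine-tuning a 70B-parameter model tractable on a fixed GPU budget.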

Finally, vLLM is used for fast, easy-to-use inference and serving of both the base and the fine-tuned models. For the end-user experience, Visual Studio Code serves as the IDE, and the Continue plugin connects it to the inference server to generate and explain code.
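vLLM can expose an OpenAI-compatible HTTP API, which is the kind of endpoint an editor plugin calls to request code completions. The following is a minimal standard-library sketch of such a request to the `/v1/completions` route. The base URL (vLLM's default port 8000 on localhost), the served model name, and the sampling values are assumptions, and the helper names are hypothetical; this is not the plugin's own implementation.

```python
"""Sketch of a client for a vLLM OpenAI-compatible completions endpoint.

Assumptions (hypothetical): the server listens at BASE_URL and serves the
model under MODEL. Adjust both for a real deployment.
"""
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"   # hypothetical endpoint
MODEL = "meta-llama/CodeLlama-70b"      # assumed served model name


def build_completion_request(prompt: str, max_tokens: int = 256,
                             temperature: float = 0.2) -> urllib.request.Request:
    # JSON body for POST /v1/completions in the OpenAI-compatible schema.
    body = {"model": MODEL, "prompt": prompt,
            "max_tokens": max_tokens, "temperature": temperature}
    return urllib.request.Request(
        f"{BASE_URL}/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str, timeout: float = 60.0) -> str:
    # Send the request and return the text of the first completion choice.
    with urllib.request.urlopen(build_completion_request(prompt),
                                timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["text"]
```

With a server running, `complete("# Python function that reverses a string\ndef ")` would return the model's continuation as a string. A low temperature is a common choice for code generation because it favors more deterministic completions.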