Home > Workload Solutions > High Performance Computing > White Papers > Machine Learning Using Red Hat OpenShift Container Platform > Comparison of distributed with non-distributed training

Comparison of distributed with non-distributed training

-

Performance comparison

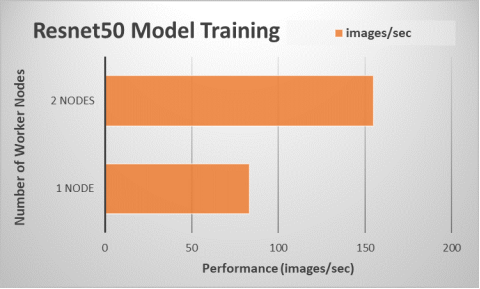

We compared the performance results from executing the TensorFlow benchmark on a single application node (non-distributed training) with execution using training on two application nodes (one PS node and two worker nodes). The following figure shows the results:

Figure 10. Performance scaling for model training using multiple server nodes

The throughput indicates that a training job using two application nodes is almost 1.9 times faster than a training job using a single application node. Using the optimized Tensorflow framework distribution from Intel and applying the appropriate parameters when launching a training job enables the ML practitioner to execute their Tensorflow jobs efficiently on Intel Xeon Scalable processors.