
Baseline configuration


ESXi system configuration

Use the following steps to configure the physical servers that host the database VMs:

- Use the BIOS settings for the PowerEdge servers described in 0.

- Create a single virtual disk in a RAID 1 configuration with two local disks (SSDs or HDDs) per server to install the bare-metal operating system (ESXi 7.3).

- Install ESXi 7.3 by using the Dell customized ISO image.

- Configure, monitor, and maintain the ESXi host, virtual networking, and the VM by using the VMware vSphere web client and VCSA, which is deployed as a VM on the management server.

- Zone two dual-port 32 Gb/s HBAs (four initiators in total) and configure these HBAs with the PowerStore front-end Fibre Channel ports for high-bandwidth, load-balanced, and highly available SAN traffic.

PowerStore storage configuration details

The PowerStore volume group feature enables the administrator to create a configuration that facilitates ease of management, efficient snapshots, and replication. This section explains the design principles used to create volumes. For the baseline storage configuration, all four virtualized databases (DB1 to DB4) used the same design. The following table shows the baseline storage configuration for DB1. The database used was named ORABP22:

Table 8: PowerStore storage volumes

| Volume Group | Volume Name | Number of LUNs | VMware Datastore | Volume size (GB) | Notes |
|---|---|---|---|---|---|
| orabp22-vm1-os | orabp22-vm1-os | 1 | orabp22-vm1-os-ds | 400 | Operating System |
| orabp22-vm1-grid | orabp22-vm1-grid-001 | 1 | orabp22-vm1-grid-001-ds | 50 | GRID |
| orabp22-vm1-grid | orabp22-vm1-grid-002 | 1 | orabp22-vm1-grid-002-ds | 50 | GRID |
| orabp22-vm1-grid | orabp22-vm1-grid-003 | 1 | orabp22-vm1-grid-003-ds | 50 | GRID |
| orabp22-vm1-db1 | orabp22-vm1-db1-data-001 | 1 | orabp22-vm1-data-001-ds | 1000 | Data files |
| orabp22-vm1-db1 | orabp22-vm1-db1-redo-001 | 1 | orabp22-vm1-redo-001-ds | 55 | Online redo logs |
| orabp22-vm1-db1 | orabp22-vm1-db1-fra-001 | 1 | orabp22-vm1-fra-001-ds | 60 | Flash Recovery Area |
| orabp22-vm1-temp | orabp22-vm1-db1-temp | 1 | orabp22-vm1-temp-ds | 500 | Temp files |

The naming convention using VMn and DBn allowed the database administrators to increment the value to create volume groups for other databases. For example, the second copy of the database had a volume group name of ORABP22_VM2_DB2. Using this naming convention allowed the administration team to repurpose copies of the database quickly. Each LUN was configured as a VMware datastore. For detailed PowerStore best practices and features, see Storage Best Practices.

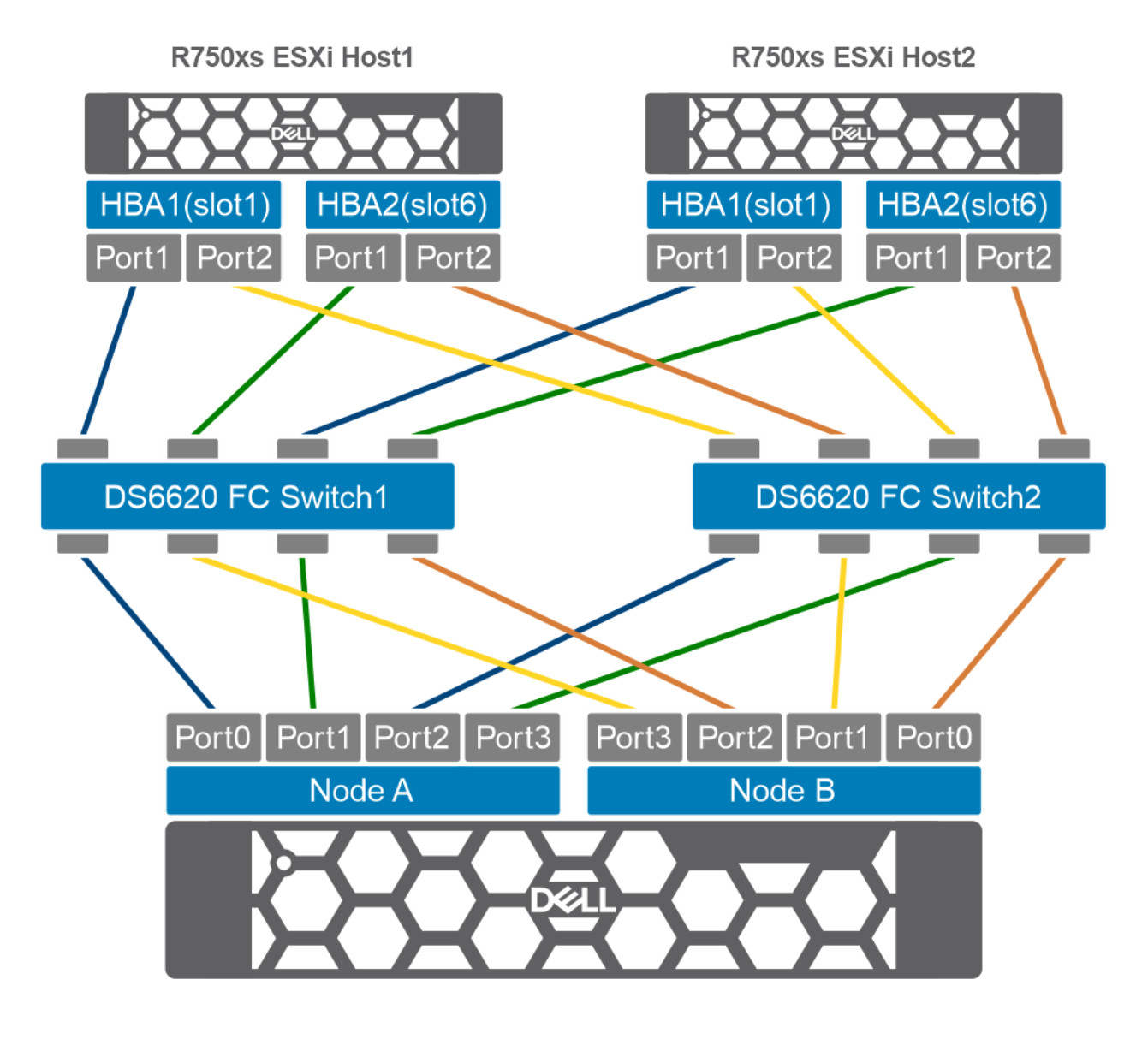
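The VMn/DBn naming convention can be sketched as a small generator. This is only an illustration, not the tooling used in the guide: the function name `volumes_for` and the `prefix` parameter are hypothetical, the lowercase hyphenated style follows Table 8 (the prose example uses uppercase with underscores), and the sketch assumes the DB number increments together with the VM number, as in the VM2/DB2 example.

```python
# Baseline layout for one database VM, transcribed from Table 8.
# Each entry: (volume group suffix, volume name suffix, size in GB, purpose).
# "{n}" marks where the DB number is substituted.
BASELINE_VOLUMES = [
    ("os", "os", 400, "Operating System"),
    ("grid", "grid-001", 50, "GRID"),
    ("grid", "grid-002", 50, "GRID"),
    ("grid", "grid-003", 50, "GRID"),
    ("db{n}", "db{n}-data-001", 1000, "Data files"),
    ("db{n}", "db{n}-redo-001", 55, "Online redo logs"),
    ("db{n}", "db{n}-fra-001", 60, "Flash Recovery Area"),
    ("temp", "db{n}-temp", 500, "Temp files"),
]


def volumes_for(n, prefix="orabp22"):
    """Return the volume layout for database copy n (hypothetical helper)."""
    rows = []
    for group, volume, size_gb, purpose in BASELINE_VOLUMES:
        rows.append({
            "volume_group": f"{prefix}-vm{n}-{group.format(n=n)}",
            "volume": f"{prefix}-vm{n}-{volume.format(n=n)}",
            "size_gb": size_gb,
            "purpose": purpose,
        })
    return rows


# Print the layout for the second copy of the database.
for row in volumes_for(2):
    print(row["volume_group"], row["volume"], row["size_gb"], row["purpose"])

# Provisioned capacity per database and for all four baseline databases.
per_db_gb = sum(row["size_gb"] for row in volumes_for(1))
print(per_db_gb, 4 * per_db_gb)  # 2165 8660
```

With the sizes in Table 8, each database consumes 2,165 GB of provisioned volume capacity, or 8,660 GB across DB1 to DB4.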

SAN FC network zoning configuration

Each ESXi host (R750xs server) has two dual-port Fibre Channel HBAs. The PowerStore 5000T under test has two Fibre Channel front-end I/O modules (one per node). Each I/O module has four 32 Gb/s ports, for a total of eight 32 Gb/s front-end ports. There are two Connectrix Fibre Channel switches, and each switch is its own fabric. For connectivity, each ESXi host was zoned to all eight front-end ports of the PowerStore across the two switches. This configuration should be the default setup because it ensures high availability against the failure of any single component in the stack. Figure 2 shows the SAN zoning configuration.

Figure 2: Baseline SAN FC connectivity and zoning
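The single-failure claim for this zoning can be checked with a short model. The sketch below rests on assumptions that the guide does not state explicitly: port 1 of each HBA connects to one fabric and port 2 to the other, and the four front-end ports on each PowerStore node are split evenly across the two fabrics. All component labels (`hba-1`, `fabric-a`, `node-a`, and so on) are hypothetical names chosen for illustration.

```python
from itertools import product

# Host initiators as (hba, port, fabric).
# Assumption: port 1 of each HBA is cabled to fabric A, port 2 to fabric B.
INITIATORS = [
    ("hba-1", 1, "fabric-a"), ("hba-1", 2, "fabric-b"),
    ("hba-6", 1, "fabric-a"), ("hba-6", 2, "fabric-b"),
]

# PowerStore front-end targets as (node, port, fabric).
# Assumption: on each node, even ports go to fabric A and odd ports to fabric B.
TARGETS = [
    (node, port, "fabric-a" if port % 2 == 0 else "fabric-b")
    for node in ("node-a", "node-b")
    for port in range(4)
]


def paths(initiators, targets):
    """An initiator reaches a target only when both sit on the same fabric."""
    return [(i, t) for i, t in product(initiators, targets) if i[2] == t[2]]


def surviving_paths(all_paths, failed):
    """Drop every path that uses the failed HBA, fabric, or storage node."""
    return [(i, t) for i, t in all_paths if failed not in (i[0], i[2], t[0])]


ALL_PATHS = paths(INITIATORS, TARGETS)
print("total paths:", len(ALL_PATHS))
print("targets reached:", len({t for _, t in ALL_PATHS}))

# Fail each component in turn and count the paths that remain.
for component in ("hba-1", "hba-6", "fabric-a", "fabric-b", "node-a", "node-b"):
    print(component, "failed ->", len(surviving_paths(ALL_PATHS, component)), "paths left")
```

Under these assumptions the host sees 16 initiator-target pairs covering all eight front-end ports, and the loss of any one HBA, fabric, or PowerStore node still leaves eight paths.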

VM network: Distributed Network switches

We configured the following two distributed network switches: ESXI-Mgmt DS, which carries ESXi server management traffic and connects vCenter to the ESXi hosts, and OraPub-vMotion, which carries the VM network for the Oracle database public network and vMotion.

Table 9 describes the details of these two distributed network switches.

Table 9: Distributed network switches

| Distributed network switches | Uplink ports | Purpose | VM port group |
|---|---|---|---|
| ESXI-Mgmt DS | 2x 1 GbE network (rNDC): vmnic0 and vmnic1 | ESXi server host management | |
| OraPub-vMotion | 2x 25 GbE physical network: vmnic5 and vmnic11 | Oracle Database public network and vMotion network | OraPub |

Table 10: VMware Network settings

| Network Settings | Value |
|---|---|
| Jumbo Frames | Disabled |
| VMXNET3 adapter | Yes |

Server physical NIC port, HBA port, and switch connectivity

Table 11 shows the physical NIC ports, their associated switches, and port groups. The last two columns, SAN FC A and SAN FC B, are the two dual-port HBAs that are connected to the Fibre Channel switches. The last row describes the switch ports that are connected to the corresponding NIC ports or HBA ports.

Table 11: Switch ports, NIC ports, and HBA ports in the Ethernet network and SAN FC network

| Server | ESXi Mgmt Network | Oracle Pub Group | vMotion Network | SAN FC A | SAN FC B |
|---|---|---|---|---|---|
| R750xs-92P-18 | vmnic0(e:1), vmnic1(e:2) | vmnic2(i:1) | vmnic4(2:1) | 1:1, 6:1 | 1:2, 6:2 |
| R750xs-92P-20 | vmnic0(e:1), vmnic1(e:2) | vmnic2(i:1) | vmnic4(2:1) | 1:1, 6:1 | 1:2, 6:2 |
| Switch Ports | L42K-92O-SW1:10 On L45K-98Q-SW01 Fex 132 (ACCESS VLAN 379) | S5224-92O-40:11 (TRUNK VLAN 382, 99) | S5224-92O-40:11 (TRUNK VLAN 382, 99) | DS6620-92J:1:8 | DS6620-92J:2:8 |

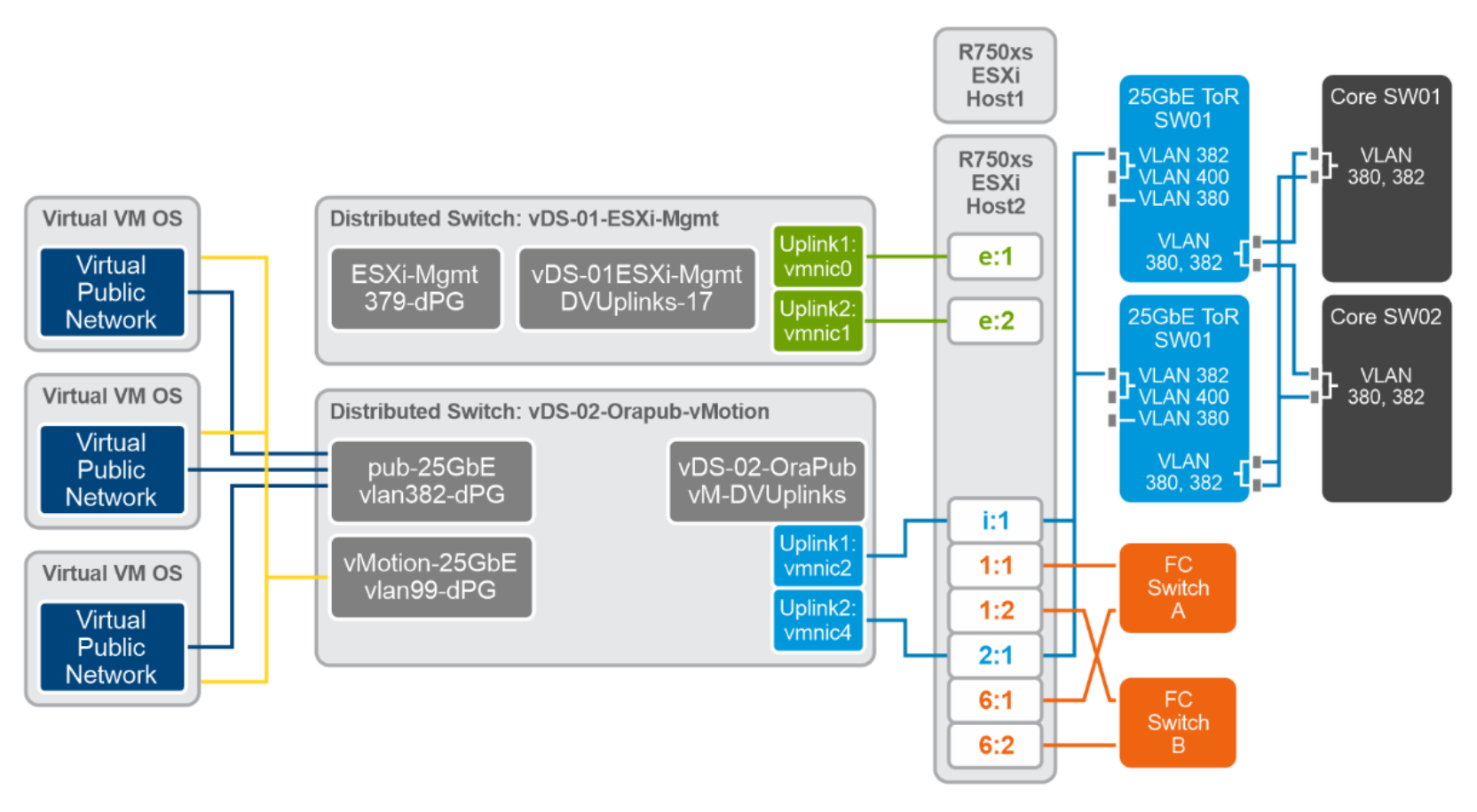

Figure 3 describes the configuration of the physical and virtual network and the SAN Fibre Channel network. In the middle of the figure, two virtual distributed switches establish the mapping between the virtual network port groups and the physical uplinks:

- Distributed switch vDS-01-ESXi-Mgmt connects the port group ESXi-Mgmt-3780dPG to the two physical uplinks vmnic0 and vmnic1.

- Distributed switch vDS-02-Orapub-Vmotion connects two port groups, pub-25GbE-vlan382-dPG (for the VM public network) and vMotion-25GBE-vlan99-dPG (for vMotion), to the two physical uplinks vmnic2 and vmnic4.

The right side of the figure shows how these physical links are mapped to the physical NIC ports of each server and how these NIC ports are connected to the 25 GbE ToR physical switches that connect to the outside network.

The lower right side of the figure shows how the two dual-port HBAs are connected to the ports of the two FC switches.

Figure 3: Physical and virtual network design
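The switch-to-uplink mapping described for Figure 3 can also be written down as data and sanity-checked. This is a minimal sketch, not a VMware API call: the dictionary layout and the `check_layout` helper are hypothetical, and the switch, port group, and vmnic names are copied from the description of the figure.

```python
# Distributed switch layout as described for Figure 3.
VDS_LAYOUT = {
    "vDS-01-ESXi-Mgmt": {
        "port_groups": ["ESXi-Mgmt-3780dPG"],
        "uplinks": ["vmnic0", "vmnic1"],
    },
    "vDS-02-Orapub-Vmotion": {
        "port_groups": ["pub-25GbE-vlan382-dPG", "vMotion-25GBE-vlan99-dPG"],
        "uplinks": ["vmnic2", "vmnic4"],
    },
}


def check_layout(layout, min_uplinks=2):
    """Return a list of problems: too few uplinks, or an uplink used twice."""
    problems = []
    owner = {}  # uplink name -> switch that already claims it
    for switch, cfg in layout.items():
        if len(cfg["uplinks"]) < min_uplinks:
            problems.append(f"{switch}: fewer than {min_uplinks} uplinks")
        for nic in cfg["uplinks"]:
            if nic in owner:
                problems.append(f"{nic}: used by both {owner[nic]} and {switch}")
            owner[nic] = switch
    return problems


print(check_layout(VDS_LAYOUT) or "layout OK")
```

The check encodes two properties of the described design: every distributed switch has two physical uplinks for redundancy, and no physical uplink is shared between the management switch and the public/vMotion switch.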