
System Design


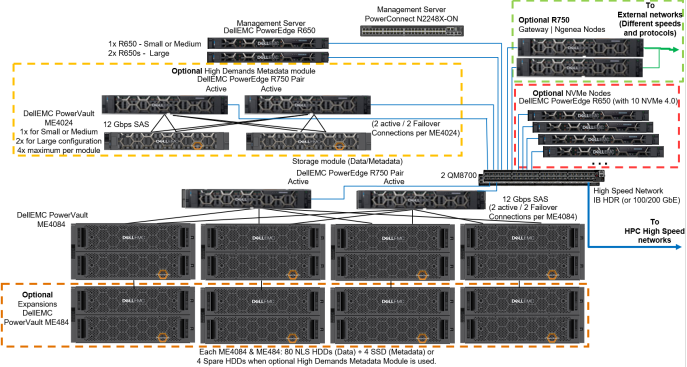

Figure 2 presents the design for the new generation of the Validated Design for HPC PixStor Storage, which uses Dell EMC PowerEdge R650, R750, and R7525 servers and PowerVault ME4084 and ME4024 storage arrays, with PixStor 6.0 software from ArcaStream. Optional PowerVault ME484 EBOD arrays can be added to increase the capacity of the solution. Figure 2 depicts this capacity expansion as SAS additions to the existing PowerVault ME4084 storage arrays.

PixStor software includes the widely used General Parallel File System (GPFS), also known as Spectrum Scale, as the parallel file system (PFS) component. This component is considered software-defined storage because of its flexibility and scalability. PixStor software also includes many other ArcaStream software components, such as advanced analytics, simplified administration and monitoring, efficient file search, and advanced gateway capabilities.

Figure 2. Solution design

The main components of the PixStor storage solution are:

Management servers

Dell EMC PowerEdge R650 servers provide graphical user interface (GUI) and command line interface (CLI) access for management and monitoring of the PixStor storage solution. They also provide the advanced search capabilities, compiling some metadata information in a database to speed up searches and avoid loading the metadata network-shared disks (NSDs).

Storage module

Currently the main building block for the PixStor storage solution. Each module includes one pair of storage servers; one, two, or four backend storage arrays (ME4084) with optional capacity expansions (ME484); and the network-shared disks contained in those arrays.

Storage server (SS)

An essential part of storage modules. HA pairs of Dell EMC PowerEdge R750 servers (failover domains) are connected to ME4084 arrays via 12 Gbps SAS cables, manage data NSDs, and provide access to the NSDs via redundant high-speed network interfaces. In the standard PixStor configuration, these servers also act as metadata servers and manage metadata NSDs (using SSDs that replace all spare HDDs).

Backend storage

Stores the file system data (ME4084) or metadata (ME4024). PowerVault ME4084s are part of the storage module, and PowerVault ME4024s are part of the optional high-demand metadata module in Figure 2.

Capacity expansion storage

Optional capacity expansions are PowerVault ME484 arrays (inside the dotted orange square in Figure 2) connected behind ME4084s via 12 Gbps SAS cables to expand the capacity of a storage module. For PixStor storage configurations, each ME4084 is limited to one ME484 expansion for performance and reliability reasons, even though the ME4084 supports up to three ME484s.
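The sizing rules for a storage module (one, two, or four ME4084 arrays, each with at most one ME484 expansion) can be captured in a small check. The following Python sketch is illustrative only: the `StorageModule` class and its field names are hypothetical and are not part of PixStor or of any Dell EMC tool.

```python
from __future__ import annotations

from dataclasses import dataclass

# Rules taken from the component descriptions in this section.
ALLOWED_ARRAY_COUNTS = (1, 2, 4)   # ME4084 arrays per storage module
MAX_EXPANSIONS_PER_ARRAY = 1       # PixStor limit; the ME4084 itself supports up to three


@dataclass(frozen=True)
class StorageModule:
    """Hypothetical description of one PixStor storage module."""

    me4084_arrays: int
    me484_per_array: int = 0

    def validate(self) -> list[str]:
        """Return a list of rule violations (empty when the layout is valid)."""
        problems = []
        if self.me4084_arrays not in ALLOWED_ARRAY_COUNTS:
            problems.append(
                f"{self.me4084_arrays} ME4084 arrays; expected one of {ALLOWED_ARRAY_COUNTS}"
            )
        if not 0 <= self.me484_per_array <= MAX_EXPANSIONS_PER_ARRAY:
            problems.append(
                f"{self.me484_per_array} ME484 per ME4084; "
                f"expected 0 to {MAX_EXPANSIONS_PER_ARRAY}"
            )
        return problems

    def enclosure_count(self) -> int:
        """Total enclosures: each ME4084 plus its ME484 expansions."""
        return self.me4084_arrays * (1 + self.me484_per_array)


if __name__ == "__main__":
    module = StorageModule(me4084_arrays=4, me484_per_array=1)
    print(module.validate())         # []
    print(module.enclosure_count())  # 8
```

A layout such as three ME4084 arrays with two expansions each would return two violations, one per rule.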

Network-shared disks (NSDs)

NSDs are backend block devices (for example, RAID LUNs from ME4 arrays or RAID 10 NVMeoF devices) that store information, both data and metadata. In the PixStor storage solution, file system data and metadata are stored in different NSDs. Data NSDs normally use spinning media (NLS SAS3 HDDs), while metadata NSDs use SAS3 SSDs. Metadata includes directories, filenames, permissions, time stamps, and the location of data in other NSDs.

NVMeoF-based NSDs are currently used only for data. However, we are testing them for metadata, and we plan to test them for data + metadata.

High-demand metadata server (HDMDS)

Part of the optional high-demand metadata module (inside the dotted yellow square in Figure 2). Pairs of Dell EMC PowerEdge R750 servers in HA (failover domains) are connected to PowerVault ME4024 arrays via SAS cables, manage the metadata NSDs, and provide access to the metadata backend via redundant high-speed network interfaces.

NVMe nodes

Main part of the optional NVMe tier modules (inside the dotted green square in Figure 2). Pairs of PowerEdge R650 servers in HA (failover domains) provide a high-performance flash-based tier for the PixStor storage solution. Performance and capacity for this NVMe tier can be scaled out by adding pairs of NVMe nodes. Capacity can also be increased by selecting larger NVMe devices from those supported in the PowerEdge R650.

Each PowerEdge R650 has ten NVMe devices, each split into slices (partitions). Slices from all drives in both servers are then combined into RAID 10 devices for high throughput. These NVMe nodes use NVMesh as the NVMe over Fabric (NVMeoF) component, which places each mirror copy of a RAID 10 device on a different server (for HA purposes) and provides block devices that the file system uses as NSDs.
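To illustrate the mirroring constraint, the sketch below builds mirror pairs in which the two copies always sit on different servers. It is a simplified model, not the NVMesh layout algorithm: the server names, the number of slices per drive, and the choice to pair drive i on one server with drive i on the other are all assumptions made for illustration.

```python
from itertools import product


def mirror_pairs(servers=("nvme-a", "nvme-b"), drives_per_server=10, slices_per_drive=2):
    """Build RAID 10 mirror pairs whose two copies live on different servers.

    Each slice is identified as (server, drive_index, slice_index).
    The server names and slices_per_drive are hypothetical values.
    """
    if len(servers) != 2 or servers[0] == servers[1]:
        raise ValueError("an HA pair needs exactly two distinct servers")
    first, second = servers
    pairs = []
    for drive, part in product(range(drives_per_server), range(slices_per_drive)):
        # One copy on each server, so losing a server leaves every mirror readable.
        pairs.append(((first, drive, part), (second, drive, part)))
    return pairs


if __name__ == "__main__":
    pairs = mirror_pairs()
    print(len(pairs))  # 20 mirror pairs for 10 drives x 2 slices
    print(pairs[0])    # (('nvme-a', 0, 0), ('nvme-b', 0, 0))
```

Striping across these mirror pairs is what would give the RAID 10 devices their throughput; how pairs are grouped into stripes is left out here because the source does not describe it.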

Native client software

Software installed on the clients that allows access to the file system. The file system must be mounted for access and appears as a single namespace.

Gateway nodes

The optional gateway nodes (inside the dotted red square in Figure 2) are PowerEdge R750 servers (same hardware as Ngenea nodes but different software) in a Samba Clustered Trivial Data Base (CTDB) cluster. They provide NFS or SMB access to clients that do not have, or cannot have, the native client software installed and instead use the NFS or SMB protocols to access information.

Ngenea nodes

The optional Ngenea nodes (inside the dotted red square in Figure 2) are PowerEdge R750 servers (same hardware as gateway nodes but different software) that use PixStor software to access external storage devices that can be used as another tier in the same single namespace (for example, object storage, cloud storage, or tape libraries) using enterprise protocols, including cloud protocols.

Management switch

The Dell EMC PowerSwitch N2248X-ON Gigabit Ethernet switch connects the different servers and storage arrays. It is used for administration of the solution, interconnecting all the components.

High-performance switch

NVIDIA® QM8700 switches provide high-speed access via InfiniBand (IB) HDR and HDR100. For Ethernet solutions, the NVIDIA Mellanox SN3700 can be used.