
Storage Configuration on ME4 Arrays


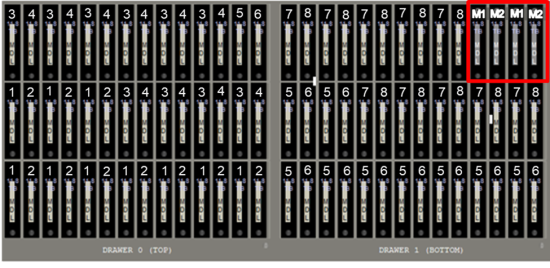

The Validated Design for HPC PixStor Storage has two variants: the standard configuration and the one that includes the high-demand metadata module. In the standard configuration, the same pair of PowerEdge R750 servers uses its PowerVault ME4084 arrays to store data on NLS SAS3 HDDs and metadata on SAS3 SSDs. Figure 12 shows this PowerVault ME4084 configuration and how the drives are assigned to the different LUNs. Each PowerVault ME4084 has eight linear RAID 6 (8 data disks + 2 parity disks) virtual disks used for data only. The HDDs for these virtual disks are selected by alternating even-numbered disk slots for one LUN and odd-numbered disk slots for the next LUN, and that pattern repeats until all 80 NLS disks are used. The last four disk slots hold SAS3 SSDs, which are configured as two linear RAID 1 pairs and hold only metadata. Linear RAIDs were chosen over virtual RAIDs to provide the maximum possible performance for each virtual disk. Similarly, RAID 6 was chosen over ADAPT despite the speed advantage of the latter when rebuilding after failures.
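The slot assignment described above can be sketched in a few lines of Python. This is only an illustration of the even/odd interleaving, not the tool used to configure the arrays. It assumes slots are numbered 0 to 83, that each pair of interleaved LUNs shares a block of 20 consecutive slots, and that each RAID 1 pair uses two adjacent SSD slots. The LUN names are hypothetical.

```python
# Illustrative sketch only. Assumptions (not stated in the text above):
# slots are numbered 0-83, two interleaved LUNs share each block of 20
# consecutive slots, and each RAID 1 pair uses two adjacent SSD slots.
HDD_SLOTS = 80          # NLS SAS3 HDDs used for data
SSD_SLOTS = 4           # last four slots hold SAS3 SSDs for metadata
DISKS_PER_RAID6 = 10    # 8 data disks + 2 parity disks


def me4084_standard_layout():
    """Return {lun_name: [slot, ...]} for the standard configuration."""
    layout = {}
    block = 2 * DISKS_PER_RAID6  # slots shared by one even/odd LUN pair
    for lun in range(HDD_SLOTS // DISKS_PER_RAID6):
        block_start = (lun // 2) * block
        first = block_start + (lun % 2)  # even LUN: even slots, odd LUN: odd slots
        layout[f"data_raid6_{lun}"] = list(range(first, block_start + block, 2))
    for pair in range(SSD_SLOTS // 2):
        first = HDD_SLOTS + 2 * pair
        layout[f"meta_raid1_{pair}"] = [first, first + 1]
    return layout


layout = me4084_standard_layout()
data_disks = 8 * sum(1 for name in layout if name.startswith("data_"))
print(layout["data_raid6_0"])   # even slots of the first 20-slot block
print(layout["data_raid6_1"])   # odd slots of the first 20-slot block
print(data_disks, "of", HDD_SLOTS, "HDDs hold data; the rest hold parity")
```

Under these assumptions, 64 of the 80 HDDs in each array contribute usable capacity and the remaining 16 hold RAID 6 parity.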

When the storage module has a single PowerVault ME4084, GPFS is instructed to replicate the first RAID 1 on the second RAID 1 as part of a failure group. However, when the storage module has two or four PowerVault ME4084s, those arrays are divided into pairs and GPFS is instructed to replicate each RAID 1 on one array to the other array of the pair, using different failure groups. Therefore, each RAID 1 always has a replica managed by a GPFS failure group.
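The replication rule above can be expressed as a small pairing function. The sketch below is a model of the rule only and makes no GPFS calls. The function name, the zero-based array and LUN indices, and the choice to pair LUNs with the same index across two arrays are all assumptions made for illustration.

```python
# Illustrative model of the replica pairing rule. No GPFS commands are
# issued. Indices and the same-index pairing across arrays are assumptions.
def metadata_replica_pairs(num_arrays, raid1_per_array=2):
    """Return [((array, lun), (array, lun)), ...] replica pairs.

    Each side of a pair would be placed in a different failure group.
    """
    if num_arrays == 1:
        # Single array: the first RAID 1 is replicated on the second RAID 1.
        return [((0, 0), (0, 1))]
    if num_arrays % 2:
        raise ValueError("arrays are expected in pairs (2 or 4)")
    pairs = []
    for first_array in range(0, num_arrays, 2):
        for lun in range(raid1_per_array):
            # Replica lives on the partner array of the pair.
            pairs.append(((first_array, lun), (first_array + 1, lun)))
    return pairs


for n in (1, 2, 4):
    print(n, metadata_replica_pairs(n))
```

With two or four arrays, every pair returned by this sketch spans two different arrays, so losing one array leaves one copy of each metadata LUN available.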

All the virtual disks have associated volumes that span their whole size and are mapped to all ports, so that they are all accessible to any HBA port from the two PowerEdge R750s connected to them. Also, each PowerEdge R750 has one HBA port connected to each controller of each PowerVault ME4084 in its storage arrays. As a result, even if only one server is operational and only a single SAS cable remains connected to each PowerVault ME4084, the solution can still provide access to all data stored in the ME4 arrays. Optional PowerVault ME484 capacity expansion arrays use exactly the same configuration as the PowerVault ME4084 they are connected to, including the four SSDs for metadata.
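The redundancy argument above can be checked with a toy connectivity model. The sketch assumes, as the text implies by mapping every volume to all ports, that any surviving cable to either controller of an array is enough to reach all of that array's volumes. The server, array, and controller names are hypothetical.

```python
# Toy connectivity model. All names are hypothetical, and the model assumes
# any live SAS path to either controller reaches every volume on that array.
from itertools import product

SERVERS = ("server_a", "server_b")
CONTROLLERS = ("ctrl_a", "ctrl_b")


def all_cables(arrays):
    """One SAS cable from each server to each controller of each array."""
    return set(product(SERVERS, arrays, CONTROLLERS))


def data_accessible(arrays, live_servers, live_cables):
    """True if every array is reachable from a live server over a live cable."""
    return all(
        any(
            server in live_servers and (server, array, ctrl) in live_cables
            for server in SERVERS
            for ctrl in CONTROLLERS
        )
        for array in arrays
    )


arrays = ("me4084_1", "me4084_2")
# Worst case from the text: one server up, one cable left per array.
survivors = {("server_a", "me4084_1", "ctrl_b"), ("server_a", "me4084_2", "ctrl_a")}
print(len(all_cables(arrays)), "cables in the healthy system")
print(data_accessible(arrays, {"server_a"}, survivors))
```

In this model the healthy system has eight SAS paths for two arrays, and access is lost only when an array has no surviving cable to a live server.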

Figure 12. PowerVault ME4084 (or ME484) drives assigned to LUN for Standard configuration

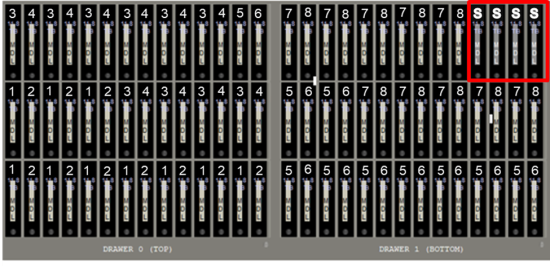

When the optional high-demand metadata module is used, the eight RAID 6 virtual disks are assigned just as in the standard configuration and are also used only to store data. However, instead of SSDs, the last four disk slots hold NLS SAS3 HDDs that serve as hot spares for any failed disk within the array, as shown in Figure 13.

Figure 13. PowerVault ME4084 (or ME484) drives assigned to LUN for configuration with high-demand metadata

With the high-demand metadata module, optional PowerVault ME484 capacity expansion arrays also use exactly the same configuration as the PowerVault ME4084 they are connected to, including the four spare HDDs.

An additional pair of PowerEdge R750 servers is connected to one or more PowerVault ME4024 arrays, which are used to store metadata. Those arrays are populated with SAS3 SSDs that are configured as twelve RAID 1 virtual disks (VDs). The disks for each VD are selected starting at disk slot 1, with the mirror located twelve slots to the right (slot N and slot N+12), as can be seen in Figure 14. As in the standard configuration, when a single PowerVault ME4024 array is used, GPFS is instructed to replicate metadata using pairs of RAID 1 LUNs as part of a failure group. However, if more than one PowerVault ME4024 is used, the LUNs that replicate each other are kept in separate arrays.
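The slot N and slot N+12 rule can be written out as a short sketch. It follows the one-based slot numbering used in the text above; the function name and its parameters are hypothetical.

```python
# Illustrative sketch of the "slot N and slot N+12" mirroring rule, using the
# one-based slot numbering from the text. Function name is hypothetical.
def me4024_raid1_pairs(slots=24, first_slot=1):
    """Return the (disk, mirror) slot pair for each RAID 1 virtual disk."""
    half = slots // 2
    return [(n, n + half) for n in range(first_slot, first_slot + half)]


pairs = me4024_raid1_pairs()
print(len(pairs), "RAID 1 virtual disks")
print(pairs[0], pairs[-1])
```

Each of the 24 slots appears in exactly one pair, which gives the twelve RAID 1 virtual disks mentioned above.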

All the virtual disks on the storage module and the HDMD module are exported as volumes that are accessible to any HBA port from the two PowerEdge R750s connected to the corresponding PowerVault ME4 arrays, and each PowerEdge R750 has one HBA port connected to each controller of each PowerVault ME4 in its storage arrays. As a result, even if only one server is operational and only a single SAS cable remains connected to each ME4, the solution can still provide access to all data (or metadata) stored in those arrays.

Figure 14. PowerVault ME4024 drives assigned to LUN for configuration with high-demand metadata

Finally, high-speed networks are connected via CX6 adapters to handle information exchange with clients, and also to evaluate whether a node that is part of a module is operational.