Metadata Performance with MDtest Using Empty Files

Metadata performance was measured with MDtest version 3.3.0, using OpenMPI v4.1.2a1 to run the benchmark across the 16 compute nodes. Tests ranged from a single thread up to 512 threads. The benchmark was run on files only (no directory metadata), measuring the number of create, stat, read, and remove operations the solution can handle.

To properly evaluate the solution in comparison to other Dell Technologies HPC storage solutions, the optional high-demand metadata module was used, with a single PowerVault ME4024 array.

This high-demand metadata module supports up to four PowerVault ME4024 arrays, and it is suggested to grow to four arrays before adding another metadata module. Each additional PowerVault ME4024 array is expected to increase metadata performance linearly, with the possible exception of Stat operations (and Reads for empty files): because their rates are very high, the CPUs will at some point become a bottleneck and performance will stop scaling linearly.

The following command was used to execute the benchmark, where Threads was the variable with the number of threads used (1 to 512 incremented in powers of two), and my_hosts.$Threads is the corresponding file that allocated each thread on a different node, using round robin to spread them homogeneously across the 16 compute nodes. Similar to the random I/O benchmark, the maximum number of threads was limited to 512, since there are not enough cores for 1024 threads and context switching could affect the results, reporting a number lower than the real performance of the solution.

mpirun --allow-run-as-root -np $Threads --hostfile my_hosts.$Threads --map-by node --mca btl_openib_allow_ib 1 --oversubscribe --prefix /usr/mpi/gcc/openmpi-4.1.2a1 /usr/local/bin/mdtest -v -d /mmfs1/perf/mdtest -P -i 1 -b $Directories -z 1 -L -I 1024 -u -t -F
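The round-robin placement described above can be illustrated with a minimal sketch that generates hostfile contents for a given thread count. This is not the script used for the published runs: the node names (node01 to node16) and the helper name make_hostfile are hypothetical, and the "host slots=N" line format is the standard Open MPI hostfile syntax. It assumes that, together with --map-by node, giving each node an equal share of slots yields the homogeneous spread described.

```shell
#!/bin/sh
# Sketch only: node names node01..node16 are hypothetical placeholders.
NODES=16

# Print Open MPI hostfile lines ("<host> slots=<n>") that spread the
# requested number of threads round robin over the compute nodes.
# The body runs in a subshell so its variables stay local.
make_hostfile() (
    threads=$1
    base=$(( threads / NODES ))    # threads that every node receives
    extra=$(( threads % NODES ))   # the first 'extra' nodes get one more
    i=0
    while [ "$i" -lt "$NODES" ]; do
        slots=$base
        if [ "$i" -lt "$extra" ]; then
            slots=$(( slots + 1 ))
        fi
        # Omit nodes that receive no threads (fewer threads than nodes).
        if [ "$slots" -gt 0 ]; then
            printf 'node%02d slots=%d\n' "$(( i + 1 ))" "$slots"
        fi
        i=$(( i + 1 ))
    done
)

# Preview the layout for 4 threads (one per node on the first four nodes).
make_hostfile 4
```

Redirecting the output, for example make_hostfile "$Threads" > "my_hosts.$Threads", would produce one file per thread count in the naming scheme used by the command above.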

Since performance results can be affected by the total number of IOPS, the number of files per directory, and the number of threads, the total number of files was fixed at 2 Mi files (2^21 = 2,097,152) and the number of files per directory at 1024, while the number of directories per thread varied with the number of threads, as shown in Table 3.

Table 3. MDtest distribution of files on directories

| Number of Threads | Number of Directories per Thread | Total Number of Files |
|---|---|---|
| 1 | 2048 | 2,097,152 |
| 2 | 1024 | 2,097,152 |
| 4 | 512 | 2,097,152 |
| 8 | 256 | 2,097,152 |
| 16 | 128 | 2,097,152 |
| 32 | 64 | 2,097,152 |
| 64 | 32 | 2,097,152 |
| 128 | 16 | 2,097,152 |
| 256 | 8 | 2,097,152 |
| 512 | 4 | 2,097,152 |
| 1024 | 2 | 2,097,152 |
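The values in Table 3 follow from dividing the fixed total of 2^21 files by the product of 1024 files per directory and the thread count. The sketch below reproduces that arithmetic; the helper name dirs_per_thread is hypothetical. The variable names Threads and Directories mirror those in the mpirun command, on the assumption that Directories there is the per-thread directory count.

```shell
#!/bin/sh
# Sketch only: reproduces the arithmetic behind Table 3.
TOTAL_FILES=2097152    # 2^21 files in total, fixed for every run
FILES_PER_DIR=1024     # matches the -I 1024 option of mdtest

# Directories each thread must create so the total stays constant.
dirs_per_thread() {
    echo $(( TOTAL_FILES / (FILES_PER_DIR * $1) ))
}

# Walk the thread counts listed in Table 3 (powers of two, 1 to 1024).
Threads=1
while [ "$Threads" -le 1024 ]; do
    Directories=$(dirs_per_thread "$Threads")
    printf '%4d threads -> %4d directories per thread, %d files\n' \
        "$Threads" "$Directories" \
        "$(( Threads * Directories * FILES_PER_DIR ))"
    Threads=$(( Threads * 2 ))
done
```

Every row prints the same total of 2,097,152 files, which is the invariant the test design relies on.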

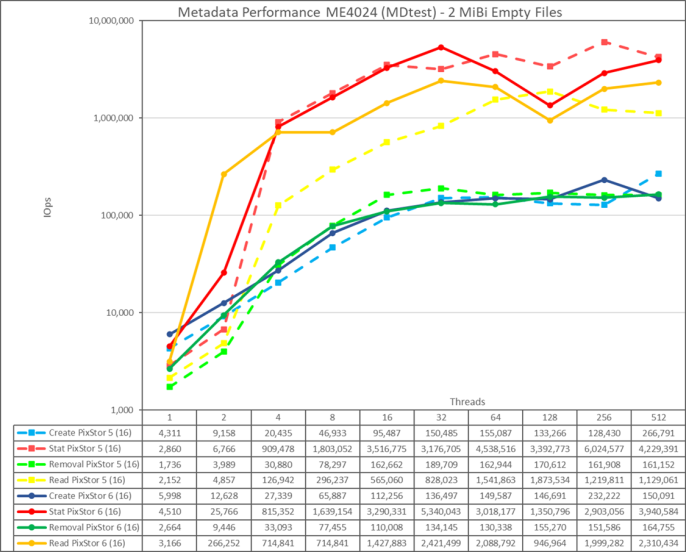

Figure 18. Metadata performance - empty files

First, note that the scale chosen is logarithmic with base 10, to allow comparing operations that differ by several orders of magnitude; otherwise, some of the operations would look like a flat line close to 0 on a linear graph. A log graph with base 2 could be more appropriate, since the number of threads is increased in powers of 2, but such a graph would look very similar, and people tend to handle and remember numbers based on powers of 10 better.

The storage system achieves very good results for Stat and Read operations, which reach their peak values at 32 threads with 5.34M op/s and 2.42M op/s respectively. Removal operations attain their maximum of 164.76K op/s at 512 threads, and Create operations peak at 256 threads with 232.22K op/s. Stat and Read operations show more variability, but once they reach their peak value, performance does not drop below 2.9M op/s for Stats and 2M op/s for Reads. Create and Removal are more stable once they reach a plateau, remaining above 130K op/s for Removal and 146K op/s for Create.