
High-Speed, Management and SAS Connections


Since the Validated Design for HPC PixStor Storage is sold with deployment services included, a detailed depiction of the cabling for SAS or high-speed network is out of scope for this document. Similarly, the cabling of the different components to the management switch is also out of scope for this document.

Management servers are PowerEdge R650s with riser configuration 0, which provides three x16 slots; Figure 3 shows the slot allocation for the server. LAN on motherboard port 1 (LOM 1) is named man0 and is connected to the 1 GbE management network. LOM port 2 is named man2 and, along with the iDRAC dedicated port, is connected to an external management network that provides access to the PixStor storage solution. Slots 1 and 2 are used for ConnectX-6 adapters connected to the high-speed network switches, and slot 3 holds an optional serial port card.

Figure 3. PowerEdge R650 management server - slot allocation

Storage nodes (SN) and high-demand metadata (HDMD) servers are PowerEdge R750 servers with riser configuration 1, which provides eight slots (two x16 and six x8); Figure 4 shows the slot allocation for the server. LOM port 1 and the iDRAC dedicated port are connected to the 1 GbE management network. All PowerEdge R750 servers use slots 3 and 6 (x16) for ConnectX-6 (CX6) adapters connected to the high-speed network switches. Storage or HDMD servers connected to PowerVault ME4 arrays have two SAS HBA355e cards in slots 2 and 7 (x8). Slot 8 is used for an optional serial port card.

Figure 4. PowerEdge R750 storage and metadata nodes - slot allocation
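The slot allocation described above can be written down as data and checked mechanically. The following is a minimal sketch, not part of the validated design: the slot widths come from the text (slots 3 and 6 are x16, the other six are x8), the HBA slots follow the SAS cabling description (slots 2 and 7), and the per-card lane counts and the name `check_placement` are illustrative assumptions.

```python
# Slot widths for the R750 with riser configuration 1, as described in the
# text: slots 3 and 6 are x16, the remaining six slots are x8.
R750_RISER1_LANES = {1: 8, 2: 8, 3: 16, 4: 8, 5: 8, 6: 16, 7: 8, 8: 8}

# Lanes each card type needs. Assumed values for illustration only;
# the serial port card entry in particular is a placeholder.
CARD_LANES = {"CX6": 16, "HBA355e": 8, "serial": 1}

# Card placement for a storage or HDMD node attached to ME4 arrays.
STORAGE_NODE_CARDS = {
    2: "HBA355e",
    3: "CX6",
    6: "CX6",
    7: "HBA355e",
    8: "serial",
}


def check_placement(cards, slot_lanes=R750_RISER1_LANES):
    """Return a list of problems found in a slot -> card mapping."""
    problems = []
    for slot, card in sorted(cards.items()):
        if slot not in slot_lanes:
            problems.append(f"slot {slot} does not exist")
        elif CARD_LANES[card] > slot_lanes[slot]:
            problems.append(
                f"{card} needs x{CARD_LANES[card]} "
                f"but slot {slot} is x{slot_lanes[slot]}"
            )
    return problems
```

Calling `check_placement(STORAGE_NODE_CARDS)` returns an empty list, while a mapping that puts a CX6 adapter in an x8 slot is reported as a problem.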

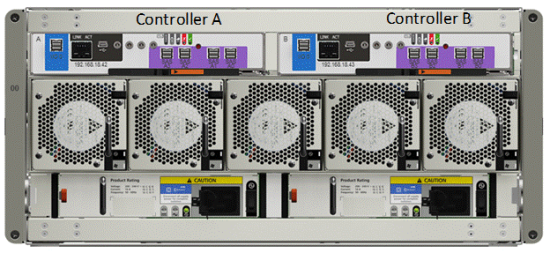

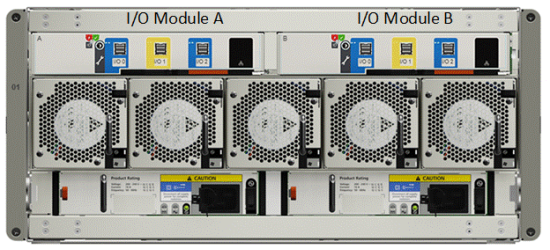

Figure 5 shows the controllers of the PowerVault ME4084. One SAS port (A0-A3 or B0-B3) from each controller (A and B) is connected to a different HBA (slots 2 and 7) on each storage node. SAS connector I/O 0 (blue section) of each controller connects to an expansion PowerVault ME484 (Figure 6) through port I/O 0 of the corresponding I/O module (controller A to I/O module A, controller B to I/O module B).

Figure 5. PowerVault ME4084 - controllers and SAS ports

Figure 6. PowerVault ME484 - I/O module and SAS ports
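The cabling rule above (each node reaches controller A and controller B of an array through different HBAs) lends itself to a simple consistency check. This is a rough sketch under stated assumptions: the tuple layout, the function name `check_sas_cabling`, and the node and array names in the usage note are hypothetical, and which controller lands on which slot is not specified by this document.

```python
from collections import defaultdict


def check_sas_cabling(links):
    """Check a list of SAS links against the redundancy rule.

    links: iterable of (node, hba_slot, array, controller) tuples,
    where controller is "A" or "B".
    Returns a list of human-readable problems (empty if none found).
    """
    # Group links by (node, array) and record which HBA slot
    # each controller is cabled to.
    by_pair = defaultdict(dict)
    for node, hba_slot, array, controller in links:
        by_pair[(node, array)][controller] = hba_slot

    problems = []
    for (node, array), ctrl_to_slot in sorted(by_pair.items()):
        if set(ctrl_to_slot) != {"A", "B"}:
            problems.append(f"{node} -> {array}: missing a controller link")
        elif ctrl_to_slot["A"] == ctrl_to_slot["B"]:
            problems.append(
                f"{node} -> {array}: both controllers on HBA slot "
                f"{ctrl_to_slot['A']}"
            )
    return problems
```

For example, `check_sas_cabling([("sn1", 2, "me4084-1", "A"), ("sn1", 7, "me4084-1", "B")])` returns an empty list, whereas cabling both controllers to the HBA in slot 2 is flagged.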

Figure 7 shows the controllers of the PowerVault ME4024. One SAS port (A0-A3 or B0-B3) from each controller (A and B) is connected to a different HBA (slots 2 and 7) on each high-demand metadata node.

Figure 7. PowerVault ME4024 - controllers and SAS ports

Gateway/Ngenea nodes are PowerEdge R750 servers with riser configuration 2, which provides six slots (two x8 and four x16); Figure 8 shows the slot allocation for the server. LOM port 1 and the iDRAC dedicated port are connected to the 1 GbE management network. All four x16 slots can be used for CX6 adapters or other high-speed network interface cards (NICs) supported by the PowerEdge R750: two full-height (FH) slots, 2 and 7, and two low-profile (LP) slots, 3 and 6. Slots 4 and 5 (x8) can be used for any supported NIC. Slots 1 and 8 are not available in this configuration.

Figure 8. PowerEdge R750 gateway or Ngenea nodes - slot allocation
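When planning which NICs go into a gateway node, the riser configuration 2 layout described above can be queried as a small lookup table. The sketch below is illustrative only: the dictionary layout and the `candidate_slots` helper are assumptions, and the bracket profile of slots 4 and 5 is left as `None` because this document does not state it.

```python
# Riser configuration 2 as described in the text. Slots 1 and 8 are not
# available, so they are omitted. The profile of slots 4 and 5 is not
# stated in the text and is recorded as None (unknown).
GATEWAY_SLOTS = {
    2: {"lanes": 16, "profile": "FH"},
    3: {"lanes": 16, "profile": "LP"},
    4: {"lanes": 8, "profile": None},
    5: {"lanes": 8, "profile": None},
    6: {"lanes": 16, "profile": "LP"},
    7: {"lanes": 16, "profile": "FH"},
}


def candidate_slots(min_lanes, profile=None):
    """Return slot numbers wide enough for a card.

    If profile ("FH" or "LP") is given, only slots known to have that
    profile are returned; slots with an unknown profile are skipped.
    """
    return sorted(
        slot
        for slot, info in GATEWAY_SLOTS.items()
        if info["lanes"] >= min_lanes
        and (profile is None or info["profile"] == profile)
    )
```

With this table, `candidate_slots(16, "FH")` yields slots 2 and 7, and `candidate_slots(8)` yields every available slot.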

The management switch for this generation of the solution is the Dell EMC PowerSwitch N2248X-ON (shown in Figure 9), used as a simple Layer 2 switch using 1 GbE ports.

Figure 9. Management switch - N2248X-ON

For InfiniBand deployments, a pair of Mellanox QM8700 IB HDR 200 Gbps switches (shown in Figure 10) is required, unless the HPC cluster has enough free ports to connect all the redundant connections from the different PixStor solution servers.

Figure 10. InfiniBand HDR managed switch - QM8700

For Ethernet deployments, a pair of Mellanox SN3700 Ethernet 200 Gbps switches (shown in Figure 11) is required, unless the HPC cluster has enough free ports to connect all the redundant connections from the different PixStor storage servers. The CX6 adapters used by the solution are VPI adapters, so their ports can be configured as InfiniBand or Ethernet as needed. There is also a 100 Gbps version of the switch, the SN3700C (not shown).

Figure 11. Ethernet 200 Gbps managed switch - SN3700
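As an illustration of what configuring a VPI port as InfiniBand or Ethernet can look like, the sketch below builds (but does not run) an `mlxconfig` command line from the NVIDIA/Mellanox firmware tools, where the `LINK_TYPE_P1` and `LINK_TYPE_P2` firmware parameters take the value 1 for InfiniBand and 2 for Ethernet. This is not taken from the validated design: the helper name is hypothetical, the device path in the usage note is a placeholder, and deployment services may use a different procedure. A firmware reset or reboot is normally needed before a new link type takes effect.

```python
# mlxconfig LINK_TYPE values for VPI adapters.
LINK_TYPE = {"infiniband": 1, "ethernet": 2}


def vpi_link_type_command(device, mode, ports=(1,)):
    """Build the argv list for setting the link type of VPI ports.

    device: adapter device name as seen by the firmware tools
            (placeholder; depends on the host).
    mode:   "infiniband" or "ethernet".
    ports:  port numbers to change (1 and/or 2).
    The command is only constructed here, never executed.
    """
    value = LINK_TYPE[mode]
    settings = [f"LINK_TYPE_P{port}={value}" for port in ports]
    return ["mlxconfig", "-d", device, "set", *settings]
```

For instance, `vpi_link_type_command("/dev/mst/mt4123_pciconf0", "ethernet")` produces the arguments for `mlxconfig -d /dev/mst/mt4123_pciconf0 set LINK_TYPE_P1=2`; the device path shown is only an example.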