Solution Validation GenAI LLM model fine-tuning

This section walks through the step-by-step validation of fine-tuning pretrained models from the open-source Hugging Face library. It covers three foundation models: OpenAI GPT, Google BERT, and Falcon.

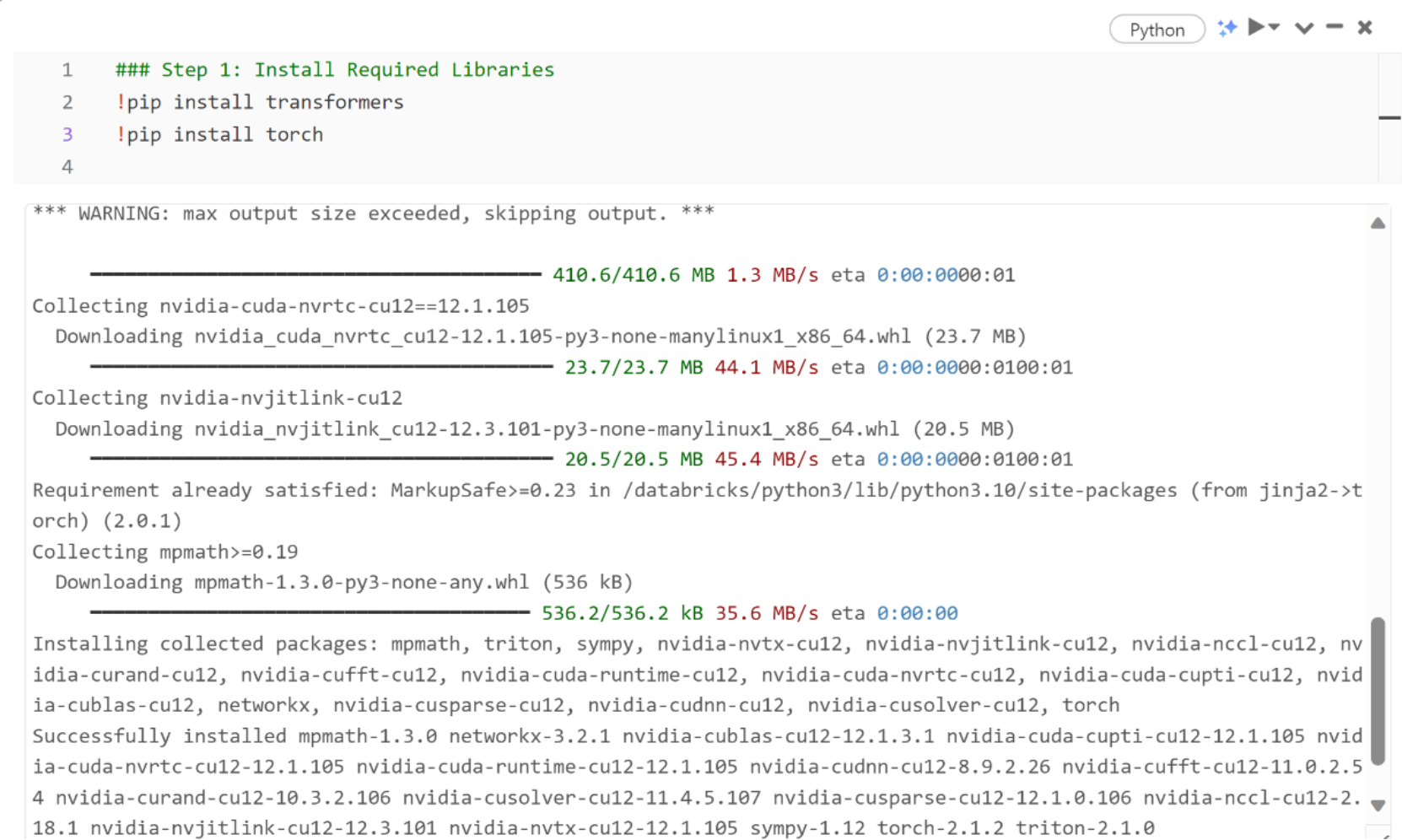

Step 1: Install Required Libraries

After setting up the solution and establishing connectivity, start the Databricks compute cluster with the resources intended for fine-tuning.

Create a new notebook and install the necessary libraries, such as Hugging Face's transformers and other Python libraries. Torch is installed for fine-tuning and inference.

Step 2: Prepare Data

Preparing a dataset for fine-tuning means shaping the data to meet the model's requirements; that preparation is beyond the scope of this validation. The data prepared from the raw dataset has already been stored for use.

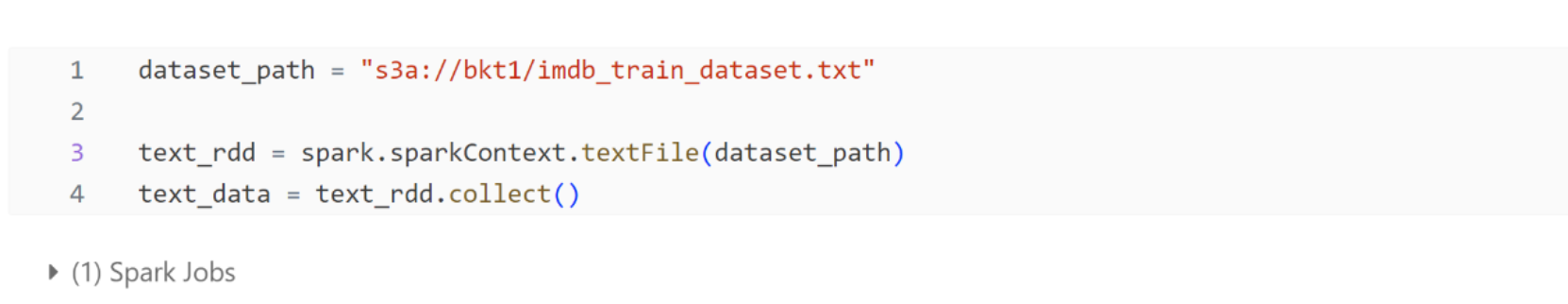

The dataset is placed in Dell APEX File Storage and is accessed over the S3A protocol. The Azure network setup provides access and exposes the storage cluster endpoint to the compute cluster. Ensure that user authentication and authorization are handled appropriately.
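The S3A access pattern can be sketched as a small set of Spark settings. This is a minimal illustration, not the configuration used in the validation: the endpoint URL, the credential placeholders, and the helper names `build_s3a_conf` and `read_text_dataset` are hypothetical, and the choice of path-style access is an assumption that depends on the deployment. The property names themselves are the standard Hadoop S3A keys.

```python
from typing import Dict


def build_s3a_conf(endpoint: str, access_key: str, secret_key: str,
                   use_ssl: bool = True) -> Dict[str, str]:
    """Return Spark settings that point the Hadoop S3A connector at a custom endpoint."""
    return {
        "spark.hadoop.fs.s3a.endpoint": endpoint,
        "spark.hadoop.fs.s3a.access.key": access_key,
        "spark.hadoop.fs.s3a.secret.key": secret_key,
        # Assumption: a non-AWS object endpoint is addressed with path-style URLs.
        "spark.hadoop.fs.s3a.path.style.access": "true",
        "spark.hadoop.fs.s3a.connection.ssl.enabled": str(use_ssl).lower(),
    }


def read_text_dataset(path: str, conf: Dict[str, str]):
    """Read a text dataset from an s3a:// path into a Spark DataFrame.

    pyspark is imported lazily so that build_s3a_conf stays dependency-free.
    """
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName("s3a-read-sketch")
    for key, value in conf.items():
        builder = builder.config(key, value)
    spark = builder.getOrCreate()
    return spark.read.text(path)


# Hypothetical values; real credentials should come from a secret store.
example_conf = build_s3a_conf("https://storage.example.internal",
                              "ACCESS_KEY_PLACEHOLDER", "SECRET_KEY_PLACEHOLDER")
```

On a cluster where a Spark session already exists, settings passed through the builder may not reach the Hadoop configuration, so these keys are more reliably entered in the cluster's Spark configuration before it starts.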

Step 3: Load data into Databricks Spark

Convert the raw data to fine-tuned data and store it in the same Dell APEX File Storage. The Spark cluster reads the fine-tuned data, and all input/output operations between the compute and storage clusters go through the Spark distributed computation framework, using the S3A protocol.

Step 4: Fine-tune the pre-trained LLM models

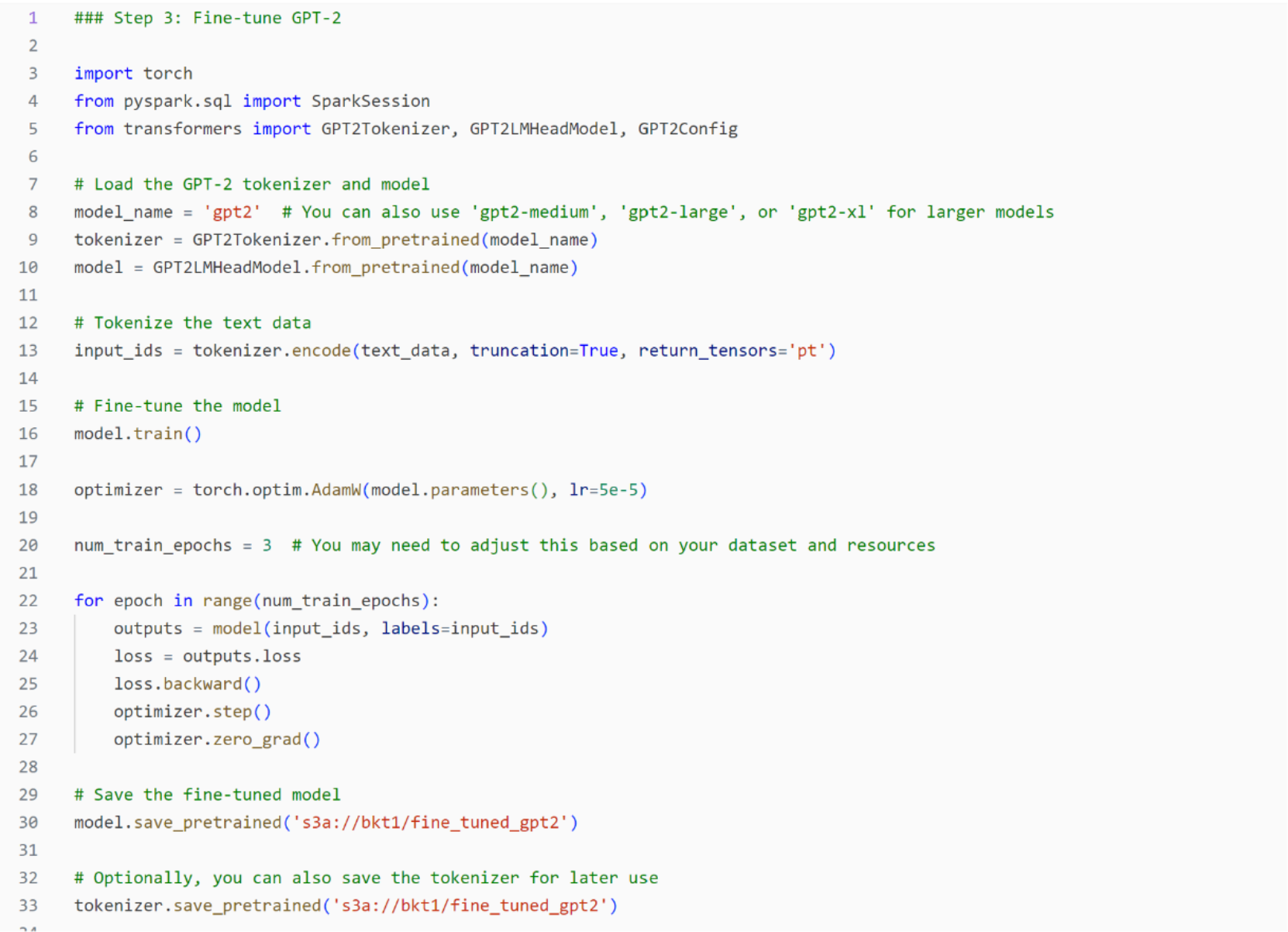

Import the Hugging Face transformer library and select the model and tokenizer.
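Selecting a model and tokenizer might look like the sketch below. The checkpoint names in `MODEL_REGISTRY` are assumptions chosen for illustration, since the validation does not state which checkpoints were used; the helper names are likewise hypothetical. The `Auto*` classes are part of the Hugging Face transformers library.

```python
from typing import Dict, Tuple

# Assumption: illustrative checkpoint names, not the ones used in the validation.
# Each entry maps a model family to (checkpoint id, training objective).
MODEL_REGISTRY: Dict[str, Tuple[str, str]] = {
    "gpt": ("openai-gpt", "causal"),
    "bert": ("bert-base-uncased", "masked"),
    "falcon": ("tiiuae/falcon-7b", "causal"),
}


def resolve_checkpoint(family: str) -> Tuple[str, str]:
    """Look up the checkpoint id and objective for a model family name."""
    key = family.strip().lower()
    if key not in MODEL_REGISTRY:
        raise KeyError(f"unknown model family: {family!r}")
    return MODEL_REGISTRY[key]


def load_model_and_tokenizer(family: str):
    """Download (or load from cache) the model and tokenizer for a family.

    transformers is imported lazily so the lookup above works without it.
    """
    from transformers import (AutoModelForCausalLM, AutoModelForMaskedLM,
                              AutoTokenizer)

    checkpoint, objective = resolve_checkpoint(family)
    tokenizer = AutoTokenizer.from_pretrained(checkpoint)
    # GPT and Falcon are causal language models; BERT is a masked language model.
    model_cls = AutoModelForCausalLM if objective == "causal" else AutoModelForMaskedLM
    model = model_cls.from_pretrained(checkpoint)
    return model, tokenizer
```

Keeping the family-to-checkpoint mapping in one place lets the same notebook cells be reused for all three models by changing a single argument.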

OpenAI GPT: Import GPT Model and Tokenizer.

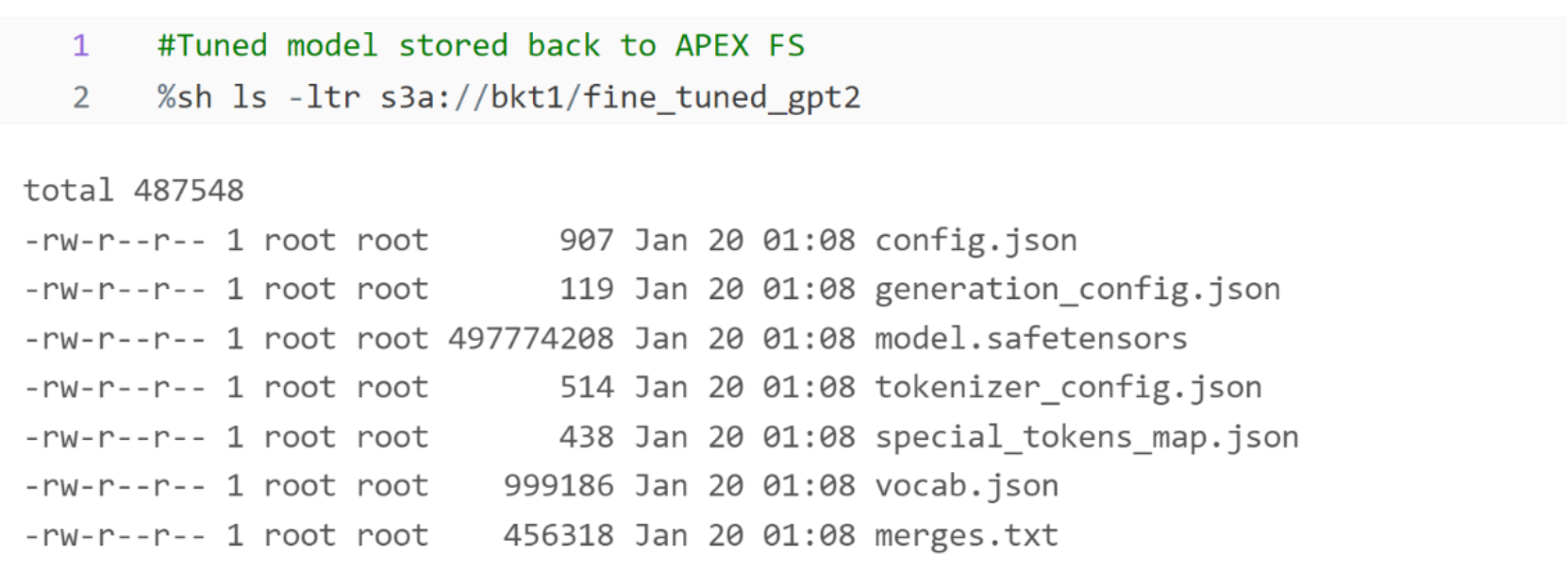

OpenAI GPT: Tokenize the text data, train, optimize, and save the fine-tuned model and tokenizer back into Dell APEX File Storage.

OpenAI GPT: Verify that the fine-tuned model and tokenizer were saved back into Dell APEX File Storage.
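One way to perform this kind of verification is to check the output directory for the files that `save_pretrained` normally writes. The sketch below assumes the storage location is visible as a local or mounted path (hypothetical here); for a pure `s3a://` location, an equivalent listing through Spark or the Hadoop file system API would be needed instead. The helper name `missing_artifacts` is illustrative.

```python
from pathlib import Path
from typing import List

# Files normally written by save_pretrained for a model and its tokenizer.
REQUIRED_FILES = ("config.json", "tokenizer_config.json")
# Weight files may be single or sharded, in safetensors or PyTorch format.
WEIGHT_PATTERNS = ("model*.safetensors", "pytorch_model*.bin")


def missing_artifacts(model_dir: str) -> List[str]:
    """Return the names of expected artifacts that are absent from model_dir."""
    root = Path(model_dir)
    missing = [name for name in REQUIRED_FILES if not (root / name).is_file()]
    has_weights = any(any(root.glob(pattern)) for pattern in WEIGHT_PATTERNS)
    if not has_weights:
        missing.append("model weights")
    return missing
```

An empty return value indicates that the configuration, tokenizer configuration, and at least one weight file are present; it does not confirm that the weights load correctly.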

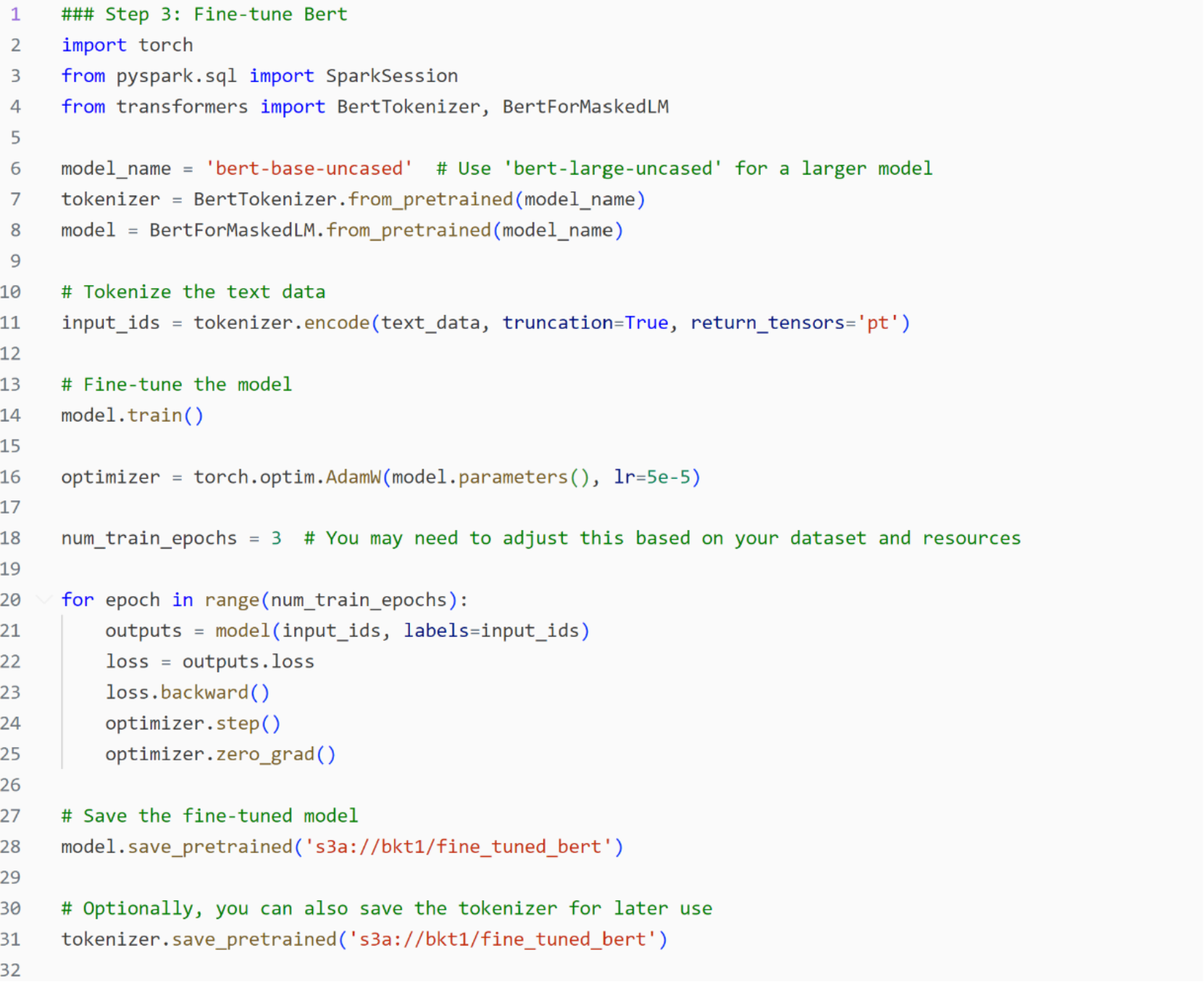

Google BERT: Import BERT Model and Tokenizer.

Google BERT: Tokenize the text data, train, optimize, and save the fine-tuned model and tokenizer back into Dell APEX File Storage.

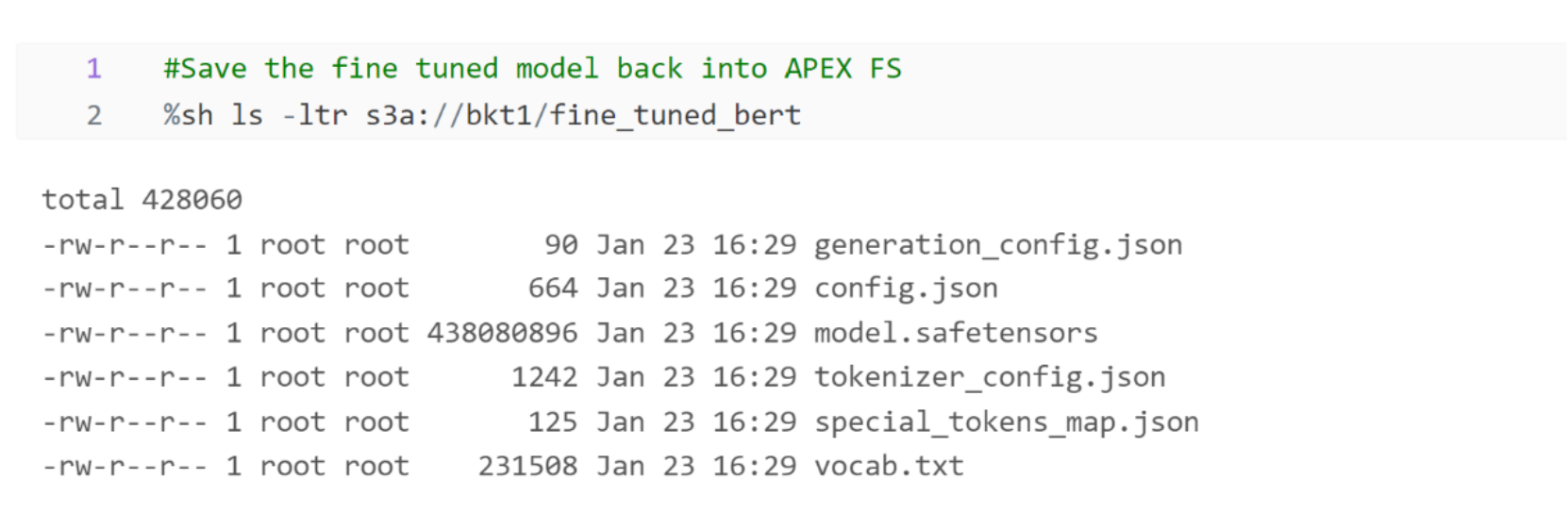

Google BERT: Verify that the fine-tuned model and tokenizer were saved back into Dell APEX File Storage.

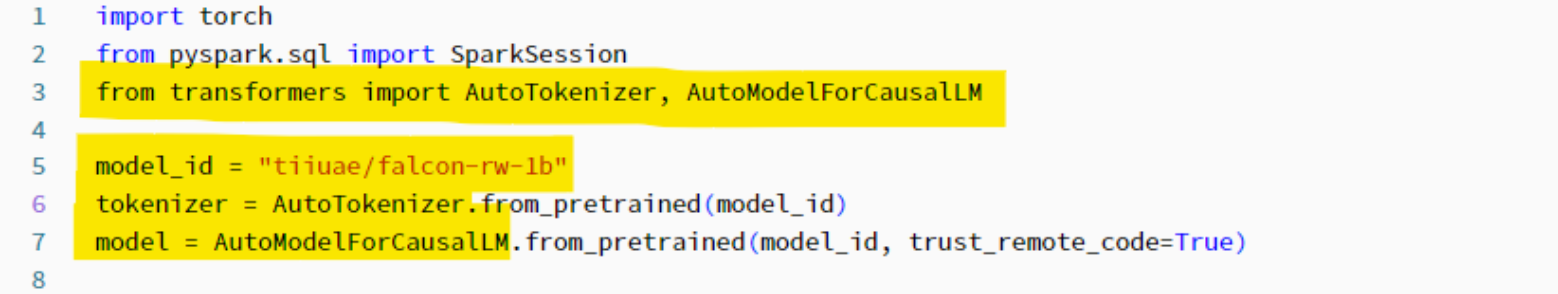

Falcon: Import Falcon Model and Tokenizer

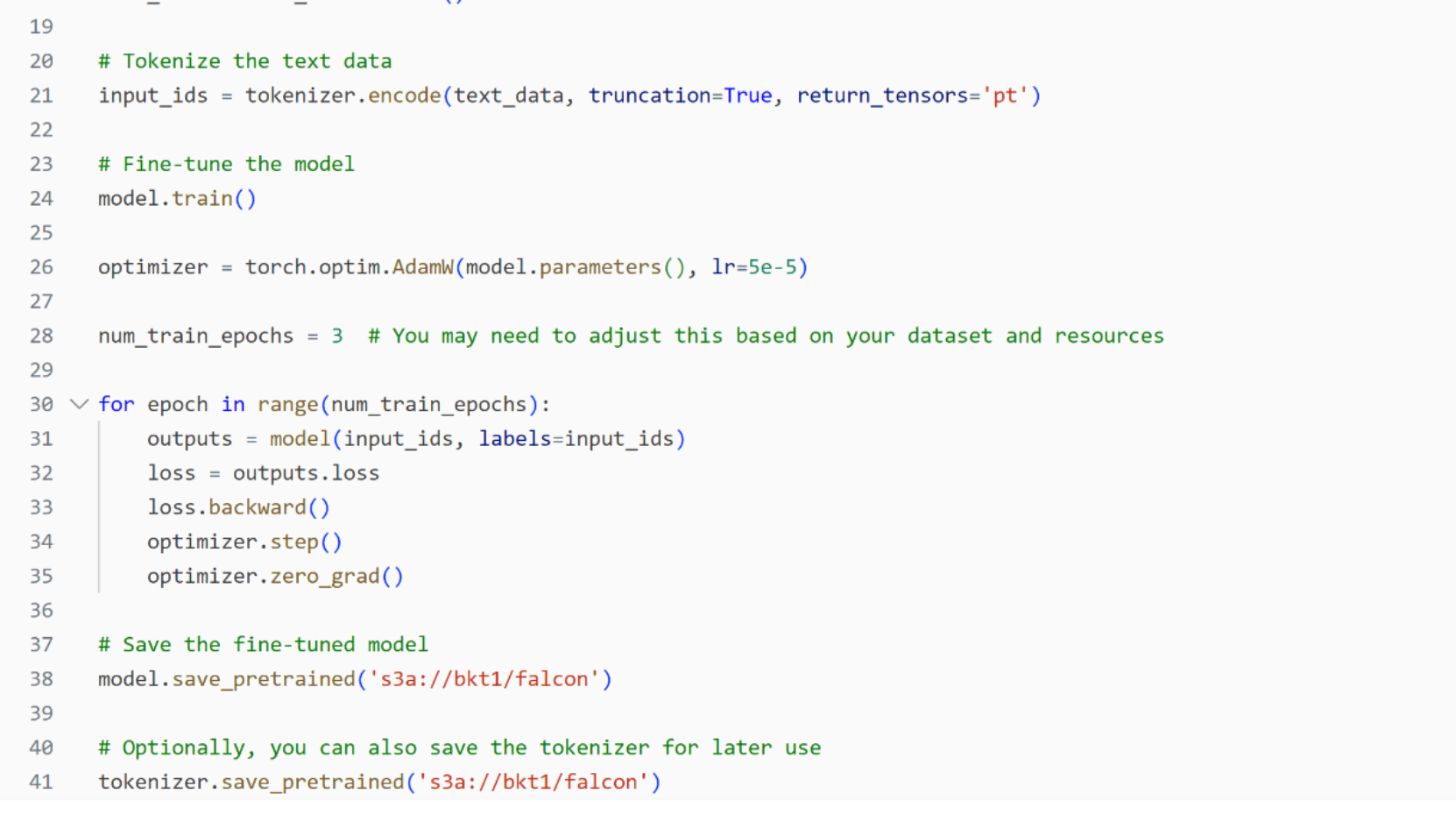

Falcon: Tokenize the text data, train, optimize, and save the fine-tuned model and tokenizer back into Dell APEX File Storage.
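The tokenize, train, and save sequence for a causal model such as Falcon or GPT can be sketched with the transformers `Trainer`. This is a minimal illustration under stated assumptions, not the notebook code from the validation: the function names, batch size, and sequence length are hypothetical, and `output_dir` is assumed to be a path the cluster can write to and that maps to the storage. BERT would need a masked-language-model variant of the same flow.

```python
from typing import List, Sequence, Tuple


def split_train_eval(texts: Sequence[str],
                     eval_fraction: float = 0.1) -> Tuple[List[str], List[str]]:
    """Hold out the last eval_fraction of the texts (at least one) for evaluation."""
    if not 0.0 < eval_fraction < 1.0:
        raise ValueError("eval_fraction must be between 0 and 1")
    n_eval = max(1, int(len(texts) * eval_fraction))
    return list(texts[:-n_eval]), list(texts[-n_eval:])


def fine_tune_causal_lm(checkpoint: str, texts: Sequence[str], output_dir: str,
                        epochs: int = 1, max_length: int = 128) -> None:
    """Tokenize texts, fine-tune a causal LM, and save the model and tokenizer."""
    # Heavy dependencies are imported lazily; they are needed only when training.
    from transformers import (AutoModelForCausalLM, AutoTokenizer,
                              DataCollatorForLanguageModeling, Trainer,
                              TrainingArguments)

    tokenizer = AutoTokenizer.from_pretrained(checkpoint)
    if tokenizer.pad_token is None:
        # Assumption: reuse an existing special token for padding.
        tokenizer.pad_token = tokenizer.eos_token or tokenizer.unk_token
    model = AutoModelForCausalLM.from_pretrained(checkpoint)

    # Tokenize without padding; the collator pads each batch dynamically.
    enc = tokenizer(list(texts), truncation=True, max_length=max_length)
    train_dataset = [
        {"input_ids": ids, "attention_mask": mask}
        for ids, mask in zip(enc["input_ids"], enc["attention_mask"])
    ]

    # mlm=False makes the collator copy input_ids into labels for causal LM loss.
    collator = DataCollatorForLanguageModeling(tokenizer=tokenizer, mlm=False)
    args = TrainingArguments(output_dir=output_dir, num_train_epochs=epochs,
                             per_device_train_batch_size=2, report_to="none")
    trainer = Trainer(model=model, args=args, train_dataset=train_dataset,
                      data_collator=collator)
    trainer.train()

    # Save both artifacts so that inference can reload them from one directory.
    trainer.save_model(output_dir)
    tokenizer.save_pretrained(output_dir)
```

The held-out portion returned by `split_train_eval` is intended for the evaluation that precedes inference; a model the size of Falcon would in practice also need memory-saving measures that this sketch leaves out.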

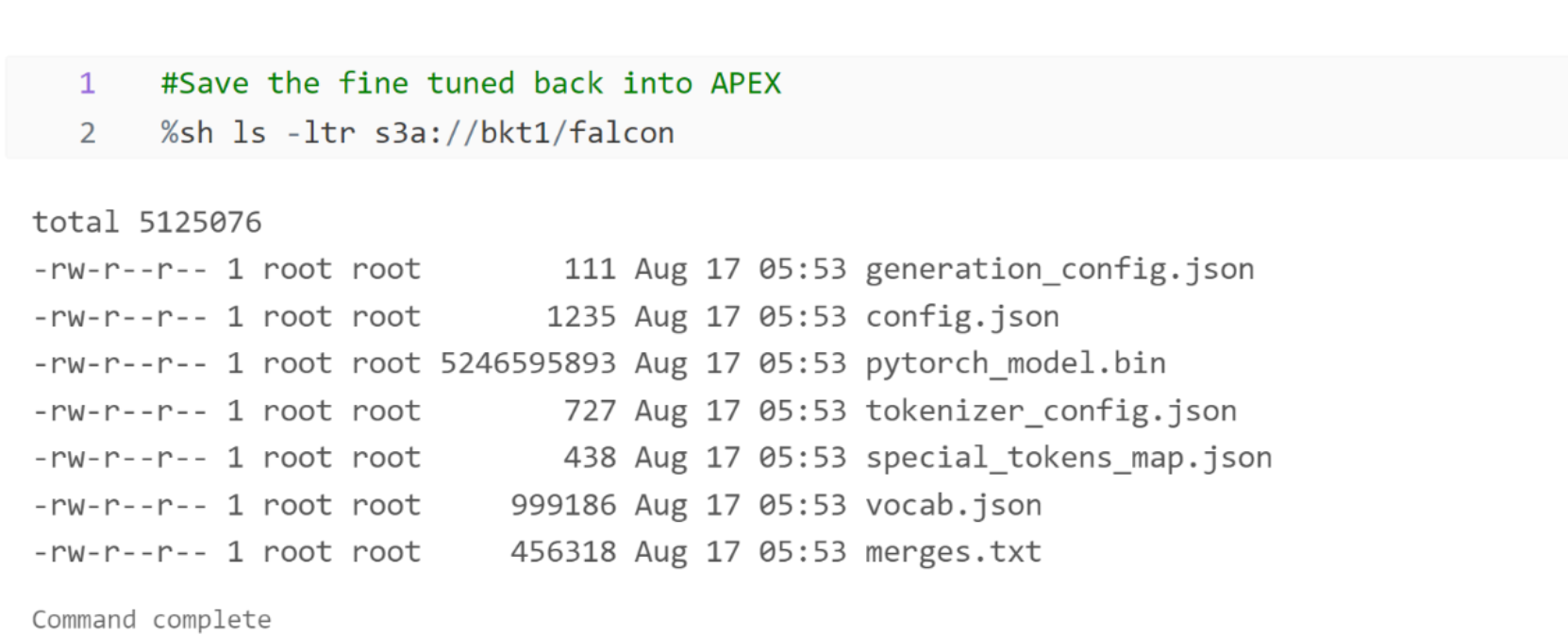

Falcon: Verify that the fine-tuned model and tokenizer were saved back into Dell APEX File Storage.

Step 5: Inference with fine-tuned Model

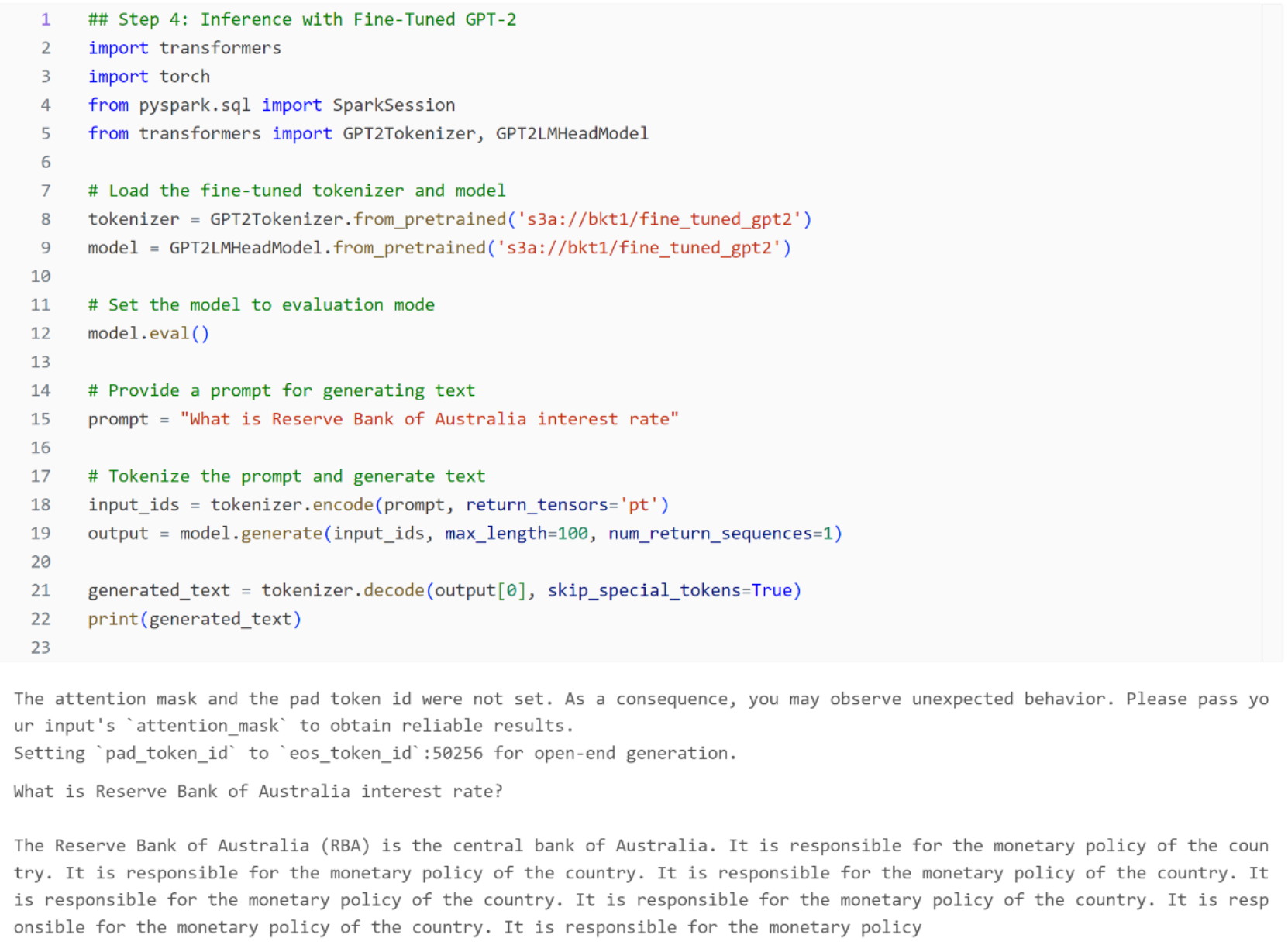

OpenAI GPT: Read the fine-tuned data from Dell APEX File Storage, evaluate the model, and run a simple inference that takes a prompt and generates text output.

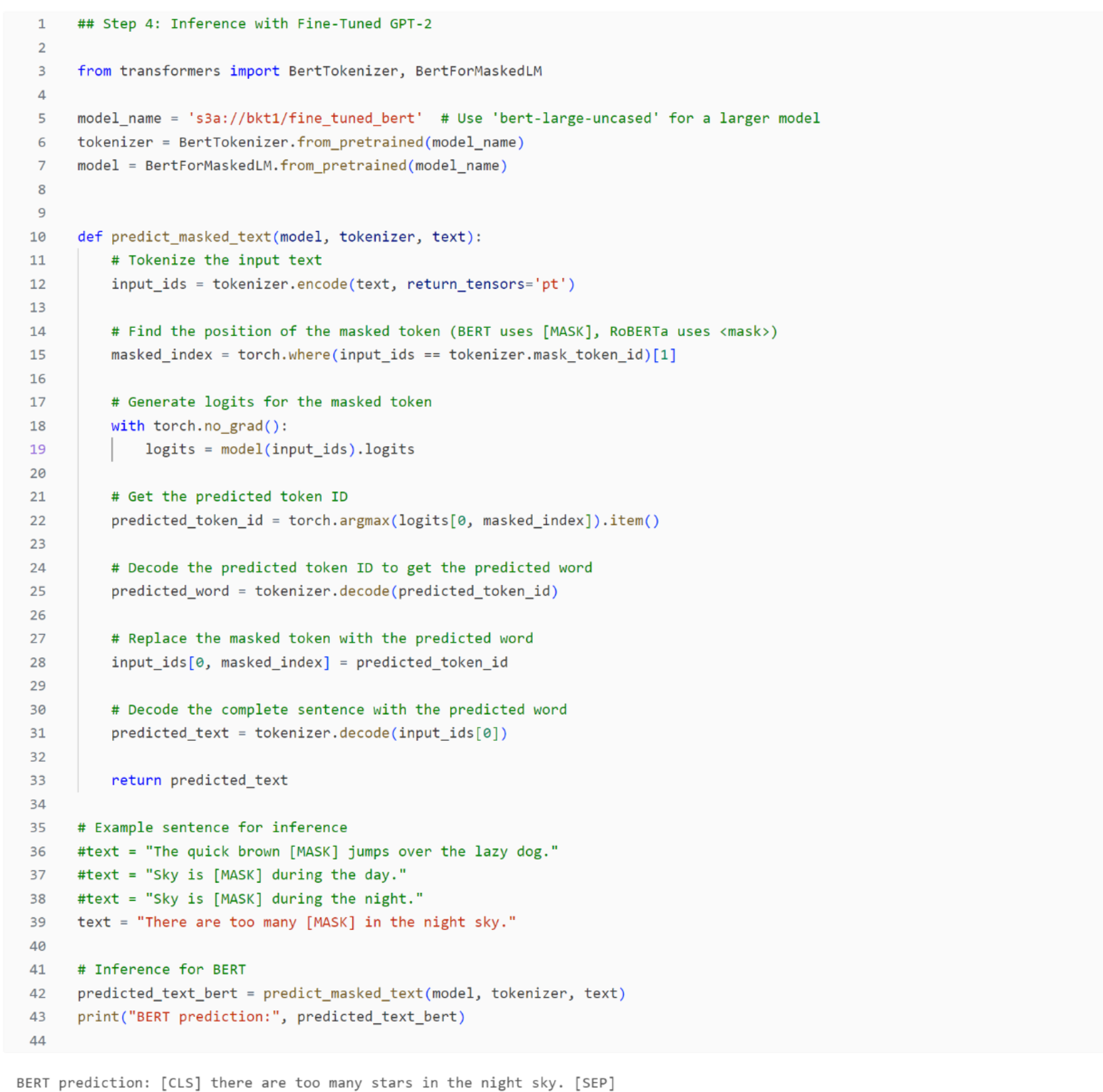

Google BERT: Read the fine-tuned data from Dell APEX File Storage, evaluate the model, and run a simple inference that takes a prompt and generates text output.

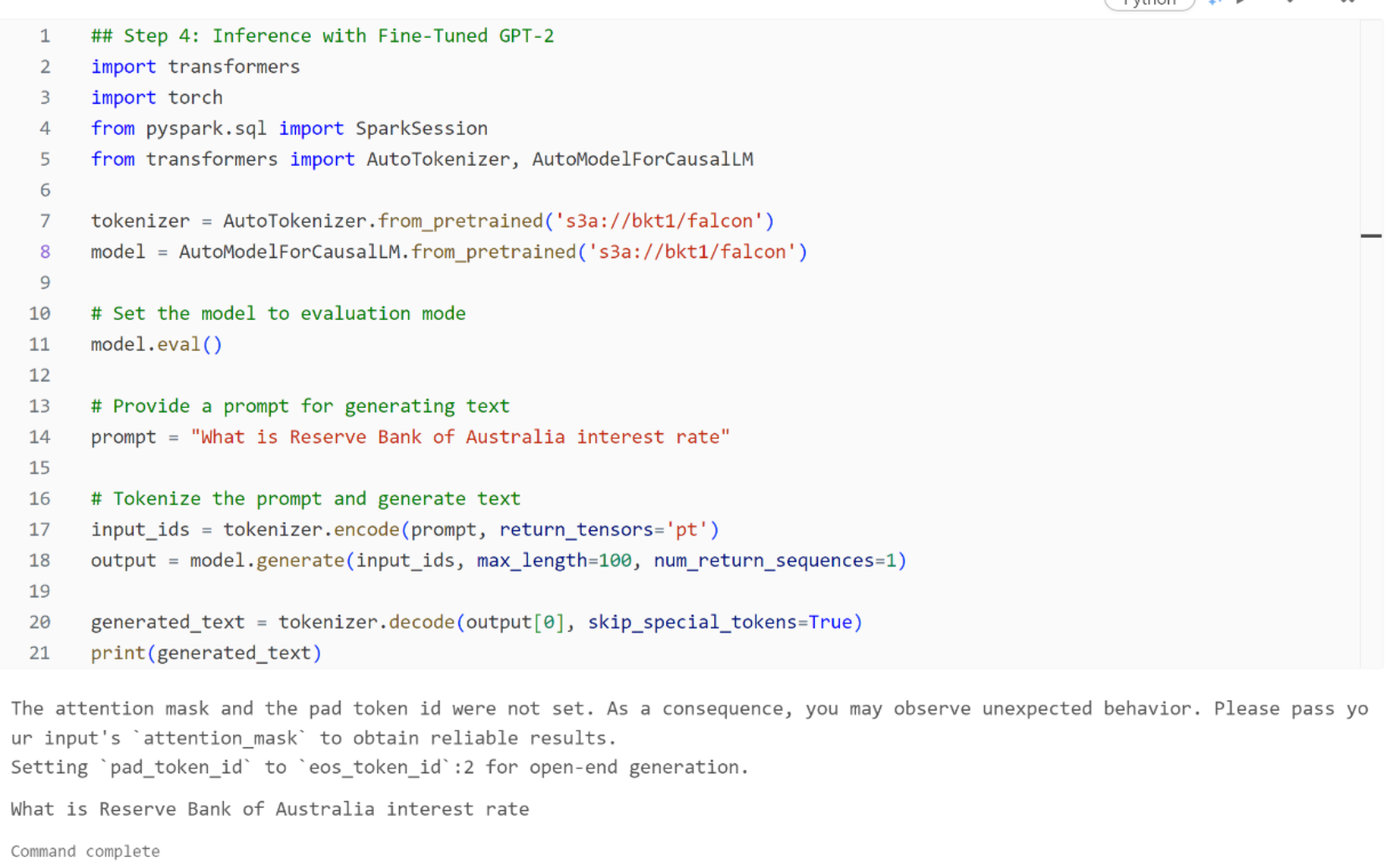

Falcon: Read the fine-tuned data from Dell APEX File Storage, evaluate the model, and run a simple inference that takes a prompt and generates text output.
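The evaluate-then-generate flow for the causal models (GPT and Falcon) can be sketched as follows. The function names and the `model_dir` path are hypothetical, and perplexity is used here as one common evaluation measure rather than the metric reported in the validation. BERT is a masked language model, so a comparable check for it would more typically fill in masked tokens than generate free text.

```python
import math


def perplexity(mean_loss: float) -> float:
    """Convert a mean cross-entropy loss (in nats) into perplexity."""
    return math.exp(mean_loss)


def generate_text(model_dir: str, prompt: str, max_new_tokens: int = 50) -> str:
    """Load a fine-tuned causal LM from model_dir and complete the prompt."""
    # Imported lazily; torch and transformers are needed only for inference.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_dir)
    model = AutoModelForCausalLM.from_pretrained(model_dir)
    model.eval()

    inputs = tokenizer(prompt, return_tensors="pt")
    with torch.no_grad():
        output_ids = model.generate(
            input_ids=inputs["input_ids"],
            attention_mask=inputs["attention_mask"],
            max_new_tokens=max_new_tokens,
        )
    # The returned sequence contains the prompt followed by the new tokens.
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

A lower perplexity on held-out text after fine-tuning than before suggests that the model has adapted to the dataset.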