
Key components

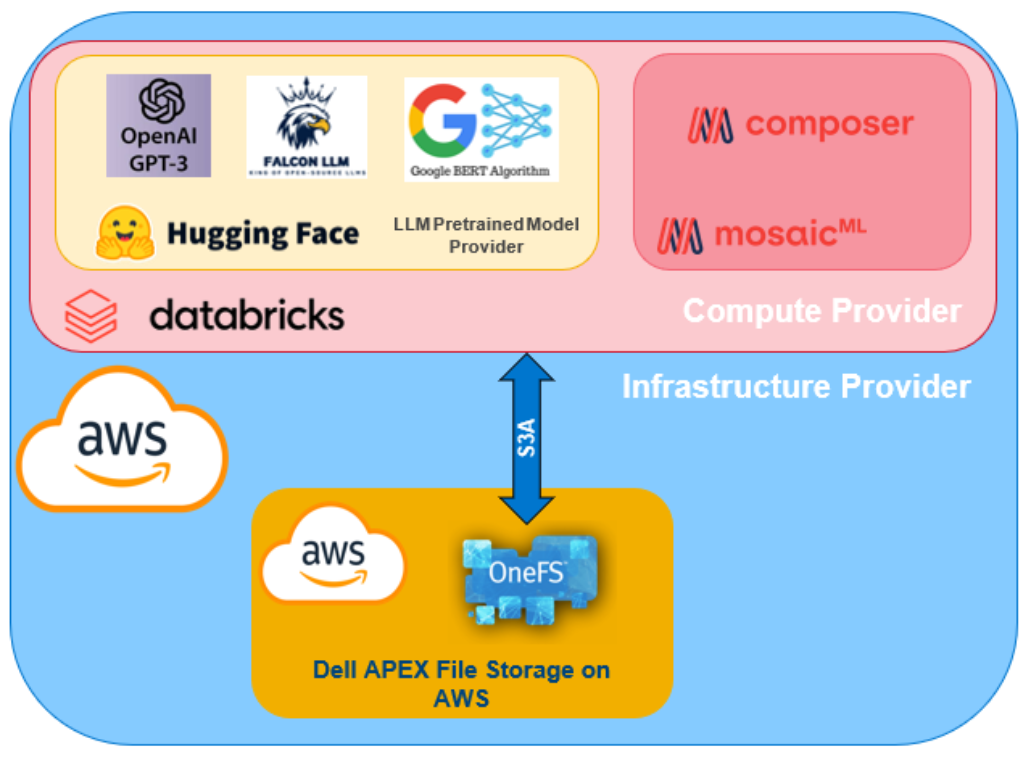

Figure 2 shows the logical architecture of the solution. This solution consists of the following components:

- Storage – Data for training, testing, and inferencing is stored in Dell APEX File Storage in the cloud.

- Compute – Databricks provides compute and orchestration for fine-tuning dataset preparation, LLM training, model fine-tuning, and inferencing.

- LLM libraries, models, and datasets – Hugging Face's Transformers library and MosaicML's open-source Streaming Dataset and Composer libraries offer pretrained LLMs and synthetic datasets for fine-tuning. They also provide tools to refine raw data sourced from Dell APEX File Storage, enabling efficient model fine-tuning and inferencing. We demonstrate the use of several LLMs, such as OpenAI GPT-3, Falcon 1B, and Google BERT.

- Cloud Infrastructure Provider – The entire solution is hosted on AWS.

Figure 2. Logical architecture of the solution
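
To make the storage-to-compute data flow concrete, the following is a minimal sketch of how raw records on a mounted file share might be refined before fine-tuning. It uses only the Python standard library and is not the Hugging Face or MosaicML API. The function name `iter_clean_records`, the mount path in the usage note, the JSON Lines layout, and the `text` field are all assumptions made for illustration.

```python
import json
import tempfile
from pathlib import Path
from typing import Iterator


def iter_clean_records(data_root: Path, min_chars: int = 20) -> Iterator[dict]:
    """Yield cleaned text records from every .jsonl file under data_root.

    Assumed layout (hypothetical): one JSON object per line with a "text" field.
    """
    for path in sorted(data_root.rglob("*.jsonl")):
        with path.open(encoding="utf-8") as handle:
            for line in handle:
                line = line.strip()
                if not line:
                    continue  # skip blank lines
                record = json.loads(line)
                # Collapse runs of whitespace into single spaces.
                text = " ".join(str(record.get("text", "")).split())
                if len(text) >= min_chars:
                    yield {"text": text, "source": path.name}


if __name__ == "__main__":
    # A temporary directory stands in for the mounted file share.
    with tempfile.TemporaryDirectory() as tmp:
        sample = Path(tmp) / "sample.jsonl"
        sample.write_text(
            json.dumps({"text": "  An example   raw record for fine-tuning.  "}) + "\n",
            encoding="utf-8",
        )
        for item in iter_clean_records(Path(tmp)):
            print(item)
```

In a deployment like the one described, `data_root` would point at wherever the file storage is mounted on the compute cluster (for example, a hypothetical `/mnt/apex-fs/raw`), and the cleaned records would then be passed to a tokenizer or converted to a streaming-friendly format.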