
Configuration and tuning


The Dell Technologies Validated Design for HPC NFS Storage is optimized and tuned for the best general-purpose performance in an HPC environment. It assumes common uses such as home directories for many users with a high number of files per directory, large files, and random access to relatively large files (several GiB) from concurrent independent processes.

Concurrent access to a shared file is supported, but its performance is not characterized in this document. In addition, the design assumes that data is protected by two sets of parity (RAID 6 volumes) and that writes are automatically spread across a large number of disks without any extra configuration: eight 8+2 RAID 6 volumes are striped via LVM, so that 80 HDDs work together, and are then formatted as a single XFS file system. The file system is shared to HPC client nodes via the latest network file system version (NFSv4), with the NFS service hosted in a highly available (HA) environment (an active-passive HA cluster) that has as few single points of failure as possible (ideally none).
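The disk arithmetic behind the "eight 8+2 RAID 6 volumes = 80 HDDs" statement can be sketched in a few lines of Python. This is only an illustration: the class and method names are hypothetical, and the capacity figure is raw data capacity in decimal TB, before any array, LVM, or XFS overhead, so it is not the formatted size of the file system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Raid6Layout:
    """Illustrative model of the striped RAID 6 layout described in the text."""

    volumes: int = 8       # RAID 6 volumes striped together via LVM
    data_disks: int = 8    # data disks per volume (the "8" in 8+2)
    parity_disks: int = 2  # parity disks per volume (the "2" in 8+2)

    @property
    def total_disks(self) -> int:
        # Every disk in every volume takes part in the stripe.
        return self.volumes * (self.data_disks + self.parity_disks)

    @property
    def total_data_disks(self) -> int:
        # Only the data disks contribute capacity; parity is overhead.
        return self.volumes * self.data_disks

    def raw_data_capacity_tb(self, disk_tb: float) -> float:
        # Raw data capacity in decimal TB, before any formatting overhead.
        return self.total_data_disks * disk_tb


layout = Raid6Layout()
print(layout.total_disks, layout.total_data_disks)
```

With the defaults taken from the text, the layout has 80 disks in total, of which 64 hold data and 16 hold parity.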

When third-generation Intel Xeon Scalable processors are used with Red Hat Enterprise Linux 8, the minimum supported release is RHEL 8.2. However, RHEL 8.3 was selected because it offers newer versions of several vital components, which brings some improvements and a longer production time before software updates need to be considered.

The system design and deployment guidelines of this solution remain aligned with the NFS Storage Solution (NSS)-High Availability (HA) solutions family. For example, NSS7.4-HA shares with this solution the same Dell EMC PowerVault ME4084, switched rack PDUs for server fencing (APC AP7921B), and Ethernet switching with the PowerSwitch S3048-ON.

This Design for NFS Storage relies on Dell EMC PowerEdge R750 servers with Intel’s third-generation Xeon Scalable processors, codenamed “Ice Lake.” The improved Intel Xeon Scalable processors feature up to 40 cores, up to 60 MiB of last level cache, eight 3200 MT/s memory channels per CPU socket (one or two DIMMs per memory channel), and PCIe 4.0 slots. These are all key features for localized performance improvements, since the bottleneck is typically in the back-end storage. The new high-speed networking, InfiniBand HDR100, provides enhancements such as self-healing networking technology, improved message rate, adaptive routing, congestion control, quality of service, security, offloading, and more.

The new operating system, RHEL 8.3, provides important improvements in many different areas, including the I/O subsystem, the XFS file system, the HA suite, networking, and resource monitoring. However, the central improvement offered by RHEL 8.x is that XFS advances allowed Red Hat to increase the default supported capacity limit from 500 TiB in RHEL 7.x to 1 PiB in RHEL 8.x. This allows the Dell EMC PowerVault ME4084 to use all the new supported 3.5” NLS HDD capacities, up to 18 TB disks.

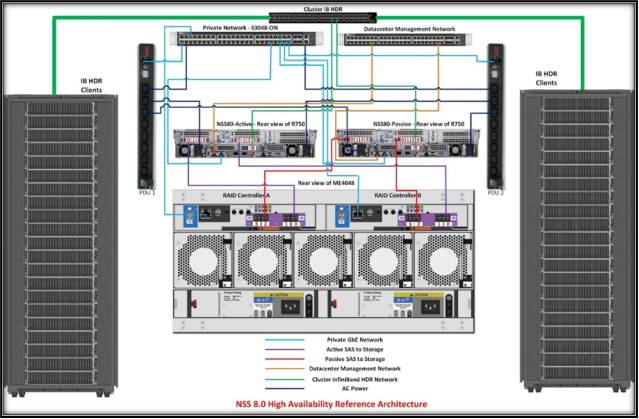

Figure 1 shows the architecture for the Dell Technologies Validated Design for NFS Storage. The pair of PowerEdge R750 servers work as NFS servers in an active-passive high availability cluster configuration, both connected via 12 Gb/s SAS cables to the Dell EMC PowerVault ME4084 storage array.

There are two SAS HBA355e cards in each NFS server, and each HBA card is connected to a different PowerVault ME4084 SAS controller via SAS cables. Therefore, a single SAS HBA card or SAS cable failure does not impact data availability on the active server. However, performance would be reduced because, instead of accessing 4 LUNs per SAS cable, all 8 LUNs would use the same SAS path. The storage array configuration remains essentially the same as in recent ME4-based NFS solutions, and the HA cluster configuration is very similar to that used by version 7.4; however, the latest version of the HA software included with RHEL 8 entailed some changes.
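The effect of losing one SAS path can be illustrated with a toy model. The sketch below simply spreads LUNs round-robin over whichever paths are healthy; it is not how the operating system actually selects paths, and the path names and the function name are hypothetical.

```python
from typing import Dict, List


def luns_per_path(luns: List[int], healthy_paths: List[str]) -> Dict[str, List[int]]:
    """Spread LUNs round-robin over the healthy SAS paths (toy model only)."""
    if not healthy_paths:
        raise ValueError("no healthy SAS path left: data is unavailable")
    assignment: Dict[str, List[int]] = {path: [] for path in healthy_paths}
    for index, lun in enumerate(luns):
        # Pick the next healthy path in turn for each LUN.
        path = healthy_paths[index % len(healthy_paths)]
        assignment[path].append(lun)
    return assignment


all_luns = list(range(8))  # the 8 LUNs mentioned in the text

# Normal operation: two healthy paths, hypothetical names.
normal = luns_per_path(all_luns, ["sas-path-A", "sas-path-B"])

# One HBA or cable has failed: a single path carries every LUN.
degraded = luns_per_path(all_luns, ["sas-path-A"])

print({path: len(luns) for path, luns in normal.items()})
print({path: len(luns) for path, luns in degraded.items()})
```

In this model, normal operation places 4 LUNs on each path, while the degraded case places all 8 LUNs on the surviving path, which matches the reduced-performance scenario described above.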

It is recommended to configure the NFS servers’ BIOS settings based on the HPC profile, as described in the blog BIOS characterization for Intel Ice Lake processors. This BIOS tuning includes disabling the logical processor, setting the system profile to Performance Optimized, and enabling the other settings that affect transfers: DeadLineLlcAlloc, LlcPrefetch, XptPrefetch, UpiPrefetch, DcuIpPrefetcher, DcuStreamerPrefetcher, and ProcAdjCacheLine.
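One way to keep track of these recommendations is to hold them in a small table and compare it against the settings exported from a server. The sketch below is a minimal illustration, not a Dell tool. The seven prefetch and cache setting names come from the text; the keys `LogicalProc` and `SysProfile` and the value strings (`Enabled`, `Disabled`, `PerfOptimized`) are assumptions about how the settings are spelled in an export, and the function name is hypothetical.

```python
from typing import Dict, Optional, Tuple

# Recommended values from the text. Key and value spellings for the first
# two entries are assumptions and may differ in a real BIOS export.
RECOMMENDED_BIOS: Dict[str, str] = {
    "LogicalProc": "Disabled",      # assumed key for "logical processor"
    "SysProfile": "PerfOptimized",  # assumed key/value for "Performance Optimized"
    "DeadLineLlcAlloc": "Enabled",
    "LlcPrefetch": "Enabled",
    "XptPrefetch": "Enabled",
    "UpiPrefetch": "Enabled",
    "DcuIpPrefetcher": "Enabled",
    "DcuStreamerPrefetcher": "Enabled",
    "ProcAdjCacheLine": "Enabled",
}


def bios_drift(current: Dict[str, str]) -> Dict[str, Tuple[str, Optional[str]]]:
    """Return {setting: (recommended, actual)} for every setting that differs.

    A setting missing from `current` is reported with an actual value of None.
    """
    return {
        name: (wanted, current.get(name))
        for name, wanted in RECOMMENDED_BIOS.items()
        if current.get(name) != wanted
    }


# Example: a server where hyper-threading was left enabled.
example = dict(RECOMMENDED_BIOS, LogicalProc="Enabled")
print(bios_drift(example))
```

An empty result means the exported settings match the recommendations; any other result lists each mismatch alongside the recommended value.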

However, since storage solutions are I/O bound and this solution has HBA adapters under both sockets, Sub-NUMA Cluster is disabled, which avoids the latency of crossing domains within each socket and leaves more memory available for buffers and cache. Updating to the latest BIOS and firmware versions for all the different server components (SSDs, PERC, backplane, LOM, InfiniBand CX6, PSU, etc.), including the iDRAC, is recommended.

Similarly, using the newest firmware version for the PowerVault ME4084 controller (currently version GT280R008-04) and its HDDs is highly recommended. If it is possible to modify the client nodes’ InfiniBand configuration, for best IP over InfiniBand (IPoIB) performance, using datagrams and MTU of 4096 for all InfiniBand connections is recommended.
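The IPoIB recommendation can be verified on a Linux client by reading the interface's `mode` and `mtu` files under `/sys/class/net`. The following is a minimal sketch under stated assumptions: the function name is hypothetical, the expected values are taken directly from the recommendation above, and the `sysfs_root` parameter exists only so the check can be exercised against a test directory.

```python
from pathlib import Path
from typing import List


def check_ipoib(
    interface: str,
    expected_mode: str = "datagram",  # recommended IPoIB mode from the text
    expected_mtu: int = 4096,         # recommended MTU from the text
    sysfs_root: str = "/sys/class/net",
) -> List[str]:
    """Return a list of human-readable problems; an empty list means OK."""
    base = Path(sysfs_root) / interface
    # IPoIB interfaces expose their transport mode and MTU as text files.
    mode = (base / "mode").read_text().strip()
    mtu = int((base / "mtu").read_text().strip())

    problems: List[str] = []
    if mode != expected_mode:
        problems.append(f"{interface}: mode is {mode!r}, expected {expected_mode!r}")
    if mtu != expected_mtu:
        problems.append(f"{interface}: MTU is {mtu}, expected {expected_mtu}")
    return problems
```

For example, calling `check_ipoib("ib0")` on a client node, where `ib0` is an assumed interface name, returns an empty list when the interface already follows the recommendation.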

Figure 2. Architecture for the Dell Technologies Validated Design for HPC NFS storage

The HPC Engineering team performed a series of high availability and performance benchmark tests on this NFS Storage solution to compare its results with those of the previous version, NSS7.4-HA.