
Configuring the cluster


Configure the SAP HANA replication

Before you start, configure an hdbuserstore key for a backup user. This design guide uses “backup” as the key name. On both hosts, run the following commands:

# su - th0adm

# hdbuserstore -i add backup localhost:30013@SYSTEMDB system

Note: For data protection reasons, create a dedicated backup user with the appropriate permissions on your databases. Alternatively, you can use the SYSTEM user, as this example does.
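The endpoint localhost:30013 in the key follows the SAP HANA port convention, in which the SQL port of the system database is 3<instance number>13. The following sketch derives that port for other instance numbers. The function name systemdb_sql_port is illustrative and not part of SAP HANA.

```shell
#!/bin/sh
# Hypothetical helper: derive the SYSTEMDB SQL port (3<NN>13) from a
# two-digit SAP HANA instance number such as 00.
systemdb_sql_port() {
    case "$1" in
        [0-9][0-9]) printf '3%s13\n' "$1" ;;
        *) echo "instance number must be two digits" >&2; return 1 ;;
    esac
}

# Example: instance 00, as used in this guide.
systemdb_sql_port 00
```

For instance 00 the function prints 30013, the port used in the hdbuserstore command.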

To configure the SAP HANA replication:

- Create a backup of the system database and the tenant database on the first node, which will become the primary database, by running the following commands:

hana01:# su - th0adm

# hdbsql -i 00 -U backup -d SYSTEMDB "BACKUP DATA USING FILE ('/hana/shared/th0_sys')"

# hdbsql -i 00 -U backup -d SYSTEMDB "BACKUP DATA FOR TH0 USING FILE ('/hana/shared/th0_data')"

Note: Ensure that the backup file destination has enough free space available and is writable for the th0adm user.
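The check described in the note can be scripted before starting the backup. This is a minimal sketch, assuming a POSIX shell with df and awk available. The function name check_backup_dest and the free-space threshold in the example are placeholders, not values from this guide.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if directory $1 is writable by the
# current user and has at least $2 KiB of free space.
check_backup_dest() {
    dir=$1
    min_kb=$2
    [ -d "$dir" ] || { echo "$dir: not a directory" >&2; return 1; }
    [ -w "$dir" ] || { echo "$dir: not writable by $(id -un)" >&2; return 1; }
    # df -Pk prints one POSIX-format line per file system; column 4 is
    # the available space in 1024-byte blocks.
    avail_kb=$(df -Pk "$dir" | awk 'NR == 2 { print $4 }')
    [ "$avail_kb" -ge "$min_kb" ] || {
        echo "$dir: only ${avail_kb} KiB free, need ${min_kb} KiB" >&2
        return 1
    }
}

# Example (run as th0adm): require 50 GiB free in /hana/shared.
# The 50 GiB figure is an arbitrary placeholder, not a sizing rule.
# check_backup_dest /hana/shared $((50 * 1024 * 1024))
```

Run the check as the th0adm user so that the writability test reflects the account that performs the backup.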

- After running the backups, prepare the primary node for system replication by running the following command:

th0adm@hana01:# hdbnsutil -sr_enable --name=Node1

- Prepare the second database server for replication by stopping its database:

hana02:# su - th0adm

th0adm@hana02:# HDB stop

- Copy the security keys from the first server to the second server by running the following commands:

hana02:# scp root@hana01:/usr/sap/TH0/SYS/global/security/rsecssfs/key/SSFS_TH0.KEY /usr/sap/TH0/SYS/global/security/rsecssfs/key/SSFS_TH0.KEY

hana02:# scp root@hana01:/usr/sap/TH0/SYS/global/security/rsecssfs/data/SSFS_TH0.DAT /usr/sap/TH0/SYS/global/security/rsecssfs/data/SSFS_TH0.DAT
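Because registration depends on identical key files on both nodes, it can be worth confirming that the copies arrived intact. This is a minimal sketch, assuming sha256sum from GNU coreutils is installed. The function name verify_digest is illustrative, and the commented example assumes root SSH access to hana01, as the scp commands above do.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if FILE has the expected SHA-256 digest.
# Usage: verify_digest EXPECTED_HEX_DIGEST FILE
verify_digest() {
    expected=$1
    actual=$(sha256sum "$2" 2>/dev/null | awk '{ print $1 }')
    [ -n "$actual" ] && [ "$actual" = "$expected" ]
}

# Example on hana02 (commented out; needs SSH access to hana01):
# key=/usr/sap/TH0/SYS/global/security/rsecssfs/key/SSFS_TH0.KEY
# verify_digest "$(ssh root@hana01 sha256sum "$key" | awk '{ print $1 }')" "$key" \
#     && echo "SSFS key matches"
```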

- Register the second node by running the following command:

hana02:# su - th0adm

th0adm@hana02:# hdbnsutil -sr_register --remoteHost=hana01 --remoteInstance=00 --replicationMode=syncmem --name=Node2

- Start the database on the second node by running the following command:

hana02:# su - th0adm

th0adm@hana02:# HDB start

- Optionally, verify the status of the replication by running the following commands on the first node:

hana01:# su - th0adm

th0adm@hana01:# cdpy

th0adm@hana01:# python systemReplicationStatus.py

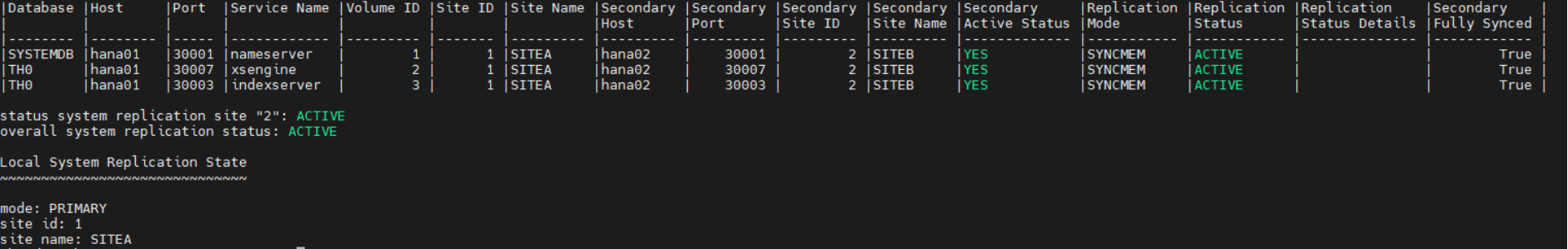

The command output confirms that the replication was successful, as shown in the following figure:

Figure 3. Replication command output – successful
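In addition to the table in its output, systemReplicationStatus.py reports the overall replication state through its exit code, which is convenient in scripts. This is a minimal sketch, assuming the exit codes 10 through 15 as documented by SAP. The function name sr_status_name is illustrative and not part of SAP HANA.

```shell
#!/bin/sh
# Map the exit code of systemReplicationStatus.py to a readable state.
sr_status_name() {
    case "$1" in
        10) echo "NoHSR" ;;
        11) echo "ERROR" ;;
        12) echo "UNKNOWN" ;;
        13) echo "INITIALIZING" ;;
        14) echo "SYNCING" ;;
        15) echo "ACTIVE" ;;
        *)  echo "unexpected exit code: $1" ;;
    esac
}

# Example as th0adm on the primary node, from the directory that cdpy
# switches to (commented out because it needs a running SAP HANA instance):
# python systemReplicationStatus.py >/dev/null; sr_status_name "$?"
```

A result of ACTIVE corresponds to the successful state shown in the figure.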

Configure Pacemaker

To configure Pacemaker in the cluster:

- Set up a network time protocol (NTP) client and start the client.

- Install the required packages on all nodes by running the following commands:

# yum -y install pcs pacemaker fence-agents-ipmilan resource-agents-sap-hana

# passwd hacluster

[enter a password for the user hacluster]

# systemctl enable pcsd.service; systemctl start pcsd.service

- Create the cluster with no pre-defined cluster resources by running the following commands:

# pcs host auth hana01 hana02

Username: hacluster

Password: [enter a password for the user hacluster]

# pcs cluster setup clhana hana01 hana02

# pcs cluster start --all

# pcs cluster enable --all

- Configure fencing for the cluster. Red Hat supports two fencing mechanisms: power fence agents, and I/O fence agents. For more information, see Fencing in a Red Hat High Availability Cluster. The example in this guide uses Intelligent Platform Management Interface (IPMI) as an industry-proven power fence agent mechanism.

- For general information about fencing scenarios, see Automating startup on failure in this guide.

- For the configuration steps, see Configuring fencing in a Red Hat High Availability cluster.

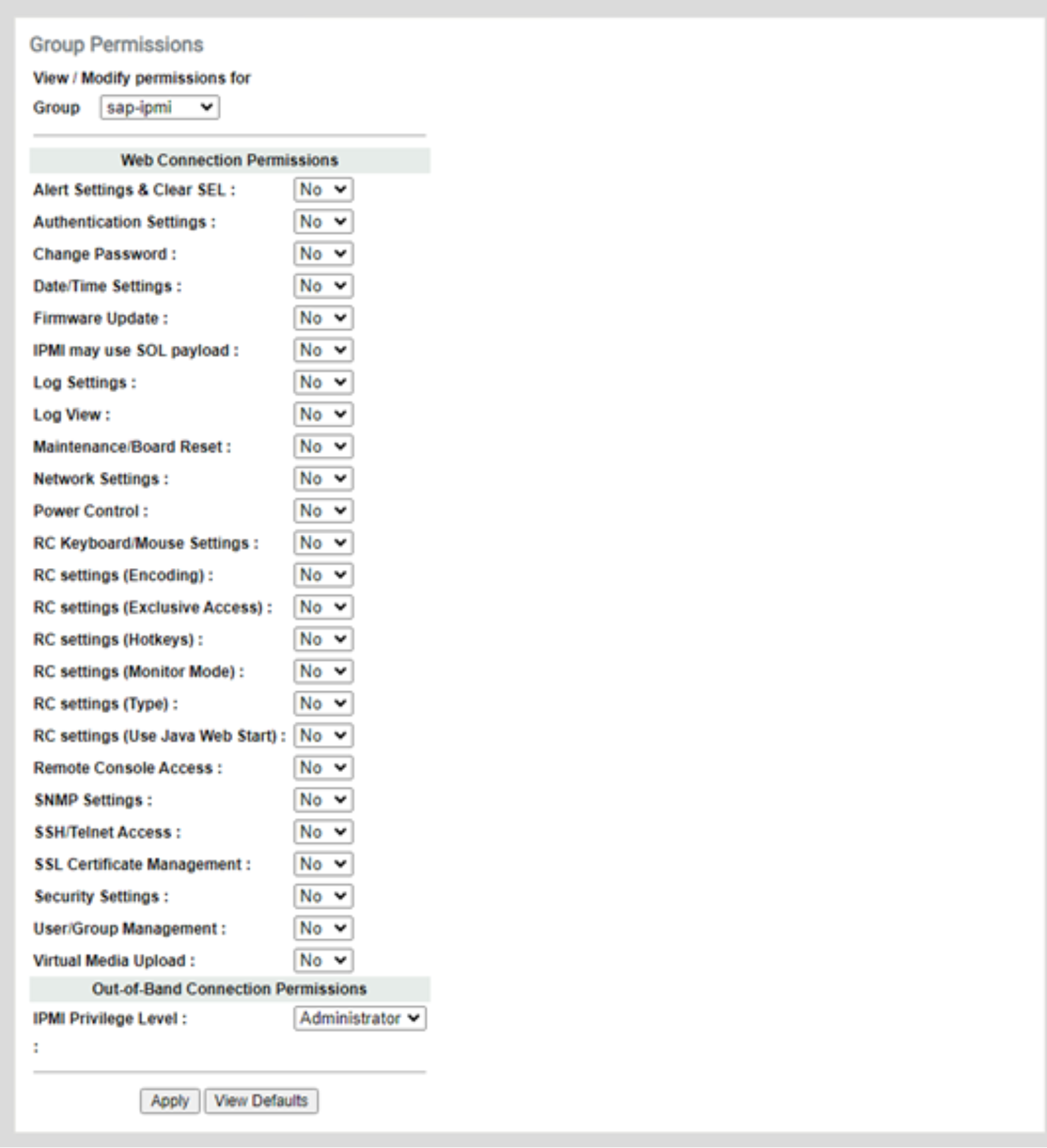

- Create IPMI permissions in the BMC User Managementpage in the main BMC web interface:

- Under Out-of-Band Connection Permissions, set the IPMI Privilege Level for a group to Administrator, as shown in the following figure:

Figure 4. Creating a group with IPMI permissions

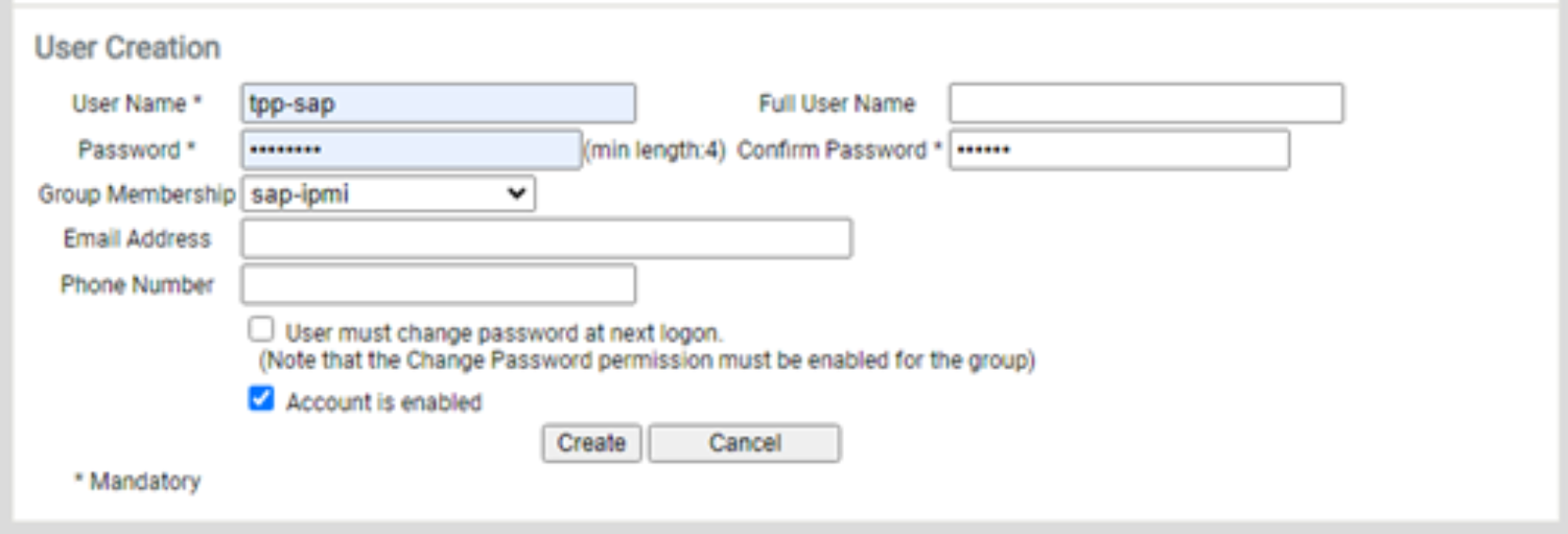

- Create a user that belongs to the IPMI group you created in the previous step, as shown in the following figure:

Figure 5. Creating a user with IPMI permissions

- Configure the “stonith” cluster resources by running the following commands:

hana01:# pcs stonith create Hana01Stonith fence_ipmilan pcmk_host_list=hana01 ip=192.168.9.100 username=sap-ipmi password=<ipmi user password> lanplus=1 op monitor interval=30s

hana01:# pcs constraint location Hana01Stonith avoids hana01

hana01:# pcs stonith create Hana02Stonith fence_ipmilan pcmk_host_list=hana02 ip=192.168.9.101 username=sap-ipmi password=<ipmi user password> lanplus=1 op monitor interval=30s

hana01:# pcs constraint location Hana02Stonith avoids hana02
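The stonith commands above follow one pattern per node: a fence device that targets the node through its BMC address, plus a location constraint that keeps the device off the node it fences. The following sketch only prints the commands for review and does not run pcs. The function name stonith_commands is illustrative, and the password stays a placeholder as in the guide.

```shell
#!/bin/sh
# Print (do not run) the two pcs commands that fence one node through its BMC.
# Arguments: node name, stonith resource name, BMC IP address.
stonith_commands() {
    node=$1
    res=$2
    bmc_ip=$3
    printf 'pcs stonith create %s fence_ipmilan pcmk_host_list=%s ip=%s username=sap-ipmi password=<ipmi user password> lanplus=1 op monitor interval=30s\n' \
        "$res" "$node" "$bmc_ip"
    printf 'pcs constraint location %s avoids %s\n' "$res" "$node"
}

# Node names and BMC addresses as used in this guide.
stonith_commands hana01 Hana01Stonith 192.168.9.100
stonith_commands hana02 Hana02Stonith 192.168.9.101
```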

- Configure the srConnectionChanged hooks on both cluster nodes.

- Stop the database instances by running the following commands:

hana01:# su - th0adm

th0adm@hana01:# HDB stop

hana02:# su - th0adm

th0adm@hana02:# HDB stop

- Copy the files on both nodes by running the following commands:

[root]# mkdir -p /hana/shared/myHooks

[root]# cp /usr/share/SAPHanaSR/srHook/SAPHanaSR.py /hana/shared/myHooks

[root]# chown -R th0adm:sapsys /hana/shared/myHooks

- On both database nodes, edit the /hana/shared/TH0/global/hdb/custom/config/global.ini file to add the following sections, as shown in this sample configuration:

[ha_dr_provider_SAPHanaSR]

provider = SAPHanaSR

path = /hana/shared/myHooks

execution_order = 1

[trace]

ha_dr_saphanasr = info
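After editing global.ini on each node, a quick read-back can confirm that the hook section is present and points to the directory that holds SAPHanaSR.py. This is a minimal sketch that uses awk to read one key from one section. The function name ini_get is illustrative, and the parser assumes simple "key = value" lines as in the sample above.

```shell
#!/bin/sh
# Hypothetical helper: print the value of KEY inside [SECTION] of an
# INI file, or return non-zero if the section or key is missing.
# Usage: ini_get FILE SECTION KEY
ini_get() {
    awk -v section="$2" -v key="$3" '
        /^\[.*\]$/ { in_section = ($0 == "[" section "]"); next }
        in_section {
            split($0, kv, "=")
            name = kv[1]
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            if (name == key) {
                value = substr($0, index($0, "=") + 1)
                gsub(/^[ \t]+|[ \t]+$/, "", value)
                print value
                found = 1
                exit
            }
        }
        END { exit (found ? 0 : 1) }
    ' "$1"
}

# Example (commented out; needs the file from this guide):
# ini_get /hana/shared/TH0/global/hdb/custom/config/global.ini \
#     ha_dr_provider_SAPHanaSR path
```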

- Create the /etc/sudoers.d/20-saphana file with the following content:

Cmnd_Alias NODE1_SOK = /usr/sbin/crm_attribute -n hana_th0_site_srHook_Node1 -v SOK -t crm_config -s SAPHanaSR

Cmnd_Alias NODE1_SFAIL = /usr/sbin/crm_attribute -n hana_th0_site_srHook_Node1 -v SFAIL -t crm_config -s SAPHanaSR

Cmnd_Alias NODE2_SOK = /usr/sbin/crm_attribute -n hana_th0_site_srHook_Node2 -v SOK -t crm_config -s SAPHanaSR

Cmnd_Alias NODE2_SFAIL = /usr/sbin/crm_attribute -n hana_th0_site_srHook_Node2 -v SFAIL -t crm_config -s SAPHanaSR

th0adm ALL=(ALL) NOPASSWD: NODE1_SOK, NODE1_SFAIL, NODE2_SOK, NODE2_SFAIL

Defaults!NODE1_SOK, NODE1_SFAIL, NODE2_SOK, NODE2_SFAIL !requiretty

Note: Replace the th0 values with the lowercase SAP SID of your database. Replace Node1 and Node2 with the site names that you provided with the --name option in the replication setup.

- Set the permissions for the sudoers file that you created by running the following command on each node:

hana01:# chmod 0440 /etc/sudoers.d/20-saphana

hana02:# chmod 0440 /etc/sudoers.d/20-saphana
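The sudoers rules follow a regular pattern: one command alias per site and per replication state (SOK and SFAIL), all granted to the <sid>adm user. The following sketch generates the file content from the SID and the two site names, which can help avoid typing mistakes when you adapt the rules to other names. The function name saphana_sudoers is illustrative, and the visudo check in the commented example writes to a scratch path chosen for this example.

```shell
#!/bin/sh
# Hypothetical helper: print the sudoers rules for the srConnectionChanged
# hook. Arguments: lowercase SID, first site name, second site name.
saphana_sudoers() {
    sid=$1
    n=1
    for site in "$2" "$3"; do
        for state in SOK SFAIL; do
            printf 'Cmnd_Alias NODE%s_%s = /usr/sbin/crm_attribute -n hana_%s_site_srHook_%s -v %s -t crm_config -s SAPHanaSR\n' \
                "$n" "$state" "$sid" "$site" "$state"
        done
        n=$((n + 1))
    done
    printf '%sadm ALL=(ALL) NOPASSWD: NODE1_SOK, NODE1_SFAIL, NODE2_SOK, NODE2_SFAIL\n' "$sid"
    printf 'Defaults!NODE1_SOK, NODE1_SFAIL, NODE2_SOK, NODE2_SFAIL !requiretty\n'
}

# Example (commented out; run as root): write to a scratch file and let
# visudo check the syntax before copying it to /etc/sudoers.d/20-saphana.
# saphana_sudoers th0 Node1 Node2 > /tmp/20-saphana && visudo -cf /tmp/20-saphana
```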

- Start the databases again by running the following commands:

hana01:# su - th0adm

th0adm@hana01:# HDB start

hana02:# su - th0adm

th0adm@hana02:# HDB start

- Configure the cluster resources:

- To prevent the cluster from interfering with the database, set “maintenance mode” to “true” during the system configuration:

# pcs property set maintenance-mode=true

- Define global parameters for all cluster resources by running the following commands:

# pcs resource defaults update resource-stickiness=1000

# pcs resource defaults update migration-threshold=5000

- Add the configuration for the SAPHanaTopology resource by running the following command:

# pcs resource create SAPHanaTopology_TH0_00 SAPHanaTopology SID=TH0 InstanceNumber=00 \
  op start timeout=600 \
  op stop timeout=300 \
  op monitor interval=10 timeout=600 \
  clone clone-max=2 clone-node-max=1 interleave=true

- Add the resource configuration for the active-passive SAP HANA database by running the following command:

# pcs resource create SAPHana_TH0_00 SAPHana SID=TH0 InstanceNumber=00 \
  PREFER_SITE_TAKEOVER=true DUPLICATE_PRIMARY_TIMEOUT=7200 AUTOMATED_REGISTER=true \
  op start timeout=3600 \
  op stop timeout=3600 \
  op monitor interval=61 role="Slave" timeout=700 \
  op monitor interval=59 role="Master" timeout=700 \
  op promote timeout=3600 \
  op demote timeout=3600 \
  promotable notify=true clone-max=2 clone-node-max=1 interleave=true

- Add the virtual IP as a cluster resource by running the following command:

# pcs resource create vip_TH0_00 IPaddr2 ip="10.14.20.10"

- Add resource constraints to ensure that each cluster resource runs on the appropriate node, such as the virtual IP address on the node with the writable primary database:

# pcs constraint order SAPHanaTopology_TH0_00-clone then SAPHana_TH0_00-clone symmetrical=false

# pcs constraint colocation add vip_TH0_00 with master SAPHana_TH0_00-clone 2000

- Now that all the resources on the cluster are configured properly, disable maintenance mode:

# pcs property set maintenance-mode=false
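The maintenance-mode bracket around the resource configuration can be wrapped in a small function so that the cluster only leaves maintenance mode when the configuration step succeeds. This is a sketch of one possible approach, not the procedure from this guide. The function name in_maintenance_mode and the PCS override variable are assumptions introduced for the example, and configure_resources in the usage comment is a placeholder for your own script.

```shell
#!/bin/sh
# PCS is the command used to talk to the cluster. Overriding it
# (for example PCS=echo) turns the function into a dry run.
PCS=${PCS:-pcs}

# Hypothetical helper: enable maintenance mode, run the given command,
# and disable maintenance mode only if that command succeeded.
in_maintenance_mode() {
    $PCS property set maintenance-mode=true || return 1
    if "$@"; then
        $PCS property set maintenance-mode=false
    else
        rc=$?
        echo "configuration failed; cluster left in maintenance mode" >&2
        return "$rc"
    fi
}

# Example (commented out; needs a running cluster):
# in_maintenance_mode ./configure_resources.sh
```

Leaving maintenance mode enabled after a failure gives you time to inspect a partially configured cluster before Pacemaker starts managing the resources.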

- Verify the cluster status on hana01 by running the following command:

hana01:~ # crm_mon -r1

Status of pacemakerd: 'Pacemaker is running' (last updated 2023-12-15 10:42:58 -05:00)

Cluster Summary:

* Stack: corosync

* Current DC: hana01 (version 2.1.5-9.3.el8_8-a3f44794f94) - partition with quorum

* Last updated: Fri Dec 15 10:42:59 2023

* Last change: Fri Dec 15 10:42:54 2023 by root via crm_attribute on hana01

* 2 nodes configured

* 7 resource instances configured

Node List:

* Online: [ hana01 hana02 ]

Full List of Resources:

* Node0Stonith (stonith:fence_ipmilan): Started hana02

* Node1Stonith (stonith:fence_ipmilan): Started hana01

* Clone Set: SAPHanaTopology_TH0_00-clone [SAPHanaTopology_TH0_00]:

* Started: [ hana01 hana02 ]

* Clone Set: SAPHana_TH0_00-clone [SAPHana_TH0_00] (promotable):

* Masters: [ hana01 ]

* Slaves: [ hana02 ]

* vip_TH0_00 (ocf::heartbeat:IPaddr2): Started hana01
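In the output above, the node listed under Masters runs the primary database, and the virtual IP address should be started on that same node. The following sketch extracts the promoted node from crm_mon text so that the check can be scripted. The function name promoted_nodes is illustrative. Matching the label "Promoted:" in addition to "Masters:" is an assumption about the output of other Pacemaker versions.

```shell
#!/bin/sh
# Hypothetical helper: read `crm_mon -r1` output on stdin and print the
# node or nodes listed as promoted (label "Masters:" or "Promoted:").
promoted_nodes() {
    sed -nE 's/^[ *]*(Masters|Promoted): \[ (.*) \][[:space:]]*$/\2/p'
}

# Example (commented out; needs a running cluster):
# [ "$(crm_mon -r1 | promoted_nodes)" = "hana01" ] && echo "primary on hana01"
```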

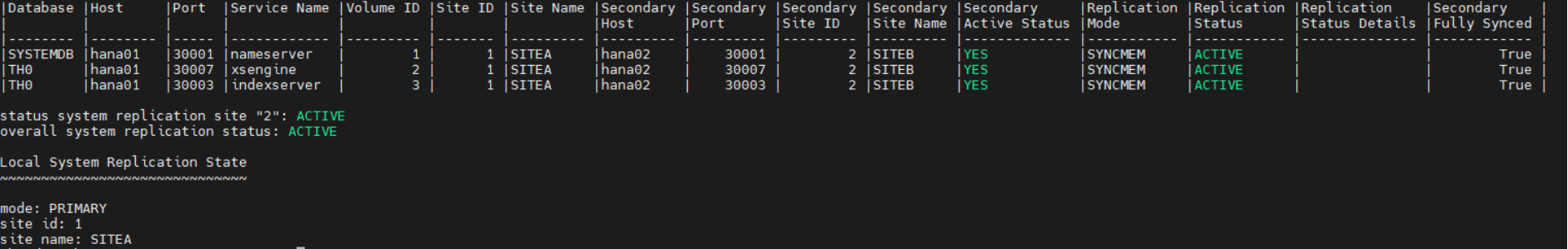

- Verify the status of the replication by running the following commands:

trhana01:# su – th0adm

th0adm@hana01:# cdpy

th0adm@hana01:# python systemReplicationStatus.py

The following figure shows the command output:

Figure 6. Replication status command output