
Overview


This section describes the concepts involved in building the solution. The solution stack includes the following hardware and software layers:

- Dell APEX Cloud Platform for Red Hat OpenShift

- Dell PowerFlex

- Dell ObjectScale

- Dell PowerSwitch

- Red Hat OpenShift Container Platform

- Red Hat OpenShift AI

- Digital assistant components (Llama 2, LangChain, an embedding model, a vector store, Gradio, and vLLM)

Dell APEX Cloud Platform

Dell APEX Cloud Platforms deliver automation and integration across your choice of cloud ecosystems, helping organizations innovate in multicloud environments.

Dell APEX Cloud Platforms are a portfolio of fully integrated, turnkey systems that combine Dell infrastructure, software, and cloud-operating stacks to deliver consistent multicloud operations. This portfolio extends cloud operating models to on-premises and edge environments.

Dell APEX Cloud Platform provides an on-premises, private cloud environment with consistent full-stack integration for the most widely deployed cloud ecosystem software, including Microsoft Azure, Red Hat OpenShift, and VMware vSphere.

For more information, see the Dell APEX Cloud Platforms webpage.

Dell APEX Cloud Platform for Red Hat OpenShift

Dell APEX Cloud Platform for Red Hat OpenShift is designed collaboratively with Red Hat to optimize and extend OpenShift deployments on-premises with a seamless operational experience.

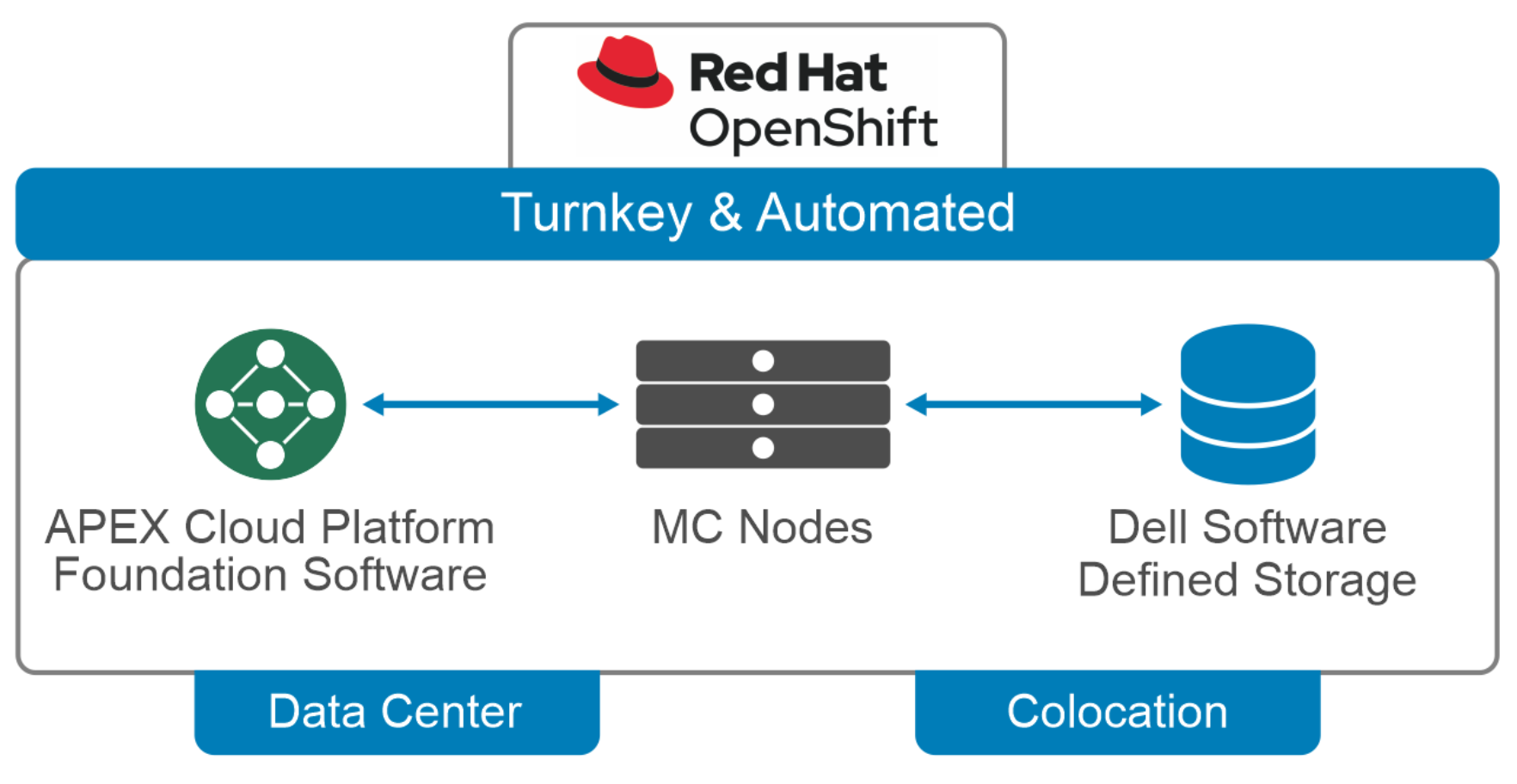

Figure 1. Dell APEX Cloud Platform for Red Hat OpenShift high-level physical architecture

This turnkey platform provides:

- Deep integrations and intelligent automation between the layers of the Dell and OpenShift technology stacks, which accelerate time-to-value and eliminate the complexity of managing the stack with different tools in disparate portals.

- Simplified, integrated management using the OpenShift Web Console.

A bare metal architecture delivers the performance, predictability, and linear scalability needed to meet even the most stringent SLAs.

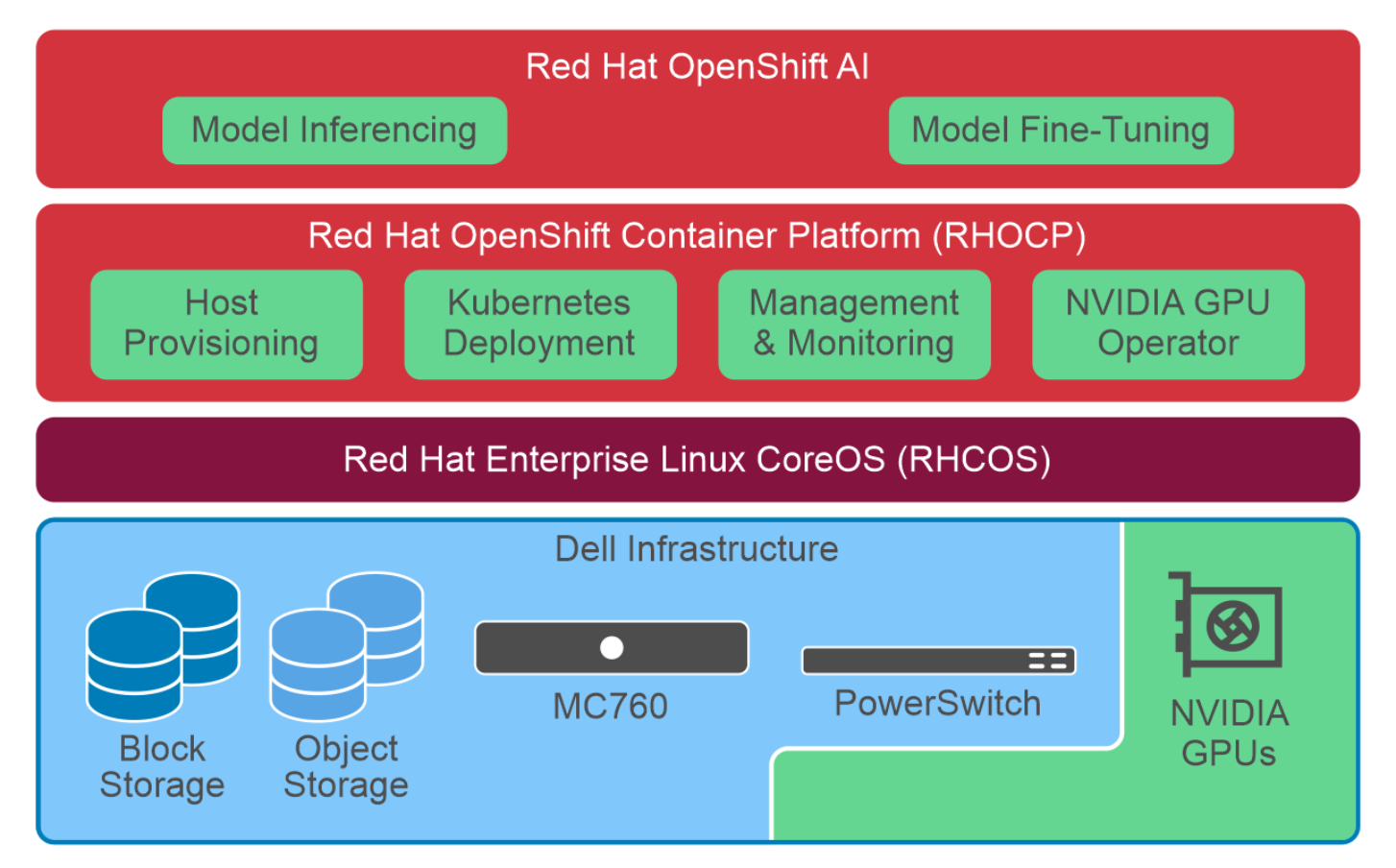

Figure 2. High-level overview of OpenShift AI on Dell APEX Cloud Platform for Red Hat OpenShift logical architecture

The Dell APEX Cloud Platform for Red Hat OpenShift introduces a new level of integration for running OpenShift on bare metal servers. Managing bare metal infrastructure separately from the cluster adds operational complexity, and the Dell APEX Cloud Platform Foundation Software mitigates this complexity by integrating infrastructure management into the OpenShift Web Console. This integration enables administrators to update the hardware using the same workflow that updates the OpenShift software. It also enables OpenShift administrators to manage the infrastructure with the same tools they use to control the cluster and the applications that run on it.

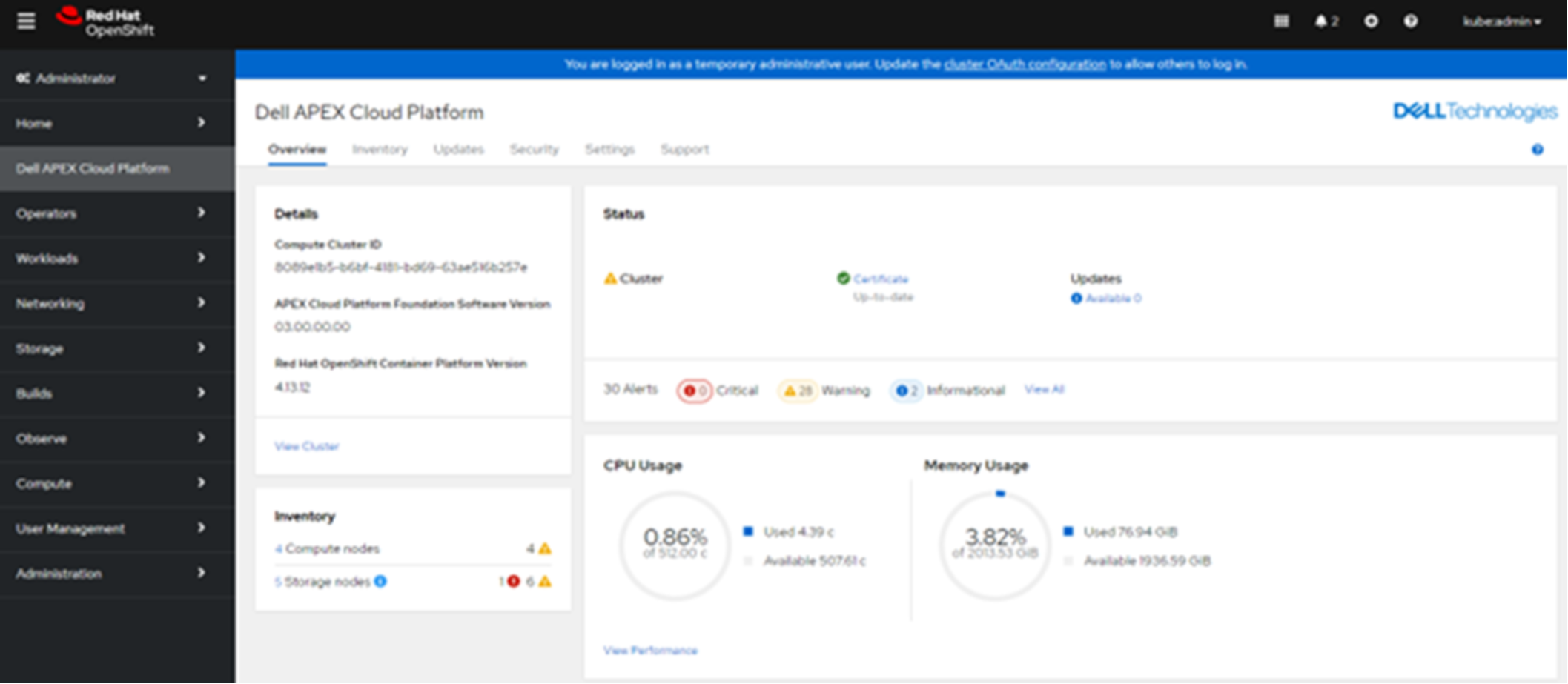

Figure 3. Dell APEX Cloud Platform Foundation Software integration in OpenShift Web Console

Note: The Federal Information Processing Standards (FIPS) are a set of standards that define how data is handled and processed by encryption algorithms on endpoints and across communication channels. FIPS mode is enabled by default in Dell APEX Cloud Platform for Red Hat OpenShift to provide a secure environment. Consider this default setting when developing your digital assistant applications, because some Python libraries may not be FIPS-compliant.
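One common example of a FIPS compatibility issue is a library that calls `hashlib.md5()` for a non-security purpose, such as building a cache key. The following minimal sketch, assuming a Linux container and Python 3.9 or later, checks the kernel FIPS flag at `/proc/sys/crypto/fips_enabled` and passes `usedforsecurity=False` to declare the hash as non-security use. The function names are hypothetical, and whether this flag is sufficient depends on how Python and OpenSSL are built in your container image.

```python
import hashlib
from pathlib import Path

# Linux exposes the kernel FIPS-mode setting here ("1" means enabled).
FIPS_FLAG = Path("/proc/sys/crypto/fips_enabled")


def fips_mode_enabled() -> bool:
    """Return True if the kernel reports FIPS mode; False if the flag is 0 or absent."""
    try:
        return FIPS_FLAG.read_text().strip() == "1"
    except OSError:
        return False


def cache_key(text: str) -> str:
    """Hash text for a non-security purpose (hypothetical cache-key helper).

    usedforsecurity=False (Python 3.9+) declares that MD5 is not used for
    security; many FIPS-enabled Python builds that block MD5 for security
    use still accept it with this flag.
    """
    return hashlib.md5(text.encode("utf-8"), usedforsecurity=False).hexdigest()


if __name__ == "__main__":
    print("FIPS mode enabled:", fips_mode_enabled())
    print("cache key:", cache_key("abc"))
```

When evaluating a third-party library, search its code for direct `hashlib.md5` or `hashlib.sha1` calls that omit this flag; those calls are candidates for failures on a FIPS-enabled cluster.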

Dell PowerFlex

Dell PowerFlex is a dynamic, adaptable software-defined infrastructure platform designed to modernize IT environments, increase business agility, and handle the complexity of modern workloads. Its high performance and extensive scalability make PowerFlex well suited for consolidating diverse workloads, including demanding operational scenarios.

A notable aspect of PowerFlex is its performance. Its scale-out architecture, resource optimization capabilities, and low latency help enterprises meet stringent operational demands with consistent performance.

For more information, see the Dell PowerFlex webpage.

Dell ObjectScale

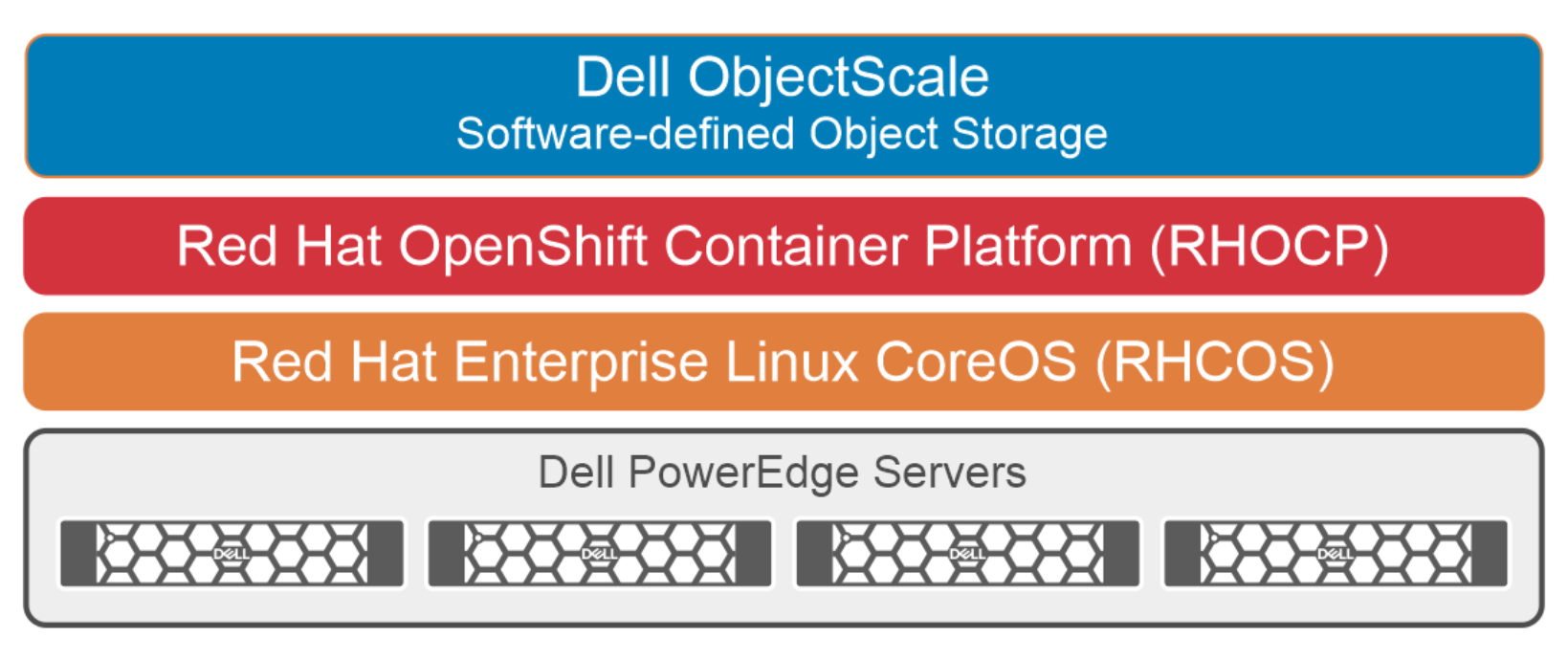

Dell ObjectScale provides high-performance containerized object storage built for demanding applications and workloads including Generative AI, analytics, and more. It is a software-defined, containerized object storage that delivers enterprise-class and high-performance in a Kubernetes-native environment. It empowers organizations to put data closer to the applications they support, reduce latency, and improve the user experience.Figure 4. Dell ObjectScale Storage Cluster

Dell ObjectScale uses various erasure coding (EC) schemes for data protection. EC is a data protection method in which data is broken into fragments, expanded, and encoded with redundant data pieces, and the pieces are stored across different locations or storage media. The objective of EC is to reconstruct data that becomes corrupted or lost at some point in the storage process, using information about that data that is stored elsewhere in the ObjectScale instance.
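ObjectScale's specific EC schemes are not described here. As a simplified illustration of the principle only, the following sketch uses a single XOR parity fragment (the idea behind RAID 5), which can rebuild any one lost fragment. Production erasure codes, such as Reed-Solomon codes, add several parity fragments so that multiple simultaneous losses can be tolerated. All function names are hypothetical.

```python
from __future__ import annotations

from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length fragments."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list[bytes]:
    """Split data into k equal fragments (zero-padded) plus one XOR parity fragment."""
    size = max(1, -(-len(data) // k))  # ceiling division, at least one byte
    padded = data.ljust(size * k, b"\0")
    fragments = [padded[i * size:(i + 1) * size] for i in range(k)]
    return fragments + [reduce(xor_bytes, fragments)]


def reconstruct(fragments: list[Optional[bytes]]) -> list[bytes]:
    """Rebuild a single missing fragment (None) by XOR-ing all surviving fragments.

    Because the parity is the XOR of the data fragments, the XOR of all k + 1
    fragments is zero, so any one missing fragment equals the XOR of the rest.
    """
    missing = [i for i, f in enumerate(fragments) if f is None]
    if len(missing) > 1:
        raise ValueError("a single-parity code can recover only one lost fragment")
    restored = list(fragments)
    if missing:
        restored[missing[0]] = reduce(xor_bytes, [f for f in fragments if f is not None])
    return restored


def decode(fragments: list[bytes], k: int, length: int) -> bytes:
    """Join the k data fragments and strip the padding."""
    return b"".join(fragments[:k])[:length]


if __name__ == "__main__":
    message = b"object data stored across nodes"
    stored = encode(message, k=4)  # 4 data fragments + 1 parity fragment
    stored[2] = None               # simulate losing the disk that held fragment 2
    print(decode(reconstruct(stored), k=4, length=len(message)))
```

Each fragment would be written to a different disk or node, which is why losing one location does not lose the object.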

Dell PowerSwitch

Dell Technologies is at the forefront of GenAI networking innovation, offering open and extensible solutions that meet the requirements of GenAI environments today and tomorrow, across the edge, core, and cloud.

Dell PowerSwitch is a portfolio of dense, high-capacity, reliable spine, leaf, and top-of-rack switches designed to meet the demands of modern AI fabrics and data center networks. PowerSwitch switches deliver nonblocking network performance from 10 GbE to 800 GbE, which is critical for GenAI applications. High-bandwidth switching and features such as Advanced Routing, RoCEv2, Enhanced Hashing, and Priority Flow Control improve fabric performance and congestion monitoring, allowing customers to deploy AI clusters with low latency and high throughput.

Red Hat OpenShift

Red Hat OpenShift Container Platform is a consistent hybrid cloud foundation for containerized applications, powered by Kubernetes. Developers and DevOps engineers using Red Hat OpenShift Container Platform can quickly build, modernize, deploy, run, and manage applications anywhere, securely, and at scale.

Open-source technologies power Red Hat OpenShift Container Platform, which offers flexible deployment options across physical, virtual, private cloud, public cloud, and edge environments. A Red Hat OpenShift Container Platform cluster consists of one or more control-plane nodes and a set of worker nodes.
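To see how control-plane and worker nodes map onto a running cluster, you can group nodes by their `node-role.kubernetes.io/<role>` labels. The following minimal sketch, assuming the JSON document produced by `oc get nodes -o json`, does this with the Python standard library; the function name is hypothetical.

```python
from __future__ import annotations

import json

ROLE_PREFIX = "node-role.kubernetes.io/"


def nodes_by_role(nodes_json: str) -> dict[str, list[str]]:
    """Group node names by their node-role label, from `oc get nodes -o json` output.

    A node can carry several role labels, so it may appear under more than one role.
    """
    roles: dict[str, list[str]] = {}
    for item in json.loads(nodes_json)["items"]:
        metadata = item["metadata"]
        for label in metadata.get("labels", {}):
            if label.startswith(ROLE_PREFIX):
                role = label[len(ROLE_PREFIX):]
                roles.setdefault(role, []).append(metadata["name"])
    return roles
```

For example, pass it the output of `subprocess.run(["oc", "get", "nodes", "-o", "json"], capture_output=True, text=True, check=True).stdout` from a session that is logged in to the cluster. In compact clusters, control-plane nodes may also carry the worker role.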

For more information about Red Hat OpenShift Container Platform, see the Red Hat OpenShift web page.

Red Hat OpenShift AI

Deploying AI applications can be complex due to a lack of integration among rapidly evolving tools. Popular cloud platforms offer attractive tools and scalability but often lock users in, limiting architectural and deployment options.

Red Hat OpenShift AI, formerly known as Red Hat OpenShift Data Science (RHODS), is a platform that brings AI capabilities to developers, data engineers, and data scientists in Red Hat OpenShift. It is installed through a Kubernetes operator and provides a development environment called a workbench, which automatically manages storage and integrates different tools. Users can rapidly develop, train, test, and deploy machine learning models on-premises or in a public cloud environment.

Red Hat OpenShift AI allows data scientists and developers to focus on data modeling and application development without waiting for infrastructure provisioning. ML models developed with Red Hat OpenShift AI are portable and can be deployed in production in containers, on-premises, at the edge, or in the public cloud.

Red Hat OpenShift Pipelines is a cloud-native continuous integration and continuous delivery (CI/CD) solution based on Kubernetes resources. It uses Tekton building blocks to automate deployments across multiple platforms by abstracting away the underlying implementation details. Data science pipelines, a component of OpenShift AI, enhance data science projects with portable ML workflows. They standardize and automate machine learning workflows so that teams can develop and deploy data science models.

For more information about Red Hat OpenShift AI, see the Red Hat OpenShift AI web page.

Llama 2

Llama 2 is an open-access, pretrained LLM from Meta that is freely available for research and commercial use. Llama 2 was trained on 40 percent more data than its predecessor, Llama 1, and has twice the context length (4096 compared to 2048 tokens), so it can take more context into account when interpreting a request and provide users with more relevant and accurate information. Llama 2 can be used to build digital assistants for consumer and enterprise use, and for language translation, research, code generation, and other AI-powered tools.
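In a question-answering assistant, the context window must hold the prompt template, the retrieved knowledge base chunks, and the tokens reserved for the model's answer. The following sketch shows that arithmetic under stated assumptions: the chunk token counts are supplied by the caller (in practice they come from the model's tokenizer), chunks arrive ordered by relevance, and the function name and parameters are hypothetical.

```python
from __future__ import annotations

CONTEXT_LENGTH = 4096  # Llama 2 context window, in tokens (Llama 1: 2048)


def select_chunks(chunk_token_counts: list[int], prompt_overhead: int,
                  max_new_tokens: int, context_length: int = CONTEXT_LENGTH) -> list[int]:
    """Return indices of retrieved chunks (ordered most relevant first) that fit.

    The prompt template, the kept chunks, and the tokens reserved for the
    model's answer must all fit inside the context window together.
    """
    budget = context_length - prompt_overhead - max_new_tokens
    if budget < 0:
        raise ValueError("prompt overhead plus max_new_tokens exceeds the context window")
    kept: list[int] = []
    used = 0
    for index, tokens in enumerate(chunk_token_counts):
        if used + tokens > budget:
            break  # stop at the first chunk that does not fit, preserving relevance order
        kept.append(index)
        used += tokens
    return kept
```

With hypothetical chunk sizes of 1000, 1200, 900, and 800 tokens, a 200-token template, and 512 tokens reserved for the answer, a 4096-token window keeps the first three chunks (3100 tokens), whereas a 2048-token window keeps only the first.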

This solution uses Llama-2-13b. With roughly 13 billion weights (the learned parameters that encode a language model's knowledge), compared with roughly 7 billion for Llama-2-7b, Llama-2-13b is more capable and generally provides better responses to user queries.

For more information about Llama 2, see the Meta web page.

LangChain

LangChain is an open-source framework for developing LLM-powered applications. It simplifies building these applications by providing a standard interface that makes it easier to interact with different language models, including Llama 2.

LangChain's plug-and-play features allow users to swap data sources, LLMs, and UI tools without rewriting code, and to build powerful NLP applications with minimal effort.

LangChain provides tools and APIs to connect language models to other data sources, interact with their environment, and build complex applications. Applications built with LangChain require a language model, such as Llama 2, supplied by the developer. For example, LangChain can be used to build a digital assistant that answers questions over domain-specific information.
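LangChain packages the retrieve-then-generate pattern behind its own classes, such as text splitters (for example, `RecursiveCharacterTextSplitter`), prompt templates, and retrievers. To show what those abstractions do without depending on a particular LangChain version, the following plain-Python sketch implements the same flow: split documents into overlapping chunks, retrieve relevant chunks, fill a prompt template, and call an interchangeable LLM. This is not the LangChain API; the template and all names are hypothetical, and the LLM is any callable, such as a client for a Llama 2 endpoint.

```python
from __future__ import annotations

from typing import Callable, Sequence

# Hypothetical prompt template for grounded question answering.
PROMPT_TEMPLATE = (
    "Answer the question using only the context below. "
    "If the answer is not in the context, say that you don't know.\n\n"
    "Context:\n{context}\n\nQuestion: {question}\nAnswer:"
)

Retriever = Callable[[str], Sequence[str]]  # question -> relevant chunks
LLM = Callable[[str], str]                  # prompt -> completion


def chunk_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split a document into overlapping character windows for embedding."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    return [text[i:i + chunk_size] for i in range(0, max(len(text) - overlap, 1), step)]


def answer_question(question: str, retrieve: Retriever, llm: LLM) -> str:
    """Retrieve context, fill the prompt template, and call whichever LLM is plugged in."""
    context = "\n---\n".join(retrieve(question))
    return llm(PROMPT_TEMPLATE.format(context=context, question=question))
```

Because `retrieve` and `llm` are plain callables, swapping the vector store or the model does not change `answer_question`, which is the same plug-and-play property LangChain provides through its standard interfaces.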

For more information about LangChain, see the LangChain web page.

Embedding model

Embedding models are neural networks trained to represent words, phrases, or sentences as dense vectors in a high-dimensional space. This solution uses all-mpnet-base-v2, a sentence-transformers model, to embed the knowledge base into the vector store. Given input text (in this solution, knowledge base document chunks), the model outputs vectors that capture its semantic information; these vectors are later used to perform a similarity search against the user query.
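The similarity search step can be sketched in plain Python with cosine similarity. In the solution, the vectors would come from all-mpnet-base-v2 (for example, through the sentence-transformers call `SentenceTransformer("all-mpnet-base-v2").encode(...)`) and the search would run inside the vector store. Here, toy three-dimensional vectors stand in for the real 768-dimensional embeddings, and the function names are hypothetical.

```python
from __future__ import annotations

import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means the same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], chunks: list[tuple[str, Sequence[float]]], k: int = 3) -> list[str]:
    """Return the texts of the k chunks whose embeddings are most similar to the query."""
    ranked = sorted(chunks, key=lambda c: cosine_similarity(query, c[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


if __name__ == "__main__":
    # Toy 3-dimensional vectors; all-mpnet-base-v2 produces 768-dimensional ones.
    knowledge_base = [
        ("How to reset a password", [1.0, 0.0, 0.1]),
        ("Installing GPU drivers", [0.0, 1.0, 0.0]),
        ("Troubleshooting account login", [0.9, 0.1, 0.2]),
    ]
    print(top_k([1.0, 0.0, 0.0], knowledge_base, k=2))
```

This brute-force scan compares the query with every chunk; vector stores such as PGVector and Redis add indexes so that the same search scales to large knowledge bases.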

For more information about sentence transformers, see the Hugging Face sentence transformers web page.

Vector store

Vector stores are databases designed to store and retrieve vector embeddings efficiently and to perform semantic searches.

PGVector: PGVector is a PostgreSQL extension for working with vectors in high-dimensional space. It introduces a dedicated data type, operators, and functions that enable efficient storage, manipulation, and analysis of vector data directly within a PostgreSQL database.
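The data type and operators mentioned above can be seen in a short set of SQL statements. The following sketch keeps them as Python strings with a helper that formats an embedding as PGVector's text input. The table name `kb_chunks`, the index name, and the helper are hypothetical, the psycopg-style `%s` placeholders assume a psycopg driver, and the HNSW index requires PGVector 0.5.0 or later.

```python
VECTOR_DIM = 768  # output size of all-mpnet-base-v2

# One-time setup: enable the extension, create a table with a vector column,
# and add an approximate nearest-neighbor index for cosine distance.
SETUP_SQL = f"""
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS kb_chunks (
    id        bigserial PRIMARY KEY,
    content   text NOT NULL,
    embedding vector({VECTOR_DIM})
);
CREATE INDEX IF NOT EXISTS kb_chunks_embedding_idx
    ON kb_chunks USING hnsw (embedding vector_cosine_ops);
"""

# Nearest-neighbor query. <=> is the cosine-distance operator
# (<-> is Euclidean distance and <#> is negative inner product).
SEARCH_SQL = """
SELECT id, content
FROM kb_chunks
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""


def to_pgvector_literal(vec) -> str:
    """Format a sequence of floats as PGVector text input, for example '[0.5,1.0]'."""
    return "[" + ",".join(repr(float(x)) for x in vec) + "]"
```

With a psycopg cursor, a query would then look like `cur.execute(SEARCH_SQL, (to_pgvector_literal(query_embedding), 5))`, returning the five chunks closest to the query embedding.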

For more information about the PGVector vector database, see the PGVector webpage.

Redis: Redis is a popular in-memory data structure store that can store embeddings together with metadata for later use by LLMs. Because it keeps data in memory, Redis is a good choice for applications that must store and search vector data quickly, and it can efficiently query and retrieve relevant information from large amounts of data.
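In Redis, each knowledge base chunk can be stored as a hash whose embedding field holds the vector as a binary FLOAT32 blob, with metadata in other fields. The following sketch builds that blob and the `FT.CREATE` and `FT.SEARCH` arguments for an HNSW vector index and a k-nearest-neighbor query. It assumes a Redis deployment with the search capability (for example, Redis Stack) and little-endian hosts (the native layout on x86 and Arm); the index name, key prefix, field names, and helpers are hypothetical.

```python
import struct

VECTOR_DIM = 768  # output size of all-mpnet-base-v2


def vector_to_bytes(vec) -> bytes:
    """Pack floats as little-endian FLOAT32, the blob stored in the hash's vector field."""
    return struct.pack(f"<{len(vec)}f", *vec)


def bytes_to_vector(blob: bytes) -> list:
    """Inverse of vector_to_bytes."""
    return list(struct.unpack(f"<{len(blob) // 4}f", blob))


def create_index_args(index: str = "kb_idx", prefix: str = "doc:", dim: int = VECTOR_DIM) -> list:
    """FT.CREATE arguments: a text field for chunk content, a tag field for the
    source document, and an HNSW vector field using cosine distance."""
    return ["FT.CREATE", index, "ON", "HASH", "PREFIX", "1", prefix,
            "SCHEMA", "content", "TEXT", "source", "TAG",
            "embedding", "VECTOR", "HNSW", "6",  # 6 = number of attribute tokens that follow
            "TYPE", "FLOAT32", "DIM", str(dim), "DISTANCE_METRIC", "COSINE"]


def knn_query_args(query_vec, k: int = 3, index: str = "kb_idx") -> list:
    """FT.SEARCH arguments for a k-nearest-neighbor query over the embedding field."""
    return ["FT.SEARCH", index, f"*=>[KNN {k} @embedding $vec AS score]",
            "RETURN", "2", "content", "score",
            "SORTBY", "score",
            "PARAMS", "2", "vec", vector_to_bytes(query_vec),
            "DIALECT", "2"]
```

With the redis-py client, these lists can be sent with `r.execute_command(*create_index_args())`, and chunks can be stored with `r.hset("doc:1", mapping={"content": text, "source": "guide", "embedding": vector_to_bytes(vec)})`.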

For more information about the Redis vector database, see the Redis webpage.

Gradio

Gradio is an open-source Python library for fast development and prototyping of ML web applications with user interfaces. It provides a simple, intuitive API that works with Python functions and libraries, and it offers options to customize elements of the user interface (UI).

Gradio is a fast way to prototype an ML model with a friendly web interface. It can also visualize the intermediate steps, or thought process, of a language model's decision-making, which makes Gradio useful for analyzing and debugging how language models reach their decisions.

For more information about Gradio, see the Gradio webpage.

vLLM

LLMs can be surprisingly slow, even on expensive hardware. The vLLM serving runtime is a fast, easy-to-use LLM inference engine that helps overcome this challenge. It provides a high-throughput serving architecture with efficient management of attention key-value memory, enabled by PagedAttention. vLLM natively supports Llama 2 models and does not require their weights to be converted to a specific format. PagedAttention is an attention algorithm that allows attention keys and values to be stored in non-contiguous, paged memory.
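The bookkeeping behind PagedAttention resembles a page table in an operating system: each sequence's key-value cache is split into fixed-size blocks, and a sequence receives a new physical block only when its last block is full. Blocks for one sequence therefore need not be contiguous, and little memory is reserved in advance. This toy sketch tracks only block assignment, not the attention computation or the GPU memory itself; the class and method names and the default block size are assumptions for illustration, not vLLM's internal API.

```python
from __future__ import annotations


class PagedKVCache:
    """Toy block manager: maps each sequence's logical token positions to
    fixed-size physical blocks that need not be contiguous."""

    def __init__(self, num_blocks: int, block_size: int = 16):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))    # pool of physical block IDs
        self.block_tables: dict[int, list[int]] = {}  # seq_id -> physical block IDs
        self.lengths: dict[int, int] = {}             # seq_id -> tokens stored

    def append_token(self, seq_id: int) -> tuple[int, int]:
        """Reserve a slot for one more token; return (physical_block, offset)."""
        table = self.block_tables.setdefault(seq_id, [])
        n = self.lengths.get(seq_id, 0)
        if n % self.block_size == 0:  # no block yet, or the last block is full
            if not self.free_blocks:
                raise MemoryError("KV cache exhausted")
            table.append(self.free_blocks.pop())
        self.lengths[seq_id] = n + 1
        return table[n // self.block_size], n % self.block_size

    def free(self, seq_id: int) -> None:
        """Return a finished sequence's blocks to the pool for other requests."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


if __name__ == "__main__":
    cache = PagedKVCache(num_blocks=8, block_size=4)
    for _ in range(6):  # two requests decoding in lockstep
        cache.append_token(seq_id=0)
        cache.append_token(seq_id=1)
    print(cache.block_tables)  # {0: [7, 5], 1: [6, 4]}
```

Because memory is allocated one block at a time, waste is limited to the unfilled part of each sequence's last block, which lets the server batch more concurrent requests than if every sequence reserved space for its maximum length up front.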

For more information about vLLM, see the vLLM webpage.