Dell Technologies Open Networking is designed around a highly scalable, cloud-ready data center network fabric. It uses the Dell Enterprise SONiC network operating system. IT organizations can leverage SONiC's first commercial offering for innovation, automation, and reliability, with enterprise enhancements and global support for cloud, data center, and edge fabrics.

Dell Technologies has long been a pioneer in open networking, and its leadership in the development and deployment of SONiC demonstrates its continued commitment to community innovation, collaboration, and contribution. Dell's approach to SONiC is built on the principles of openness and interoperability. This empowers enterprises to avoid vendor lock-in, enabling them to customize and optimize their networks to meet specific needs.

Enterprise SONiC Distribution by Dell Technologies offers several features designed to enhance the performance, efficiency, and connectivity of AI fabrics, including Dynamic Load Balancing with Adaptive Routing and Enhanced User-Defined Hashing. Dynamic Load Balancing improves the use of links in an AI fabric, while Adaptive Routing adjusts forwarding behavior to make better use of network resources. Together, these capabilities allow AI data flows to use all available paths to their destination simultaneously.
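The difference between static hashing and load-aware path selection can be illustrated with a small simulation. This is only a conceptual sketch, not how Enterprise SONiC implements Dynamic Load Balancing: the function names, the CRC32 hash, and the "least-loaded link" policy are all assumptions chosen for illustration.

```python
import zlib

def static_hash_path(flow, num_links):
    """Classic ECMP-style choice: hash the flow tuple, ignore link load.
    (CRC32 is an illustrative stand-in for a switch hash function.)"""
    key = "|".join(str(field) for field in flow).encode()
    return zlib.crc32(key) % num_links

def least_loaded_path(link_load):
    """Load-aware choice: pick the link carrying the fewest bytes so far.
    (Hypothetical policy; ties go to the lowest-numbered link.)"""
    return min(range(len(link_load)), key=lambda i: link_load[i])

def place_flows(flows, num_links, dynamic):
    """Assign each (flow, size) pair to a link and return per-link byte counts."""
    link_load = [0] * num_links
    for flow, size in flows:
        if dynamic:
            link = least_loaded_path(link_load)
        else:
            link = static_hash_path(flow, num_links)
        link_load[link] += size
    return link_load

# Eight equal-sized elephant flows between hypothetical GPU nodes.
# 4791 is the RoCEv2 UDP destination port; the addresses are made up.
flows = [(("10.0.0.%d" % i, "10.0.1.%d" % i, 4791), 100) for i in range(8)]

static_load = place_flows(flows, num_links=4, dynamic=False)
dynamic_load = place_flows(flows, num_links=4, dynamic=True)
print("static hash :", static_load)
print("load-aware  :", dynamic_load)
```

With a handful of large flows, a static hash can place several of them on the same link while others sit idle, whereas the load-aware policy spreads these equal-sized flows evenly. Real switches make this decision in hardware using measured link or queue state rather than a running byte count.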

Deploying AI technologies presents challenges, such as technical complexities, shortages of skilled professionals, and the limitations of proprietary technologies like InfiniBand. These limitations complicate integration, leading to high costs, long evaluation periods, and vendor lock-in.

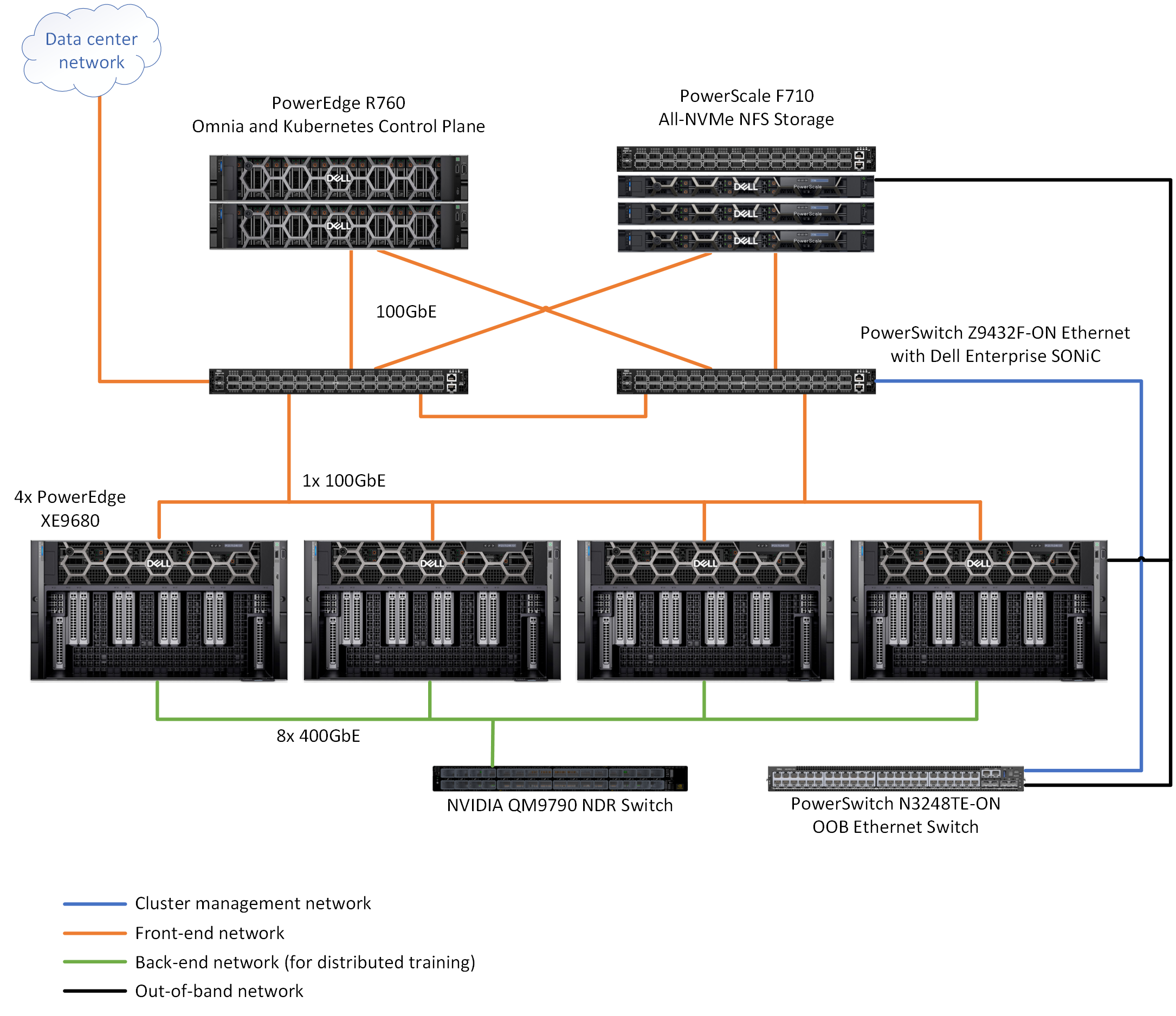

As AI models scale from billions to trillions of parameters, the need for substantial data transfer becomes critical, and any network-induced delay can affect performance. Therefore, rapid bulk data transfer with elephant flows from source to destination is vital for job completion. Although per-packet latency is important, the total time required to complete an entire processing step is even more crucial. These large AI clusters also require coordinated congestion management to avoid packet loss and ensure efficient GPU utilization, along with synchronized management and monitoring to optimize both compute and network resources. This design incorporates three physical networks:

- Frontend network for management, storage, and client/server traffic, powered by two PowerSwitch Z9432F-ON switches

- Backend network for internode GPU communication powered by NVIDIA Quantum-2 QM9790

- Out-of-band network for management traffic powered by PowerSwitch N3248TE-ON

The PowerSwitch Z-series switches are high-performance, open, and scalable data center switches used for spine, core, and aggregation applications. The PowerSwitch Z9432F-ON is a high-density 400 GbE fabric switch, offering up to 32 ports of 400 GbE or up to 128 ports of 100 GbE with breakout cables. It provides a broad range of functionality to meet the growing demands of today's data center environments. The PowerSwitch Z9664F-ON offers high density in a 2U design, with either 64 ports of 400 GbE in a QSFP56-DD form factor or up to 256 ports of 100 GbE with breakout cables. It can also function as a 10, 25, 40, 50, 100, or 200 GbE switch with breakout cables, supporting up to 256 ports.
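The breakout port counts quoted above follow from simple arithmetic: each 400 GbE port splits into four 100 GbE ports. The sketch below only reproduces that arithmetic. The helper name `breakout_ports` is hypothetical, and the breakout modes a given switch actually supports depend on its hardware, optics, and software, so consult the product documentation before planning a deployment.

```python
def breakout_ports(physical_ports, port_speed_gbe, breakout_speed_gbe):
    """Return the number of logical ports after breaking out every physical port.

    Illustrative arithmetic only: it assumes every port is broken out evenly
    and does not check which breakout modes a real switch supports.
    """
    if port_speed_gbe % breakout_speed_gbe != 0:
        raise ValueError("breakout speed must evenly divide the port speed")
    return physical_ports * (port_speed_gbe // breakout_speed_gbe)

# Z9432F-ON: 32 x 400 GbE broken out to 100 GbE
z9432_100g = breakout_ports(32, 400, 100)
# Z9664F-ON: 64 x 400 GbE broken out to 100 GbE
z9664_100g = breakout_ports(64, 400, 100)

print(z9432_100g)  # 128
print(z9664_100g)  # 256
```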

The following figure shows the network architecture, including the network connectivity for compute servers: