MLPerf is a benchmark suite for evaluating the training and inference performance of on-premises and cloud platforms. It serves as an independent, objective yardstick for machine learning software frameworks, hardware platforms, and cloud platforms. Developed and continuously evolved by a consortium of AI researchers and developers, MLPerf gives developers a tool for evaluating hardware architectures and the wide, fast-changing range of machine learning frameworks.

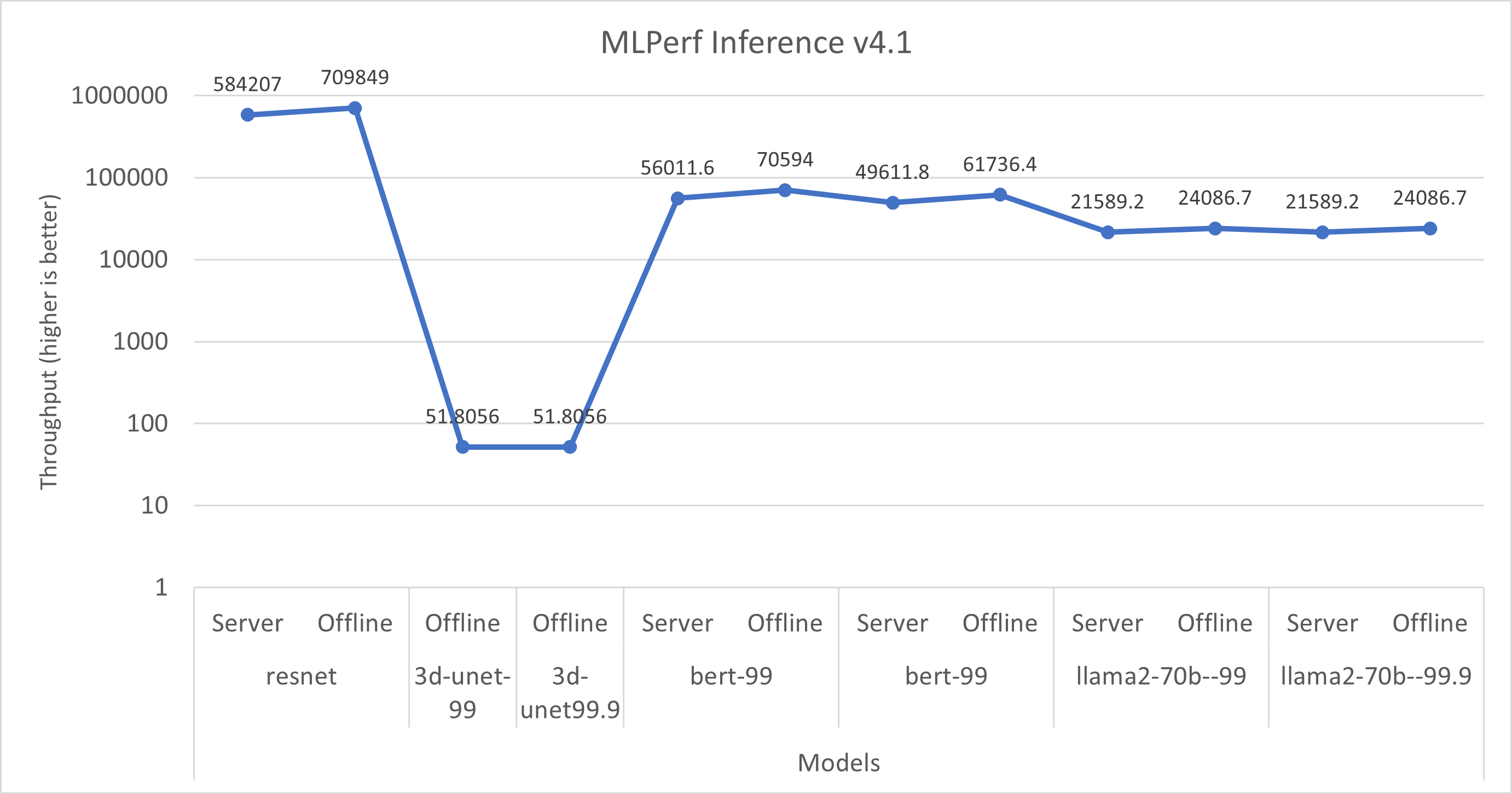

MLPerf Inference benchmarks measure the speed at which a trained neural network can perform inference tasks.

Code can be found on GitHub.
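To make "speed of inference" concrete, the following minimal Python sketch times a model on a stream of single-sample queries and reports throughput and latency percentiles. It is an illustration only, not the MLPerf Inference harness: the `measure_inference` helper, the `toy_model` stand-in, and the warm-up count are all hypothetical names and choices made for this example.

```python
import statistics
import time


def measure_inference(predict, samples, warmup=3):
    """Time `predict` on each sample; return throughput and latency percentiles.

    Hypothetical helper for illustration; not part of MLPerf.
    """
    # Warm-up calls are excluded from the measurement.
    for sample in samples[:warmup]:
        predict(sample)

    latencies = []
    start = time.perf_counter()
    for sample in samples:
        t0 = time.perf_counter()
        predict(sample)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start

    latencies.sort()
    # Nearest-rank style index for the 99th percentile.
    p99_index = min(len(latencies) - 1, int(0.99 * len(latencies)))
    return {
        "queries": len(latencies),
        "throughput_qps": len(latencies) / elapsed,
        "p50_latency_s": statistics.median(latencies),
        "p99_latency_s": latencies[p99_index],
    }


def toy_model(x):
    """Stand-in for a trained network (assumption): a sum of squares."""
    return sum(v * v for v in x)


stats = measure_inference(toy_model, [[float(i)] * 256 for i in range(200)])
print(stats)
```

The numbers printed depend entirely on the machine running the sketch, which is the reason a shared benchmark with fixed models, datasets, and rules is needed to compare platforms fairly.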

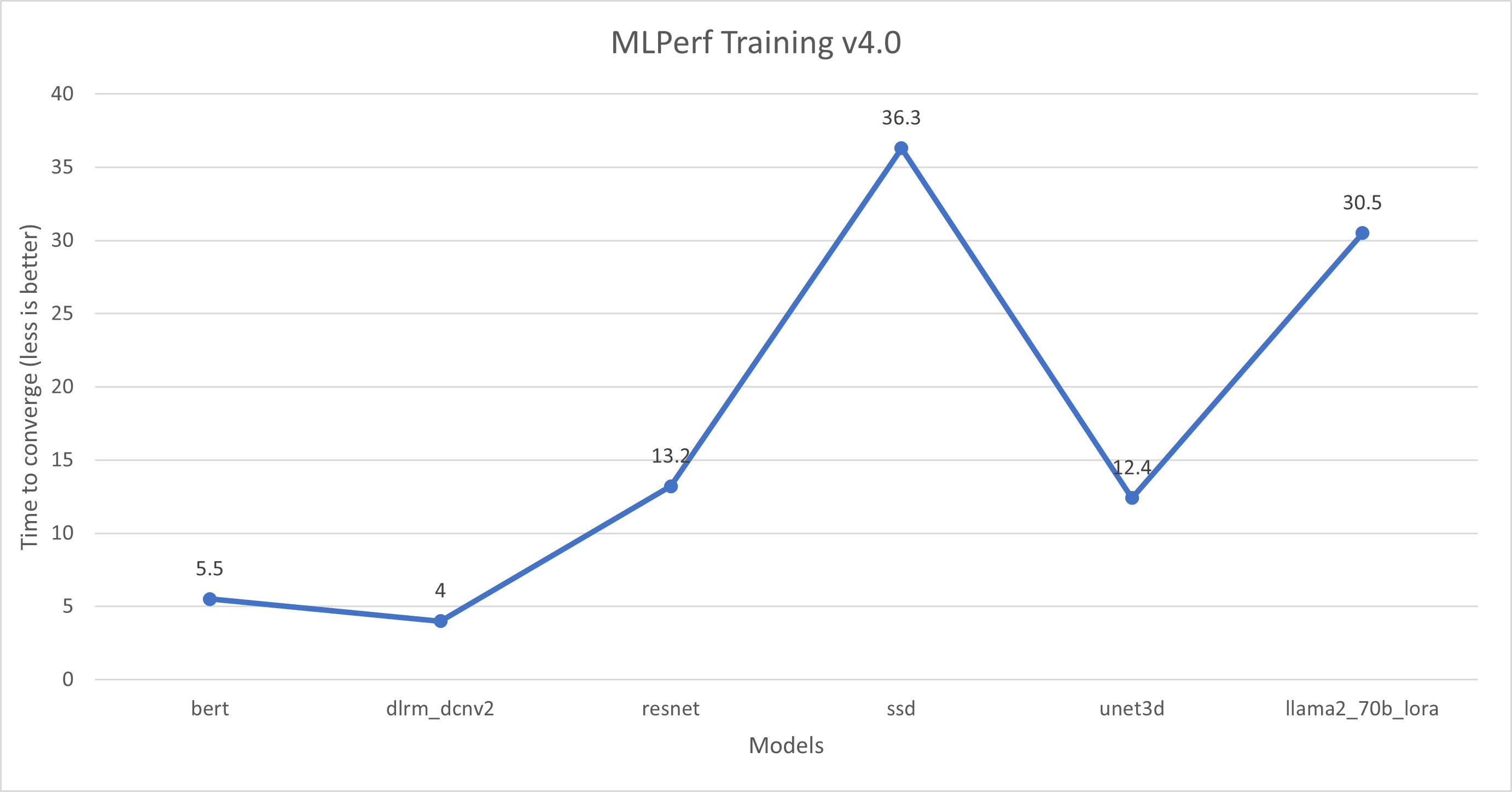

The MLPerf Training benchmark suite measures the time required to train machine learning models to a target level of accuracy on new data.

Code can be found on GitHub.
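The "time to reach a target accuracy" idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the MLPerf Training reference code: the `time_to_train` helper, the one-dimensional logistic-regression model, the synthetic data, and the 0.95 target are all hypothetical choices for this example.

```python
import math
import random
import time


def time_to_train(train_one_epoch, evaluate, target_accuracy, max_epochs=100):
    """Train until held-out accuracy reaches the target; report wall-clock time.

    Hypothetical helper. Evaluation time is included in the measurement here.
    """
    accuracy = 0.0
    start = time.perf_counter()
    for epoch in range(1, max_epochs + 1):
        train_one_epoch()
        accuracy = evaluate()
        if accuracy >= target_accuracy:
            return {"reached": True, "epochs": epoch, "accuracy": accuracy,
                    "seconds": time.perf_counter() - start}
    return {"reached": False, "epochs": max_epochs, "accuracy": accuracy,
            "seconds": time.perf_counter() - start}


# Toy task (assumption): classify whether a number in [-1, 1] is positive.
random.seed(0)
train_data = [(x, 1 if x > 0 else 0)
              for x in (random.uniform(-1, 1) for _ in range(200))]
held_out = [(x, 1 if x > 0 else 0)
            for x in (random.uniform(-1, 1) for _ in range(200))]
params = {"w": 0.0, "b": 0.0}


def train_one_epoch(lr=0.5):
    """One pass of stochastic gradient descent on the logistic loss."""
    for x, y in train_data:
        p = 1.0 / (1.0 + math.exp(-(params["w"] * x + params["b"])))
        params["w"] -= lr * (p - y) * x
        params["b"] -= lr * (p - y)


def evaluate():
    """Accuracy on data the model did not see during training."""
    correct = sum((params["w"] * x + params["b"] > 0) == (y == 1)
                  for x, y in held_out)
    return correct / len(held_out)


result = time_to_train(train_one_epoch, evaluate, target_accuracy=0.95)
print(result)
```

Measuring time to a fixed quality target, rather than time per epoch or samples per second, means a system cannot score well by training quickly to a poor model.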