PowerEdge R650 metadata performance with MDtest using 4 KiB files

This test is almost identical to the previous test, except that it uses small 4 KiB files instead of 3 KiB files. A 4 KiB file does not fit entirely in its inode, so the rest of each file is stored on the data devices (four PowerEdge R650 nodes). The following command was used to run the benchmark. The Threads variable is the number of threads used (1 to 512, incremented in powers of two). my_hosts.$Threads is the corresponding hostfile, which uses round robin to spread the threads homogeneously across the 16 compute nodes.

```shell
mpirun --allow-run-as-root -np $Threads --hostfile my_hosts.$Threads --map-by node --mca btl_openib_allow_ib 1 --oversubscribe --prefix /usr/mpi/gcc/openmpi-4.1.2a1 /usr/local/bin/mdtest -v -d /mmfs1/perf/mdtest -P -i 1 -b $Directories -z 1 -L -I 1024 -u -t -F -w 4K -e 4K
```
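The paper does not show how the my_hosts.$Threads files were built. The following is a minimal sketch, assuming an Open MPI hostfile in the `hostname slots=N` format. The node names node01 to node16 and the function name make_hostfile are hypothetical placeholders. For each thread count from 1 to 512 in powers of two, the script gives every node Threads/16 slots and gives one extra slot to each of the first Threads%16 nodes. This matches a round-robin spread across the 16 compute nodes.

```shell
#!/usr/bin/env bash
# Sketch only: node01..node16 are hypothetical placeholders for the
# 16 compute nodes; replace them with the real client hostnames.
set -euo pipefail

NODES=()
for i in $(seq -w 1 16); do NODES+=("node$i"); done
NUM_NODES=${#NODES[@]}

# Hypothetical helper: write my_hosts.<threads> with a round-robin spread.
# Each node gets threads/NUM_NODES slots, and the first threads%NUM_NODES
# nodes get one extra slot. Nodes that would get zero slots are omitted.
make_hostfile() {
  local threads=$1
  local out="my_hosts.$threads"
  : > "$out"
  for ((i = 0; i < NUM_NODES; i++)); do
    local slots=$(( threads / NUM_NODES + (i < threads % NUM_NODES ? 1 : 0) ))
    if (( slots > 0 )); then
      echo "${NODES[$i]} slots=$slots" >> "$out"
    fi
  done
}

# Thread counts used in the test: 1 to 512 in powers of two.
for ((Threads = 1; Threads <= 512; Threads *= 2)); do
  make_hostfile "$Threads"
done
```

For example, my_hosts.8 lists eight nodes with one slot each, and my_hosts.512 lists all 16 nodes with 32 slots each. Combined with `--map-by node`, these files make mpirun place consecutive ranks on consecutive nodes.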

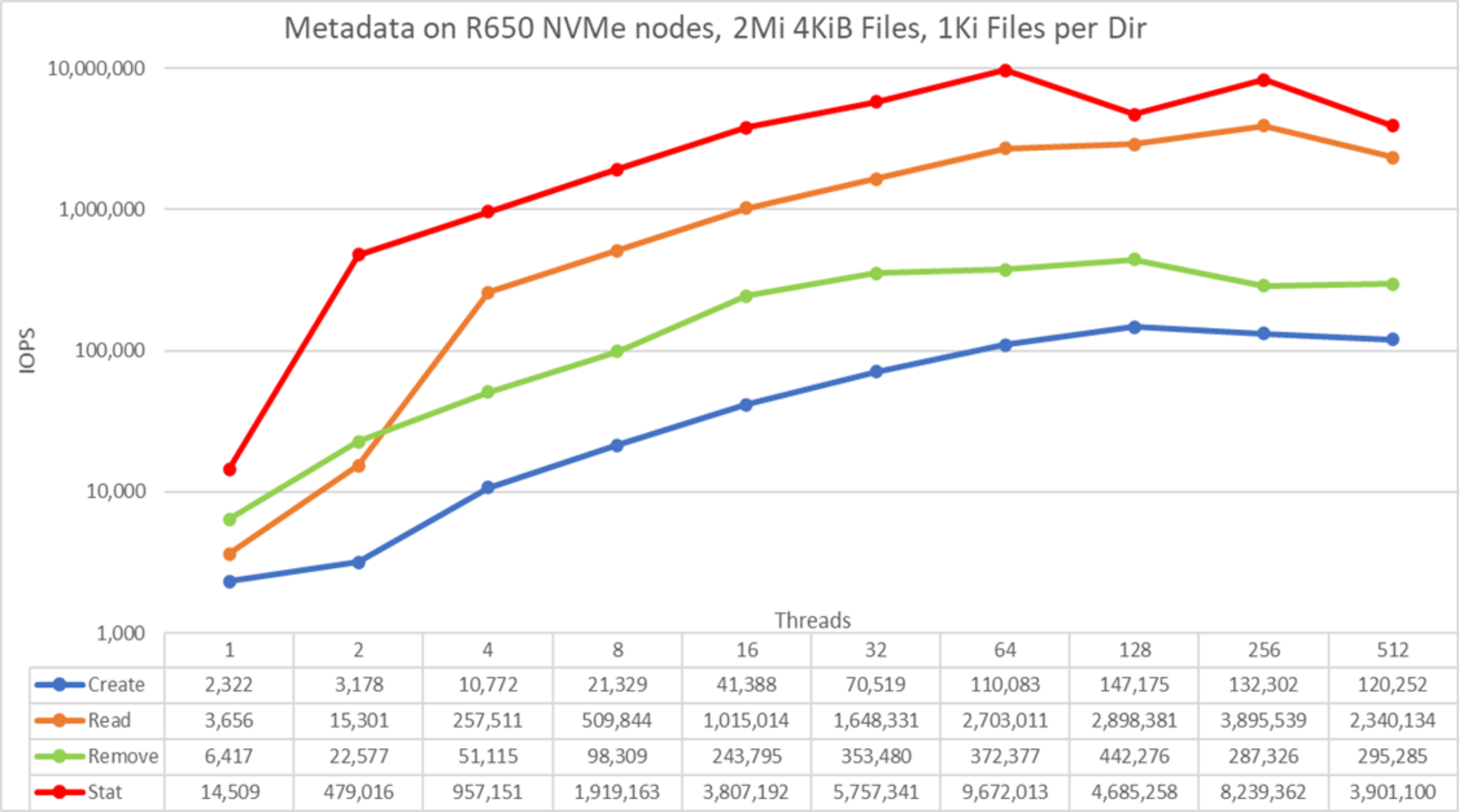

Figure 34. Metadata Performance - Small files (4 KiB)

Stat operations performed best, peaking at 9.7M op/s with 64 threads, while read operations reached 3.9M op/s with 256 threads. Remove operations reached a maximum of 442.3K op/s and create operations peaked at 147.2K op/s, both at 128 threads.

These numbers are for a metadata module with a single PowerEdge R650 NVMe metadata pair and two PowerEdge R650 NVMe pairs for data. Metadata performance increases with each additional PowerEdge R650 NVMe metadata pair. Each additional PowerEdge R650 NVMe pair for data also helps increase the performance of some operations, such as create, remove, and read. The larger the files, the bigger the effect of additional PowerEdge R650 NVMe pairs used for data.