HDMD Metadata performance with MDtest using empty files

It was recently decided to replace the HDMD module, which was based on two PowerEdge R750 servers and one or more ME4024 arrays, with one or more pairs of NVMe servers based on the PowerEdge R650 (R750 or R7525 servers could also be used, but they were not characterized in this work and offer lower density). In this testbed, ME5084 arrays (without storage expansions) stored the data, and the optional HDMD consisted of a single pair of PowerEdge R650 servers, each with ten PM1735 NVMe PCIe 4 devices. Metadata performance of the new HDMD NVMe module with other NVMe servers storing the data (instead of ME5084 arrays) is documented later in this document.

Metadata performance was measured with MDtest version 3.3.0, using OpenMPI v4.1.2a1 to run the benchmark across the 16 compute nodes. The tests ranged from a single thread up to 512 threads. The benchmark was run on files only (no directory metadata) to obtain the number of create, stat, read, and remove operations per second that the solution can handle.

The studies with empty and 3 KiB files are included for completeness. Results with 4 KiB files might be more relevant because a 4 KiB file cannot fit into an inode along with the metadata information, so the ME5 arrays are used to store the data for each file. Therefore, MDtest can also provide an approximate estimate of small-file performance on the ME5 arrays for read operations and the rest of the metadata operations.

The following command was used to run the benchmark, where the Threads variable is the number of threads used (1 to 512, incremented in powers of 2), and my_hosts.$Threads is the corresponding file that assigns each thread to a node, using the round-robin method to spread the threads evenly across the 16 compute nodes. As with the Random IO benchmark, the maximum number of threads was limited to 512 because there are not enough cores for 1024 threads, and the resulting context switching would affect the results, reporting a number lower than the actual performance of the solution.

mpirun --allow-run-as-root -np $Threads --hostfile my_hosts.$Threads --map-by node --mca btl_openib_allow_ib 1 --oversubscribe --prefix /usr/mpi/gcc/openmpi-4.1.2a1 /usr/local/bin/mdtest -v -d /mmfs1/perf/mdtest -P -i 1 -b $Directories -z 1 -L -I 1024 -u -t -F
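The contents of the my_hosts.$Threads files are not shown in this document. As a minimal sketch of the round-robin allocation described above, the following helper writes one host line per thread, cycling over 16 nodes. The host names node01 to node16 and the function names are hypothetical, and the sketch assumes that Open MPI counts repeated host lines as additional slots on that host.

```shell
# Sketch only: host names node01..node16 are hypothetical placeholders.
NODE_COUNT=16

# Print the host name for a zero-based thread index, cycling over the nodes.
node_for_thread() {
    printf 'node%02d\n' $(( $1 % NODE_COUNT + 1 ))
}

# Write one host line per thread so that consecutive threads
# are assigned to different nodes (round-robin).
make_hostfile() {
    threads=$1
    out=$2
    : > "$out"                      # create or truncate the hostfile
    i=0
    while [ "$i" -lt "$threads" ]; do
        node_for_thread "$i" >> "$out"
        i=$(( i + 1 ))
    done
}
```

For example, `make_hostfile 32 my_hosts.32` would produce a file with 32 lines in which each of the 16 hypothetical nodes appears twice.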

Because the total number of IOPs, the number of files per directory, and the number of threads can affect performance results, we kept the total number of files fixed at 2 Mi files (2^21 = 2,097,152) and the number of files per directory fixed at 1024, and varied the number of directories per thread as the number of threads changed, as shown in the following table:

Table 4. Distribution of files on directories by MDtest

| Number of threads | Number of directories per thread | Total number of files |
|---|---|---|
| 1 | 2048 | 2,097,152 |
| 2 | 1024 | 2,097,152 |
| 4 | 512 | 2,097,152 |
| 8 | 256 | 2,097,152 |
| 16 | 128 | 2,097,152 |
| 32 | 64 | 2,097,152 |
| 64 | 32 | 2,097,152 |
| 128 | 16 | 2,097,152 |
| 256 | 8 | 2,097,152 |
| 512 | 4 | 2,097,152 |
| 1024 | 2 | 2,097,152 |
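The relationship in Table 4 follows from keeping the total fixed: directories per thread = 2,097,152 / (1024 x threads). The following sketch, with hypothetical function names, computes the value passed as $Directories for each thread count used in the tests; it is an illustration of the arithmetic, not the script used for the benchmark.

```shell
TOTAL_FILES=2097152    # 2^21 files in total, as stated in the text
FILES_PER_DIR=1024     # matches the -I 1024 option of the mdtest command

# Directories per thread needed to keep the total number of files constant.
dirs_per_thread() {
    echo $(( TOTAL_FILES / (FILES_PER_DIR * $1) ))
}

# Print the Threads/Directories pair for every thread count that was tested.
print_plan() {
    for Threads in 1 2 4 8 16 32 64 128 256 512; do
        Directories=$(dirs_per_thread "$Threads")
        echo "Threads=$Threads Directories=$Directories"
    done
}
```

Calling `print_plan` lists ten pairs, from `Threads=1 Directories=2048` down to `Threads=512 Directories=4`.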

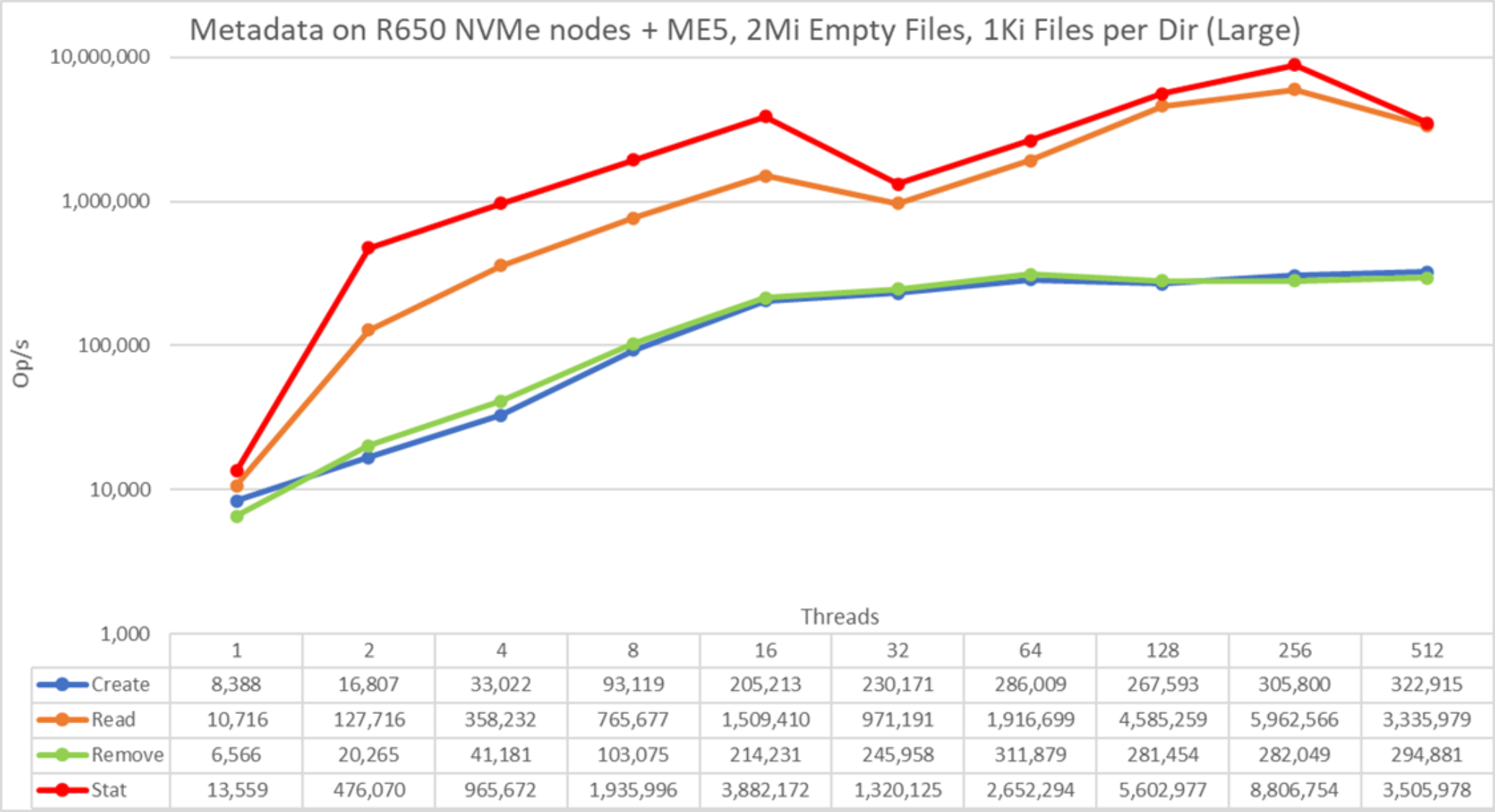

Figure 25. Metadata performance - empty files

Note that a logarithmic scale with base 10 was chosen to allow comparing operations that differ by several orders of magnitude; on a linear graph, some of the operations would appear as a flat line close to 0. A logarithmic scale with base 2 would arguably be more appropriate because the number of threads increases in powers of 2. Such a graph looks similar, but people tend to perceive and remember numbers based on powers of 10 better.

The system provides good results, with stat and read operations reaching their peak values at 256 threads with 8.8M op/s and 5.34M op/s respectively. Remove operations attained a maximum of 311.9K op/s at 64 threads, and create operations achieved their peak of 322.9K op/s at 512 threads. Stat and read operations have more variability, but after they reach their peak values, performance does not drop below 1.3M op/s for stat operations and 970K op/s for read operations. Create and remove operations are more stable once they reach a plateau, remaining above 280K op/s for remove operations and above 265K op/s for create operations.

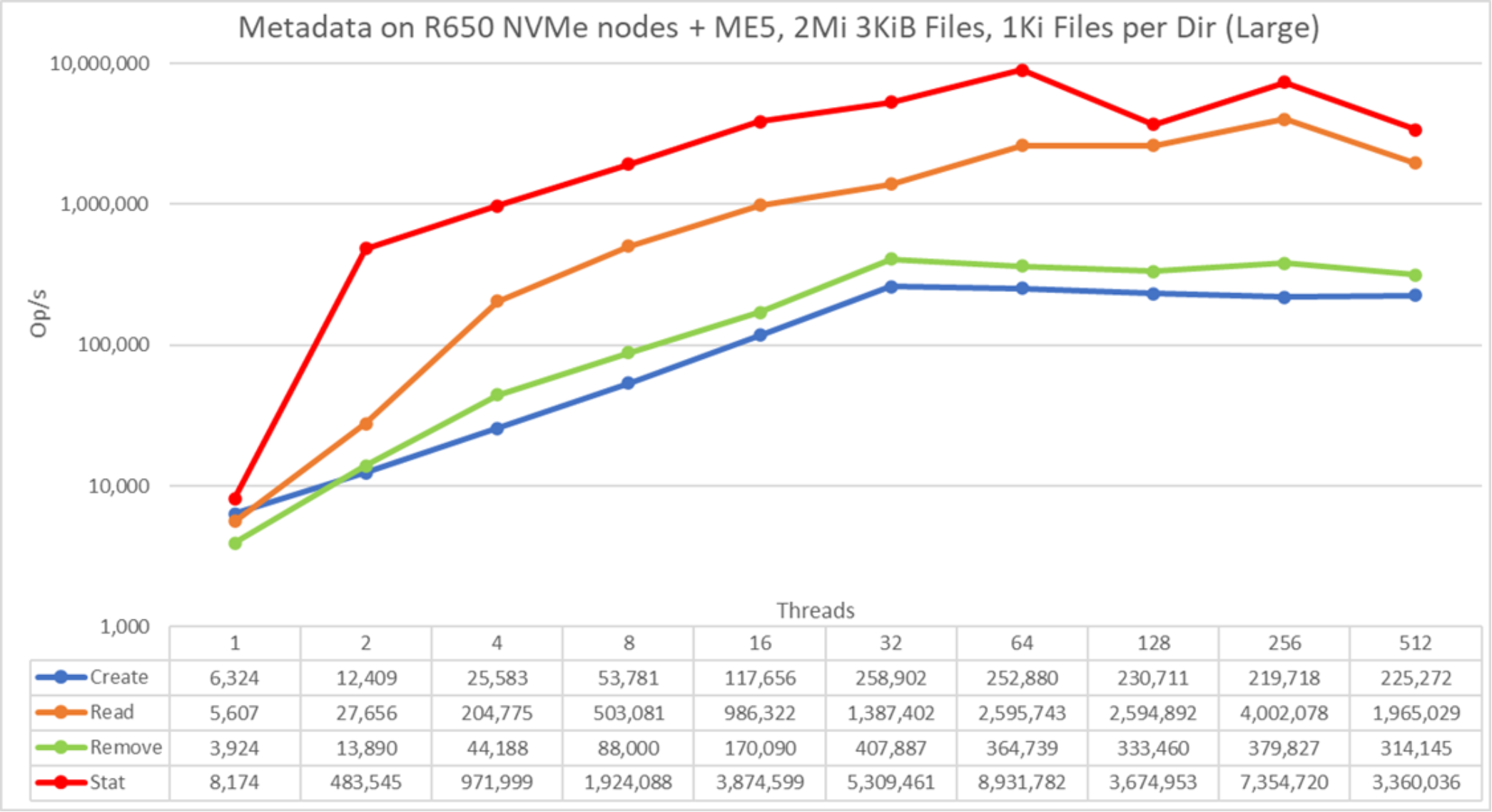

Metadata performance with MDtest using 3 KiB files

This test is almost identical to the previous test, except that we used small files of 3 KiB instead of empty files; at this size, the data still fits inside the inode. The following command was used to run the benchmark, where the Threads variable is the number of threads used (1 to 512, incremented in powers of 2), and my_hosts.$Threads is the corresponding file that assigns each thread to a node, using the round-robin method to spread the threads evenly across the 16 compute nodes.

mpirun --allow-run-as-root -np $Threads --hostfile my_hosts.$Threads --map-by node --mca btl_openib_allow_ib 1 --oversubscribe --prefix /usr/mpi/gcc/openmpi-4.1.2a1 /usr/local/bin/mdtest -v -d /mmfs1/perf/mdtest -P -i 1 -b $Directories -z 1 -L -I 1024 -u -t -F -w 3K -e 3K

Figure 26. Metadata performance - small files (3 KiB)

The system provides good results for stat operations, which peak at 8.9M op/s at 64 threads, and for read operations, which peak at 4M op/s at 256 threads. Remove operations attained a maximum of 407.9K op/s and create operations achieved a peak of 258.9K op/s, both at 32 threads. Stat and read operations have more variability, but after they reach their peak values, performance does not drop below 3.6M op/s for stat operations and 2.5M op/s for read operations. Create and remove operations have less variability, increasing almost linearly as the number of threads grows and then reaching a plateau of more than 314K op/s for remove operations and 219K op/s for create operations.

Metadata performance with MDtest using 4 KiB files

This test is almost identical to the previous two tests, except that we used small files of 4 KiB. The following command was used to run the benchmark, where the Threads variable is the number of threads used (1 to 512, incremented in powers of 2), and my_hosts.$Threads is the corresponding file that assigns each thread to a node, using the round-robin method to spread the threads evenly across the 16 compute nodes.

mpirun --allow-run-as-root -np $Threads --hostfile my_hosts.$Threads --map-by node --mca btl_openib_allow_ib 1 --oversubscribe --prefix /usr/mpi/gcc/openmpi-4.1.2a1 /usr/local/bin/mdtest -v -d /mmfs1/perf/mdtest -P -i 1 -b $Directories -z 1 -L -I 1024 -u -t -F -w 4K -e 4K
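The three mdtest invocations in this section differ only in the -w and -e options, which set the number of bytes written to and read from each file. As a rough sketch (the function names are hypothetical and not part of mdtest), the argument list could be built from the directory count and an optional file size:

```shell
# Return the size-related options; an empty size means empty files.
mdtest_size_args() {
    if [ -z "$1" ]; then
        printf '\n'
    else
        printf '%s\n' "-w $1 -e $1"
    fi
}

# Build the mdtest argument list used in this section.
#   $1: number of directories per thread (the $Directories variable)
#   $2: file size such as 3K or 4K, or empty for empty files
mdtest_args() {
    dirs=$1
    size_args=$(mdtest_size_args "$2")
    base="-v -d /mmfs1/perf/mdtest -P -i 1 -b $dirs -z 1 -L -I 1024 -u -t -F"
    if [ -n "$size_args" ]; then
        printf '%s\n' "$base $size_args"
    else
        printf '%s\n' "$base"
    fi
}
```

For example, `mdtest_args 8 4K` yields the options of the 4 KiB run at 256 threads, and `mdtest_args 8 ""` yields those of the empty-file run.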

Figure 27. Metadata performance - small files (4 KiB)

The system provides good results, with all operations reaching their peak values at 256 threads. Stat operations attained 7.6M op/s, read operations attained approximately 3.5M op/s, remove operations attained a maximum of 440.3K op/s, and create operations achieved a peak of 137.3K op/s. Stat and read operations have more variability, but after they reach their peak values, performance does not drop below 2.5M op/s for stat operations and 590K op/s for read operations. Create and remove operations have less variability: their performance keeps increasing with the number of threads until it reaches the maximum and then starts to decrease slowly (at 512 threads).

Summary

The current solution delivers good performance, as can be seen in Table 5, and that performance is expected to remain stable regardless of the space used because the system was formatted in scattered mode. Furthermore, the solution scales linearly in capacity and performance as more storage node modules are added, and a similar performance increase can be expected from the optional HDMD with one NVMe pair.

Table 5. Peak and sustained performance

| Benchmark | Peak performance: Write | Peak performance: Read | Sustained performance: Write | Sustained performance: Read |
|---|---|---|---|---|
| Large Sequential N clients to N files | 28.5 GB/s | 31.4 GB/s | 27.1 GB/s | 28 GB/s |
| Large Sequential N clients to single shared file | 29.1 GB/s | 30.9 GB/s | 26.9 GB/s | 24.3 GB/s |
| Random Small blocks N clients to N files | 20.8K IOps | 31.8K IOps | 20.8K IOps | 31.8K IOps |

| Metadata benchmark | Peak performance |
|---|---|
| Metadata Create empty files | 323K Ops |
| Metadata Stat empty files | 8.8M Ops |
| Metadata Read empty files | 5.96M Ops |
| Metadata Remove empty files | 311.9K Ops |
| Metadata Create 4 KiB files | 137.3K Ops |
| Metadata Stat 4 KiB files | 7.65M Ops |
| Metadata Read 4 KiB files | 3.5M Ops |
| Metadata Remove 4 KiB files | 440.3K Ops |