Multiserver scalability

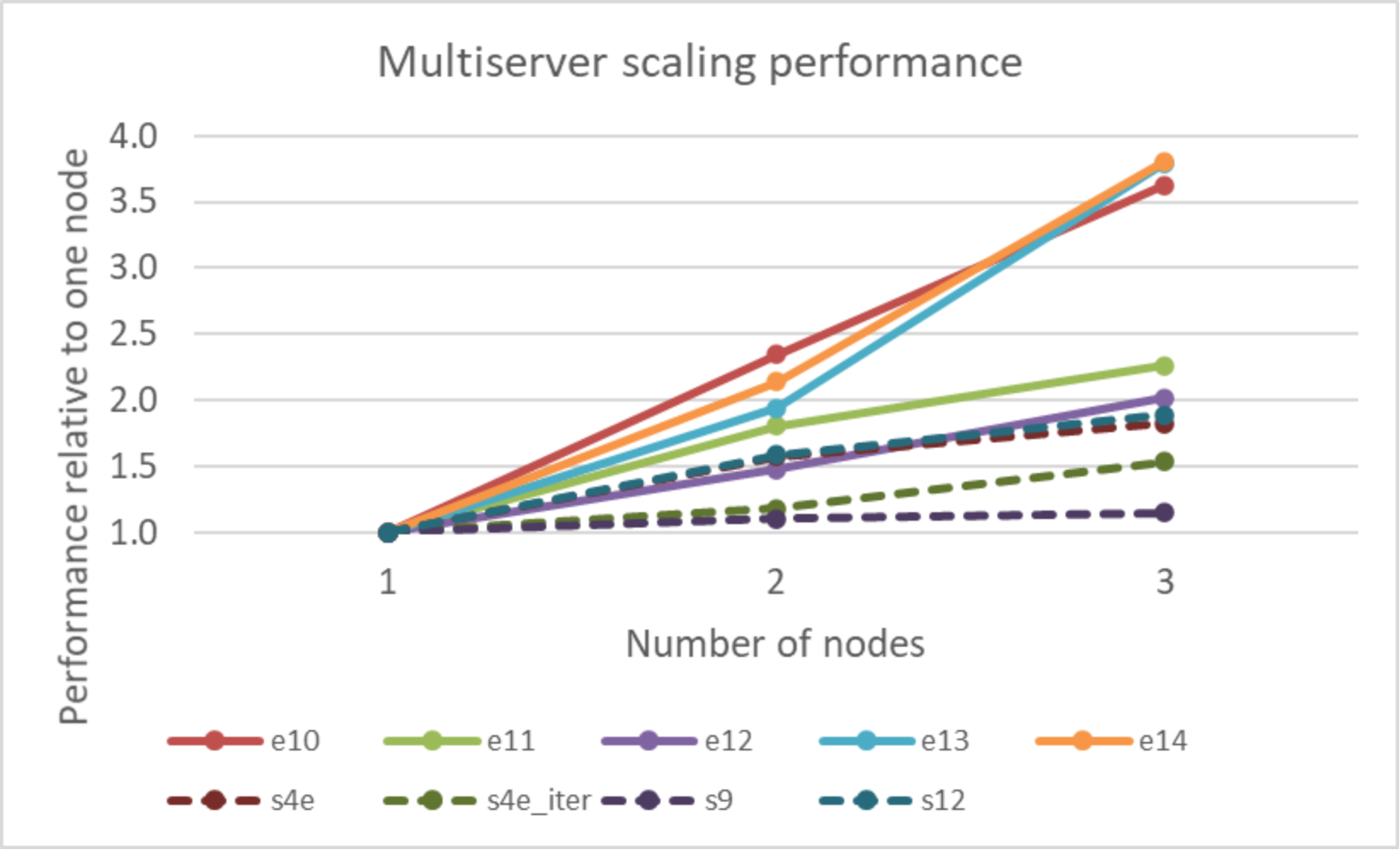

The following figure shows the parallel scalability of Abaqus on up to four nodes configured with AMD EPYC 7573X processors for various Abaqus/Standard and Abaqus/Explicit benchmarks. Performance is presented relative to that of a single server, so larger values indicate better overall performance.

Figure 3. Multiserver scaling performance

The parallel speedup when running jobs on more than a single node is mixed. While the speedup tends to correlate with model size, several other factors can affect it. Most of the models tested showed a noticeable speedup going from one to two nodes. The e10 case stands out, displaying a superlinear speedup. This behavior can be explained by “cache effects”: as the dataset is distributed among a greater number of nodes, there can be a point at which the entire problem fits into cache, and the speed of the solver increases dramatically. Such cache effects are highly problem-specific. In general, distributed memory parallelism involves a tradeoff: cache performance typically improves as the problem is distributed to more nodes, but communication overhead also increases, counteracting the caching benefit. Users are encouraged to run a few tests with new models to determine the optimum node count for the best job throughput with available resources.
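One way to evaluate such tests is sketched below in Python. It is a minimal illustration, not part of the validated design: the function names `scaling_table` and `best_node_count` are hypothetical, and the timings are made-up placeholders rather than measured benchmark results. The sketch computes speedup and parallel efficiency relative to the single-node run, then picks the per-job node count that maximizes jobs per hour, assuming a fixed pool of nodes that runs identical jobs concurrently.

```python
def scaling_table(elapsed_by_nodes):
    """Return (nodes, speedup, efficiency) rows relative to the one-node run."""
    base = elapsed_by_nodes[1]
    rows = []
    for nodes in sorted(elapsed_by_nodes):
        speedup = base / elapsed_by_nodes[nodes]
        # Efficiency above 1.0 indicates superlinear scaling.
        rows.append((nodes, speedup, speedup / nodes))
    return rows


def best_node_count(elapsed_by_nodes, total_nodes):
    """Pick the per-job node count that maximizes jobs/hour across the pool."""
    def jobs_per_hour(nodes):
        concurrent_jobs = total_nodes // nodes
        return concurrent_jobs * 3600.0 / elapsed_by_nodes[nodes]

    candidates = [n for n in elapsed_by_nodes if n <= total_nodes]
    return max(candidates, key=jobs_per_hour)


# Hypothetical elapsed times in seconds (assumed values, not from the paper).
timings = {1: 1000.0, 2: 400.0, 4: 250.0}

for nodes, speedup, efficiency in scaling_table(timings):
    print(f"{nodes} node(s): speedup {speedup:.2f}, efficiency {efficiency:.2f}")

print("best nodes per job on a 4-node pool:", best_node_count(timings, 4))
```

With these placeholder timings, the two-node run has an efficiency above 1.0, which is how a superlinear case would appear, and two nodes per job gives the highest throughput on a four-node pool. Real results depend on the model, so the timings should come from actual test runs.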