Findings

Our test results show that the integration of the Ready Bundle for SAP Landscapes solution with Data Domain storage protection systems can effectively and quickly protect SAP databases while offering space savings that enable the business to protect more data. We obtained test results under the following categories:

- Backup times

- CPU usage

- Network usage

- Pre- and post-compression

- Deduplication and compression savings

Backup times

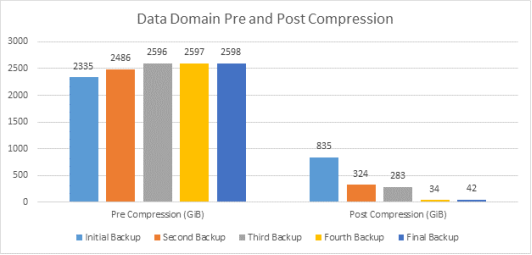

Figure 50 shows the duration of the five backup procedures. The initial backup of our 2.7 TB SAP NetWeaver database took only 45 minutes to complete. The data change rate for the subsequent backups ranged from 5.75 percent down to 0.2 percent.
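As a rough illustration of what the initial backup time implies, the following sketch converts the quoted database size and duration into an average logical data rate. It assumes decimal units (1 TB = 1,000,000 MB), and the function name is hypothetical. The result is the rate at which database data was processed, not the rate at which data crossed the network.

```python
# Figures quoted in the text: a 2.7 TB database backed up in 45 minutes.
DB_SIZE_TB = 2.7
DURATION_MIN = 45


def effective_throughput_mb_per_s(size_tb: float, minutes: float) -> float:
    """Average logical data rate in MB/s, assuming 1 TB = 1,000,000 MB."""
    return size_tb * 1_000_000 / (minutes * 60)


rate = effective_throughput_mb_per_s(DB_SIZE_TB, DURATION_MIN)
print(f"Average logical backup rate: {rate:.0f} MB/s")
```

Under these assumptions the initial full backup averaged roughly 1 GB of database data per second.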

Full backups require RMAN to read the entire database for every backup. Because we performed a full backup operation each time, the backup duration increased slightly as the database size increased. However, a full backup operation is the best way to represent the effects of deduplication and compression. With incremental backup operations, the second and subsequent backups complete faster than the initial backup.

Figure 50. Test results: Backup time and database size test

CPU usage

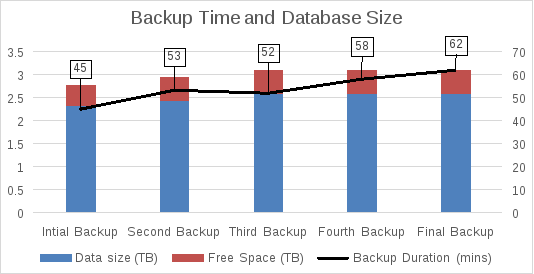

Moving some of the deduplication work from the Data Domain system to the database server did not negatively impact the server workload. Because sending data is resource-intensive for the database server, sending less data significantly reduces the load: performing the deduplication process takes fewer CPU cycles than pushing full backups.

As Figure 51 shows, the initial full backup of our SAP database used, on average, 27 percent of the database server’s CPU. The first full backup is the most resource-intensive because the entire database is transferred and protected on the Data Domain system. The database server’s CPU usage fell in all subsequent backups, to a range between 17 percent and 10 percent. This change occurs because the database server processes only the unique data in each additional backup, freeing up CPU cycles for other operations. The test results appear to show the following relationship between the amount of unique data and CPU utilization for DD Boost: the greater the amount of unique data, the higher the CPU utilization.

Figure 51. Database server: CPU usage and database size

Network usage

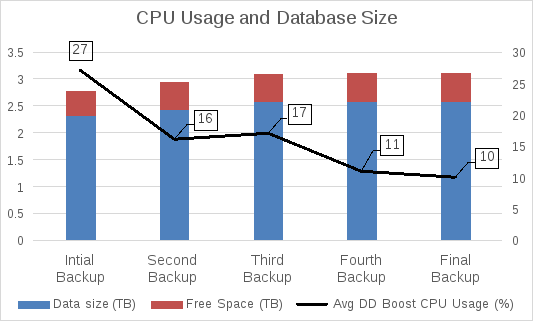

DD Boost software sends only unique data from the database server or client to the Data Domain system, enabling more efficient use of the network. Up to 99 percent less data is moved across the network, even in full backups.

As Figure 52 shows, network usage for the initial full backup was, on average, 450 Mbps. Because the entire database was considered unique data, all of it had to be sent to and protected on the Data Domain system. Network usage for the second full backup dropped significantly, by 350 Mbps, because most of the database was already protected and only the unique data had to be transferred. Network usage dropped further with the fourth and final backups because the unique data sets were smaller.

Figure 52. Network usage and database size

High network utilization is a significant concern because most applications and databases are backed up during the same off-business hours. Minimizing network utilization makes it possible to efficiently protect more databases that share the same network. The larger the data center and the greater the number of applications, the more important it is to have lower network utilization during backup periods.

Pre- and post-compression

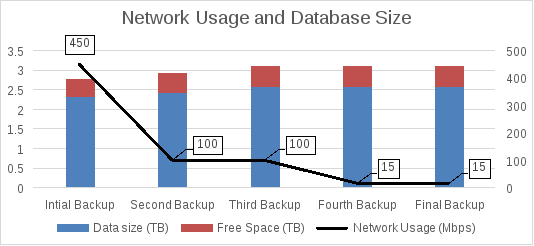

The Data Domain system performs inline deduplication and local compression as the backup data enters the system and stores only unique elements on disk, leading to lower storage consumption and costs and a smaller footprint in your data center.

The initial size of our data backup on Data Domain was 2,335 GiB. After the data transfer to the Data Domain system and application of deduplication and compression algorithms, physical storage consumption fell to 835 GiB. For the second backup, the database had grown by five percent. After the data transfer to the Data Domain system, physical storage consumption for this backup fell to 324 GiB. This amount was significantly lower than for the initial backup because of deduplication, which became the dominant factor for the fourth and final backups, as Figure 53 shows.

Figure 53. Data Domain pre- and post-compression values

Deduplication and compression savings

Data Domain compresses data at two levels: global and local. Global compression, or deduplication, compares received data to data already stored on disk in order to identify redundant data segments and store only the unique data segments. Local compression then compresses the unique data segments. The total compression effect is the combination of global compression and local compression.

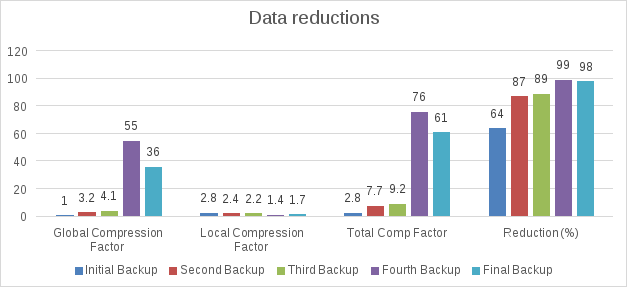

Global compression factor

Figure 54 shows a global compression factor of 1.0x for the first full backup, indicating that all the data written from that backup was unique. The second and third backups had large unique deltas, ranging from 120 GB to 140 GB, giving global compression factors of 3.2x and 4.1x respectively. The fourth backup of 600 MB and the final backup of 1 GB included significantly less unique data. Therefore, the global compression factor for these backups was much higher.

Local compression factor

The local compression factor for the first backup is 2.8x, indicating that the 2,335 GiB of backup data was compressed to 835 GiB, an overall reduction of 64 percent. As Figure 54 shows, there is a relationship between the amount of unique data and the local compression factor: the greater the amount of unique data, the greater the compression opportunity and the higher the compression factor. In our tests, the first backup consisted of entirely unique data and had the largest compression factor, while the fourth backup had the least amount of unique data and the lowest compression factor.
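The 2.8x factor and the 64 percent reduction follow directly from the two sizes quoted for the first backup. The sketch below reproduces that arithmetic; the function names are hypothetical and the only inputs are the pre- and post-compression sizes from the text.

```python
# Sizes quoted in the text for the first full backup.
PRE_COMP_GIB = 2335   # data received by the Data Domain system
POST_COMP_GIB = 835   # physical storage consumed after compression


def compression_factor(pre: float, post: float) -> float:
    """Ratio of logical size to physical size (for example, 2.8 means 2.8x)."""
    return pre / post


def reduction_percent(pre: float, post: float) -> float:
    """Share of the logical size that did not have to be stored, in percent."""
    return (1 - post / pre) * 100


factor = compression_factor(PRE_COMP_GIB, POST_COMP_GIB)
reduction = reduction_percent(PRE_COMP_GIB, POST_COMP_GIB)
print(f"{factor:.1f}x compression, {reduction:.0f} percent reduction")
```

Because the first backup contains only unique data (global factor 1.0x), the whole reduction here comes from local compression.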

Total compression factor

Figure 54 also shows the total amount of compression the Data Domain system performed with the data it received. The first backup consisted of unique data and had the lowest total compression factor (2.8x). The second and third backups were similar in the amount of unique data they included and their total compression factors were 7.7x and 9.2x respectively. The fourth and final backups had the smallest amount of unique data and therefore the highest total compression factors.

There is a relationship between the compression factor and space usage on a Data Domain appliance: the higher the total compression factor for a backup, the greater the space savings for that backup.
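To see how the three factors relate, the sketch below assumes that the total compression factor is the product of the global and local factors (total = global × local). That relationship is an assumption used for illustration, and the function name is hypothetical. Given the global and total factors quoted for the second and third backups, it derives the local factor each pair would imply. The quoted factors are rounded, so the results are approximate.

```python
# (global factor, total factor) as quoted in the text.
backups = {
    "second": (3.2, 7.7),
    "third": (4.1, 9.2),
}


def implied_local_factor(global_factor: float, total_factor: float) -> float:
    """Local factor implied by total = global * local (assumed relationship)."""
    return total_factor / global_factor


implied = {name: implied_local_factor(g, t) for name, (g, t) in backups.items()}
for name, local in implied.items():
    print(f"{name} backup: implied local compression factor of about {local:.1f}x")
```

Under this assumption both implied local factors fall below the 2.8x of the first backup, which agrees with the observation that backups with less unique data show lower local compression.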

Figure 54. Test results: Deduplication and compression savings

Data reduction percentage

The data reduction percentage expresses the total compression savings as a percentage: the higher the reduction percentage, the greater the space savings on the Data Domain appliance. In our test, the first backup consisted entirely of unique data and yielded the lowest reduction percentage, namely 64 percent. This percentage was substantial because of the amount of unique data that was transferred. The second and third backups were similar in the amount of unique data transferred and their reduction percentages were 87 and 89 percent respectively. The fourth and final backups had the smallest amount of unique data and the highest reduction percentages.
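A reduction percentage can be derived from a total compression factor as (1 - 1/factor) × 100. The following sketch applies that conversion to the total compression factors reported for the first three backups; the function name is hypothetical, and the inputs are the rounded factors quoted earlier.

```python
def reduction_percent_from_factor(total_factor: float) -> float:
    """Convert a total compression factor (e.g. 7.7 for 7.7x) to a reduction percentage."""
    return (1 - 1 / total_factor) * 100


# Total compression factors quoted in the text.
total_factors = {"first": 2.8, "second": 7.7, "third": 9.2}

reductions = {
    name: reduction_percent_from_factor(factor)
    for name, factor in total_factors.items()
}
for name, pct in reductions.items():
    print(f"{name} backup: {pct:.0f} percent reduction")
```

The results round to the 64, 87, and 89 percent figures reported for these backups.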

After application of the deduplication and compression algorithms, we achieved a total savings of 87 percent after five full backups totaling 12.6 TiB, with a physical storage consumption of approximately 1.6 TiB on the Data Domain system.