
Lab configuration used for the performance tests


Table 4 shows the server and storage configuration used for the performance tests. Three PowerEdge R740xd servers, each with 32 physical cores and 512 GB RAM, are connected over 32 Gb FC using two dual-port HBAs per server. The storage is a single node pair PowerMax 8500 with 28 NVMe SSD drives. Each initiator (server HBA port) is connected to four targets (storage FA ports), which gives 16 paths per volume (four initiators per server, four targets each). This configuration allows testing of workload scalability, with each server benefiting from full storage connectivity.

Note: In many production environments, only four or eight paths per volume are required, because the load is divided across the cluster nodes.
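The path count quoted for the lab setup follows from simple multiplication. The sketch below reproduces that arithmetic for one server; the variable names are illustrative and are not taken from any Dell or Oracle tool.

```python
# Path count per volume for one server, using the figures from the lab description.
hbas_per_server = 2         # two dual-port HBAs per server
ports_per_hba = 2           # each HBA is dual-port
targets_per_initiator = 4   # each HBA port is connected to four storage FA ports

# Every HBA port acts as one initiator.
initiators_per_server = hbas_per_server * ports_per_hba

# Each initiator-target pair is one path to a volume.
paths_per_volume = initiators_per_server * targets_per_initiator

print(f"initiators per server: {initiators_per_server}")
print(f"paths per volume: {paths_per_volume}")
```

With the same four initiators, connecting each one to only one or two targets would give the four or eight paths mentioned in the note.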

Table 4. Server and storage components used for performance tests

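| Host name | Operating system | Hardware | Purpose |
| --- | --- | --- | --- |
| DSIB0252 | Oracle Linux 8.7 / UEK 7 | PowerEdge R740xd, 2 x Intel Gold 6242 (32 cores @ 2.80 GHz), 512 GB RAM | Oracle CDB RAC node 1 |
| DSIB0253 | Oracle Linux 8.7 / UEK 7 | PowerEdge R740xd, 2 x Intel Gold 6242 (32 cores @ 2.80 GHz), 512 GB RAM | Oracle CDB RAC node 2 |
| DSIB0254 | Oracle Linux 8.7 / UEK 7 | PowerEdge R740xd, 2 x Intel Gold 6242 (32 cores @ 2.80 GHz), 512 GB RAM | Oracle CDB RAC node 3 |
| PowerMax 8500 | PowerMaxOS 10 | Single node pair PowerMax 8500, 768 GB raw DRAM (1,024 GB NVDIMM for metadata), single disk enclosure (28 x NVMe SSD) | |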

Table 5 shows the software releases used for the performance tests. Oracle 21c RAC and Database software is installed on the servers using the Container Database (CDB) deployment option, in which the user database is configured as a Pluggable Database (PDB). The SLOB release 2.5.4 benchmark tool is used to run both the OLTP and DSS tests. The relevant SLOB configuration settings are discussed in more detail later, as part of the specific tests. PowerPath release 7.5 provides multipath resiliency and load balancing.

Table 5. Software releases used for performance tests

| Software | Version |
| --- | --- |
| Oracle RAC and Database with ASM Filter Driver (AFD) | Oracle 21c (21.8.0.0) |
| SLOB benchmark tool | SLOB 2.5.4 |
| PowerPath for Linux | Release 7.5 |
| Host kernel level | 5.15.0-3.60.5.1.el8uek.x86_64 |

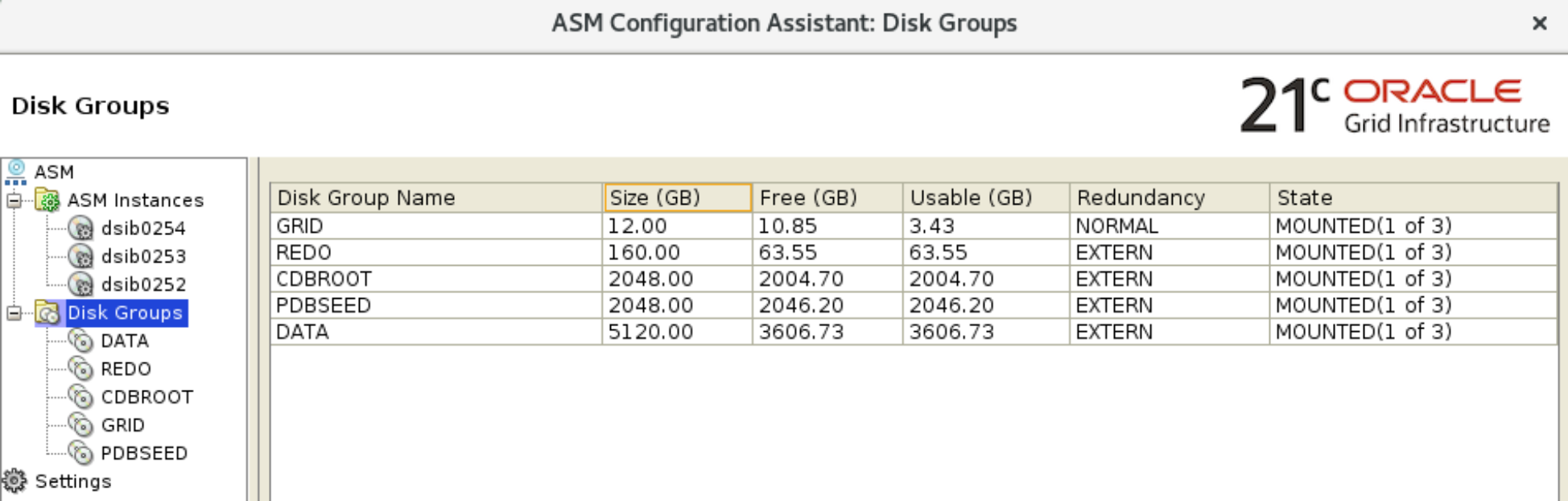

Oracle ASM is configured as shown in Figure 6. The cluster ASM disk group GRID is the only one configured with NORMAL redundancy (two ASM failure groups), since it is small and does not contain any user data. With NORMAL redundancy on the Grid Infrastructure ASM disk group, Oracle configures three quorum (voting) files rather than only one. All other ASM disk groups are configured with EXTERNAL redundancy, which means that no ASM mirroring or failure groups are used for them.

Figure 6. Oracle ASM Disk Group configuration

Two ASM disk groups are used for the Oracle container database. The first is +CDBROOT, used for the CDB root container, which contains the system schema objects used by all PDBs. The second is +PDBSEED, which holds the seed database template from which PDBs are created.

The +REDO ASM disk group hosts the database redo log files. These redo logs serve all the PDBs. As mentioned in the Oracle redo log considerations section, for high-performance databases each redo log file should be at least 10 GB, with at least four log files (redo log groups) per cluster node (redo log thread).
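As a rough illustration of the redo log guideline, the following sketch generates `ALTER DATABASE ADD LOGFILE` statements for a three-node cluster, using the minimum values from the guideline. The group numbering, the single member per group, and the function name are assumptions made for this example; the paper does not list the exact statements or sizes used in the lab.

```python
def redo_log_statements(nodes=3, groups_per_thread=4, size_gb=10, disk_group="+REDO"):
    """Build one ADD LOGFILE statement per redo log group.

    Assumes one redo thread per cluster node and one member per group,
    with group numbers assigned sequentially (hypothetical numbering).
    """
    statements = []
    group = 1
    for thread in range(1, nodes + 1):
        for _ in range(groups_per_thread):
            statements.append(
                f"ALTER DATABASE ADD LOGFILE THREAD {thread} "
                f"GROUP {group} ('{disk_group}') SIZE {size_gb}G;"
            )
            group += 1
    return statements


statements = redo_log_statements()
for stmt in statements:
    print(stmt)

# Minimum capacity consumed in the disk group under these assumptions.
total_gb = len(statements) * 10
print(f"minimum redo capacity: {total_gb} GB")
```

Under these assumptions, the guideline implies 12 redo log groups and at least 120 GB of redo capacity for the three-node cluster.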

The +DATA ASM disk group is used for the SLOB PDB user database. All the tests performed in this section used a local undo tablespace for the SLOB PDB rather than the CDB shared undo. This design allows high-performance PDBs to size their undo tablespaces to their specific needs. The +DATA ASM disk group also contained the default temporary tablespace used for SLOB.
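The disk group layout described in this section can be summarized as a small mapping from disk group name to redundancy level. The sketch below turns that mapping into `CREATE DISKGROUP` statements. The disk labels (`AFD:GRID1` and so on), the failure group names, and the count of two disks per group are hypothetical placeholders, since the paper does not list the devices. The GRID disk group is normally created by the Grid Infrastructure installer, so its statement is shown only to illustrate the redundancy setting.

```python
# Disk groups named in the text and their redundancy levels.
DISK_GROUPS = {
    "GRID": "NORMAL",       # cluster files, two failure groups
    "CDBROOT": "EXTERNAL",  # CDB root container
    "PDBSEED": "EXTERNAL",  # seed template for PDBs
    "REDO": "EXTERNAL",     # redo logs for all PDBs
    "DATA": "EXTERNAL",     # SLOB PDB data, undo, and temp
}


def create_diskgroup_sql(name, redundancy, disk_count=2):
    """Return a CREATE DISKGROUP statement using hypothetical AFD disk labels."""
    labels = [f"'AFD:{name}{i}'" for i in range(1, disk_count + 1)]
    if redundancy == "NORMAL":
        # Split the disks evenly across two failure groups.
        half = len(labels) // 2
        clause = (
            f"FAILGROUP fg1 DISK {', '.join(labels[:half])} "
            f"FAILGROUP fg2 DISK {', '.join(labels[half:])}"
        )
    else:
        # EXTERNAL redundancy: no ASM mirroring, no failure groups.
        clause = f"DISK {', '.join(labels)}"
    return f"CREATE DISKGROUP {name} {redundancy} REDUNDANCY {clause};"


ddl = {name: create_diskgroup_sql(name, red) for name, red in DISK_GROUPS.items()}
for stmt in ddl.values():
    print(stmt)
```

Keeping the layout in one mapping makes it easy to confirm that GRID is the only disk group relying on ASM mirroring, while data protection for the other disk groups is left to the storage array.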