
Solution concepts


Azure Stack HCI is a hyperconverged infrastructure (HCI) solution that runs Windows and Linux VMs or containerized workloads and their persistent storage. It is a hybrid solution that connects on-premises systems to Azure for cloud-based services, monitoring, and management.

Microsoft Azure Arc simplifies the management and governance of complex environments that are spread across core on-premises, multicloud, and edge. Microsoft Azure Arc provides a single control plane by extending non-Azure resources from on-premises, multicloud, or edge into the Azure Resource Manager. Microsoft Azure Arc extends the Azure data services and management capabilities to any infrastructure, fulfilling the rapidly increasing hybrid cloud requirements across organizations.

Storage Spaces Direct provides fault tolerance, often called "resiliency," for data. Storage Spaces can achieve resiliency in several ways, each making different tradeoffs between fault tolerance, storage efficiency, and compute complexity. These approaches fall broadly into two categories, "mirroring" and "parity," or a combination of both.

Software-defined networking (SDN) is a core feature of Azure Stack HCI. It provides network virtualization, automation, and programmability features that allow for better management and optimization of data traffic between nodes, improved security policies, and Quality of Service capabilities.
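The tradeoff between fault tolerance and storage efficiency in Storage Spaces Direct can be illustrated with simple arithmetic. The following is a minimal sketch and not a sizing tool: the function names, the 100 TB pool, and the 4+2 parity stripe are hypothetical values chosen for illustration, and the layouts actually available depend on the number of nodes and the drive configuration.

```python
def mirror_efficiency(copies: int) -> float:
    """Usable fraction of raw capacity when every block is stored `copies` times."""
    if copies < 2:
        raise ValueError("mirroring needs at least two copies")
    return 1.0 / copies


def parity_efficiency(data_symbols: int, parity_symbols: int) -> float:
    """Usable fraction when each stripe holds data symbols plus parity symbols."""
    if data_symbols < 1 or parity_symbols < 1:
        raise ValueError("a stripe needs at least one data and one parity symbol")
    return data_symbols / (data_symbols + parity_symbols)


def usable_capacity_tb(raw_tb: float, efficiency: float) -> float:
    """Usable capacity for a pool of `raw_tb` terabytes at a given efficiency."""
    return raw_tb * efficiency


if __name__ == "__main__":
    raw_tb = 100.0  # hypothetical raw pool capacity
    layouts = {
        "two-way mirror (survives 1 failure)": mirror_efficiency(2),
        "three-way mirror (survives 2 failures)": mirror_efficiency(3),
        "4+2 parity stripe (survives 2 failures)": parity_efficiency(4, 2),
    }
    for name, efficiency in layouts.items():
        usable = usable_capacity_tb(raw_tb, efficiency)
        print(f"{name}: {efficiency:.1%} efficient, {usable:.1f} TB usable")
```

In this sketch, mirroring trades capacity for simplicity (each extra copy costs a full replica), while parity stores more usable data for the same failure tolerance at the cost of additional computation on writes.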

Azure Stack HCI, version 22H2: This version introduces significant features, such as improvements to Hyper-V live migration that enhance its speed and reliability. It also facilitates the transition from a single-server cluster to either a two-node or a three-node configuration, enabling greater flexibility in adapting to evolving infrastructure needs. For more information about Azure Stack HCI, version 22H2, see Azure Stack HCI solution overview.

Dell Integrated System for Microsoft Azure Stack HCI is an ideal solution for organizations that are refreshing and modernizing their aging virtualization environments to support high-value, highly performant workloads. This all-in-one validated hyperconverged infrastructure (HCI) system includes full-stack life cycle management, native integration into Microsoft Azure Stack HCI, flexible consumption models, and solution-level enterprise support and services expertise.

Dell Technologies has developed OpenManage Integration with Microsoft Windows Admin Center (OMIMSWAC). The integration with WAC adds another level of management, visibility, and control to the resources in the data center. The extension is designed to communicate with the cluster nodes in-band and completely agent-free using the OS-to-iDRAC passthrough and Redfish technology. Its first release in 2019 featured the hardware and firmware inventory, real-time health monitoring, iDRAC integrated management, and troubleshooting tools. Since then, based on customer feedback, Dell Technologies has improved the feature set by adding capabilities such as Full Stack Cluster-Aware Updating, CPU core management, Cluster node expansion, and much more.

Dell Integrated System for Microsoft Azure Stack HCI provides a wide range of intelligently designed AX nodes that are geared toward specific workloads and validated with deliberately selected hardware components and BIOS, firmware, and driver revisions. High-performance architectures use technologies like 25/100 GbE RDMA networking and all-NVMe storage configurations. Our engineering team also validates scalable and switchless storage networking configurations using Dell PowerSwitch network switches. We also provide prescriptive guidance on expanding clusters to accommodate the anticipated workload demand.

Dell Integrated System for Microsoft Azure Stack HCI combined with Azure Arc lets IT administrators leverage feature-rich management and governance services like Azure Monitor, Azure Security Center, and Azure Policy.

SQL Server has long been a leader in performance, availability, and security. SQL Server 2022 is also the most cloud-connected release of the database platform to date. One major feature in SQL Server 2022 is the ability to read from S3-compatible object storage. DBAs can also transfer data to and from S3-compatible object storage. As organizations seek to gain a competitive edge with their smart data estate, the ability to access all types of data is crucial. SQL Server 2022 and Dell ObjectScale are complementary technologies for querying a data lake using the T-SQL surface area. This combination of products and tools creates a modern opportunity to store and manage various types of data on-premises and at public cloud scale.

This guide evaluates potential analytics use cases for T-SQL functions that are new or enhanced in SQL Server 2022, such as GREATEST, LEAST, DATE_BUCKET, GENERATE_SERIES, STRING_SPLIT, FIRST_VALUE, and LAST_VALUE.
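To give a feel for what some of these functions compute, the sketch below approximates the behavior of GREATEST, LEAST, GENERATE_SERIES, and DATE_BUCKET in Python. It is an illustration only, not T-SQL and not part of the guide's test setup. The Python function names and the sample timestamps are assumptions, and `date_bucket` handles only fixed-width units (such as minutes, hours, days, or weeks), not months or years.

```python
from datetime import datetime, timedelta
from typing import List, Optional

# Assumed default origin for bucketing, mirroring the documented T-SQL default.
DEFAULT_ORIGIN = datetime(1900, 1, 1)


def greatest(*values):
    """Largest non-null argument, or None when every argument is None."""
    present = [v for v in values if v is not None]
    return max(present) if present else None


def least(*values):
    """Smallest non-null argument, or None when every argument is None."""
    present = [v for v in values if v is not None]
    return min(present) if present else None


def generate_series(start: int, stop: int, step: Optional[int] = None) -> List[int]:
    """Inclusive integer series from start to stop."""
    if step is None:
        step = 1 if start <= stop else -1
    if step == 0:
        raise ValueError("step must not be zero")
    values = []
    current = start
    while (step > 0 and current <= stop) or (step < 0 and current >= stop):
        values.append(current)
        current += step
    return values


def date_bucket(width: timedelta, ts: datetime,
                origin: datetime = DEFAULT_ORIGIN) -> datetime:
    """Start of the fixed-width bucket, counted from origin, that contains ts."""
    if width <= timedelta(0):
        raise ValueError("bucket width must be positive")
    whole_buckets = (ts - origin) // width  # floor division yields an int
    return origin + whole_buckets * width


if __name__ == "__main__":
    print(greatest(3, None, 7), least(3, None, 7))
    print(generate_series(1, 10, 3))
    print(date_bucket(timedelta(minutes=15), datetime(2024, 1, 1, 10, 37)))
```

A typical analytics pattern combines these ideas: generate a series of time slots, assign each reading to a bucket, and then aggregate per bucket.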

Data virtualization

Data virtualization is a data management methodology that enables applications to access and manipulate data without requiring technical knowledge of the source data's physical location or format.

It involves abstracting multiple sources behind a single data access layer, which enables organizations to integrate different types of data virtually. This integration is critical for data mining, analytics, and predictive analytics tools that use machine learning (ML) and artificial intelligence (AI).
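The idea of a single data access layer over multiple sources can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how SQL Server or ObjectScale implements data virtualization: the `DataAccessLayer` class, the source names, and the sample data are all hypothetical.

```python
import csv
import io
import json
from typing import Callable, Dict, List, Optional

Row = Dict[str, object]
Reader = Callable[[], List[Row]]


def csv_source(text: str) -> Reader:
    """Wrap CSV text as a source; every value is returned as a string."""
    def read() -> List[Row]:
        return [dict(row) for row in csv.DictReader(io.StringIO(text))]
    return read


def json_lines_source(text: str) -> Reader:
    """Wrap newline-delimited JSON text as a source."""
    def read() -> List[Row]:
        return [json.loads(line) for line in text.splitlines() if line.strip()]
    return read


class DataAccessLayer:
    """Hypothetical access layer: callers name a dataset, not a location or format."""

    def __init__(self) -> None:
        self._sources: Dict[str, Reader] = {}

    def register(self, name: str, reader: Reader) -> None:
        self._sources[name] = reader

    def select(self, name: str,
               where: Optional[Callable[[Row], bool]] = None) -> List[Row]:
        rows = self._sources[name]()
        return [row for row in rows if where is None or where(row)]


if __name__ == "__main__":
    layer = DataAccessLayer()
    layer.register("orders", csv_source("id,region\n1,EU\n2,US\n"))
    layer.register("events", json_lines_source('{"id": 1, "kind": "click"}\n'))
    print(layer.select("orders", where=lambda row: row["region"] == "EU"))
    print(layer.select("events"))
```

The caller uses the same `select` call for both datasets, even though one is stored as CSV and the other as JSON.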

Dell ObjectScale is a software-defined, containerized architecture that provides enterprise-class, high-performance object storage in a Kubernetes-native setting. ObjectScale enables organizations to place data closer to the applications that use it, reducing latency and improving user experience. It supports storing, manipulating, and analyzing unstructured data on a large scale.

Dell ObjectScale is built on the Kubernetes platform to deliver a simplified product where OS and hardware-level layers are handled by Kubernetes and ObjectScale handles storage and storage management.

ObjectScale is next-generation object storage software that is lighter, faster, and deployable on existing infrastructure. It can be deployed on the Red Hat OpenShift Container Platform or SUSE Linux Enterprise Server (SLES) framework. ObjectScale allows organizations to provide scalable cloud services with the availability and control of a private cloud infrastructure.

Dell ObjectScale key characteristics include:

- Data protection

- Geo-replication

- Scalability

- Scale-out, software-defined architecture that follows the microservices principle of cloud applications

- Superior performance irrespective of the size of an object

- Kubernetes-native and customer-deployable

- Rich S3 compatibility

- Self-service APIs that help in the quick spin-up of object storage containers

Additional information regarding ObjectScale is available on the Dell ObjectScale webpage.

A traditional data lake is an efficient and cost-effective solution that stores data in its raw form, typically unstructured or semi-structured. A data warehouse is a more advanced repository that provides structured data for reporting and analysis, usually filtered and optimized for better data quality and querying.

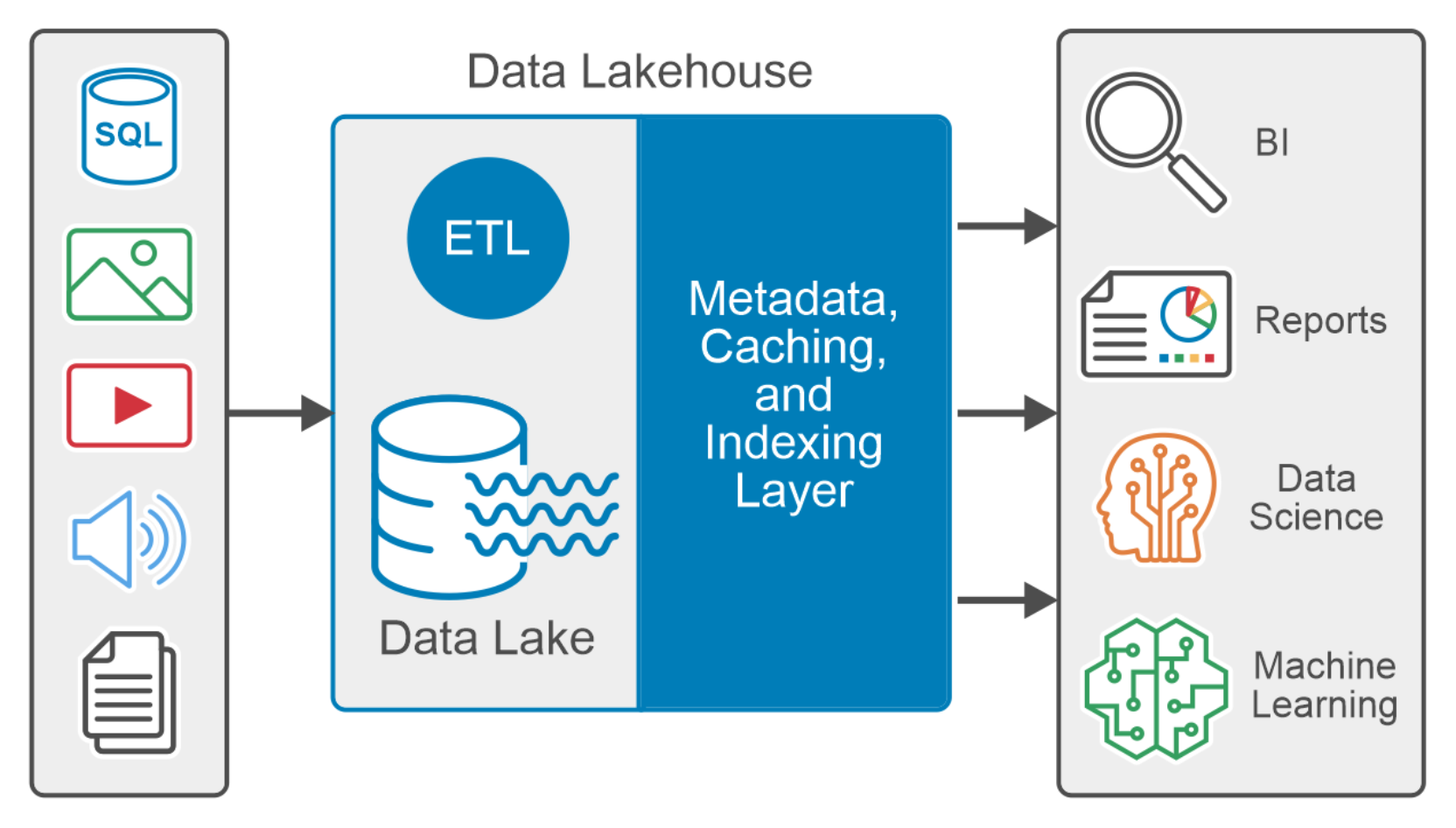

A Data Lakehouse is a modern data platform that combines the flexibility, low cost, and scalability of data lakes with the ACID transactions, data management, and data querying capabilities of data warehouses to provide a centralized repository for all data.

It accommodates diverse datasets, including structured, semi-structured, and unstructured data, and serves the requirements of both business intelligence and data science use cases. It typically supports programming languages like Python, R, and SQL.

Figure 1. Data Lakehouse logical diagram

A metadata layer, such as the open-source Delta Lake, is the foundation of the Data Lakehouse architecture. The metadata layer is a comprehensive catalog that maintains metadata for every object in the data lake storage, which helps to organize and provide information about the data in the system. The metadata layer enables other Data Lakehouse features, such as support for ACID transactions, streaming, caching of hot data, time travel, schema enforcement and evolution, and data validation.

Apache Spark

Apache Spark is an open-source distributed data-processing engine used for big data. It can manage both batch and real-time analytics and data processing tasks. It is designed to provide the computational speed, scalability, and programmability necessary for big data applications.

One of the main features of Spark is in-memory computing, which increases the processing speed of applications. Spark is designed to support a range of workloads such as batch processing, interactive queries, and streaming. By supporting all these workloads in a single system, it also reduces the burden of managing multiple tools.

More information about Apache Spark is available on the Apache Spark webpage.

Delta Lake

Delta Lake is an open-source storage framework that enhances reliability, security, and performance in the data lake. A Linux Foundation project since 2019, Delta Lake is an independent project controlled by a development community rather than any single technology vendor. Delta Lake sits on top of your existing data lake and provides support for ACID transactions, scalable metadata handling, and integrated streaming and batch data processing.

It enables a Lakehouse architecture with compute engines such as Spark, PrestoDB, Flink, Trino, and Hive, and it provides APIs for Scala, Java, Rust, and Python.

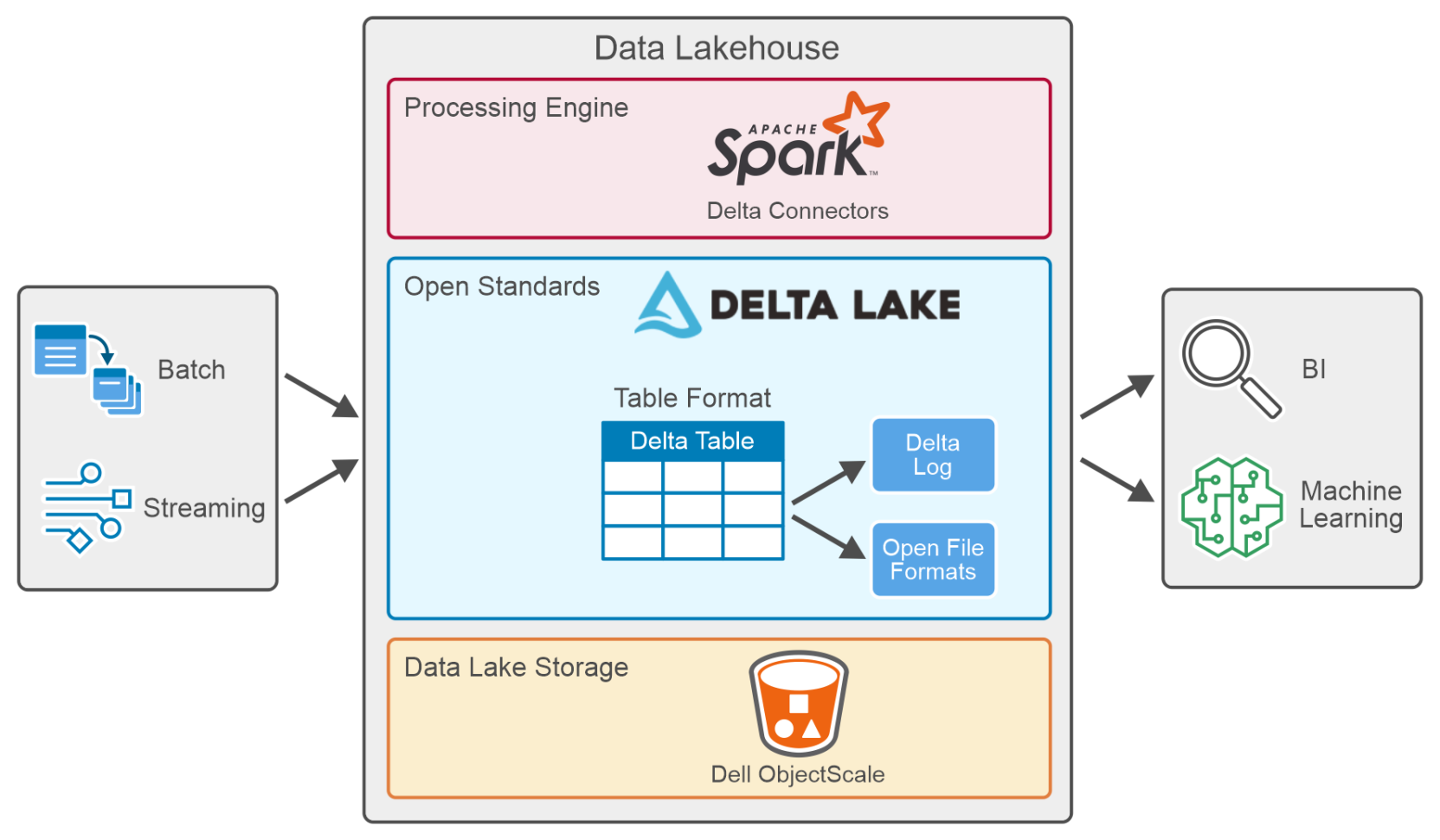

A table in Delta Lake (Delta table) is stored in open formats: Parquet files for the data and a file-based transaction log in JSON format for ACID transactions and scalable metadata handling.

Figure 2. Data Lakehouse data processing

A Delta connector is a library that enables external applications to read from and write to Delta tables. Delta connectors are available for numerous popular big data frameworks, including Apache Spark, Apache Hive, and Apache Flink.
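To make the Delta table layout more concrete, the following minimal sketch replays a simplified transaction log to work out which data files belong to the table at a given version. It is a heavily reduced illustration, not the Delta Lake implementation: the commits and file names are hypothetical, only `add` and `remove` actions are modeled, and a real log also carries other action types and checkpoints.

```python
import json
from typing import List, Optional, Set

# Hypothetical commits, one string per table version. Each line is one JSON action.
COMMITS: List[str] = [
    # Version 0: initial load writes two data files.
    '{"add": {"path": "part-0000.parquet"}}\n{"add": {"path": "part-0001.parquet"}}',
    # Version 1: an append adds a third file.
    '{"add": {"path": "part-0002.parquet"}}',
    # Version 2: an update rewrites part-0000 into a new file.
    '{"remove": {"path": "part-0000.parquet"}}\n{"add": {"path": "part-0003.parquet"}}',
]


def live_files(commits: List[str], as_of_version: Optional[int] = None) -> Set[str]:
    """Replay commits in order and return the data files visible at a version."""
    if as_of_version is None:
        as_of_version = len(commits) - 1
    if not 0 <= as_of_version < len(commits):
        raise ValueError(f"version {as_of_version} does not exist")
    files: Set[str] = set()
    for commit in commits[: as_of_version + 1]:
        for line in commit.splitlines():
            action = json.loads(line)
            if "add" in action:
                files.add(action["add"]["path"])
            elif "remove" in action:
                files.discard(action["remove"]["path"])
    return files


if __name__ == "__main__":
    print("latest:", sorted(live_files(COMMITS)))
    print("as of version 0:", sorted(live_files(COMMITS, as_of_version=0)))
```

Because the data files themselves are never modified in place, reading an older version only requires stopping the replay earlier, which is the basis for the time travel feature described in this section.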

Key features defining Delta Lake:

- ACID transactions: ACID properties provide data reliability and integrity by ensuring that a failed or partially completed transaction does not leave data in an inconsistent state. To implement ACID transactions, Delta Lake records every transaction made to a Delta table in a transaction log.

- Scalable metadata: Handles terabytes or even petabytes of table data without any difficulty. Metadata is stored like any other data handled by Delta Lake.

- Time travel (data versioning): Delta Lake lets users access previous versions of data for querying, auditing, and rollback. This enables data exploration and analysis as of different points in time, making it easier to identify trends, track changes, and perform historical analysis.

- Unified batch and stream processing: Every table in a Delta Lake is a batch and streaming sink. Furthermore, with efficient metadata handling, ease of scale, and ACID transactions, near-real-time analytics becomes possible without using a more complicated two-tiered data architecture.

- Schema enforcement: Automatically checks incoming data against the table schema to prevent the insertion of bad records during data ingestion.

- Schema evolution: Delta Lake supports schema evolution, allowing users to evolve their data’s schema over time.

- Audit history: Delta Lake records all changes in the transaction log, which provides a historical audit trail.

- DML operations: Delta Lake supports DML operations such as updates, deletes, and merges, which play a crucial role in complex data operations.

- Open source: Community-driven, open standards, open protocol, open file format, and open conversations.
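Schema enforcement and schema evolution from the list above can be sketched together. This is a simplified illustration and not Delta Lake's own API: the `validate` and `evolve` functions, the `SchemaMismatch` exception, and the sample columns are hypothetical, and real Delta tables apply richer type and nullability rules.

```python
from typing import Dict

Schema = Dict[str, type]
Record = Dict[str, object]


class SchemaMismatch(ValueError):
    """Raised when a record does not fit the table schema."""


def validate(schema: Schema, record: Record) -> Record:
    """Enforcement: reject unknown columns and wrong types; fill missing columns with None."""
    unknown = sorted(set(record) - set(schema))
    if unknown:
        raise SchemaMismatch(f"unknown columns: {unknown}")
    for column, expected in schema.items():
        value = record.get(column)
        if value is not None and not isinstance(value, expected):
            raise SchemaMismatch(
                f"column {column!r} expects {expected.__name__}, "
                f"got {type(value).__name__}"
            )
    return {column: record.get(column) for column in schema}


def evolve(schema: Schema, record: Record) -> Schema:
    """Evolution: return a new schema that also includes the record's new columns."""
    evolved = dict(schema)
    for column, value in record.items():
        if column not in evolved and value is not None:
            evolved[column] = type(value)
    return evolved


if __name__ == "__main__":
    schema: Schema = {"id": int, "amount": float}
    print(validate(schema, {"id": 1, "amount": 9.5}))
    new_record = {"id": 2, "amount": 3.0, "region": "EU"}
    schema = evolve(schema, new_record)  # explicit opt-in to the wider schema
    print(validate(schema, new_record))
```

In this sketch, enforcement is the default and evolution is an explicit step, so a record with an unexpected column is rejected unless the schema is deliberately widened first.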

Additional information regarding Delta Lake is available on the Delta Lake webpage (delta.io).